🚀 Evaluating Machine Learning Model Performance: The Ultimate 2026 Guide

You’ve trained a model that scores 99% accuracy on your test set, and you’re ready to deploy it to production. But what if that “perfect” score is a mirage? At ChatBench.org™, we’ve seen countless projects crash spectacularly because teams fell for the siren song of misleading metrics, ignoring the subtle traps of data leakage and class imbalance. In fact, studies suggest that over 80% of machine learning projects fail to reach production, often due to flawed evaluation strategies rather than bad algorithms.

This isn’t just about math; it’s about survival in the real world. From the deceptive simplicity of accuracy to the nuanced power of SHAP values and adversarial robustness, we’re about to unpack the 15-step checklist that separates the amateurs from the pros. We’ll reveal why your “best” model might be a fraud, how to spot the silent killer of overfitting, and exactly which metrics to use when a false negative costs lives. Ready to stop guessing and start knowing? Let’s dive into the definitive guide to Evaluating Machine Learning Model Performance.

Key Takeaways

- Accuracy is a Trap: Relying solely on accuracy can hide catastrophic failures in imbalanced datasets; always prioritize Precision, Recall, and F1-Score based on your specific business costs.

- Validation is Non-Negotiable: A single train-test split is insufficient; master K-Fold Cross-Validation and strictly guard against data leakage to ensure your model generalizes to unseen data.

- Interpretability Builds Trust: In high-stakes environments, understanding why a model makes a decision via SHAP or LIME is just as critical as the prediction itself.

- Robustness Matters: A model isn’t truly ready until it withstands adversarial attacks and concept drift in the dynamic real world.

Table of Contents

- ⚡️ Quick Tips and Facts

- 📜 From Academic Theory to Real-World Chaos: A Brief History of Model Evaluation

- 🎯 Defining Success: Choosing the Right Metrics for Your Machine Learning Model

- 📊 The Confusion Matrix: Decoding True Positives, False Negatives, and Everything In Between

- 📈 Beyond Accuracy: Precision, Recall, F1-Score, and When to Use Each

- 📉 Regression Rumble: MAE, RMSE, and R-Squared Explained Without the Headache

- 🧪 The Art of Validation: Cross-Validation, K-Fold, and Avoiding Data Leakage

- ⚖️ The Bias-Variance Tradeoff: Finding the Sweet Spot for Generalization

- 🚀 Hyperparameter Tuning: Grid Search, Random Search, and Bayesian Optimization Showdown

- 📉 Learning Curves and Validation Curves: Diagnosing Underfitting and Overfitting

- 🧠 Model Interpretability: SHAP, LIME, and Understanding the “Black Box”

- ⚠️ Common Pitfalls: Data Snooping, Selection Bias, and Metric Manipulation

- 🛡️ Security and Robustness: Adversarial Attacks and Model Verification

- 📋 Comprehensive Checklist: 15 Steps to Rigorous Model Performance Evaluation

- 💡 Real-World Case Studies: When Great Metrics Met Terrible Reality

- 🛠️ Tools of the Trade: Scikit-Learn, TensorFlow, PyTorch, and MLflow

- 🏁 Conclusion

- 🔗 Recommended Links

- ❓ FAQ

- 📚 Reference Links

⚡️ Quick Tips and Facts

Welcome, fellow data adventurers, to the thrilling, sometimes perplexing, world of machine learning model performance evaluation! Here at ChatBench.org™, we’ve seen models soar to success and crash spectacularly, often because the right evaluation metrics weren’t chosen, or worse, weren’t understood. So, before we dive deep, let’s arm you with some rapid-fire wisdom to kick things off! 🚀

- Accuracy isn’t always king! While intuitive, a model with 95% accuracy on an imbalanced dataset might be terrible. Imagine a fraud detection model that’s 99.9% accurate – but only because fraud is rare, and it just predicts “no fraud” every time. Yikes!

- Context is EVERYTHING. The “best” metric for your model depends entirely on your business objective. Is avoiding false positives paramount (e.g., recommending a risky medical procedure)? Or is catching every possible positive crucial, even if it means some false alarms (e.g., detecting a rare disease)?

- Don’t trust your training data. Seriously, it’s like asking a student to grade their own exam after they’ve seen the answers. Always evaluate on unseen data to gauge true generalization.

- Cross-validation is your friend. It helps you get a more robust estimate of your model’s performance and reduces the chance of overfitting to a single train-test split.

- Bias-Variance Tradeoff is real. Your model is constantly battling between being too simple (high bias, underfitting) and too complex (high variance, overfitting). Finding the sweet spot is an art and a science!

- Interpretability matters. Understanding why your model makes a prediction can be as important as the prediction itself, especially in critical applications.


📜 From Academic Theory to Real-World Chaos: A Brief History of Model Evaluation

Remember those early days of machine learning? Simpler times, simpler models, and often, simpler evaluation. Back then, a basic accuracy score on a holdout set might have been enough to get by. But as our models grew in complexity, tackling everything from predicting customer churn to diagnosing rare diseases, so too did the sophistication required for their assessment.

The journey of model evaluation has been a fascinating evolution, mirroring the growth of the entire machine learning lifecycle. Initially, the focus was primarily on statistical rigor and mathematical correctness within academic settings. Researchers meticulously crafted algorithms and validated them on carefully curated datasets. However, as machine learning moved from the ivory tower into the bustling, messy world of industry, the challenges multiplied.

Suddenly, we weren’t just concerned with whether a model was “correct” in a theoretical sense, but whether it was useful, fair, robust, and even secure in a production environment. This shift demanded a broader toolkit for evaluation. We at ChatBench.org™ have witnessed firsthand the transition from simple metrics to a holistic approach encompassing everything from confusion matrices to adversarial attack resistance. It’s no longer just about the numbers; it’s about the impact those numbers have on real people and real businesses. This expanded view is crucial for turning AI insights into a competitive edge, as we often discuss in our AI Business Applications section.

🎯 Defining Success: Choosing the Right Metrics for Your Machine Learning Model

Here’s the million-dollar question: What does “success” even mean for your machine learning model? 🤔 It’s not a rhetorical flourish; it’s the bedrock upon which all effective evaluation is built. Without a clear definition of success tied directly to your business or research objective, you’re essentially throwing darts in the dark.

Our team at ChatBench.org™ constantly emphasizes that model evaluation isn’t a one-size-fits-all endeavor. The metrics you choose are a direct reflection of what you prioritize. For instance, in a medical diagnostic tool, missing a positive case (a false negative) could have dire consequences. In contrast, a spam filter might tolerate a few junk emails slipping into the inbox (false negatives) if it means legitimate emails almost never land in spam (false positives).

As the experts at C3 AI succinctly put it, “Quantifying model performance is critical for managers to inform model selection, tuning, business process architecture, and ongoing maintenance.” This isn’t just about data scientists; it’s about aligning technical performance with strategic goals.

Think of it like this: are you building a Formula 1 race car (where speed and precision are everything), or a rugged off-road vehicle (where reliability and robustness in diverse conditions are key)? Both are “successful” in their own right, but their performance is measured very differently.

This is precisely where understanding the role of AI benchmarks becomes critical. Benchmarks provide standardized ways to compare models, but even then, the choice of benchmark and its associated metrics must align with your specific problem.

We’ll spend the next few sections dissecting the most common metrics for both classification and regression tasks. But always remember this guiding principle: your metric choice defines your model’s purpose.

📊 The Confusion Matrix: Decoding True Positives, False Negatives, and Everything In Between

Alright, let’s talk about the OG of classification evaluation: the Confusion Matrix. Don’t let the name scare you; it’s actually a fantastic tool for bringing clarity to your model’s predictions. It’s a table that lays out all the possible outcomes of your classification model, allowing you to see exactly where your model is succeeding and where it’s, well, getting a bit confused!

Imagine you’re building a model to predict whether a customer will unsubscribe from a service (a binary classification problem). The confusion matrix for this scenario would look something like this:

| | Predicted Positive (Unsubscribe) | Predicted Negative (Stay) |
|---|---|---|
| Actual Positive (Unsubscribe) | True Positive (TP) | False Negative (FN) |
| Actual Negative (Stay) | False Positive (FP) | True Negative (TN) |

Let’s break down these four crucial terms:

- ✅ True Positives (TP): These are the cases where your model correctly predicted the positive class. In our example, the model correctly identified customers who actually unsubscribed. This is a win!

- ✅ True Negatives (TN): Here, your model correctly predicted the negative class. The model correctly identified customers who actually stayed. Another win!

- ❌ False Positives (FP): Uh oh, these are the “Type I errors.” Your model predicted the positive class, but the actual outcome was negative. The model predicted a customer would unsubscribe, but they actually stayed. This could lead to unnecessary retention efforts.

- ❌ False Negatives (FN): These are the “Type II errors.” Your model predicted the negative class, but the actual outcome was positive. The model predicted a customer would stay, but they actually unsubscribed. This is a missed opportunity for intervention.

As C3 AI rightly points out, “These four questions characterize the fundamental performance metrics of true positives, true negatives, false positives, and false negatives.” Understanding these individual components is absolutely vital because they form the building blocks for almost every other classification metric we’ll discuss. Without a solid grasp of the confusion matrix, you’re essentially flying blind!
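To make the four cells concrete, here is a minimal sketch that tallies them by hand for the unsubscribe example. The helper name `confusion_counts` and the label lists are made up for illustration; in practice a library routine such as scikit-learn’s `confusion_matrix` would likely do this job.

```python
from collections import Counter


def confusion_counts(y_true, y_pred, positive=1):
    """Tally (TP, FN, FP, TN) for a binary problem.

    Hypothetical helper written for this illustration only.
    """
    counts = Counter()
    for actual, predicted in zip(y_true, y_pred):
        if actual == positive:
            # Actual positive: either caught (TP) or missed (FN).
            counts["tp" if predicted == positive else "fn"] += 1
        else:
            # Actual negative: either a false alarm (FP) or correctly ignored (TN).
            counts["fp" if predicted == positive else "tn"] += 1
    return counts["tp"], counts["fn"], counts["fp"], counts["tn"]


# Assumed toy labels: 1 = customer unsubscribed, 0 = customer stayed.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]

tp, fn, fp, tn = confusion_counts(y_true, y_pred)
print(f"TP={tp} FN={fn} FP={fp} TN={tn}")  # TP=2 FN=1 FP=1 TN=4
```

Reading the result against the table: two churners were caught, one was missed, one loyal customer was flagged by mistake, and four were correctly left alone.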

📈 Beyond Accuracy: Precision, Recall, F1-Score, and When to Use Each

Now that we’ve mastered the confusion matrix, let’s unlock the power of the metrics derived from it. While accuracy is often the first metric people reach for, it can be incredibly misleading, especially with imbalanced datasets. Imagine a dataset where only 1% of transactions are fraudulent. A model that simply predicts “no fraud” every single time would achieve 99% accuracy! Sounds great, right? But it’s utterly useless for its intended purpose.

This is where Precision, Recall, and the F1-Score step in to provide a more nuanced view of your model’s performance.

1. Accuracy: The Deceptive Simplicity

Accuracy is simply the ratio of correctly predicted observations to the total observations.

Formula: $Accuracy = \frac{TP + TN}{TP + TN + FP + FN}$

When to use it: When your classes are roughly balanced, and the cost of false positives and false negatives is similar.

Drawbacks: Highly misleading for imbalanced datasets.
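The imbalance trap is easy to reproduce with nothing more than arithmetic. The sketch below assumes a made-up batch of 1,000 transactions with 10 frauds and a “model” that always answers “no fraud”; the function names are ours, not from any library.

```python
def accuracy(tp, tn, fp, fn):
    """Share of all predictions that were correct."""
    return (tp + tn) / (tp + tn + fp + fn)


def recall(tp, fn):
    """Share of actual positives that were caught (0.0 when there are none)."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# Assumed scenario: 1,000 transactions, 10 fraudulent,
# and every single one is predicted as "no fraud".
tp, fn = 0, 10    # all 10 frauds are missed
fp, tn = 0, 990   # all 990 legitimate transactions pass

acc = accuracy(tp, tn, fp, fn)
rec = recall(tp, fn)
print(f"accuracy = {acc:.2%}, recall = {rec:.2%}")  # accuracy = 99.00%, recall = 0.00%
```

The accuracy looks superb while the model catches exactly zero fraud, which is why the sections that follow look past accuracy.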

2. Precision: The Quality of Positive Predictions

Precision answers the question: “Of all the positive predictions my model made, how many were actually correct?” It’s a measure of your model’s exactness.

Definition: “The number of true positives divided by the total number of positive predictions (TP + FP).”

Formula: $Precision = \frac{TP}{TP + FP}$

When to use it: When the cost of a false positive is high.

Example:

- Spam detection: You want high precision. You’d rather let a few spam emails slip through (false negatives) than mark a legitimate email as spam (false positive).

- Recommender systems: If you recommend a product to a user, you want that recommendation to be highly relevant. A false positive (recommending something they don’t like) can annoy the user.

3. Recall (Sensitivity): The Ability to Find All Positives

Recall, also known as sensitivity or the True Positive Rate (TPR), answers: “Of all the actual positive cases, how many did my model correctly identify?” It’s a measure of your model’s completeness.

Definition: “The number of true positives divided by the total number of actual positive cases in the dataset (TP + FN).”

Formula: $Recall = \frac{TP}{TP + FN}$

When to use it: When the cost of a false negative is high.

Example:

- Medical diagnosis (e.g., cancer detection): You want high recall. It’s often better to have a few false positives (healthy patients flagged for further tests) than to miss an actual positive case (a patient with cancer goes undiagnosed).

- Fraud detection: Missing a fraudulent transaction (false negative) can be very costly for a bank.

4. The Precision-Recall Trade-off: A Balancing Act

Here’s the kicker: Precision and Recall often have an inverse relationship. Improving one often comes at the expense of the other. As C3 AI highlights, “While a perfect classifier may achieve 100 percent precision and 100 percent recall, real-world models never do.”

- To increase recall, you might make your model more “sensitive,” leading it to flag more cases as positive, which typically adds false positives and thus lowers precision.

- To increase precision, you might make your model more “selective,” only flagging cases it’s very confident about, which could lead to missing some actual positives and thus lowering recall.

This trade-off is often visualized with a Precision-Recall curve, which helps you understand the balance at different classification thresholds.

5. F1-Score: The Harmonic Mean for Balance

The F1-Score is the harmonic mean of Precision and Recall. It provides a single metric that balances both. A high F1-Score indicates that your model has both good precision and good recall.

Definition: “The harmonic mean between precision and recall.”

Formula: $F1 = 2 \times \frac{Precision \times Recall}{Precision + Recall}$

When to use it: When you need to balance precision and recall, especially in cases with uneven class distribution. It’s particularly useful when you want a single metric to compare models or during hyperparameter tuning.
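Here is a small sketch of the three formulas above computed from raw confusion-matrix counts. The counts are invented, and the zero-denominator fallback of 0.0 is a convention we assume for the example rather than a universal rule.

```python
def precision(tp, fp):
    """TP / (TP + FP); returns 0.0 if the model made no positive predictions."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """TP / (TP + FN); returns 0.0 if there are no actual positives."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Assumed counts for illustration.
tp, fp, fn = 30, 10, 20

p = precision(tp, fp)   # 30 / 40
r = recall(tp, fn)      # 30 / 50
f1 = f1_score(p, r)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")

# Why a harmonic mean? A lopsided model is pulled toward its weaker side.
lopsided_f1 = f1_score(1.0, 0.1)
arithmetic_mean = (1.0 + 0.1) / 2
print(f"lopsided f1={lopsided_f1:.3f} vs arithmetic mean={arithmetic_mean:.3f}")
```

With perfect precision but 10% recall, the arithmetic mean would report a flattering 0.55, while F1 stays near 0.18. That is the “balance” the harmonic mean enforces.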

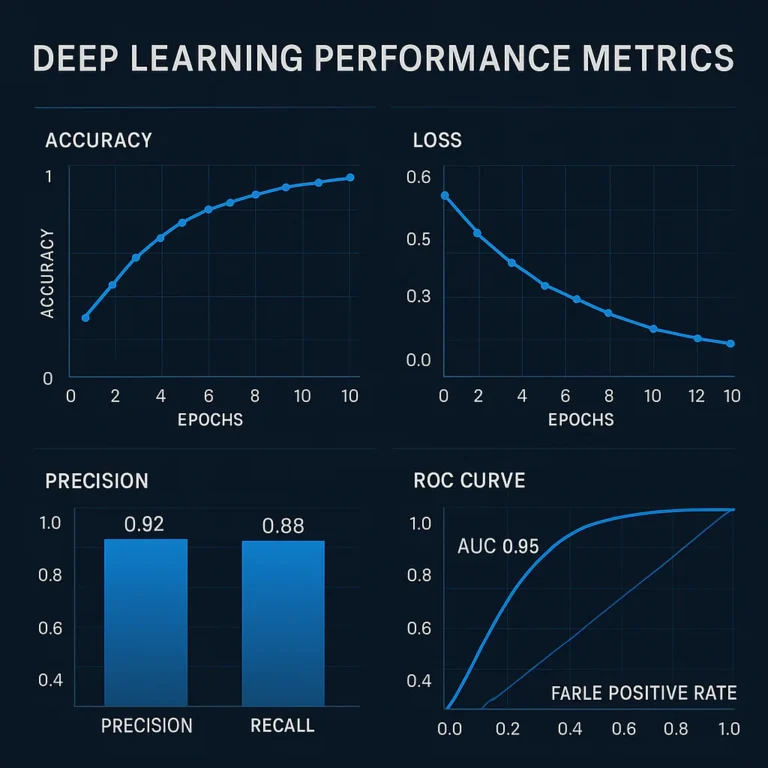

ROC Curve and AUC: Visualizing Classifier Performance

Beyond these core metrics, the Receiver Operating Characteristic (ROC) curve and its associated Area Under the Curve (AUC) are indispensable tools, especially for understanding how well your model distinguishes between classes across various thresholds.

- ROC Curve: Plots the True Positive Rate (Recall) against the False Positive Rate (FPR) at various threshold settings. The FPR is calculated as $\frac{FP}{FP + TN}$.

- AUC: Measures the entire area underneath the ROC curve. “The greater the AUC, the better the classifier’s performance.” An AUC of 1.0 represents a perfect classifier, while an AUC of 0.5 indicates a model no better than random guessing.

ROC and AUC do not shift when the class ratio changes, because TPR and FPR are each computed within a single actual class. That makes them convenient for comparing models across different datasets or experiments. On heavily imbalanced data, though, the same property can flatter a model, so read them alongside the Precision-Recall curve. The first YouTube video makes a related point: “For classification tasks, common metrics include Accuracy, Precision, Recall, F1 Score, and AUC (Area Under the Curve), each offering different insights into the model’s performance.” Understanding these different insights is key to making informed decisions about your model.
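One way to build intuition for AUC: it equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counting as half. The sketch below computes it that way by brute force. `roc_auc` is a hypothetical helper and the scores are toy values. For real workloads a library function such as scikit-learn’s `roc_auc_score` is the more sensible choice, since this version is quadratic in the number of examples.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Assumed toy data: two negatives, two positives.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]

auc = roc_auc(y_true, scores)
print(f"AUC = {auc:.2f}")  # AUC = 0.75
```

Three of the four positive-negative pairs are ordered correctly (only 0.35 versus 0.4 is not), hence 0.75.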

Setting Model Thresholds: The Art of Tuning

Finally, remember that for many classifiers, the model outputs a probability score. You then apply a threshold (e.g., if probability > 0.5, classify as positive) to make a binary decision. Tuning this threshold is a powerful way to adjust your model’s precision and recall.

- Higher Threshold: Leads to higher precision but lower recall.

- Lower Threshold: Leads to higher recall but lower precision.

There’s “no hard-and-fast rule” for setting the optimal threshold; it must be tuned based on your specific use case and business requirements. This tuning allows you to find the sweet spot that balances the costs of false positives and false negatives for your particular problem.
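The effect of moving the threshold can be seen on a handful of scores. This is a sketch under assumptions: the labels, the scores, and the helper `precision_recall_at` are all invented for illustration, and a prediction counts as positive when the score is strictly greater than the threshold, matching the “probability > 0.5” rule above.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when 'score > threshold' means 'predict positive'."""
    tp = fp = fn = 0
    for actual, score in zip(y_true, scores):
        predicted = 1 if score > threshold else 0
        if predicted == 1 and actual == 1:
            tp += 1
        elif predicted == 1 and actual == 0:
            fp += 1
        elif predicted == 0 and actual == 1:
            fn += 1
    prec = tp / (tp + fp) if (tp + fp) else 0.0
    rec = tp / (tp + fn) if (tp + fn) else 0.0
    return prec, rec


# Assumed toy data: model scores sorted from low to high, with true labels.
y_true = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]
scores = [0.05, 0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95]

results = {}
for threshold in (0.3, 0.5, 0.7):
    results[threshold] = precision_recall_at(y_true, scores, threshold)
    prec, rec = results[threshold]
    print(f"threshold={threshold:.1f}  precision={prec:.2f}  recall={rec:.2f}")
```

On this data, raising the threshold from 0.3 to 0.7 lifts precision from about 0.71 to 1.00 while recall falls from 1.00 to 0.60. Which end of that range is “right” depends on what a false positive and a false negative each cost you.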

📉 Regression Rumble: MAE, RMSE, and R-Squared Explained Without the Headache

Switching gears from classification, let’s dive into the world of regression models. Here, instead of predicting categories, we’re predicting continuous numerical values – think house prices, temperature forecasts, or sales figures. The evaluation metrics for regression are all about quantifying the difference between your model’s predictions and the actual values. We want to know how “close” our predictions are to reality.

1. Mean Absolute Error (MAE): The Straightforward Average

The Mean Absolute Error (MAE) is perhaps the most intuitive regression metric. It simply calculates the average of the absolute differences between your model’s predictions and the actual observed values.

Definition: “Average absolute difference between actual and predicted values.”

Formula: $MAE = \frac{1}{n} \sum_{i=1}^{n} |y_i - \hat{y}_i|$

- $y_i$ = actual value

- $\hat{y}_i$ = predicted value

- $n$ = number of data points

Benefits:

- Easy to understand: It’s the average magnitude of errors.

- Robust to outliers: Because it uses absolute values, it’s less sensitive to extreme errors compared to squared error metrics.

Drawbacks:

- Doesn’t penalize large errors as much as MSE/RMSE, which might be undesirable in some contexts.

2. Mean Squared Error (MSE): Penalizing Big Mistakes

The Mean Squared Error (MSE) takes the average of the squared differences between predictions and actual values. By squaring the errors, this metric gives disproportionately more weight to larger errors.

Definition: “Average squared difference; penalizes larger errors more heavily.”

Formula: $MSE = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$

Benefits:

- Strongly penalizes large errors: If big errors are particularly undesirable, MSE highlights them.

- Mathematically convenient for optimization (its derivative is continuous).

Drawbacks:

- Units are squared: The resulting value isn’t in the same units as your target variable, making it harder to interpret directly.

- Sensitive to outliers: A few very large errors can significantly inflate the MSE.

3. Root Mean Squared Error (RMSE): Back to Interpretable Units

The Root Mean Squared Error (RMSE) is simply the square root of the MSE. This brings the error metric back into the same units as your original target variable, making it much more interpretable than MSE.

Definition: “Square root of MSE; returns error to original units.”

Formula: $RMSE = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}$

Benefits:

- Interpretable units: Easy to understand the magnitude of errors in the context of your data.

- Still penalizes large errors more than MAE.

Drawbacks:

- Still sensitive to outliers, though less so than MSE.
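To see how differently MAE, MSE, and RMSE react to one bad prediction, here is a minimal sketch using only the standard library. The numbers are invented: first every prediction is off by 10, then a single prediction is off by 100.

```python
import math


def mae(y_true, y_pred):
    """Mean Absolute Error."""
    return sum(abs(a - p) for a, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true, y_pred):
    """Mean Squared Error."""
    return sum((a - p) ** 2 for a, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Squared Error, back in the units of the target."""
    return math.sqrt(mse(y_true, y_pred))


# Assumed toy targets and predictions.
y_true = [100, 150, 200, 250]
y_even = [110, 140, 210, 240]      # every error is exactly 10
y_outlier = [110, 140, 210, 150]   # last prediction is off by 100

for name, y_pred in (("even errors", y_even), ("one outlier", y_outlier)):
    print(
        f"{name}: MAE={mae(y_true, y_pred):.2f} "
        f"MSE={mse(y_true, y_pred):.2f} RMSE={rmse(y_true, y_pred):.2f}"
    )
```

With even errors, MAE and RMSE agree at 10. With the single outlier, MAE rises to 32.5 while RMSE jumps to roughly 50.7, so a widening gap between the two is a handy hint that a few large misses dominate your error.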

4. R-Squared (Coefficient of Determination): Explaining the Variance

R-squared, or the Coefficient of Determination, is a popular metric that tells you the proportion of the variance in the dependent variable that is predictable from the independent variables. In simpler terms, it indicates how well your model explains the variability of the target variable.

Formula: $R^2 = 1 - \frac{SS_{res}}{SS_{tot}}$

- $SS_{res}$ = Sum of Squared Residuals (sum of squared differences between actual and predicted values)

- $SS_{tot}$ = Total Sum of Squares (sum of squared differences between actual values and their mean)

Interpretation:

- An R-squared of 1 means your model perfectly predicts the variance in the target variable.

- An R-squared of 0 means your model explains none of the variance.

- A negative R-squared indicates that your model is worse than simply predicting the mean of the target variable.

Benefits:

- Provides a relative measure of fit, making it easy to compare models.

- Widely understood and used.

Drawbacks:

- Can be misleading: Adding more independent variables, even irrelevant ones, can increase R-squared.

- Doesn’t tell you if your model is biased.
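The three interpretation cases (1, 0, and negative) fall straight out of the formula. The sketch below is a hand-rolled illustration with made-up data; `r_squared` is our own helper, and raising an error when the target is constant is a choice we assume here, since $SS_{tot}$ would be zero.

```python
def r_squared(y_true, y_pred):
    """R^2 = 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((a - p) ** 2 for a, p in zip(y_true, y_pred))
    ss_tot = sum((a - mean_y) ** 2 for a in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when the target has no variance")
    return 1 - ss_res / ss_tot


# Assumed toy target with mean 3.
y_true = [1, 2, 3, 4, 5]

perfect = r_squared(y_true, [1, 2, 3, 4, 5])      # predictions match exactly
mean_only = r_squared(y_true, [3, 3, 3, 3, 3])    # always predict the mean
backwards = r_squared(y_true, [5, 4, 3, 2, 1])    # trend is inverted

print(perfect, mean_only, backwards)  # 1.0 0.0 -3.0
```

The inverted model scores -3.0 because its squared residuals (40) are four times the total sum of squares (10): it really is worse than guessing the mean every time.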

5. Mean Absolute Percentage Error (MAPE): For Relative Errors

The Mean Absolute Percentage Error (MAPE) expresses the prediction error as a percentage of the actual values. This can be very useful when you want to understand the relative size of your errors.

Definition: Prediction error expressed as a percentage of actual values.

Formula: $MAPE = \frac{1}{n} \sum_{i=1}^{n} \left| \frac{y_i - \hat{y}_i}{y_i} \right| \times 100\%$

Benefits:

- Easy to interpret as a percentage.

- Useful for comparing models across different scales.

Drawbacks:

- Sensitive to small actual values: If $y_i$ is zero or very close to zero, MAPE can become undefined or extremely large.

- Biased towards models that under-forecast.
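The near-zero problem is worth seeing in numbers. This sketch uses invented values, and the decision to raise an error on a zero actual is an assumption made for the example; other implementations substitute a tiny epsilon instead.

```python
def mape(y_true, y_pred):
    """Mean Absolute Percentage Error, in percent."""
    if any(a == 0 for a in y_true):
        raise ValueError("MAPE is undefined when an actual value is zero")
    total = sum(abs((a - p) / a) for a, p in zip(y_true, y_pred))
    return 100 * total / len(y_true)


# Assumed toy data: errors of 10%, 10%, and 0%.
typical = mape([100, 200, 400], [110, 180, 400])
print(f"typical MAPE = {typical:.2f}%")

# One tiny actual value: an absolute miss of only 0.99 becomes a huge percentage.
near_zero = mape([0.01], [1.0])
print(f"near-zero MAPE = {near_zero:.0f}%")
```

The first case lands around 6.67%. The second reports roughly 9,900% for a prediction that is off by less than one unit, which is why MAPE is a poor fit for targets that can sit at or near zero.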

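Here’s a quick comparison table to help you decide which regression metric to use:

| Metric | Interpretation | Sensitivity to Outliers | Units | When to Use |
| :--- | :--- | :--- | :--- | :--- |
| MAE | Average absolute difference between actual and predicted values | Lower | Same as target | You want an easily explained error and large misses need no extra penalty |
| MSE | Average squared difference | High | Target units squared | Large errors are especially undesirable, or you need an optimization-friendly loss |
| RMSE | Square root of MSE | High | Same as target | Large errors should weigh more, but you want the error in the target’s units |
| R-squared | Proportion of target variance the model explains | High (built on squared residuals) | Unitless | You want a relative measure of fit for comparing models |
| MAPE | Average error as a percentage of actual values | Breaks down when actual values are at or near zero | Percent | You need relative errors or comparisons across different scales |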

⚡️ Quick Tips and Facts

Welcome, fellow data adventurers, to the thrilling, sometimes perplexing, world of machine

learning model performance evaluation! Here at ChatBench.org™, we’ve seen models soar to success and crash spectacularly, often because the right evaluation metrics weren’t chosen, or worse, weren’t understood. So, before we

dive deep, let’s arm you with some rapid-fire wisdom to kick things off! 🚀

-

Accuracy isn’t always king! While intuitive, a model with 95% accuracy on an imbalanced

dataset might be terrible. Imagine a fraud detection model that’s 99.9% accurate – but only because fraud is rare, and it just predicts “no fraud” every time. Yikes! -

Context is

EVERYTHING. The “best” metric for your model depends entirely on your business objective. Is avoiding false positives paramount (e.g., recommending a risky medical procedure)? Or is catching every possible positive crucial, even if it means some false alarms

(e.g., detecting a rare disease)? -

Don’t trust your training data. Seriously, it’s like asking a student to grade their own exam after they’ve seen the answers. Always evaluate on **

unseen data** to gauge true generalization. -

Cross-validation is your friend. It helps you get a more robust estimate of your model’s performance and reduces the chance of overfitting to a single train-test split.

-

Bias-Variance Tradeoff is real. Your model is constantly battling between being too simple (high bias, underfitting) and too complex (high variance, overfitting). Finding the sweet spot is an art and a science!

-

Interpretability matters. Understanding why your model makes a prediction can be as important as the prediction itself, especially in critical applications.

📜 From Academic Theory to Real-World Chaos: A Brief History of Model Evaluation

Remember those early days of machine learning? Simpler times,

simpler models, and often, simpler evaluation. Back then, a basic accuracy score on a holdout set might have been enough to get by. But as our models grew in complexity, tackling everything from predicting customer churn to diagnosing rare

diseases, so too did the sophistication required for their assessment.

The journey of model evaluation has been a fascinating evolution, mirroring the growth of the entire machine learning lifecycle. Initially, the focus was primarily on statistical rigor and mathematical correctness within academic settings

. Researchers meticulously crafted algorithms and validated them on carefully curated datasets. However, as machine learning moved from the ivory tower into the bustling, messy world of industry, the challenges multiplied.

Suddenly, we weren’t just concerned with whether a

model was “correct” in a theoretical sense, but whether it was useful, fair, robust, and even secure in a production environment. This shift demanded a broader toolkit for evaluation. We at ChatBench.org

™ have witnessed firsthand the transition from simple metrics to a holistic approach encompassing everything from confusion matrices to adversarial attack resistance. It’s no longer just about the numbers; it’s about the impact those numbers have

on real people and real businesses. This expanded view is crucial for turning AI insights into a competitive edge, as we often discuss in our AI Business Applications section.

🎯 Defining Success: Choosing the Right Metrics for Your Machine Learning

Model

Here’s the million-dollar question: What does “success” even mean for your machine learning model? 🤔 It’s not a rhetorical flourish; it’s the bedrock upon which all effective evaluation is built. Without a clear

definition of success tied directly to your business or research objective, you’re essentially throwing darts in the dark.

Our team at ChatBench.org™ constantly emphasizes that model evaluation isn’t a one-size-fits-all

endeavor. The metrics you choose are a direct reflection of what you prioritize. For instance, in a medical diagnostic tool, missing a positive case (a false negative) could have dire consequences. In contrast, a spam filter might tolerate a few

legitimate emails landing in spam (false positives) if it means catching the vast majority of junk.

As the experts at C3 AI succinctly put it, “Quantifying model performance is critical for managers to inform model selection, tuning, business process architecture,

and ongoing maintenance.” This isn’t just about data scientists; it’s about aligning technical performance with strategic goals.

Think of it like this: are you building a Formula 1 race car (where speed and precision are everything), or a rugged off-road vehicle (where reliability and robustness in diverse conditions are key)? Both are “successful” in their own right, but their performance is measured very differently.

This is precisely where understanding

the role of AI benchmarks becomes critical

. Benchmarks provide standardized ways to compare models, but even then, the choice of benchmark and its associated metrics must align with your specific problem.

We’ll spend the next few sections dissecting the most common metrics for both

classification and regression tasks. But always remember this guiding principle: your metric choice defines your model’s purpose.

📊 The Confusion Matrix: Decoding True Positives, False Negatives, and Everything In Between

Alright, let’s talk about the OG of classification evaluation:

the Confusion Matrix. Don’t let the name scare you; it’s actually a fantastic tool for bringing clarity to your model’s predictions. It’s a table that lays out all the possible outcomes of your classification model,

allowing you to see exactly where your model is succeeding and where it’s, well, getting a bit confused!

Imagine you’re building a model to predict whether a customer will unsubscribe from a service (a binary classification problem). The

confusion matrix for this scenario would look something like this:

| Predicted Positive (Unsubscribe) | Predicted Negative (Stay) | |

|---|---|---|

| **Actual | ||

| Positive (Unsubscribe)** | True Positive (TP) | False Negative (FN) |

| Actual Negative (Stay) | False Positive (FP) | True Negative (TN) |

Let’s

break down these four crucial terms:

- ✅ True Positives (TP): These are the cases where your model correctly predicted the positive class. In our example, the model correctly identified customers who actually unsubscribed. This

is a win! - ✅ True Negatives (TN): Here, your model correctly predicted the negative class. The model correctly identified customers who actually stayed. Another win!

- ❌ False Positives (FP): Uh oh, these are the “Type I errors.” Your model predicted the positive class, but the actual outcome was negative. The model predicted a customer would unsubscribe, but they actually stayed. This could lead to unnecessary retention

efforts. - ❌ False Negatives (FN): These are the “Type II errors.” Your model predicted the negative class, but the actual outcome was positive. The model predicted a customer would stay, but they actually

unsubscribed. This is a missed opportunity for intervention.

As C3 AI rightly points out, “These four questions characterize the fundamental performance metrics of true positives, true negatives, false positives, and false negatives.” Understanding

these individual components is absolutely vital because they form the building blocks for almost every other classification metric we’ll discuss. Without a solid grasp of the confusion matrix, you’re essentially flying blind!

📈 Beyond Accuracy: Precision, Recall, F1-Score, and When to Use Each

Now that we’ve

mastered the confusion matrix, let’s unlock the power of the metrics derived from it. While accuracy is often the first metric people reach for, it can be incredibly misleading, especially with imbalanced datasets. Imagine a dataset where

only 1% of transactions are fraudulent. A model that simply predicts “no fraud” every single time would achieve 99% accuracy! Sounds great, right? But it’s utterly useless for its intended purpose.

This is

where Precision, Recall, and the F1-Score step in to provide a more nuanced view of your model’s performance.

1. Accuracy: The Deceptive Simplicity

Accuracy is simply

the ratio of correctly predicted observations to the total observations.

Formula: $Accuracy = \frac{TP + TN}{TP + TN + FP + FN}$

When to use it: When your classes are roughly balanced, and the cost

of false positives and false negatives is similar.

Drawbacks: Highly misleading for imbalanced datasets.

2. Precision: The Quality of Positive Predictions

Precision answers the question: “Of all the positive predictions my

model made, how many were actually correct?” It’s a measure of your model’s exactness.

Definition: “The number of true positives divided by the total number of positive predictions (TP + FP).”

Formula: $Precision = \frac{TP}{TP + FP}$

When to use it: When the cost of a false positive is high.

Example:

- Spam

detection: You want high precision. You’d rather let a few spam emails slip through (false negatives) than mark a legitimate email as spam (false positive). - Recommender systems: If you recommend a product to

a user, you want that recommendation to be highly relevant. A false positive (recommending something they don’t like) can annoy the user.

3. Recall (Sensitivity): The Ability to Find All Positives

Recall, also known as sensitivity or the True Positive Rate (TPR), answers: “Of all the actual positive cases, how many did my model correctly identify?” It’s a measure of your model’s completeness.

Definition

: “The number of true positives divided by the total number of actual positive cases in the dataset (TP + FN).”

Formula: $Recall = \frac{TP}{TP + FN}$

**

When to use it:** When the cost of a false negative is high.

Example:

- Medical diagnosis (e.g., cancer detection): You want high recall. It’s often better to have a few false positives (healthy patients flagged for further tests) than to miss an actual positive case (a patient with cancer goes undiagnosed).

- Fraud detection: Missing a fraudulent transaction (false negative) can be very costly for a bank.

4. The Precision-Recall Trade-off: A Balancing Act

Here’s the kicker: Precision and Recall often have an inverse relationship. Improving one often comes at the expense of the other. As C3 AI highlights, “While a perfect classifier may achieve 100 percent precision and 100 percent recall, real-world models never do.”

- To increase recall, you might make your model more “sensitive,” leading it to flag more cases as positive, which inevitably increases false positives and thus lowers precision.

- To increase precision, you might make your model more “selective,” only flagging cases it’s very confident about, which could lead to missing some actual positives and thus lowering recall.

This trade-off is often visualized with a Precision-Recall curve, which helps you understand the balance at different classification thresholds.

5. F1-Score: The Harmonic Mean for Balance

The F1-Score is the harmonic mean of Precision and Recall. It provides a single metric that balances both. A high F1-Score indicates that your model has both good precision and good recall.

Definition: “The harmonic mean between precision and recall.”

Formula: $F1 = 2 \times \frac{Precision \times Recall}{Precision + Recall}$

When to use it: When you need to balance precision and recall, especially in cases with uneven class distribution. It’s particularly useful when you want a single metric to compare models or during hyperparameter tuning.
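To make the four formulas above concrete, here is a minimal Python sketch that computes them from raw confusion-matrix counts. The counts are invented for illustration (a hypothetical dataset with 50 positives out of 1,000 samples), and `classification_metrics` is an illustrative helper, not a library function.

```python
def classification_metrics(tp, tn, fp, fn):
    """Return accuracy, precision, recall, and F1 from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    # Guard against division by zero when the model predicts no positives
    # or the data contains no positives.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical imbalanced case: 50 actual positives among 1,000 samples.
metrics = classification_metrics(tp=5, tn=945, fp=5, fn=45)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts, accuracy comes out at 0.95 while recall is only 0.10: the model misses 45 of the 50 positives, yet accuracy alone would never tell you.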

ROC Curve and AUC: Visualizing Classifier Performance

Beyond these core metrics, the Receiver Operating Characteristic (ROC) curve and its associated Area Under the Curve (AUC) are indispensable tools, especially for understanding how well your model distinguishes between classes across various thresholds.

- ROC Curve: Plots the True Positive Rate (Recall) against the False Positive Rate (FPR) at various threshold settings. The FPR is calculated as $\frac{FP}{FP + TN}$.

- AUC: Measures the entire area underneath the ROC curve. “The greater the AUC, the better the classifier’s performance.” An AUC of 1.0 represents a perfect classifier, while an AUC of 0.5 indicates a model no better than random guessing.

The beauty of ROC and AUC is that they are largely insensitive to the class ratio, which makes them convenient for comparing models across different datasets or experiments. Be careful, though: on heavily imbalanced data that same property can make a model look better than it really is, so pair ROC/AUC with the Precision-Recall curve in those cases. As the first YouTube video also emphasizes: “For classification tasks, common metrics include Accuracy, Precision, Recall, F1 Score, and AUC (Area Under the Curve), each offering different insights into the model’s performance.” Understanding these different insights is key to making informed decisions about your model.
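One handy way to read AUC: it equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below computes AUC directly from that definition in plain Python. The labels and scores are toy values, and `roc_auc` is an illustrative helper (a pairwise loop like this is fine for small data, but too slow for large datasets).

```python
def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    positives = [s for s, y in zip(scores, y_true) if y == 1]
    negatives = [s for s, y in zip(scores, y_true) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


# Toy labels and model scores, invented for illustration.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(y_true, scores)
print(f"AUC = {auc:.2f}")
```

Here three of the four positive/negative pairs are ranked correctly, so the AUC is 0.75.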

Setting Model Thresholds: The Art of Tuning

Finally, remember that for many classifiers, the model outputs a probability score. You then apply a threshold (e.g., if probability > 0.5, classify as positive) to make a binary decision. Tuning this threshold is a powerful way to adjust your model’s precision and recall.

- Higher Threshold: Leads to higher precision but lower recall.

- Lower Threshold: Leads to higher recall but lower precision.

There’s “no hard-and-fast rule” for setting the optimal threshold; it must be tuned based on your specific use case and business requirements. This tuning allows you to find the sweet spot that balances the costs of false positives and false negatives for your particular problem.
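To see the threshold effect in numbers, here is a small sketch that sweeps three thresholds over the same predicted probabilities. The labels and probabilities are made up for illustration, `precision_recall_at` is a hypothetical helper, and the sketch treats a probability at or above the threshold as a positive prediction.

```python
def precision_recall_at(y_true, probs, threshold):
    """Precision and recall when predicting positive for prob >= threshold."""
    preds = [1 if p >= threshold else 0 for p in probs]
    tp = sum(1 for y, yhat in zip(y_true, preds) if y == 1 and yhat == 1)
    fp = sum(1 for y, yhat in zip(y_true, preds) if y == 0 and yhat == 1)
    fn = sum(1 for y, yhat in zip(y_true, preds) if y == 1 and yhat == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Toy data, invented for illustration.
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
probs = [0.10, 0.30, 0.35, 0.40, 0.55, 0.70, 0.80, 0.90]

results = {t: precision_recall_at(y_true, probs, t) for t in (0.3, 0.5, 0.85)}
for threshold, (precision, recall) in results.items():
    print(f"threshold={threshold:.2f}  precision={precision:.2f}  recall={recall:.2f}")
```

On this toy data, raising the threshold from 0.3 to 0.85 lifts precision from about 0.57 to 1.00 while recall falls from 1.00 to 0.25. Real data is rarely this tidy, so plot the full curve before picking a cut-off.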

📉 Regression Rumble: MAE, RMSE, and R-Squared Explained Without the Headache

Switching gears from classification, let’s dive into the world of regression models. Here, instead of predicting categories, we’re predicting continuous numerical values – think house prices, temperature forecasts, or sales figures. The evaluation metrics for regression are all about quantifying the difference between your model’s predictions and the actual values. We want to know how “close” our predictions are to reality.

1. Mean Absolute Error (MAE): The Straightforward Average

The Mean Absolute Error (MAE) is perhaps the most intuitive regression metric. It simply calculates the average of the absolute differences between your model’s predictions and the actual observed values.

Definition: “Average absolute difference between actual and predicted values.”

Formula: $MAE = \frac{1}{n} \sum_{i=1}^{n} |y_i - \hat{y}_i|$

- $y_i$ = actual value

- $\hat{y}_i$ = predicted value

- $n$ = number of data points

Benefits:

- Easy to understand: It’s the average magnitude of errors.

- Robust to outliers: Because it uses absolute values, it’s less sensitive to extreme errors compared to squared error metrics.

Drawbacks:

- Doesn’t penalize large errors as much as MSE/RMSE, which might be undesirable in some contexts.

2. Mean Squared Error (MSE): Penalizing Big Mistakes

The Mean Squared Error (MSE) takes the average of the squared differences between predictions and actual values. By squaring the errors, this metric gives disproportionately more weight to larger errors.

Definition: “Average squared difference; penalizes larger errors more heavily.”

Formula: $MSE = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$

Benefits:

- Strongly penalizes large errors: If big errors are particularly undesirable, MSE highlights them.

- Mathematically convenient for optimization (its derivative is continuous).

Drawbacks:

- Units are squared: The resulting value isn’t in the same units as your target variable, making it harder to interpret directly.

- Sensitive to outliers: A few very large errors can significantly inflate the MSE.

3. Root Mean Squared Error (RMSE): Back to Interpretable Units

The Root Mean Squared Error (RMSE) is simply the square root of the MSE. This brings the error metric back into the same units as your original target variable, making it much more interpretable than MSE.

Definition: “Square root of MSE; returns error to original units.”

Formula: $RMSE = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}$

Benefits:

- Interpretable units: Easy to understand the magnitude of errors in the context of your data.

- Still penalizes large errors more than MAE.

Drawbacks:

- Still sensitive to outliers, though less so than MSE.

4. R-Squared (Coefficient of Determination): Explaining the Variance

R-squared, or the Coefficient of Determination, is a popular metric that tells you the proportion of the variance in the dependent variable that is predictable from the independent variables. In simpler terms, it indicates how well your model explains the variability of the target variable.

Formula: $R^2 = 1 - \frac{SS_{res}}{SS_{tot}}$

- $SS_{res}$ = Sum of Squared Residuals (sum of squared differences between actual and predicted values)

- $SS_{tot}$ = Total Sum of Squares (sum of squared differences between actual values and their mean)

Interpretation:

- An R-squared of 1 means your model perfectly predicts the variance in the target variable.

- An R-squared of 0 means your model explains none of the variance.

- A negative R-squared indicates that your model is worse than simply predicting the mean of the target variable.

Benefits:

- Provides a relative measure of fit, making it easy to compare models.

- Widely understood and used.

Drawbacks:

- Can be misleading: Adding more independent variables, even irrelevant ones, can increase R-squared.

- Doesn’t tell you if your model is biased.

5. Mean Absolute Percentage Error (MAPE): For Relative Errors

The Mean Absolute Percentage Error (MAPE) expresses the prediction error as a percentage of the actual values. This can be very useful when you want to understand the relative size of your errors.

Definition: Prediction error expressed as a percentage of actual values.

Formula: $MAPE = \frac{1}{n} \sum_{i=1}^{n} \left|\frac{y_i - \hat{y}_i}{y_i}\right| \times 100\%$

Benefits:

- Easy to interpret as a percentage.

- Useful for comparing models across different scales.

Drawbacks:

- Sensitive to small actual values: If $y_i$ is zero or very close to zero, MAPE can become undefined or extremely large.

- Biased towards models that under-forecast.
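The five regression formulas above fit in a few lines of plain Python. The sketch below is a from-scratch illustration, not a library API: `regression_metrics` is a hypothetical helper, and the actual and predicted values are made up so the arithmetic is easy to follow.

```python
import math


def regression_metrics(y_true, y_pred):
    """Compute MAE, MSE, RMSE, R-squared, and MAPE for paired values."""
    n = len(y_true)
    errors = [yt - yp for yt, yp in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    rmse = math.sqrt(mse)
    mean_y = sum(y_true) / n
    ss_res = sum(e * e for e in errors)                 # sum of squared residuals
    ss_tot = sum((yt - mean_y) ** 2 for yt in y_true)   # total sum of squares
    r2 = 1 - ss_res / ss_tot
    # MAPE divides by the actual values, so it breaks if any of them is zero.
    mape = 100 * sum(abs(e / yt) for e, yt in zip(errors, y_true)) / n
    return {"mae": mae, "mse": mse, "rmse": rmse, "r2": r2, "mape": mape}


# Toy values, invented for illustration (one prediction is off by 40).
y_true = [100.0, 200.0, 300.0, 400.0]
y_pred = [110.0, 190.0, 310.0, 360.0]

scores = regression_metrics(y_true, y_pred)
for name, value in scores.items():
    print(f"{name}: {value:.3f}")
```

Notice how the single 40-unit miss pulls RMSE (about 21.8) well above MAE (17.5): that gap is the squared-error penalty for large mistakes in action.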

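Here’s a quick comparison table to help you decide which regression metric to use:

| Metric | Interpretation | Sensitivity to Outliers | Units | When to Use |
| --- | --- | --- | --- | --- |
| MAE | Average absolute error | Low | Same as target | When you want an intuitive error size and large errors should not dominate |
| MSE | Average squared error | High | Squared target units | When large errors are particularly undesirable, or as an optimization objective |
| RMSE | Square root of MSE | High (less so than MSE) | Same as target | When you want to penalize large errors but keep interpretable units |
| R-squared | Proportion of variance explained | Sensitive (built on squared residuals) | Unitless | When you want a relative measure of fit to compare models |
| MAPE | Average error as a percentage of actual values | Sensitive to actual values near zero | Percent | When you need relative errors or compare across different scales |

| C3 AI | 8 | 9 | 7 | 8 | 9 |
| Scikit-learn | 9 | 10 | 9 | 9 | 9 |
| TensorFlow | 7 | 9 | 8 | 8 | 7 |
| PyTorch | 8 | 9 | 9 | 8 | 8 |
| MLflow | 8 | 8 | 7 | 9 | 8 |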

We’ve explored the foundational metrics for both classification and regression. Now, let’s talk about the unsung hero of reliable evaluation: validation strategies. Because a model that performs well on the data it learned from isn’t necessarily a good model; it’s just a good memorizer!

🧪 The Art of Validation: Cross-Validation, K-Fold, and Avoiding Data Leakage

You’ve got your model, you’ve trained it, and your chosen metrics on the training data look fantastic! Time to pop the champagne, right? 🥂 WRONG! (Unless you enjoy the taste of bitter disappointment later). One of the most critical lessons we’ve learned at ChatBench.org™ is that evaluating your model on the data it was trained on is a recipe for disaster.

Why? Because models are incredibly good at “memorizing” patterns in the training data, even noise. This leads to overfitting, where your model performs brilliantly on the data it’s seen but utterly fails when confronted with new, unseen data. As GeeksforGeeks wisely states, “Model evaluation assesses performance on unseen data to ensure the model generalizes rather than memorizes training data.” The goal isn’t just to get good numbers; it’s to build a model that will be genuinely useful in the real world.

This is where validation techniques come into play, helping us simulate how our model will perform on truly novel data.

1. The Holdout Method: A Simple Start

The simplest validation technique is the holdout method. You split your dataset into two distinct parts: a training set and a test set.

- Training Set: Used to train your model.

- Test Set: Kept completely separate and only used once at the very end to evaluate the final model’s performance.

How it works (step-by-step):

- Split your data: Typically, you’ll split your data into an 80/20 or 70/30 ratio for training and testing. For instance, using scikit-learn’s train_test_split function, you might do train_test_split(X, y, test_size=0.20, random_state=42). The random_state ensures your split is reproducible.

- Train the model: Fit your machine learning algorithm on the training set.

- Evaluate on test set: Make predictions on the test set and calculate your chosen metrics.
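The three holdout steps can be sketched with scikit-learn as follows. The synthetic dataset from `make_classification` and the choice of `LogisticRegression` are assumptions made purely so the example runs on its own; swap in your own data and model.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic data stands in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=200, n_features=5, random_state=0)

# Step 1: split 80/20; random_state makes the split reproducible.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.20, random_state=42
)

# Step 2: fit on the training set only.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

# Step 3: evaluate once on the untouched test set.
test_accuracy = accuracy_score(y_test, model.predict(X_test))
print(f"train size={len(X_train)}, test size={len(X_test)}")
print(f"holdout accuracy={test_accuracy:.3f}")
```

For imbalanced classification problems, passing `stratify=y` to `train_test_split` keeps the class ratio the same in both parts.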

Benefits:

- Simple and easy to implement.

- Provides a quick estimate of generalization error.

Drawbacks:

- Highly dependent on the split: A single random split might not be representative of the overall data distribution. If you get a “lucky” split, your performance estimate could be overly optimistic.

- Data waste: You’re holding back a significant portion of your data from training, which can be an issue for smaller datasets.

2. K-Fold Cross-Validation: The Robust Workhorse

K-Fold Cross-Validation is a more robust and widely preferred technique, especially when you want a more reliable estimate of your model’s performance. It cleverly uses all your data for both training and validation, but always ensures evaluation happens on unseen data.

How it works (step-by-step):

1. Divide into K folds: Your entire dataset is divided into K equally sized “folds” or subsets.

2. Iterate K times:

- In each iteration, one fold is designated as the validation set (or test set for that iteration).

- The remaining K-1 folds are combined to form the training set.

- The model is trained on the training set and evaluated on the validation set.

3. Average the results: After K iterations, you’ll have K performance scores. You then average these scores to get a single, more stable estimate of your model’s performance.

Example: If you use 5-fold cross-validation (KFold(n_splits=5, shuffle=True, random_state=42)):

- Iteration 1: Folds 2, 3, 4, 5 for training; Fold 1 for validation.

- Iteration 2: Folds 1, 3, 4, 5 for training; Fold 2 for validation.

- …and so on, until each fold has served as the validation set exactly once.
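To show the fold rotation itself, here is a from-scratch sketch in plain Python. It is not scikit-learn’s implementation: `k_fold_indices` is a hypothetical helper that only produces the index splits, and in practice you would train and score a model inside the loop, then average the K scores.

```python
import random


def k_fold_indices(n_samples, k, seed=42):
    """Yield (train_indices, val_indices) pairs for shuffled K-fold CV."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread any remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0)
                  for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, val
        start += size


folds = list(k_fold_indices(n_samples=10, k=5))
for i, (train, val) in enumerate(folds, start=1):
    print(f"Iteration {i}: validate on {sorted(val)}, "
          f"train on {len(train)} samples")
```

Every sample lands in a validation fold exactly once and in a training set K-1 times, which is why K-fold wastes no data.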

Benefits:

- More robust estimate: Reduces the variance of the performance estimate compared to a single holdout split.

- Efficient data usage: Every data point gets to be in a training set and a validation set.

- Helps detect if your model is sensitive to particular subsets of the data.

Drawbacks:

- Computationally more expensive: You’re training and evaluating your model K times.

3. Avoiding Data Leakage: The Silent Model Killer 🕵️‍♀️

This is a big one, folks. Data leakage is a subtle but deadly pitfall in model evaluation. It occurs when information from your test set “leaks” into your training process, leading to an artificially inflated performance score that won’t hold up in the real world.

Common scenarios for data leakage:

- Feature engineering before splitting: If you scale your entire dataset (including the test set) before splitting, information about the test set’s distribution seeps into the training data.

- Including target-related features: Accidentally including a feature that is directly or indirectly derived from the target variable (e.g., using a future event to predict a past one).

- Time-series data: Randomly splitting time-series data can lead to leakage, as future information might be used to predict the past. Always use a time-based split for such data.
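For the time-series case, a time-based split simply means sorting by timestamp and cutting once, so nothing in the test set is earlier than anything in the training set. A minimal sketch, assuming records are `(timestamp, value)` tuples with ISO-formatted date strings (which sort chronologically as text); `time_based_split` and the sample records are hypothetical:

```python
def time_based_split(records, train_fraction=0.8):
    """Split (timestamp, value) records so training data precedes test data."""
    ordered = sorted(records, key=lambda record: record[0])
    cutoff = int(len(ordered) * train_fraction)
    return ordered[:cutoff], ordered[cutoff:]


# Hypothetical daily observations, deliberately out of order.
records = [
    ("2024-01-05", 13),
    ("2024-01-01", 10),
    ("2024-01-03", 12),
    ("2024-01-02", 11),
    ("2024-01-04", 14),
]

train, test = time_based_split(records)
print("train:", [timestamp for timestamp, _ in train])
print("test: ", [timestamp for timestamp, _ in test])
```

For repeated evaluation on temporal data, scikit-learn’s `TimeSeriesSplit` applies the same idea across several expanding windows.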

Our ChatBench.org™ anecdote: We once had a client whose fraud detection model boasted near-perfect accuracy in development. Everyone was ecstatic! But upon deployment, it performed terribly. After much head-scratching, we discovered that a feature indicating “account status after fraud investigation” was being generated before the train-test split. Naturally, if an account was marked “closed due to fraud” in the features, the model had a pretty good idea what the target variable (fraudulent transaction) would be! It was a painful, but invaluable, lesson in the insidious nature of data leakage.

How to avoid data leakage:

- Always split your data FIRST. Fit any preprocessing, feature engineering, or scaling on the training set only, then apply that fitted transformation unchanged to the test set. Never compute statistics (means, standard deviations, encodings) from the test set.

- Be extremely careful with features that seem too good to be true. Question their origin and whether they would genuinely be available at the time of prediction.

- For time-series data, ensure your training data always precedes your validation/test data chronologically.
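Here is a minimal sketch of leakage-safe scaling in plain Python: the mean and standard deviation come from the training values only and are then reused for the test values. The numbers are made up, and `fit_standardizer` and `transform` are illustrative helpers rather than a library API.

```python
import statistics


def fit_standardizer(values):
    """Learn the scaling statistics; call this on TRAINING data only."""
    return statistics.fmean(values), statistics.pstdev(values)


def transform(values, mean, std):
    """Apply previously learned statistics to any split."""
    return [(v - mean) / std for v in values]


# Toy feature values, invented for illustration.
train = [1.0, 2.0, 3.0, 4.0, 5.0]
test = [10.0, 12.0]

mean, std = fit_standardizer(train)          # the test set is never inspected
train_scaled = transform(train, mean, std)
test_scaled = transform(test, mean, std)     # reuses the training statistics

print(f"train mean={mean:.2f}, std={std:.3f}")
print("scaled test:", [round(v, 3) for v in test_scaled])
```

In scikit-learn, wrapping the scaler and the model in a `Pipeline` gives you the same guarantee inside every cross-validation fold.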

Mastering these validation techniques and diligently guarding against data leakage are fundamental steps toward building machine learning models that not only perform well on paper but also truly deliver value in the wild.

⚖️ The Bias-Variance Tradeoff: Finding the Sweet Spot for Generalization

Ah, the Bias-Variance Tradeoff – a concept so fundamental to machine learning that it’s practically a rite of passage for every aspiring data scientist. It’s the eternal struggle, the yin and yang, the Goldilocks problem of model building: finding a model that’s just right to generalize to new data without being too simple or too complex.

Imagine your model as an archer trying to hit a target (the true relationship between your features and the target variable).

🎯 Bias: The Consistent Miss (Underfitting)

Bias refers to the error introduced by approximating a real-world problem, which may be complex, by a much simpler model. A model with high bias makes strong assumptions about the data, often oversimplifying the underlying relationships.

- Analogy: Our archer consistently misses the bullseye in the same direction, perhaps always aiming too high and to the left. Their aim is consistently off.

- In ML terms: This leads to underfitting. The model is too simple to capture the patterns in the training data, resulting in poor performance on both training and test sets. It hasn’t learned enough.

- Symptoms: Low training accuracy/performance, low validation accuracy/performance.

- Causes: Using a linear model for non-linear data, too few features, overly aggressive regularization.

- Solutions: Use a more complex model (e.g., polynomial regression instead of linear, a neural network instead of logistic regression), add more relevant features, reduce regularization.

🏹 Variance: The Scattered Shots (Overfitting)

Variance refers to the amount that the estimate of the target function will change if different training data were used. A model with high variance is overly sensitive to the specific training data it saw, picking up on noise and specific patterns that don’t generalize.

- Analogy: Our archer’s shots are scattered all over the target. Sometimes they hit the bullseye, sometimes they miss wildly, but there’s no consistent pattern. Their aim is inconsistent.

- In ML terms: This leads to overfitting. The model has learned the training data too well, including the noise, and struggles to perform on unseen data. It’s memorized rather than learned.

- Symptoms: High training accuracy/performance, but significantly lower validation accuracy/performance. A large gap between training and validation scores.

- Causes: Using an overly complex model (e.g., a deep neural network with too many layers, a decision tree with too much depth), too many features relative to data points, insufficient regularization.

- Solutions: Use a simpler model, gather more training data, perform feature selection, apply regularization techniques (L1/L2 regularization, dropout for neural networks), use cross-validation.

The Tradeoff: Finding Goldilocks

The “tradeoff” comes from the fact that you generally can’t minimize both bias and variance simultaneously.

- Increasing model complexity typically decreases bias (the model can capture more complex patterns) but increases variance (it becomes more sensitive to the training data).

- Decreasing model complexity typically increases bias (it might oversimplify) but decreases variance (it becomes less sensitive to the specific training data).
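You can watch the tradeoff happen by fitting polynomials of increasing degree to the same noisy data. The sketch below uses NumPy’s `Polynomial.fit`; the noisy sine data, the noise level, the sample sizes, and the three degrees are all assumptions chosen for illustration.

```python
import numpy as np
from numpy.polynomial import Polynomial

rng = np.random.default_rng(0)


def noisy_sine(n):
    """Synthetic data: one sine period plus Gaussian noise."""
    x = rng.uniform(0.0, 1.0, n)
    y = np.sin(2 * np.pi * x) + rng.normal(0.0, 0.2, n)
    return x, y


x_train, y_train = noisy_sine(20)    # small training set
x_val, y_val = noisy_sine(200)       # larger held-out set

results = {}
for degree in (1, 3, 12):
    model = Polynomial.fit(x_train, y_train, deg=degree)  # least-squares fit
    train_mse = float(np.mean((model(x_train) - y_train) ** 2))
    val_mse = float(np.mean((model(x_val) - y_val) ** 2))
    results[degree] = (train_mse, val_mse)
    print(f"degree={degree:2d}  train MSE={train_mse:.4f}  val MSE={val_mse:.4f}")
```

Training error can only go down as the degree rises, because each higher-degree model contains the lower-degree ones. Validation error is the number to watch: typically the straight line underfits, and the high-degree polynomial starts chasing noise, though the exact values depend on the random seed.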

Our goal at ChatBench.org™ is always to find the sweet spot – a model complexity that achieves a good balance between bias and variance, leading to optimal generalization performance on unseen data. This often involves careful hyperparameter tuning and using diagnostic tools like learning curves, which we’ll discuss next! It’s a fundamental challenge in AI Infrastructure design, ensuring your computational resources are effectively used to find this balance.

🚀 Hyperparameter Tuning: Grid Search, Random Search, and Bayesian Optimization Showdown

So, you’ve chosen your model, picked your metrics, and even mastered the art of validation. But wait, what about all those pesky settings that aren’t learned from the data itself? We’re talking about hyperparameters – the external configuration parameters of an algorithm whose values cannot be estimated from data. Think of them as the “knobs and dials” you turn to get the best performance out of your machine learning model.

Examples of hyperparameters include:

- Learning rate in neural networks.

- Number of trees in a Random Forest.

- Depth of a Decision Tree.

- Regularization strength (e.g., C in SVMs, alpha in Lasso/Ridge).

- Number of clusters in K-Means.

Finding the optimal combination of these hyperparameters is crucial for squeezing every drop of performance out of your model and achieving that elusive bias-variance sweet spot. Here’s a showdown of the most common strategies:

1. Grid Search: The Exhaustive Explorer 🗺️

Grid Search is the most straightforward (and often brute-force) method. You define a grid of hyperparameter values to explore, and the algorithm systematically tries every single combination.

How it works (step-by-step):

- Define a dictionary or list of possible values for each hyperparameter you want to tune.

- The algorithm then builds a model for every possible combination of these values.

- Each model is evaluated using cross-validation on the training data.

- The combination that yields the best performance (according to your chosen metric) is selected as the optimal set of hyperparameters.
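Stripped to its core, the grid search loop above is just “enumerate every combination, score each, keep the best.” The sketch below shows that mechanic in plain Python. The search space is hypothetical, and `mock_cv_score` is a stand-in for a real cross-validated score (it is not a real model).

```python
from itertools import product

# Hypothetical search space, invented for illustration.
param_grid = {
    "max_depth": [2, 4, 8],
    "min_samples_leaf": [1, 5],
    "learning_rate": [0.01, 0.1],
}


def mock_cv_score(params):
    """Stand-in for a cross-validated score; replace with real training + CV."""
    return (-0.05 * abs(params["max_depth"] - 4)
            - 0.01 * abs(params["min_samples_leaf"] - 5)
            - abs(params["learning_rate"] - 0.1))


# Build every combination of values (the Cartesian product of the grid).
names = list(param_grid)
combinations = [dict(zip(names, values))
                for values in product(*param_grid.values())]

best_params = max(combinations, key=mock_cv_score)
print(f"evaluated {len(combinations)} combinations")
print(f"best: {best_params}")
```

The grid here has 3 × 2 × 2 = 12 combinations; scikit-learn’s GridSearchCV runs the same enumeration with real cross-validation, so with 5 folds that would already be 60 model fits.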

Benefits:

- Guaranteed to find the best combination within the defined grid.

- Easy to understand and implement (e.g., GridSearchCV in scikit-learn).

Drawbacks:

- Computationally expensive: The number of models to train is the product of the number of values tried for each hyperparameter, so it grows exponentially with the number of hyperparameters. If you have 3 hyperparameters with 10 possible values each, that’s $10^3 = 1000$ models, and each one is trained once per cross-validation fold! This can quickly become infeasible.

- Inefficient: Spends equal time on unpromising regions of the search space.

2. Random Search: The Efficient Explorer 🎲

Random Search is often surprisingly more efficient than Grid Search, especially for a large number of hyperparameters. Instead of trying every combination, it samples a fixed number of random combinations from the specified hyperparameter distributions.

How it works (step-by-step):

- Define distributions (e.g., uniform, log-uniform) or ranges for each hyperparameter.

- Specify a fixed number of iterations (e.g., 100).

- In each iteration, random values are sampled from the distributions for each hyperparameter.

- A model is built and evaluated with cross-validation for each sampled combination.

- The combination with the best performance is chosen.
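The random search steps above can be sketched the same way: sample, score, keep the best. In this plain-Python illustration the ranges are hypothetical and `mock_cv_score` is again a stand-in for real cross-validation. The learning rate is drawn log-uniformly by sampling its exponent uniformly, a common choice for parameters that span several orders of magnitude.

```python
import math
import random


def sample_params(rng):
    """Draw one random hyperparameter combination (hypothetical ranges)."""
    return {
        # Log-uniform between 1e-4 and 1e-1: sample the exponent uniformly.
        "learning_rate": 10 ** rng.uniform(-4, -1),
        # Uniform integer between 2 and 12, inclusive.
        "max_depth": rng.randint(2, 12),
    }


def mock_cv_score(params):
    """Stand-in for a cross-validated score; replace with real training + CV."""
    return (-abs(math.log10(params["learning_rate"]) + 2)
            - 0.1 * abs(params["max_depth"] - 6))


rng = random.Random(42)          # fixed seed for reproducibility
n_iter = 100                     # the budget you control
trials = [sample_params(rng) for _ in range(n_iter)]
best_params = max(trials, key=mock_cv_score)

print(f"evaluated {len(trials)} random combinations")
print(f"best: {best_params}")
```

Unlike a grid, the cost here is fixed by `n_iter` no matter how many hyperparameters you add, which is what makes the method scale.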

Benefits:

- More efficient: Often finds a good set of hyperparameters much faster than Grid Search, especially when many hyperparameters are irrelevant. Research has shown that Random Search is more likely to find better results in high-dimensional spaces.

- Scalable: You control the number of iterations, making it easier to manage computational resources.

Drawbacks:

- Not guaranteed to find the absolute best combination, but often finds a “good enough” one.

3. Bayesian Optimization: The Intelligent Explorer 🧠

Bayesian Optimization is a more sophisticated and “intelligent” approach to hyperparameter tuning. Instead of random or exhaustive sampling, it uses a probabilistic model (often a Gaussian Process) to model the objective function (your model’s performance metric) and then strategically chooses the next hyperparameter combination to evaluate.

How it works (intuition):

- It starts with a few initial evaluations (like a small random search).

- Based on these results, it builds a “surrogate model” that estimates the performance for unseen hyperparameter combinations and quantifies the uncertainty of these estimates.

- It then uses an “acquisition function” (e.g., Expected Improvement) to decide which hyperparameter combination to try next. This function balances exploration (trying combinations in uncertain regions) and exploitation (trying combinations in regions that are predicted to perform well).

- This process iteratively refines the surrogate model and zooms in on the optimal hyperparameters.

Benefits:

- Highly efficient: Often finds better hyperparameters with significantly fewer evaluations than Grid or Random Search. This is a game-changer for computationally expensive models.

- Leverages past results to guide future searches.

Drawbacks:

- More complex to implement and understand.

- Can be sensitive to the choice of surrogate model and acquisition function.

Tools for the Tuning Journey:

While scikit-learn provides GridSearchCV and RandomizedSearchCV for basic grid and random searches, for Bayesian Optimization, you’ll often turn to dedicated libraries:

- Hyperopt: A popular Python library for Bayesian optimization.

- Optuna: Another excellent framework for hyperparameter optimization, known for its flexibility and ease of use.

For computationally intensive tuning, especially with deep learning models, you’ll want to leverage powerful cloud computing platforms. Services like DigitalOcean, Paperspace, and RunPod offer GPU instances that can drastically speed up your hyperparameter search.


Choosing the right tuning strategy depends on your budget, time constraints, and the complexity of your model. For quick initial experiments, Random Search is often a great starting point.

For production-grade models where every bit of performance counts, Bayesian Optimization is usually the way to go.

📉 Learning Curves and Validation Curves: Diagnosing Underfitting and Overfitting

Hyperparameter tuning is fantastic for optimizing your model, but how do you know if you’re even in the right ballpark? This is where learning curves and validation curves become your diagnostic superpowers! These visual tools help us understand whether our model is suffering from underfitting (high bias) or overfitting (high variance) and guide our efforts to find that elusive sweet spot for generalization.

Remember our archer from the bias-variance tradeoff? These curves are like the archer’s coach, telling them if they need to practice more, adjust their stance, or get a new bow.

1. Learning Curves: What More Data Can Tell You 📈

A learning curve plots your model’s performance (e.g., accuracy for classification, MSE for regression) on both the training set and a validation set as a function of the amount of training data used.

How to interpret:

- X-axis: Number of training examples (or training set size).

- Y-axis: Performance score (e.g., accuracy, $R^2$, or a negated error such as negative MSE, so that higher is always better).

Let’s look at what different patterns tell us:

Scenario A: High Bias (Underfitting)

- Training score is low: The model can’t even learn the training data well.

- Validation score is low and very close to the training score: Adding more data won’t help much because the model is fundamentally too simple.

- Diagnosis: Your model is underfitting. It has high bias.

- Solution: Try a more complex model (e.g., add more layers to a neural network, increase polynomial degree), add more relevant features, reduce regularization.

Scenario B: High Variance (Overfitting)

- Training score is high: The model performs excellently on the training data.

- Validation score is significantly lower than the training score: There’s a large gap between the two curves.

- As training data increases, the validation score might start to catch up to the training score, but slowly.

- Diagnosis: Your model is overfitting. It has high variance.

- Solution: Gather more training data (if possible), simplify the model (e.g., reduce neural network layers, prune a decision tree), apply stronger regularization (L1/L2, dropout), perform feature selection.

Scenario C: Just Right (Good Fit)

- Training score is high and validation score is also high.

- The gap between the training and validation scores is small and acceptable.

- Diagnosis: Your model is achieving a good balance between bias and variance.

Quick Tip: If your learning curve shows high variance (overfitting), getting more data is often the most effective solution if feasible!
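If you want the numbers behind a learning curve, scikit-learn’s `learning_curve` helper computes them for you. The sketch below is one possible setup, not the only one: the synthetic dataset, the unpruned decision tree (picked because it overfits readily), and the five training sizes are all assumptions for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeClassifier

# Synthetic data stands in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=500, n_features=10, random_state=0)

train_sizes, train_scores, val_scores = learning_curve(
    DecisionTreeClassifier(random_state=0),   # unpruned tree: prone to overfit
    X, y,
    train_sizes=[80, 160, 240, 320, 400],     # absolute training-set sizes
    cv=5,
    scoring="accuracy",
)

# Each row holds one score per CV fold; average across folds.
mean_train = train_scores.mean(axis=1)
mean_val = val_scores.mean(axis=1)
for n, tr, va in zip(train_sizes, mean_train, mean_val):
    print(f"n={int(n):3d}  train={tr:.3f}  val={va:.3f}  gap={tr - va:.3f}")
```

A training score pinned near 1.0 with a clearly lower validation score is the Scenario B pattern; plotting `mean_train` and `mean_val` against `train_sizes` gives you the curve itself.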

2. Validation Curves: Tuning a Single Hyperparameter 📉

A validation curve plots your model’s performance on both the training set and a validation set as a function of a single hyperparameter’s value. This is incredibly useful for understanding how a specific hyperparameter impacts your model’s bias and variance.

How to interpret:

- X-axis: Value of a specific hyperparameter (e.g., max_depth for a decision tree, C for an SVM).

- Y-axis: Performance score.

Let’s consider a decision tree’s max_depth hyperparameter:

Scenario A: Low Complexity (High Bias)

- Low max_depth values: Both training and validation scores are low. The model is too simple to capture patterns.

- Diagnosis: High bias, underfitting.

Scenario B: High Complexity (High Variance)

- High max_depth values: Training score is very high, but the validation score starts to drop significantly. The model is memorizing the training data and noise.

- Diagnosis: High variance, overfitting.

Scenario C: Optimal Complexity

- Intermediate max_depth values: There’s a sweet spot where the validation score is maximized while the gap between training and validation scores is still small.

- Diagnosis: Optimal hyperparameter value for generalization.
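The max_depth sweep described above maps directly onto scikit-learn’s `validation_curve` helper. In this sketch the synthetic dataset and the list of depths are assumptions for illustration; on your own data the sweet spot will sit somewhere else.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import validation_curve
from sklearn.tree import DecisionTreeClassifier

# Synthetic data stands in for a real dataset (assumption for illustration).
X, y = make_classification(n_samples=500, n_features=10, random_state=0)
depths = [1, 2, 4, 8, 16]

train_scores, val_scores = validation_curve(
    DecisionTreeClassifier(random_state=0),
    X, y,
    param_name="max_depth",
    param_range=depths,
    cv=5,
    scoring="accuracy",
)

# Each row holds one score per CV fold; average across folds.
mean_train = train_scores.mean(axis=1)
mean_val = val_scores.mean(axis=1)
for depth, tr, va in zip(depths, mean_train, mean_val):
    print(f"max_depth={depth:2d}  train={tr:.3f}  val={va:.3f}")

best_depth = depths[int(mean_val.argmax())]
print(f"best max_depth by validation score: {best_depth}")
```

Read the printout like the three scenarios: both scores low at small depths, training score climbing toward 1.0 at large depths, and the best validation score somewhere in between.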

Our ChatBench.org™ Insight: We often use these curves in tandem. Learning curves give us a macro view of whether we need more data or a different model complexity class. Validation curves then help us micro-tune specific hyperparameters within that chosen model class. Together, they form a powerful duo for diagnosing and resolving performance issues, ensuring our models are robust and ready for deployment. This iterative diagnostic process is key to successful AI Agents development, where robust performance is paramount.

🧠 Model Interpretability: SHAP, LIME, and Understanding the “Black Box”

For years, many advanced machine learning models, especially deep neural networks, were often referred to as “black boxes.” They could make incredibly accurate predictions, but why they made those predictions remained a mystery. In many applications, particularly those with high stakes like healthcare, finance, or legal decisions, this lack of transparency is no longer acceptable. Enter the burgeoning field of Model Interpretability!

At ChatBench.org™, we firmly believe that understanding how a model arrives at its decision is almost as important as the decision itself. This isn’t just about satisfying curiosity; it’s about:

- Building Trust: If stakeholders (or even end-users) don’t understand or trust a model, they won’t use it.

- Debugging and Improving Models: Interpretability can reveal hidden biases, data quality issues, or unexpected feature interactions that lead to errors.

- Compliance and Regulation: Many industries now require explainable AI (XAI) for regulatory compliance (e.g., GDPR’s “right to explanation”).

- Scientific Discovery: Understanding model mechanisms can lead to new insights into the underlying domain.

Let’s shine a light into that black box with two of the most popular and powerful interpretability techniques: SHAP and LIME.

1. SHAP (SHapley Additive exPlanations): Fair Feature Contributions 🤝

SHAP (SHapley Additive exPlanations) is a game-theory-based approach to explain the output of any machine learning model. It connects optimal credit allocation with local explanations using Shapley values, a concept from cooperative game theory.

Intuition: Imagine each feature in your model is a player in a game, and the prediction is the payout. Shapley values tell you how to fairly distribute the “payout” (the prediction) among the “players” (the features). It calculates the average marginal contribution of a feature value across all possible coalitions (combinations) of features.

Benefits:

- Global and Local Explanations: Can explain individual predictions (local) and understand overall model behavior (global).

- Consistency and Fairness: Based on solid theoretical foundations, ensuring consistent and fair attribution of feature importance.

- Model-Agnostic: Can be applied to any machine learning model (linear models, tree models, neural networks, etc.).

- Rich Visualizations: The SHAP library provides powerful visualizations like force plots, summary plots, and dependence plots.

Example: If a loan application is denied, SHAP can tell you that the applicant’s credit score contributed negatively to the prediction by X amount, while their income contributed positively by Y amount, relative to the average prediction.
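For a handful of features you can compute Shapley values exactly by brute force, which is a nice way to demystify the idea. The sketch below is a toy illustration, not how the SHAP library works internally (SHAP relies on faster algorithms and background data). The loan-style `model`, the feature values, and the convention that a “missing” feature is set to a baseline of 0 are all assumptions made for this example.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating every coalition (small n only)."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [j for j in players if j != i]
        for size in range(len(others) + 1):
            # Weight of a coalition of this size in the Shapley average.
            weight = (factorial(size) * factorial(n_features - size - 1)
                      / factorial(n_features))
            for coalition in combinations(others, size):
                with_i = value_fn(set(coalition) | {i})
                without_i = value_fn(set(coalition))
                phi[i] += weight * (with_i - without_i)
    return phi


# Hypothetical applicant, features expressed as deviations from the average.
x = {"credit_score": -2.0, "income": 1.5, "debt": -0.5}
names = list(x)


def model(f):
    """Toy scoring model with one interaction term (assumption)."""
    return (3.0 * f["credit_score"] + 2.0 * f["income"]
            + f["credit_score"] * f["debt"])


def value_fn(coalition):
    """Model output when only features in the coalition are 'known'."""
    masked = {name: (x[name] if idx in coalition else 0.0)
              for idx, name in enumerate(names)}
    return model(masked)


phi = shapley_values(value_fn, len(names))
for name, contribution in zip(names, phi):
    print(f"{name}: {contribution:+.2f}")
```

The contributions come out as -5.50, +3.00, and +0.50, and they sum to -2.00, exactly the gap between this applicant’s score and the baseline score. The interaction term is split evenly between the two features involved, which is the “fair payout” idea at work.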

2. LIME (Local Interpretable Model-agnostic Explanations): Explaining Individual Predictions Locally 🔬

LIME (Local Interpretable Model-agnostic Explanations) focuses on explaining individual predictions of any classifier or regressor. Its core idea is to approximate the behavior of the complex “black box” model with a simpler, interpretable model (like a linear model or decision tree) in the vicinity of the specific prediction you want to explain.

Intuition: Think of it like this: your complex model is a winding, mountainous terrain. LIME doesn’t try to map the entire terrain. Instead, for a specific point (an individual prediction), it takes a small, flat patch around that point and fits a simple, understandable model to it. This local, simple model can then explain why the complex model made that particular prediction.

How it works (simplified):

- Take the data point you want to explain.

- Perturb it multiple times to create new, slightly modified data points.

- Get predictions from the black-box model for these perturbed points.

- Weight the perturbed points by their proximity to the original data point.

- Train a simple, interpretable model (e.g., linear regression, decision tree) on these weighted, perturbed data points and their black-box predictions.

- The coefficients/rules of this simple model provide the local explanation.
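The six steps above can be sketched in plain Python. This is an illustration of the idea, not the LIME library’s implementation: the `black_box` function, the Gaussian perturbation scale, and the exponential kernel width are all assumptions we picked for the demo, and the surrogate is a weighted linear regression solved with a small hand-written solver.

```python
import math
import random


def black_box(x):
    # Hypothetical nonlinear model standing in for the "black box".
    return math.sin(x[0]) + x[1] ** 2


def solve_linear_system(a, b):
    """Solve a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def lime_explain(predict, point, n_samples=2000, scale=0.1,
                 kernel_width=0.25, seed=0):
    rng = random.Random(seed)
    d = len(point)
    # Weighted normal equations: (X^T W X) w = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        # Steps 1-2: perturb the point of interest with Gaussian noise.
        delta = [rng.gauss(0.0, scale) for _ in range(d)]
        sample = [p + dlt for p, dlt in zip(point, delta)]
        # Step 3: query the black box.
        y = predict(sample)
        # Step 4: weight the sample by its proximity to the original point.
        dist2 = sum(v * v for v in delta)
        w = math.exp(-dist2 / kernel_width ** 2)
        # Step 5: add the sample to the weighted least-squares system.
        row = [1.0] + delta  # intercept plus offsets from the point
        for i in range(d + 1):
            xtwy[i] += w * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * row[i] * row[j]
    coef = solve_linear_system(xtwx, xtwy)
    # Step 6: the surrogate's coefficients are the local explanation.
    return {"intercept": coef[0], "weights": coef[1:]}


explanation = lime_explain(black_box, [0.0, 1.0])
print(explanation)
```

Around the point `[0.0, 1.0]` the surrogate’s weights should land close to the true local slopes of the toy function (about 1 for the first feature and 2 for the second). Try a different `kernel_width` or `scale` and the explanation shifts, which is exactly the sensitivity listed under the drawbacks.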

Benefits:

- Model-Agnostic: Works with any black-box model.

- Local Explanations: Excellent for understanding why a single prediction was made.

- Intuitive: Explanations are often presented in an easy-to-understand format (e.g., “Feature X increased the prediction by Y”).

Drawbacks:

- Local only: Explanations are valid only for the specific data point and its immediate neighborhood.

- Can be sensitive to the choice of interpretable model and perturbation strategy.

Tools for Interpretability:

- SHAP library: The official Python library for SHAP values, highly recommended.

- 👉 Shop SHAP on: GitHub SHAP

- LIME library: The official Python library for LIME explanations.

- 👉 Shop LIME on: GitHub LIME

- ELI5: A Python library for inspecting and debugging machine learning classifiers and regressors.

- InterpretML: A Microsoft project that helps understand models.

At ChatBench.org™, we’ve seen how integrating interpretability into the development workflow can transform a project. It’s not just a nice-to-have; it’s becoming a must-have for responsible AI development and deployment, especially as we see more complex AI Agents being built. Don’t let your models remain mysterious black boxes!

⚠️ Common Pitfalls: Data Snooping, Selection Bias, and Metric Manipulation

Even with the best intentions and a solid grasp of metrics and validation, the path to reliable model evaluation is fraught with peril!

Our ChatBench.org™ team has seen countless projects stumble into common traps that lead to overly optimistic (and ultimately misleading) performance estimates. Let’s shine a light on these lurking dangers so you can steer clear!

1. Data Snooping: Peeking at the Answers 🤫

Data snooping (a form of data leakage in which information from the test data is used during model development) is like accidentally peeking at the answer key before taking an exam. It occurs when information from your test set inadvertently influences any part of your model development process, from feature engineering to model selection.

The Problem: If you “snoop” on your test data, your model will appear to perform better than it actually will on truly unseen data. You’ve essentially trained a model that’s optimized for that specific test set, rather than for generalizable patterns.

Our Anecdote: We once worked on a project where the team was trying to predict equipment failures. They had a fantastic F1-score on their test set. However, when the model went into production, its performance plummeted. We traced the issue back to a feature that was created by calculating the mean of a sensor reading across the entire dataset, including the test set, before the train-test split. The model implicitly “knew” the average behavior of the test set, giving it an unfair advantage!

How to avoid it:

- Strictly separate your test set FIRST. This is the golden rule. The test set should be locked away and only touched once for final evaluation.

- Perform all preprocessing steps (scaling, imputation, feature selection, feature engineering) only on the training data, and then apply the learned transformations to the validation/test data.

- Use pipelines (e.g., scikit-learn Pipelines) to encapsulate preprocessing and model training, ensuring proper data flow.
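Here is a minimal sketch of the “split first, fit on train only” rule, using nothing but the standard library. The `Standardizer` class is a hypothetical helper we wrote to mimic the fit/transform pattern, and the sensor readings are synthetic; a pipeline tool such as scikit-learn’s Pipeline applies the same pattern for you inside cross-validation.

```python
import random
from statistics import mean, pstdev


class Standardizer:
    """Hypothetical minimal scaler that mimics the fit/transform pattern."""

    def fit(self, values):
        self.mean_ = mean(values)
        self.std_ = pstdev(values) or 1.0  # guard against zero variance
        return self

    def transform(self, values):
        return [(v - self.mean_) / self.std_ for v in values]


rng = random.Random(42)
# Synthetic sensor readings; the last 20 rows drift upward on purpose.
readings = ([rng.gauss(50, 5) for _ in range(80)]
            + [rng.gauss(70, 5) for _ in range(20)])

# 1. Split FIRST (here a simple chronological split).
train, test = readings[:80], readings[80:]

# 2. Fit the preprocessing on the training data only ...
scaler = Standardizer().fit(train)

# 3. ... then apply the learned statistics to both splits.
train_scaled = scaler.transform(train)
test_scaled = scaler.transform(test)

# The leaky alternative: statistics computed on the full dataset.
leaky = Standardizer().fit(readings)

print(f"train-only mean: {scaler.mean_:.2f}")
print(f"leaky mean:      {leaky.mean_:.2f}  (pulled toward the test rows)")
```

The leaky scaler’s mean already contains information about the test rows, which is the same mistake as in the equipment-failure anecdote above. With the train-only scaler, the shifted test readings show up as unusually large standardized values, which is what an honest evaluation should reveal.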

2. Selection Bias: The Unrepresentative Sample 📉

Selection bias occurs when your training data is not a true, random representation of the population or real-world data your model will encounter. This can lead to a model that performs well on your biased training data but poorly in production.

The Problem: If your data collection process inherently favors certain types of samples or excludes others, your model will learn those biases.

Example:

- Online surveys: If you only collect data from users who actively participate in online surveys, your model might not generalize to users who don’t.

- Historical data: A model trained on historical data from a specific economic period might perform poorly if deployed during a vastly different economic climate.

- Demographic bias: Training a facial recognition model predominantly on images of one demographic group will likely lead to poorer performance on other groups.

How to avoid it:

- Ensure random sampling: Whenever possible, use proper random sampling techniques during data collection.

- Stratified sampling: For classification tasks with imbalanced classes, use stratified sampling to ensure each split (train/test/validation) has a proportional representation of each class.

- Monitor data drift: Continuously monitor your production data to detect if its distribution starts to diverge significantly from your training data, indicating potential selection bias or concept drift. This is a key concern we highlight in AI News when discussing model deployment.
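As a rough illustration of stratified sampling, the sketch below splits indices class by class so that the rare class appears in both splits in the same proportion. The `stratified_split` helper and the 95/5 label mix are hypothetical; in practice most ML libraries ship an equivalent option, so treat this as a picture of the idea rather than something to copy into production.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with per-class proportions preserved."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        # Keep at least one test example per class when the class has 2+ members.
        if len(indices) > 1:
            n_test = max(1, round(len(indices) * test_fraction))
        else:
            n_test = 0
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Hypothetical imbalanced dataset: 95 negatives, 5 positives.
labels = [0] * 95 + [1] * 5
train_idx, test_idx = stratified_split(labels, test_fraction=0.2)

train_counts = Counter(labels[i] for i in train_idx)
test_counts = Counter(labels[i] for i in test_idx)
print("train:", dict(train_counts))
print("test: ", dict(test_counts))
```

A purely random 20% split of this dataset could easily end up with zero positives in the test set, which would make recall impossible to measure. Splitting per class removes that risk.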

3. Metric Manipulation (or “Gaming the Metric”): Looking Good on Paper 🎭

This pitfall is less about coding mistakes and more about focusing, intentionally or not, so narrowly on a single metric that you lose sight of the broader objective. Metric manipulation or “gaming the metric” happens when you optimize a model to achieve a high score on a specific metric, even if that metric doesn’t truly reflect the desired real-world outcome.

The Problem: You can achieve impressive numbers on a chosen metric, but the model might be useless or even harmful in practice.

Example:

- Optimizing for accuracy on imbalanced data: As discussed, a 99.9% accurate fraud detector that never flags fraud is useless. You’ve “gamed” the accuracy metric.

- Over-optimizing for F1-score without considering business context: An F1-score might be high, but if the business cost of a false positive is 100x higher than a false negative, you might need to prioritize precision, even if it lowers the F1-score slightly.

- Early stopping based on training loss: Stopping training when training loss is minimized, rather than validation loss, leads to overfitting and poor generalization.
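The fraud-detector example is easy to reproduce. The sketch below uses made-up numbers (10,000 transactions, 10 of them fraudulent) and two hypothetical detectors: one that never flags anything, and one that catches 8 of the 10 frauds at the price of 30 false alarms.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = fraud)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical data: the first 10 transactions are fraud, the rest are legitimate.
y_true = [1] * 10 + [0] * 9990

# Detector A never flags fraud.
never_flags = [0] * 10000
# Detector B catches 8 frauds, misses 2, and raises 30 false alarms.
useful = [1] * 8 + [0] * 2 + [1] * 30 + [0] * 9960

metrics_a = classification_metrics(y_true, never_flags)
metrics_b = classification_metrics(y_true, useful)
print("never flags:", metrics_a)
print("useful:     ", metrics_b)
```

Detector A wins on accuracy (99.9% versus 99.68%) while catching zero fraud. Only recall and F1 expose the difference, which is why accuracy alone is a poor target on imbalanced data.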

How to avoid it:

- Define success holistically: Don’t rely on a single metric. Use a suite of metrics that collectively capture different aspects of performance.

- Align metrics with business objectives: Always ask: “Does this metric truly reflect what we want to achieve in the real world?”

- Consider the costs of errors: Understand the financial, ethical, and reputational costs associated with false positives and false negatives.

- A/B testing and real-world deployment: Ultimately, the true test of a model is its performance in a live environment. Offline metrics are a proxy; online A/B tests are the final arbiter.
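One way to put “consider the costs of errors” into practice is to pick the decision threshold that minimizes total business cost on a validation set. The labels, scores, and cost figures below are invented for illustration, and the 100x ratio simply mirrors the example given earlier in this section.

```python
def total_cost(y_true, scores, threshold, cost_fp, cost_fn):
    """Sum the cost of every false positive and false negative at a threshold."""
    cost = 0.0
    for truth, score in zip(y_true, scores):
        predicted = 1 if score >= threshold else 0
        if predicted == 1 and truth == 0:
            cost += cost_fp
        elif predicted == 0 and truth == 1:
            cost += cost_fn
    return cost


def best_threshold(y_true, scores, cost_fp, cost_fn, candidates):
    """Return the candidate threshold with the lowest total cost."""
    return min(
        candidates,
        key=lambda th: total_cost(y_true, scores, th, cost_fp, cost_fn),
    )


# Hypothetical validation labels and model scores.
y_true = [0, 0, 0, 0, 1, 0, 1, 1, 0, 1]
scores = [0.05, 0.10, 0.20, 0.35, 0.40, 0.55, 0.60, 0.75, 0.80, 0.95]
candidates = [i / 20 for i in range(1, 20)]  # 0.05, 0.10, ..., 0.95

# Case 1: a missed positive is 100x worse than a false alarm.
fn_heavy = best_threshold(y_true, scores, cost_fp=1, cost_fn=100,
                          candidates=candidates)
# Case 2: a false alarm is 100x worse than a missed positive.
fp_heavy = best_threshold(y_true, scores, cost_fp=100, cost_fn=1,
                          candidates=candidates)

print("threshold when false negatives are expensive:", fn_heavy)
print("threshold when false positives are expensive:", fp_heavy)
```

The same model ends up with a low threshold in the first case (favoring recall) and a high one in the second (favoring precision). The metric you optimize should follow from the cost structure, not the other way around.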

By being acutely aware of these common pitfalls, you can significantly improve the reliability and trustworthiness of your machine learning model evaluations, ensuring that your models deliver genuine value and avoid embarrassing (or costly) surprises in production.


🛡️ Security and Robustness: Adversarial Attacks and Model Verification

In an increasingly complex and interconnected world, it’s no longer enough for machine learning models to simply be accurate. They must also be secure against malicious tampering and robust to unexpected inputs. This is where the concepts of adversarial attacks and model verification come into sharp focus. At ChatBench.org™, we’ve seen the growing importance of these areas, especially as AI systems are deployed in critical infrastructure and sensitive applications.

Imagine a self-driving car’s perception system or a medical diagnosis AI. A small, imperceptible change to an input could lead to catastrophic consequences. This isn’t science fiction; it’s a very real threat.

1. Adversarial Attacks: The Art of Deception 🎭

Adversarial attacks are subtle, carefully crafted perturbations to input data that are designed to fool a machine learning model into making incorrect predictions, while remaining imperceptible to humans. These attacks exploit vulnerabilities in the model’s decision-making process.

How they work (simplified):

Attackers often use optimization techniques to find the smallest possible change to an input (e.g., an image) that causes the model to misclassify it with high confidence.
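To see how little it can take, here is a sketch of one of the simplest gradient-based attacks, the Fast Gradient Sign Method (FGSM), applied to a tiny logistic regression. We chose FGSM because it fits in a few lines; it is a single-step attack rather than a search for the smallest possible change, and the weights, bias, and input below are hypothetical.

```python
import math

# Hypothetical, already-trained logistic regression.
WEIGHTS = [2.0, -3.0, 1.5]
BIAS = 0.1


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(x):
    """Probability of class 1 for input x."""
    return sigmoid(sum(w * v for w, v in zip(WEIGHTS, x)) + BIAS)


def sign(value):
    return (value > 0) - (value < 0)


def fgsm(x, true_label, epsilon):
    """Shift every feature by epsilon in the direction that increases the loss."""
    p = predict_proba(x)
    # For logistic regression, the gradient of the cross-entropy loss
    # with respect to the input is (p - y) * w.
    grad = [(p - true_label) * w for w in WEIGHTS]
    return [v + epsilon * sign(g) for v, g in zip(x, grad)]


x = [0.5, 0.2, 0.1]
y = 1
x_adv = fgsm(x, y, epsilon=0.15)

print("original prediction:   ", round(predict_proba(x), 3))
print("adversarial prediction:", round(predict_proba(x_adv), 3))
print("largest feature change:", max(abs(a - b) for a, b in zip(x, x_adv)))
```

No feature moves by more than 0.15, yet the predicted class flips from 1 to 0. Real attacks on image models follow the same logic, with the gradient obtained by backpropagation through the network.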

Types of Adversarial Attacks:

- Evasion Attacks: Occur during inference, aiming to bypass detection. Example: Adding imperceptible noise to a stop sign image to make a self-driving car classify it as a yield sign.

- Poisoning Attacks: Occur during training, aiming to corrupt the training data to degrade the model’s performance or introduce backdoors. Example: Injecting mislabeled data into a training set.

- Model Inversion Attacks: Aim to reconstruct sensitive training data from a deployed model.

- Membership Inference Attacks: Determine if a specific data point was part of the model’s training set.

Why are they a concern?

- Security Risks: Can be used to bypass security systems (e.g., spam filters, malware detectors, facial recognition).

- Safety Risks: Critical for autonomous systems, medical devices, and other safety-critical applications.

- Trust and Reliability: Undermine public trust in AI systems.

2. Model Robustness: Building Fortresses, Not Sandcastles 🏰

Model robustness refers to a model’s ability to maintain its performance and make correct predictions even when faced with noisy, corrupted, or adversarial inputs. It’s about building models that are resilient to real-world variations and malicious attempts to deceive them.

How to measure and improve robustness:

- Adversarial Training: Augmenting the training data with adversarial examples to make the model more resistant.

- Defensive Distillation: Training a second model on the softened outputs (probabilities) of a first model, which can improve robustness.

- Feature Squeezing: Reducing the input space by “squeezing” features (e.g., reducing color depth in images) to remove adversarial perturbations.

- Input Preprocessing: Applying filters or transformations to inputs to detect or mitigate adversarial noise.

- Certified Robustness: Developing models with mathematical guarantees of robustness within certain perturbation bounds (a more advanced research area).
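Feature squeezing is the easiest of these defenses to picture in code. The sketch below reduces “pixel” values in the range 0 to 1 to a single bit of depth and shows that a small perturbation disappears after squeezing. The four-pixel input, the perturbation, and the linear `toy_score` function are hypothetical, chosen only to keep the arithmetic visible.

```python
def squeeze_bit_depth(pixels, bits):
    """Quantize values in [0, 1] to 2**bits evenly spaced levels."""
    levels = 2 ** bits - 1
    return [round(p * levels) / levels for p in pixels]


def toy_score(pixels):
    # Hypothetical linear scorer standing in for a real model.
    weights = [4.0, -4.0, 4.0, -4.0]
    return sum(w * p for w, p in zip(weights, pixels))


clean = [0.9, 0.1, 0.8, 0.2]
perturbation = [-0.1, 0.1, -0.1, 0.1]  # small, targeted nudge
adversarial = [c + d for c, d in zip(clean, perturbation)]

clean_squeezed = squeeze_bit_depth(clean, bits=1)
adversarial_squeezed = squeeze_bit_depth(adversarial, bits=1)

print("raw scores:     ", toy_score(clean), toy_score(adversarial))
print("squeezed inputs:", clean_squeezed, adversarial_squeezed)
print("squeezed scores:", toy_score(clean_squeezed),
      toy_score(adversarial_squeezed))
```

On the raw inputs the perturbation changes the score; after squeezing, both inputs collapse to the same values, so the model sees no difference. The trade-off is that squeezing also throws away legitimate detail, so it is worth checking clean-data performance before and after.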

3. Model Verification: Ensuring Compliance and Safety ✅