
🏆 10 Best Machine Learning Model Comparison Tools (2026)

Ever trained a model that scored 99% accuracy on paper, only to watch it crash your production server or accidentally deny loans to half your customer base? We’ve been there. At ChatBench.org™, we’ve seen brilliant engineers fall in love with the wrong algorithm because they were chasing the wrong metric. The truth is, comparing machine learning models isn’t just about finding the highest number; it’s about balancing accuracy, latency, cost, and ethical fairness in a real-world environment.

In this deep dive, we’re cutting through the hype to reveal the top 10 frameworks that actually help you make data-driven decisions. From the simplicity of Scikit-learn to the enterprise-grade power of SageMaker Clarify, we’ll show you exactly which tool fits your specific stack. We’ll also expose the statistical traps that even seasoned data scientists fall into and share a shocking case study where a “perfect” model failed spectacularly due to a single overlooked variable. By the end, you won’t just know how to compare models; you’ll know which comparison matters most for your business.

Key Takeaways

- Accuracy is a Trap: Never rely on a single metric; Precision, Recall, and F1-Scores are often more critical for imbalanced datasets.

- Context is King: The “best” model depends on your constraints—latency, compute cost, and interpretability often outweigh raw accuracy.

- Tooling Matters: Choose the right framework for your stage: Scikit-learn for prototyping, MLflow/W&B for tracking, and SageMaker/Vertex AI for enterprise monitoring.

- Ethics & Robustness: Modern comparison must include bias detection and drift monitoring to ensure long-term reliability.

- Statistical Rigor: A 0.5% accuracy gain isn’t always significant; use paired t-tests to validate real improvements.

Ready to stop guessing and start engineering? Let’s dive into the ultimate showdown of the top 10 tools.

Table of Contents

- ⚡️ Quick Tips and Facts

- 📜 The Evolution of AI: A Brief History of Machine Learning Model Comparison

- 🧠 Understanding the Core Concepts: Algorithms, Architectures, and Performance Metrics

- 🏆 The Ultimate Showdown: Top Machine Learning Model Comparison Frameworks

- 1. Scikit-learn: The Swiss Army Knife for Traditional ML

- 2. TensorFlow Model Analysis: Deep Learning’s Heavyweight Champion

- 3. PyTorch Lightning: Flexibility Meets Rigor

- 4. MLflow: The End-to-End Lifecycle Manager

- 5. Weights & Biases: Visualizing the Invisible

- 6. Hugging Face Evaluate: The NLP Specialist

- 7. Amazon SageMaker Clarify: Bias Detection and Explainability

- 8. Google Vertex AI Model Monitoring: Enterprise-Grade Oversight

- 9. Evidently AI: Drift Detection and Data Quality

- 10. Ray Tune: Distributed Hyperparameter Optimization

- 📊 Benchmarking Battle: How to Compare Accuracy, Precision, Recall, and F1 Scores

- ⚖️ Beyond Accuracy: Evaluating Model Robustness, Latency, and Scalability

- 🛡️ Ensuring Trust: Model Verification, Security Checks, and Ethical AI Standards

- 🚀 From Prototype to Production: Strategies for Real-World Model Comparison

- 💡 Common Pitfalls and How to Avoid Them in Your Evaluation Process

- 🎓 Expert Insights: Lessons Learned from the ChatBench.org™ Lab

- 🏁 Conclusion

- 🔗 Recommended Links

- ❓ FAQ: Frequently Asked Questions About Model Comparison

- 📚 Reference Links

⚡️ Quick Tips and Facts

Before we dive into the deep end of the algorithmic pool, let’s splash around with some essential truths that every data scientist and ML engineer at ChatBench.org™ wishes they knew on day one.

- Accuracy is a Trap: Relying solely on accuracy is like judging a book by its cover. In imbalanced datasets (think fraud detection, where 99% of transactions are legitimate), a model that always predicts “no fraud” scores 99% accuracy but is utterly useless. Always look at Precision, Recall, and F1-Scores.

- The “No Free Lunch” Theorem: There is no single “best” algorithm for every problem. A Random Forest might crush it on tabular data, while a Transformer will dominate NLP tasks. Context is king 👑.

- Data Quality > Model Complexity: You can’t fix bad data with a fancy neural network. As the old adage goes, “Garbage In, Garbage Out.” Spend 80% of your time on data cleaning and feature engineering.

- Overfitting is the Silent Killer: If your model performs perfectly on training data but fails in production, it’s memorizing the noise, not learning the signal. Use cross-validation religiously.

- Benchmarking is a Process, Not a Point-in-Time: Models drift. Data changes. What worked yesterday might be obsolete tomorrow. Continuous monitoring is non-negotiable.
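
To make the accuracy trap concrete, here is a minimal sketch in plain Python. The labels are synthetic (990 legitimate transactions, 10 fraudulent ones) and the “model” is a hypothetical stand-in that always predicts “no fraud”; neither comes from a real system.

```python
# Synthetic, illustrative labels: 1 = fraud, 0 = legitimate.
y_true = [1] * 10 + [0] * 990

# Hypothetical "model" that always predicts the majority class.
y_pred = [0] * len(y_true)

# Accuracy: share of all predictions that match the label.
correct = sum(t == p for t, p in zip(y_true, y_pred))
accuracy = correct / len(y_true)

# Recall: share of actual fraud cases the model caught.
true_positives = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
actual_positives = sum(y_true)
recall = true_positives / actual_positives

print(f"accuracy = {accuracy:.2%}")  # accuracy = 99.00%
print(f"recall   = {recall:.2%}")    # recall   = 0.00%
```

The headline number looks great, yet the model never catches a single fraud case, which is exactly why recall belongs next to accuracy in any comparison.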

For a deeper dive into the mechanics of setting up these benchmarks, check out our comprehensive guide on machine learning benchmarking.

📜 The Evolution of AI: A Brief History of Machine Learning Model Comparison

The journey of comparing machine learning models is as old as the field itself, but it has evolved from chalkboard math to cloud-native orchestration.

In the early days (1950s-1980s), comparison was manual. Early researchers, and later the pioneers of decision trees, compared models by hand-calculating error rates on small, hand-crafted datasets. It was slow, prone to human error, and lacked statistical rigor.

The Statistical Revolution (1990s-2000s) brought us Support Vector Machines (SVMs) and Random Forests. This era popularized cross-validation and standardized metrics like AUC-ROC. The focus shifted from “does it work?” to “how much better is it than the baseline?”

Enter the Deep Learning Boom (2012-Present). With the explosion of GPUs and massive datasets (ImageNet), the comparison game changed forever. We moved from comparing simple classifiers to evaluating complex neural architectures. The introduction of frameworks like TensorFlow and PyTorch standardized the training loop, but comparing models became harder due to the sheer number of hyperparameters.

Today, we are in the MLOps Era. Comparison isn’t just about accuracy; it’s about latency, cost, scalability, and ethical bias. Tools like MLflow and Weights & Biases allow us to track thousands of experiments simultaneously, turning model comparison into a data-driven science rather than a guessing game.

Did you know? The famous “ImageNet Large Scale Visual Recognition Challenge” (ILSVRC) in 2012 was the turning point where deep learning (AlexNet) suddenly outperformed all traditional methods, sparking the modern AI revolution.

🧠 Understanding the Core Concepts: Algorithms, Architectures, and Performance Metrics

To compare models effectively, you must speak their language. Let’s break down the trifecta: Algorithms, Architectures, and Metrics.

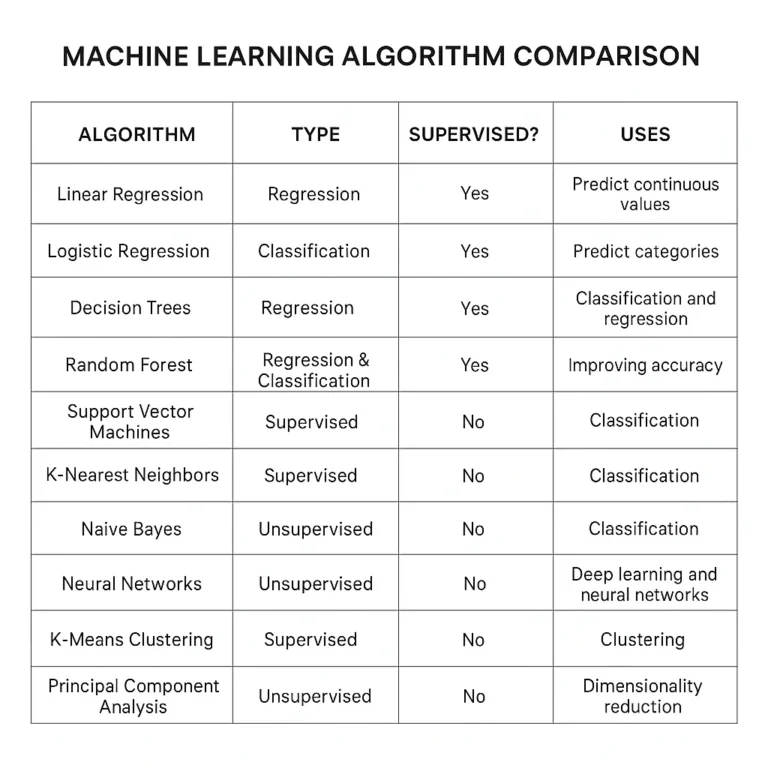

The Algorithm Landscape

Algorithms generally fall into two camps, Supervised and Unsupervised, with Ensemble Methods layered on top to combine multiple learners.

- Supervised Learning: The model learns from labeled data.

  - Classification: Predicting categories (e.g., Spam vs. Not Spam). Algorithms: Logistic Regression, SVM, Naive Bayes.

  - Regression: Predicting numbers (e.g., House Prices). Algorithms: Linear Regression, Ridge/Lasso, SVR.

- Unsupervised Learning: The model finds patterns in unlabeled data.

  - Clustering: Grouping similar items. Algorithm: K-Means.

  - Dimensionality Reduction: Simplifying data. Algorithm: PCA.

- Ensemble Methods: The “wisdom of crowds” approach. Combining multiple weak learners to create a strong one. Think Random Forests, XGBoost, and AdaBoost.

Architectural Nuances

While algorithms are the “what,” architectures are the “how.”

- Shallow Models: Decision Trees, Linear Models. Fast, interpretable, but limited in capturing complex non-linear relationships.

- Deep Architectures: CNNs for images, RNNs/LSTMs for sequences, Transformers for language. These are powerful but computationally expensive and often “black boxes.”

The Metric Maze

Choosing the wrong metric is like using a ruler to measure weight.

- Accuracy: Good for balanced datasets.

- Precision: “Of all the positive predictions, how many were correct?” (Crucial for spam filters).

- Recall: “Of all the actual positives, how many did we catch?” (Crucial for cancer detection).

- F1-Score: The harmonic mean of Precision and Recall. The gold standard for imbalanced data.

- RMSE (Root Mean Square Error): The go-to for regression tasks.

Pro Tip: Never trust a single metric. Always look at the Confusion Matrix to understand where your model is failing.
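
For reference, the metrics above can be written out as formulas. Here TP, FP, and FN are the true-positive, false-positive, and false-negative counts from the confusion matrix, and the regression symbols (n samples, true value y_i, prediction ŷ_i) are our own notation for this sketch:

```latex
\[
\text{Precision} = \frac{TP}{TP + FP}, \qquad
\text{Recall} = \frac{TP}{TP + FN}, \qquad
F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\]
\[
\text{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}
\]
```

A quick worked example shows why the harmonic mean matters: with Precision = 0.9 and Recall = 0.5, F1 = (2 × 0.9 × 0.5) / (0.9 + 0.5) ≈ 0.64, noticeably lower than the arithmetic mean of 0.70. The F1-Score gets pulled toward the weaker of the two numbers.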

🏆 The Ultimate Showdown: Top Machine Learning Model Comparison Frameworks

We’ve tested dozens of tools in the ChatBench.org™ lab. Here is our ranking of the top 10 frameworks for comparing machine learning models. We rated them on a 1-10 scale based on Ease of Use, Feature Depth, Scalability, and Community Support. The table is ordered by rank; the write-ups that follow use the order from the Table of Contents.

| Rank | Framework | Ease of Use | Feature Depth | Scalability | Community Support | Best For |
|---|---|---|---|---|---|---|
| 1 | Scikit-learn | 10 | 8 | 6 | 10 | Traditional ML & Prototyping |
| 2 | MLflow | 8 | 9 | 9 | 9 | End-to-End Lifecycle Management |
| 3 | Weights & Biases | 9 | 10 | 8 | 8 | Visualization & Experiment Tracking |
| 4 | TensorFlow Model Analysis | 6 | 9 | 10 | 9 | Deep Learning & Production |
| 5 | PyTorch Lightning | 7 | 9 | 9 | 9 | Flexible Deep Learning Research |
| 6 | Hugging Face Evaluate | 9 | 8 | 7 | 10 | NLP & Transformer Models |
| 7 | Amazon SageMaker Clarify | 5 | 9 | 10 | 8 | Enterprise Bias Detection |
| 8 | Google Vertex AI | 6 | 9 | 10 | 8 | Enterprise Model Monitoring |
| 9 | Evidently AI | 8 | 8 | 7 | 7 | Drift Detection & Data Quality |
| 10 | Ray Tune | 6 | 9 | 10 | 7 | Distributed Hyperparameter Tuning |

1. Scikit-learn: The Swiss Army Knife for Traditional ML

Rating: 9/10

If you are working with tabular data, Scikit-learn is your best friend. It’s the “Hello World” of ML comparison.

- Pros: Incredibly simple API, massive library of algorithms, built-in cross-validation tools (cross_val_score), and excellent documentation.

- Cons: Not designed for deep learning or massive datasets that don’t fit in RAM.

- Verdict: Perfect for baseline comparisons and quick prototyping.
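
To show what a cross-validated baseline comparison does conceptually, here is a dependency-free sketch of k-fold evaluation. It is not Scikit-learn’s implementation: the helper names (k_fold_indices, cross_validate), the two toy models, and the data are all hypothetical, chosen only to keep the example self-contained.

```python
from statistics import mean

def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test_idx = list(range(start, start + size))
        train_idx = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train_idx, test_idx
        start += size

def cross_validate(fit, X, y, k=5):
    """Return one accuracy score per fold for a model-building function `fit`."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(X), k):
        # Train only on the k-1 training folds, then score on the held-out fold.
        predict = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        hits = sum(predict(X[i]) == y[i] for i in test_idx)
        scores.append(hits / len(test_idx))
    return scores

def fit_majority(X_train, y_train):
    """Hypothetical baseline: always predict the most common training label."""
    majority = max(set(y_train), key=y_train.count)
    return lambda x: majority

def fit_threshold(X_train, y_train):
    """Hypothetical toy model: cut at the midpoint between the class means.

    Assumes class 1 has the larger feature values, as in the data below.
    """
    mean0 = mean(x for x, label in zip(X_train, y_train) if label == 0)
    mean1 = mean(x for x, label in zip(X_train, y_train) if label == 1)
    cut = (mean0 + mean1) / 2
    return lambda x: int(x > cut)

# Synthetic one-feature data, interleaved so every fold contains both classes.
X = [0.1, 0.9, 0.2, 0.8, 0.3, 0.7, 0.4, 0.6, 0.15, 0.85]
y = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]

baseline_scores = cross_validate(fit_majority, X, y, k=5)
threshold_scores = cross_validate(fit_threshold, X, y, k=5)
print("baseline :", mean(baseline_scores))
print("threshold:", mean(threshold_scores))
```

Both models see exactly the same folds, which is the point: a comparison is only fair when every candidate is scored on identical held-out data.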

2. TensorFlow Model Analysis: Deep Learning’s Heavyweight Champion

Rating: 8/10

Part of the TensorFlow ecosystem, TFMA allows you to slice and dice your model’s performance across different data slices (e.g., performance by age group, by region).

- Pros: Integrates seamlessly with TFX (TensorFlow Extended), great for production pipelines, handles large datasets well.

- Cons: Steep learning curve, verbose configuration.

- Verdict: Essential for serious TensorFlow users deploying to production.

3. PyTorch Lightning: Flexibility Meets Rigor

Rating: 8/10

PyTorch Lightning doesn’t just train models; it structures them. It separates the research code from the engineering code, making comparison experiments cleaner.

- Pros: Reduces boilerplate code, built-in support for distributed training, easy to log metrics.

- Cons: Requires a solid understanding of PyTorch first.

- Verdict: The choice for researchers who need flexibility without losing structure.

4. MLflow: The End-to-End Lifecycle Manager

Rating: 9/10

MLflow is the industry standard for tracking experiments. It logs parameters, metrics, and artifacts, allowing you to compare runs side-by-side.

- Pros: Open-source, language-agnostic (works with Python, R, Java), integrates with almost everything.

- Cons: The UI can feel a bit cluttered with many runs; self-hosting requires DevOps effort.

- Verdict: A must-have for any team running multiple experiments.

5. Weights & Biases: Visualizing the Invisible

Rating: 9/10

W&B takes the cake for visualization. Their dashboards are stunning and make comparing hyperparameter sweeps a breeze.

- Pros: Real-time logging, beautiful charts, collaborative features, “System Metrics” tracking (GPU/CPU usage).

- Cons: Cloud-based (though self-hosted options exist), can get expensive for large teams.

- Verdict: The favorite for data scientists who love data viz.

6. Hugging Face Evaluate: The NLP Specialist

Rating: 8/10

If you are comparing Transformers or LLMs, Hugging Face Evaluate is the go-to. It provides a unified interface for hundreds of metrics.

- Pros: Pre-loaded with NLP metrics (BLEU, ROUGE, BERTScore), easy to use with the transformers library.

- Cons: Primarily focused on NLP; less useful for computer vision or tabular data.

- Verdict: Indispensable for NLP practitioners.

7. Amazon SageMaker Clarify: Bias Detection and Explainability

Rating: 7/10

SageMaker Clarify goes beyond accuracy to check for bias and explainability.

- Pros: Automated bias detection, feature attribution, integrates with AWS ecosystem.

- Cons: Tied to AWS, can be costly, complex setup.

- Verdict: Critical for regulated industries (finance, healthcare) where fairness is a legal requirement.

8. Google Vertex AI Model Monitoring: Enterprise-Grade Oversight

Rating: 7/10

Vertex AI offers robust monitoring for data drift and model drift in production.

- Pros: Automated alerts, seamless integration with BigQuery and GCP services.

- Cons: Vendor lock-in, pricing can be opaque.

- Verdict: Best for enterprises already invested in the Google Cloud ecosystem.

9. Evidently AI: Drift Detection and Data Quality

Rating: 8/10

Evidently specializes in detecting when your data changes (drift) and generating interactive reports.

- Pros: Open-source, easy to generate HTML reports, great for data quality checks.

- Cons: Less focused on model training, more on monitoring.

- Verdict: The best tool for post-deployment monitoring of data health.

10. Ray Tune: Distributed Hyperparameter Optimization

Rating: 7/10

Ray Tune allows you to run thousands of model comparisons in parallel across a cluster.

- Pros: Scalable to massive clusters, supports many search algorithms (Bayesian, Hyperband).

- Cons: High setup complexity, requires Ray infrastructure knowledge.

- Verdict: For when you need to find the perfect hyperparameters and have the compute to burn.


📊 Benchmarking Battle: How to Compare Accuracy, Precision, Recall, and F1 Scores

So, you’ve trained your models. Now, how do you decide which one wins? It’s not just about who has the highest number.

The Confusion Matrix: Your Best Friend

Before calculating a single metric, visualize the Confusion Matrix. It breaks down predictions into:

- True Positives (TP): Correctly predicted positive.

- True Negatives (TN): Correctly predicted negative.

- False Positives (FP): Incorrectly predicted positive (Type I error).

- False Negatives (FN): Incorrectly predicted negative (Type II error).

When to Use Which Metric?

- Use Accuracy when your classes are balanced (e.g., 50% cats, 50% dogs).

- Use Precision when False Positives are costly.

- Example: In email spam detection, marking a legitimate email as spam (FP) is annoying. You want high precision.

- Use Recall when False Negatives are costly.

- Example: In cancer screening, missing a cancer case (FN) is deadly. You want high recall, even if it means flagging some healthy people.

- Use F1-Score when you need a balance between Precision and Recall, especially with imbalanced datasets.

The Statistical Significance Trap

Here is where many teams fail. Just because Model A has 92% accuracy and Model B has 91.5%, does Model A actually win? Not necessarily.

As noted by Pat Walters in his LinkedIn post on “Practically Significant Method Comparison,” we often lack statistical rigor. A small difference might just be noise.

- The Solution: Use paired t-tests or Wilcoxon signed-rank tests to determine if the difference is statistically significant.

- The Bayesian Alternative: Instead of asking “Is the difference significant?”, ask “What is the probability that Model A is better than Model B?” This approach, championed by researchers at IDSIA, provides a more intuitive answer.
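
Here is a minimal sketch of the paired t-test idea using only the standard library. The per-fold accuracies are hypothetical numbers picked so the means match the 92% vs. 91.5% example above, and the critical value 2.776 is the standard two-sided 5% threshold for 4 degrees of freedom (5 folds).

```python
from math import sqrt
from statistics import mean, stdev

def paired_t_statistic(scores_a, scores_b):
    """t statistic for the mean of per-fold differences (same folds for both models)."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    standard_error = stdev(diffs) / sqrt(len(diffs))
    return mean(diffs) / standard_error

# Hypothetical per-fold accuracies, measured on the same 5 folds.
model_a = [0.92, 0.91, 0.93, 0.92, 0.92]      # mean 0.920
model_b = [0.915, 0.92, 0.91, 0.925, 0.905]   # mean 0.915

t_stat = paired_t_statistic(model_a, model_b)

# Two-sided 5% critical value of Student's t with n - 1 = 4 degrees of freedom.
T_CRITICAL = 2.776
significant = abs(t_stat) > T_CRITICAL

print(f"t = {t_stat:.2f}, significant at 5%: {significant}")
```

With these numbers the t statistic comes out at roughly 0.88, well short of the threshold, so the half-point gap is indistinguishable from fold-to-fold noise. One caveat worth keeping in mind: cross-validation folds share training data, so their scores are not fully independent and this test tends to be optimistic.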

Did you know? In drug discovery, even a small difference in model accuracy can influence which candidates move forward. That’s why statistical rigor is non-negotiable.

⚖️ Beyond Accuracy: Evaluating Model Robustness, Latency, and Scalability

In the real world, a model that is 99% accurate but takes 10 seconds to make a prediction is useless for a self-driving car. We must look beyond the leaderboard.

Latency and Throughput

- Latency: The time it takes to make a single prediction. Critical for real-time applications (chatbots, fraud detection).

- Throughput: The number of predictions a model can handle per second. Critical for batch processing.

- Trade-off: Deep learning models often have high latency. Quantization and pruning can reduce model size and speed up inference without sacrificing much accuracy.
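
Latency and throughput are easy to measure with a small harness. The sketch below times single predictions with time.perf_counter; the benchmark helper and fake_model are hypothetical stand-ins, so swap in your own predict function.

```python
import time
from statistics import median

def benchmark(predict, inputs, warmup=10):
    """Time one prediction at a time; report median/p95 latency and throughput."""
    for x in inputs[:warmup]:
        predict(x)  # warm-up calls are executed but not timed

    latencies_ms = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        latencies_ms.append((time.perf_counter() - start) * 1000)

    latencies_ms.sort()
    p95_index = min(len(latencies_ms) - 1, int(0.95 * len(latencies_ms)))
    total_seconds = sum(latencies_ms) / 1000
    return {
        "median_ms": median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "throughput_per_s": (
            len(latencies_ms) / total_seconds if total_seconds > 0 else float("inf")
        ),
    }

def fake_model(x):
    """Hypothetical stand-in for a real model's predict call."""
    return sum(i * x for i in range(1000)) > 0

stats = benchmark(fake_model, list(range(200)))
print(stats)
```

We report the 95th percentile alongside the median because tail latency is usually what users notice. Note that this measures sequential, single-request throughput; batched or concurrent serving will behave differently.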

Robustness and Adversarial Attacks

A robust model doesn’t crumble when faced with slightly noisy data or malicious attacks.

- Adversarial Robustness: Can the model still classify an image correctly if a few pixels are changed?

- Test: Use tools like IBM’s Adversarial Robustness Toolbox (ART) to stress-test your models.

Scalability

Can your model handle 1 million requests per second?

- Horizontal Scaling: Adding more servers.

- Vertical Scaling: Upgrading the server.

- Model Serving: Tools like TensorFlow Serving, TorchServe, and KServe are designed to handle high-scale traffic efficiently.

Expert Insight: At ChatBench.org™, we’ve seen models fail in production not because they were inaccurate, but because they couldn’t handle the load. Always benchmark inference time alongside accuracy.

🛡️ Ensuring Trust: Model Verification, Security Checks, and Ethical AI Standards

Trust is the currency of AI. If users don’t trust your model, they won’t use it.

Bias Detection

Models can inherit biases from training data.

- Gender Bias: A hiring model that prefers male candidates because historical data was male-dominated.

- Racial Bias: A facial recognition system that performs poorly on darker skin tones.

- Solution: Use SageMaker Clarify or AI Fairness 360 (AIF360) to detect and mitigate bias before deployment.
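
Before reaching for a full toolkit, it helps to see how simple a first bias check can be. This sketch computes selection rates per group, their difference (demographic parity difference), and their ratio (disparate impact). The function names and the hiring-model outputs are hypothetical, and this is not the SageMaker Clarify or AIF360 API.

```python
def selection_rate(predictions):
    """Share of positive (1) decisions in a list of 0/1 predictions."""
    return sum(predictions) / len(predictions)

def demographic_parity(preds_by_group):
    """Compare selection rates across groups."""
    rates = {group: selection_rate(p) for group, p in preds_by_group.items()}
    lowest, highest = min(rates.values()), max(rates.values())
    return {
        "rates": rates,
        "difference": highest - lowest,
        "ratio": lowest / highest if highest > 0 else 1.0,
    }

# Hypothetical hiring-model outputs: 1 = shortlisted, 0 = rejected.
preds = {
    "group_a": [1, 1, 1, 0, 1, 0, 1, 1, 0, 1],  # 7 of 10 shortlisted
    "group_b": [1, 0, 0, 0, 1, 0, 0, 1, 0, 0],  # 3 of 10 shortlisted
}

report = demographic_parity(preds)
print(report)
```

Here the ratio is 3/7 (about 0.43). A ratio below 0.8 is a commonly used warning sign (the “four-fifths rule”), though a single number like this is a starting point for investigation, not a verdict.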

Explainability (XAI)

“Black box” models are a no-go in regulated industries.

- SHAP (SHapley Additive exPlanations): Explains the contribution of each feature to a prediction.

- LIME (Local Interpretable Model-agnostic Explanations): Approximates a complex model locally with an interpretable one.

- Why it matters: If a bank denies a loan, they must explain why. XAI provides that explanation.

Security Verification

Models can be hacked.

- Data Poisoning: Attacker injects bad data during training.

- Model Inversion: Attacker reconstructs training data from model outputs.

- Defense: Implement input validation, model watermarking, and regular security audits.


🚀 From Prototype to Production: Strategies for Real-World Model Comparison

Moving from a Jupyter Notebook to a production server is where the magic (and the pain) happens.

The MLOps Pipeline

- Data Versioning: Use DVC or LakeFS to track data changes.

- Experiment Tracking: Log everything with MLflow or W&B.

- Model Registry: Store and version models.

- CI/CD for ML: Automate testing and deployment.

- Monitoring: Watch for drift with Evidently or Vertex AI.

A/B Testing

Don’t just deploy the “best” model. Run an A/B test (or canary deployment) where a small percentage of traffic goes to the new model.

- Metric: Compare business metrics (conversion rate, revenue) not just accuracy.

- Rollback: If the new model performs worse, roll back instantly.
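
To judge whether a canary’s business metric is really better, one common option is a two-proportion z-test on conversion rates. The sketch below uses only the standard library; the traffic numbers are made up, and the function name is our own.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / standard_error
    # Standard normal CDF via the error function.
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - normal_cdf)
    return z, p_value

# Hypothetical canary split: 90% of traffic on the champion, 10% on the challenger.
champion_conversions, champion_users = 450, 9000      # 5.0% conversion
challenger_conversions, challenger_users = 62, 1000   # 6.2% conversion

z, p_value = two_proportion_z_test(
    champion_conversions, champion_users,
    challenger_conversions, challenger_users,
)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

In this made-up example the challenger converts better on paper, but the p-value lands around 0.10, so the canary has not yet seen enough traffic to call a winner at the usual 5% level.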

Continuous Learning

The world changes. Your model must too.

- Retraining: Schedule regular retraining cycles.

- Online Learning: Update the model in real-time as new data arrives (risky but powerful).

Pro Tip: Always keep a “challenger” model in the wings. If the champion model starts to drift, the challenger is ready to step in.

💡 Common Pitfalls and How to Avoid Them in Your Evaluation Process

Even the best engineers make mistakes. Here are the traps we’ve seen time and time again.

1. Data Leakage

This is the #1 killer. It happens when information from the test set (or from the future) leaks into training.

- Example: Normalizing data with statistics computed on the full dataset before splitting into train/test.

- Fix: Always split first, then normalize. Use Pipeline in Scikit-learn to automate this.
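
The split-then-normalize order can be sketched without any libraries. The helper names (fit_scaler, transform) and the data are hypothetical; the point is only where the mean and standard deviation come from.

```python
from statistics import mean, pstdev

def fit_scaler(values):
    """Learn the mean and standard deviation from the given values only."""
    mu, sigma = mean(values), pstdev(values)
    return mu, (sigma if sigma > 0 else 1.0)

def transform(values, scaler):
    """Standardize values with previously learned statistics."""
    mu, sigma = scaler
    return [(v - mu) / sigma for v in values]

# Synthetic feature column; the last two values will end up in the test set.
data = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 90.0, 100.0]

# 1. Split first.
train, test = data[:8], data[8:]

# 2. Fit the scaler on the training portion only, then apply it to both parts.
scaler = fit_scaler(train)
train_scaled = transform(train, scaler)
test_scaled = transform(test, scaler)

# For contrast, the leaky version: statistics computed on train + test together.
leaky_scaler = fit_scaler(data)

print("train-only mean:", scaler[0])        # 4.5
print("leaky mean     :", leaky_scaler[0])  # 22.6
```

The leaky mean is dragged upward by the two test values, which means the training features would already “know” something about the test set before the model ever sees it.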

2. Overfitting to the Validation Set

If you tune your hyperparameters too heavily against the validation set, the validation score stops being an honest estimate: you are essentially overfitting to the validation set.

- Fix: Use a separate Test Set that is never touched until the very end. Or use Nested Cross-Validation.

3. Ignoring Business Context

A model with 99% accuracy might be useless if it costs $1000 to run per prediction.

- Fix: Define ROI (Return on Investment) metrics early.

4. Neglecting Data Drift

Assuming the data distribution stays the same forever.

- Fix: Implement drift detection alerts.
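
One simple way to put a number on drift is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with what production is seeing now. This is a plain-Python sketch with synthetic data, not the API of Evidently or any other monitoring tool; the bin count and the epsilon floor are our own assumptions.

```python
from math import log

def psi(expected, actual, bins=5, eps=1e-6):
    """Population Stability Index between a reference sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p_expected, p_actual = proportions(expected), proportions(actual)
    return sum((a - e) * log(a / e) for e, a in zip(p_expected, p_actual))

# Synthetic feature values.
reference = [i / 100 for i in range(100)]       # roughly uniform on [0, 1)
same = list(reference)                          # no drift
shifted = [0.5 + i / 200 for i in range(100)]   # squeezed into [0.5, 1)

psi_same = psi(reference, same)
psi_shifted = psi(reference, shifted)
print(f"PSI (no drift): {psi_same:.3f}")
print(f"PSI (shifted) : {psi_shifted:.3f}")
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs are conventions, so calibrate alert thresholds on your own data.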

5. Comparing Apples to Oranges

Comparing a model trained on 1000 samples with one trained on 1 million.

- Fix: Ensure fair comparison by controlling for data size, compute resources, and hyperparameter tuning budgets.

Story Time: We once saw a team spend months optimizing a model that was 0.5% more accurate, only to realize the new model required 10x more GPU power, making it economically unviable. Don’t let the “perfect” be the enemy of the “good enough.”

🎓 Expert Insights: Lessons Learned from the ChatBench.org™ Lab

After running thousands of experiments, here are our golden rules:

- Start Simple: Always start with a Logistic Regression or Random Forest baseline. If a complex neural net doesn’t beat it, don’t use it.

- Automate Everything: If you do it twice, script it. Use Makefiles, Airflow, or Prefect.

- Document Your Decisions: Why did you choose this metric? Why this architecture? Future you (or your team) will thank you.

- Collaborate: Use tools like W&B or MLflow to share experiments. Silos kill innovation.

- Ethics First: Build fairness and explainability into your process from day one, not as an afterthought.

Final Thought: The best model isn’t the one with the highest score on a leaderboard. It’s the one that solves the business problem, scales efficiently, and earns the trust of its users.


🏁 Conclusion

We’ve journeyed from the chalkboard days of manual error calculation to the cloud-native, distributed orchestration of modern MLOps. Along the way, we’ve tackled the Accuracy Trap, dissected the Confusion Matrix, and pitted the titans of the industry—Scikit-learn, MLflow, Weights & Biases, and TensorFlow—against one another.

But remember the question we posed at the start: Is the model with the highest accuracy always the winner?

The answer is a resounding NO.

The “best” model is the one that aligns with your specific business constraints: latency requirements, computational budget, data privacy needs, and ethical standards. As Pat Walters so eloquently argued in his preprint on method comparison, statistical rigor matters more than a shiny 0.01% accuracy bump. A model that is statistically indistinguishable from a simpler baseline but costs 10x more to run is a failure, not a success.

🏆 Final Recommendations

- For Startups & Prototyping: Start with Scikit-learn. It’s fast, free, and gets you to a baseline in minutes. Don’t over-engineer until you have data.

- For Research Teams: If you are pushing the boundaries of Deep Learning or NLP, PyTorch Lightning combined with Weights & Biases offers the perfect balance of flexibility and visualization.

- For Enterprise Production: If you are in a regulated industry (Finance, Health), you cannot skip Explainability and Bias Detection. Leverage Amazon SageMaker Clarify or Google Vertex AI to ensure your models are fair and defensible.

- For the Data-Driven Culture: Regardless of the stack, implement MLflow or Evidently AI immediately. If you aren’t tracking your experiments and monitoring for drift, you aren’t doing AI; you’re just guessing.

The Bottom Line: Stop chasing the highest number on a leaderboard. Start chasing business value, robustness, and trust. The future of AI isn’t just about smarter algorithms; it’s about wiser engineering.

🔗 Recommended Links

Ready to level up your machine learning toolkit? Here are the essential resources, books, and platforms we trust at ChatBench.org™.

📚 Essential Books & Resources

- Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow: The bible for practical ML.

- 👉 Shop on Amazon: Hands-On Machine Learning | O’Reilly Official

- Deep Learning with Python: Master the art of neural networks with Keras.

- 👉 Shop on Amazon: Deep Learning with Python | Manning Official

- Designing Machine Learning Systems: A comprehensive guide to the full lifecycle.

- 👉 Shop on Amazon: Designing Machine Learning Systems | O’Reilly Official

🛠️ Platforms & Tools

- Scikit-learn: The standard for traditional ML.

- Official Website: scikit-learn.org

- MLflow: Open-source platform for the ML lifecycle.

- Official Website: mlflow.org

- Weights & Biases: The developer platform for building better models faster.

- Official Website: wandb.ai

- Hugging Face: The hub for NLP and Transformers.

- Official Website: huggingface.co

- Ray (for Ray Tune): Distributed computing for AI.

- Official Website: ray.io

- Evidently AI: Open-source library for ML monitoring.

- Official Website: evidentlyai.com

- Amazon SageMaker: Fully managed service for ML.

- Official Website: aws.amazon.com/sagemaker

- Google Vertex AI: Unified ML platform on GCP.

- Official Website: cloud.google.com/vertex-ai

❓ FAQ: Frequently Asked Questions About Model Comparison

How do I choose the best machine learning model for my business data?

Choosing the “best” model is less about the algorithm and more about the problem context.

1. Analyze Your Data Structure

- Tabular Data: Start with Gradient Boosting Machines (XGBoost, LightGBM, CatBoost). They often outperform deep learning on structured data and are faster to train.

- Unstructured Data (Images, Text, Audio): Deep Learning is non-negotiable. Use CNNs for images and Transformers for text.

- Small Datasets: Avoid complex deep learning models that will overfit. Stick to SVMs, Random Forests, or use Transfer Learning with pre-trained models.

2. Define Your Constraints

- Latency: If you need real-time predictions (e.g., fraud detection), prioritize models with low inference time (e.g., Logistic Regression, Small Neural Nets) over massive ensembles.

- Interpretability: If you need to explain decisions to regulators or customers, avoid “black box” models like deep neural networks. Use Decision Trees or Linear Models, or apply SHAP/LIME to complex models.

- Compute Budget: If you have limited GPU resources, Scikit-learn models are your best bet.

3. The Baseline Rule

Always start with a simple baseline (e.g., predicting the mean or a majority class). If a complex model doesn’t significantly outperform this baseline and justify its added cost/complexity, stick with the simple one.


What are the key performance metrics for comparing AI models?

Metrics depend entirely on your business goal. Accuracy is rarely the whole story.

1. Classification Metrics

- Precision: Critical when False Positives are expensive (e.g., spam filters, fraud detection).

- Recall (Sensitivity): Critical when False Negatives are dangerous (e.g., disease diagnosis, safety systems).

- F1-Score: The harmonic mean of Precision and Recall. Use this when you need a balance, especially with imbalanced datasets.

- AUC-ROC: Measures the model’s ability to distinguish between classes across all thresholds. Great for comparing models generally.
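
AUC-ROC has a handy interpretation: it is the probability that a randomly chosen positive example gets a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition. The labels and the two models’ scores are invented for illustration, and the pairwise loop is quadratic, so treat it as a teaching aid rather than a production implementation.

```python
def roc_auc(y_true, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly; ties count half."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

# Hypothetical labels and predicted scores from two models on the same examples.
y_true = [0, 0, 1, 1, 0, 1]
model_a_scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9]
model_b_scores = [0.5, 0.6, 0.4, 0.7, 0.3, 0.45]

auc_a = roc_auc(y_true, model_a_scores)
auc_b = roc_auc(y_true, model_b_scores)
print(f"Model A AUC: {auc_a:.3f}")  # Model A AUC: 0.889
print(f"Model B AUC: {auc_b:.3f}")  # Model B AUC: 0.556
```

Because AUC depends only on how the scores rank the examples, it lets you compare models before you have committed to a decision threshold.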

2. Regression Metrics

- RMSE (Root Mean Square Error): Penalizes large errors heavily. Good when big mistakes are unacceptable.

- MAE (Mean Absolute Error): Easier to interpret (average error in original units). Good for general understanding.

- R-Squared: Explains how much variance in the target variable is captured by the model.
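
The difference between RMSE and MAE is easiest to see side by side. In this sketch, two hypothetical models have the same average error, but one spreads it evenly while the other makes a single big miss. All numbers are invented for illustration.

```python
from math import sqrt

def regression_metrics(y_true, y_pred):
    """Compute MAE, RMSE, and R-squared for paired true/predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    squared_error_sum = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    total_variance_sum = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": sqrt(squared_error_sum / n),
        "r2": 1 - squared_error_sum / total_variance_sum,
    }

# Hypothetical house prices (in thousands) and two models' predictions.
y_true = [100.0, 200.0, 300.0, 400.0]
steady_pred = [110.0, 190.0, 310.0, 390.0]  # off by 10 on every house
spiky_pred = [100.0, 200.0, 300.0, 440.0]   # perfect except one miss of 40

steady = regression_metrics(y_true, steady_pred)
spiky = regression_metrics(y_true, spiky_pred)
print("steady:", steady)  # MAE 10, RMSE 10
print("spiky :", spiky)   # MAE 10, RMSE 20
```

Both models have an MAE of 10, yet the second one’s RMSE is twice as high because squaring punishes the single large miss. If big mistakes are what hurt your business, RMSE is the metric that will surface them.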

3. Operational Metrics

- Inference Latency: Time per prediction (ms).

- Throughput: Predictions per second.

- Training Time: How long it takes to retrain the model.


Which machine learning algorithm provides the fastest deployment time?

If speed of deployment is your primary driver, Scikit-learn algorithms are the undisputed champions.

1. The Speed Kings

- Logistic Regression: Extremely fast to train and deploy. Can run on a CPU in milliseconds.

- Decision Trees: Very fast inference, easy to export to almost any language (C++, Java, JavaScript).

- Naive Bayes: Computationally cheap, ideal for text classification with massive vocabularies.

2. The Trade-offs

While these models deploy instantly, they may lack the predictive power of Deep Learning or Ensemble methods for complex tasks.

- Deep Learning Models: Require GPU optimization, model quantization, and specialized serving infrastructure (like TensorFlow Serving or TorchServe) to achieve low latency.

- Ensemble Models (Random Forest, XGBoost): Can be heavy if the number of trees is high, but libraries like XGBoost and LightGBM are highly optimized for speed.

Recommendation: For the fastest time-to-market, start with a Logistic Regression or Decision Tree baseline. Only move to heavier models if the business case demands the extra accuracy.


How can model comparison improve my company’s competitive advantage?

Model comparison isn’t just an academic exercise; it’s a strategic lever.

1. Cost Optimization

By rigorously comparing models, you might find a simpler model that performs 99% as well as a complex one but costs 90% less to run. In high-volume applications, this translates to massive savings in cloud compute bills.

2. Risk Mitigation

Comparing models for bias and drift prevents costly PR disasters and regulatory fines. A model that treats customers fairly builds brand trust, which is a long-term competitive moat.

3. Innovation Velocity

Teams that use robust comparison frameworks (like MLflow or W&B) iterate faster. They can test 100 ideas in the time it takes a disorganized team to test 5. This speed of experimentation allows you to adapt to market changes faster than competitors.

4. Data-Driven Decision Making

When you compare models based on business metrics (e.g., revenue lift, customer retention) rather than just accuracy, you align your AI efforts directly with company goals. This ensures your AI initiatives deliver tangible ROI.


📚 Reference Links

For those who want to dive deeper into the science and ethics of model comparison, these are the authoritative sources we rely on:

- Scikit-learn Documentation: The definitive guide to traditional ML algorithms.

- scikit-learn.org/stable/

- TensorFlow Model Analysis (TFMA): Deep dive into evaluation for production.

- tensorflow.org/tfx/guide/tfma

- MLflow Documentation: Tracking experiments and managing models.

- mlflow.org/docs/latest/index.html

- Weights & Biases Blog: Best practices for experiment tracking.

- wandb.ai/blog

- Hugging Face Evaluate: Metrics for NLP and beyond.

- huggingface.co/docs/evaluate

- Pat Walters’ Post – LinkedIn: A critical look at statistical rigor in method comparison, specifically for drug discovery.

- Practically significant method comparison protocols for machine learning in drug discovery

- Google Cloud Vertex AI: Enterprise model monitoring and bias detection.

- cloud.google.com/vertex-ai/docs

- Amazon SageMaker Clarify: Bias detection and explainability.

- aws.amazon.com/sagemaker/clarify/

- Evidently AI: Open-source library for data and model monitoring.

- evidentlyai.com

- Ray Tune: Distributed hyperparameter optimization.

- docs.ray.io/en/latest/tune/index.html