
📈 Measuring ROI in Machine Learning Initiatives: The 8-Step 2026 Guide

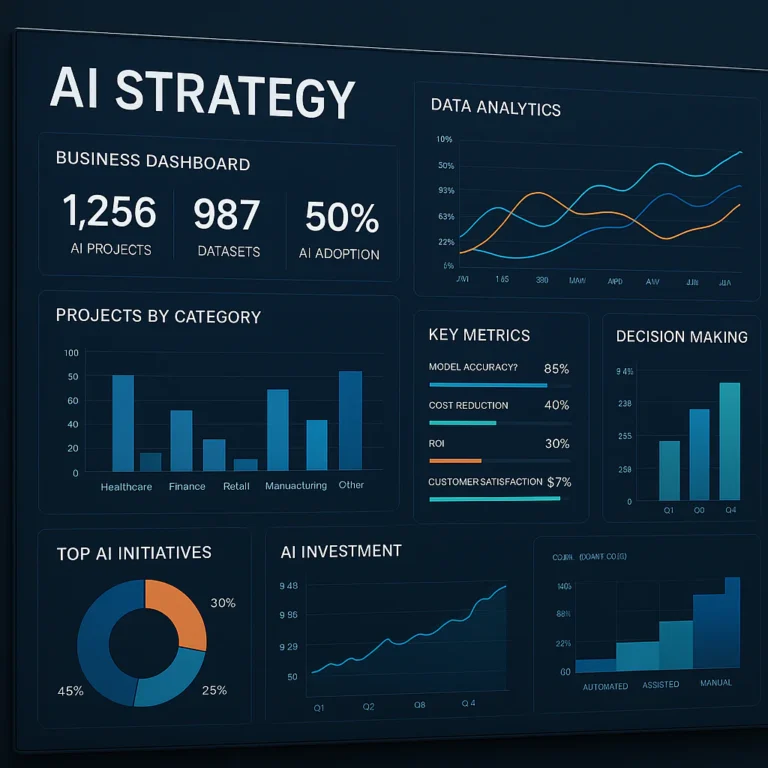

Why do 85% of AI projects never make it to production? It’s not because the technology failed; it’s because the ROI calculation was broken from day one. At ChatBench.org™, we’ve seen brilliant models gather digital dust while their creators obsess over F1 scores instead of the bottom line. The truth is, a model with 99% accuracy that solves a problem nobody cares about is a financial liability, not an asset.

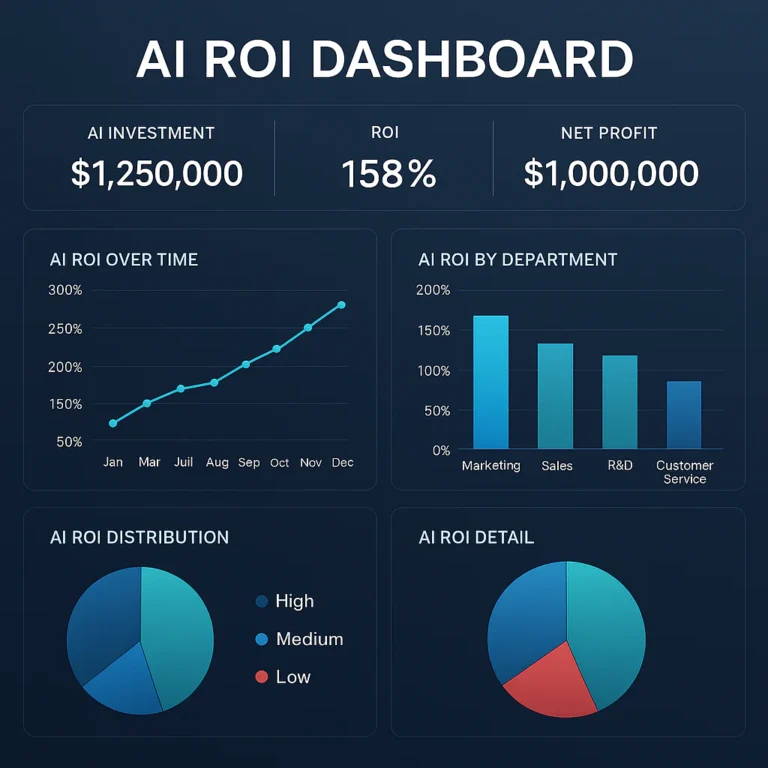

In this comprehensive guide, we strip away the hype to reveal the 8-step framework used by top-tier data teams to turn ML experiments into profit engines. We’ll expose the hidden costs of “free” open-source models, explain how to quantify the elusive “soft ROI” of employee satisfaction, and show you exactly how to avoid the dreaded Pilot Purgatory. By the end, you’ll know how to speak the language of the CFO and prove that your AI initiative is a strategic investment, not just a cost center.

Key Takeaways

- Shift from Accuracy to Value: Stop measuring success by model metrics alone; Hard ROI (revenue/cost savings) and Soft ROI (efficiency/risk reduction) are the only metrics that matter to stakeholders.

- Calculate True TCO: Your ROI may well be negative once you count the Total Cost of Ownership, including data labeling, inference costs, monitoring, and the hidden expense of technical debt.

- Avoid Pilot Purgatory: Establish clear baseline metrics and control groups before deployment to ensure you can accurately attribute business value to your AI, preventing projects from stalling in the proof-of-concept phase.

- Monitor for Drift: ROI is not a one-time calculation; continuous MLOps monitoring is essential to detect model decay and maintain long-term value.

- Bridge the Gap: Successful ROI realization requires a cross-functional approach where data scientists and business leaders align on financial KPIs from the very first line of code.

Table of Contents

- ⚡️ Quick Tips and Facts

- 📜 The Evolution of AI Economics: From Hype to Hard Numbers

- 🧠 Why Your ML ROI Calculation Might Be Broken (And How to Fix It)

- 📊 The Ultimate Guide to Measuring ROI in Machine Learning Initiatives

- 1. Defining Clear Business Objectives Before Writing a Single Line of Code

- 2. Calculating Total Cost of Ownership (TCO) for ML Projects

- 3. Quantifying Hard ROI: Revenue Uplift and Cost Reduction Metrics

- 4. Capturing Soft ROI: Efficiency Gains and Customer Satisfaction Scores

- 5. Establishing Baselines and Control Groups for Accurate Attribution

- 6. Tracking Model Drift and Its Impact on Long-Term Value

- 7. Integrating MLOps Metrics into Financial Reporting

- 8. Avoiding the “Pilot Purgatory” Trap in ROI Realization

- 🚀 Strategies for Optimizing ROI in Agentic AI and GenAI Deployments

- ⚖️ Hard ROI vs. Soft ROI: Balancing the Scales of AI Investment

- 🛠️ Overcoming Technical Debt and Organizational Friction in ML Projects

- 📈 From Data Science to CFO: Bridging the Communication Gap

- 💡 Real-World Case Studies: How Top Brands Measure ML Success

- 🔮 Future-Proofing Your AI Strategy: Trends Beyond the Hype Cycle

- ✅ Conclusion

- 🔗 Recommended Links

- ❓ FAQ: Common Questions About Measuring AI ROI

- 📚 Reference Links

⚡️ Quick Tips and Facts

Let’s cut through the noise, shall we? 📢 We’ve all seen the headlines: “AI will save the world!” or “AI is just a hype bubble!” But at ChatBench.org™, we deal in data, not dreams. When you ask us about Measuring ROI in Machine Learning Initiatives, we don’t give you fluff. We give you the cold, hard truth about where your budget goes and where it comes back.

Here is the executive summary for the busy CTO or CFO who needs the bottom line before their coffee gets cold:

- The “Pilot Purgatory” is Real: Most AI projects die in the pilot phase. Why? Because they measure accuracy instead of business value. A model with 99% accuracy that solves a problem nobody cares about has 0% ROI. 📉

- Hard vs. Soft ROI: You can’t just count dollars. Hard ROI is the money saved (e.g., reduced server costs). Soft ROI is the value gained (e.g., faster decision-making, employee satisfaction). Ignoring soft ROI is like ignoring the engine oil because you only care about the paint job. 🚗

- The Hidden Cost of “Free” Models: Using open-source LLMs? Great. But the inference costs, fine-tuning time, and data engineering overhead often dwarf the license fee you saved. Always calculate the Total Cost of Ownership (TCO). 💸

- Time is Money (Literally): According to recent industry analyses, the gap between AI investment and realized ROI is widening because technical debt accumulates faster than value. If you don’t monitor model drift, a positive ROI can turn negative within months. 📉

- The 30% Rule: Only about 30% of AI leaders expect to assess ROI in less than six months, yet 0% have fully matured processes for it. This means you have a massive competitive advantage if you get it right now. 🏆

💡 Pro Tip: Before you write a single line of code, ask: “What is the minimal acceptable threshold for this model to be financially viable?” If the answer isn’t clear, stop. You’re building a science project, not a business asset.

📜 The Evolution of AI Economics: From Hype to Hard Numbers

Remember the “AI Winter”? It’s back, but this time it’s called “ROI Winter.” ❄️

In the early 2010s, AI was a novelty. Then came the Deep Learning boom, and suddenly, every startup claimed to be “AI-first.” But as we moved into the 2020s, the novelty wore off. Boards stopped asking, “Can you do AI?” and started asking, “How does AI make us money?”

From Buzzwords to Balance Sheets

Historically, measuring the effectiveness of machine learning algorithms was a black box. We relied on benchmarks like ImageNet or GLUE scores. But as we explore in “What role do AI benchmarks play in measuring the effectiveness of machine learning algorithms?”, benchmarks measure technical performance, not business performance.

A model can win a Kaggle competition and still fail to generate a single dollar. The evolution of AI economics has shifted from accuracy-centric to value-centric.

The Gartner Shift: Industry-Specific Models

We are seeing a massive pivot. According to Gartner, by 2027, over 50% of enterprise Generative AI models will be industry or function-specific, up from just ~1% in 2023. This means generic models are becoming less relevant for ROI. Why? Because a generic chatbot doesn’t know your specific compliance regulations, but a model fine-tuned on your Salesforce or SAP data can.

| Era | Primary Focus | Key Metric | Typical Outcome |
|---|---|---|---|
| 2015-2019 | Hype & Exploration | Model Accuracy (F1 Score) | Pilot Purgatory |
| 2020-2022 | Integration & Scale | Deployment Speed | Mixed ROI (High Cost) |
| 2023-Present | Value & Efficiency | Hard/Soft ROI Ratio | Strategic Alignment |

As IBM notes, stakeholders now demand metrics that inform annual budgeting and boardroom decisions, not just lofty technical objectives. The era of “AI for AI’s sake” is over. Welcome to the era of AI for Profit. 💰

🧠 Why Your ML ROI Calculation Might Be Broken (And How to Fix It)

Let’s be honest: most ROI calculations for AI are broken. They’re optimistic, static, and ignore the messy reality of production. At ChatBench.org™, we’ve audited dozens of ML pipelines, and we see the same three fatal errors repeatedly.

Error #1: Ignoring the “Long Tail” of Costs

Most teams calculate the cost of training the model. They forget the cost of inference, data labeling, monitoring, and retraining.

- The Mistake: “We spent $100k to train this model.”

- The Reality: It costs $5k/month to run it, $2k/month to monitor it, and $10k every quarter to retrain it with new data. Over two years, that “cheap” model costs roughly $350k ($100k + $120k + $48k + $80k).
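
To see how the long tail adds up, here is a minimal sketch that sums a one-time training cost with the recurring items above over 24 months. The function name and the $100k training spend are assumptions for illustration (it is the training figure under which these recurring costs land near the two-year total quoted above); swap in your own numbers.

```python
def cumulative_cost(training, run_per_month, monitor_per_month,
                    retrain_per_quarter, months):
    """Total spend over `months`, counting one retrain per completed quarter."""
    quarters = months // 3
    return (training
            + months * (run_per_month + monitor_per_month)
            + quarters * retrain_per_quarter)


# Assumed training spend; recurring figures are the ones from the example above.
TRAINING = 100_000
total = cumulative_cost(training=TRAINING, run_per_month=5_000,
                        monitor_per_month=2_000, retrain_per_quarter=10_000,
                        months=24)
recurring_share = 1 - TRAINING / total
print(f"24-month cost: ${total:,} ({recurring_share:.0%} recurring)")
```

Note how most of the bill arrives after launch day, which is exactly the part a training-only estimate leaves out.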

Error #2: The “Static Baseline” Fallacy

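ROI is a ratio: (Gain - Cost) / Cost. But if your baseline (the “status quo”) is also improving, a naive before/after comparison makes your ROI look better than it is, because it credits the AI with gains that would have happened anyway.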

- Scenario: Your AI reduces customer service time by 20%.

- Complication: Your human agents also got better training software at the same time, reducing time by 10%.

- The Fix: You must use A/B testing or control groups to isolate the AI’s impact. Without this, you’re attributing human improvement to your algorithm. 🧪
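
A rough sketch of that attribution step, assuming the 20% is the reduction seen in the AI-assisted group and the 10% is what a comparable control group achieved with the new training software alone. The function name and the handle times are hypothetical; the idea is simply to subtract the control group's change from the treated group's change.

```python
def attributed_reduction(treated_before, treated_after,
                         control_before, control_after):
    """Relative reduction in the treated group minus that of the control group."""
    treated_change = (treated_before - treated_after) / treated_before
    control_change = (control_before - control_after) / control_before
    return treated_change - control_change


# Hypothetical average handle times in minutes.
ai_effect = attributed_reduction(treated_before=10.0, treated_after=8.0,
                                 control_before=10.0, control_after=9.0)
print(f"Reduction attributable to the AI: {ai_effect:.0%}")
```

Under these assumptions only about half of the headline improvement belongs to the model, so the gain you plug into the ROI formula should be halved too.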

Error #3: Overestimating “Soft” Benefits

“Improved brand reputation” is a nice buzzword, but it doesn’t pay the cloud bill. However, Soft ROI is real. It includes:

- Employee Retention: Reducing burnout by automating boring tasks.

- Agility: Faster time-to-market for new products.

- Risk Mitigation: Avoiding fines from compliance errors.

The Fix: Quantify soft ROI where possible. If AI reduces employee turnover by 5%, calculate the savings in recruitment and training costs. That’s a tangible number. 📊
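
Here is one way that turnover calculation might look. It is a sketch that reads “5%” as five percentage points of annual turnover; the headcount, turnover rates, and per-hire replacement cost are made-up inputs, not benchmarks.

```python
def turnover_savings(headcount, baseline_turnover, new_turnover,
                     replacement_cost):
    """Annual savings from departures avoided, at a flat cost per replacement."""
    avoided_departures = headcount * (baseline_turnover - new_turnover)
    return avoided_departures * replacement_cost


# Hypothetical team: 200 people, turnover drops from 20% to 15%,
# and each replacement costs $30k in recruiting and training.
savings = turnover_savings(headcount=200, baseline_turnover=0.20,
                           new_turnover=0.15, replacement_cost=30_000)
print(f"Estimated annual savings: ${savings:,.0f}")
```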

📊 The Ultimate Guide to Measuring ROI in Machine Learning Initiatives

Ready to get your hands dirty? Here is our step-by-step framework for calculating ML ROI that actually holds up to scrutiny. We’ve broken this down into actionable phases.

1. Defining Clear Business Objectives Before Writing a Single Line of Code

Before you touch Python or TensorFlow, you need a business case. Is the goal to reduce churn? Increase average order value (AOV)? Minimize fraud?

- Action: Align every ML use case with a specific Key Performance Indicator (KPI) tied to revenue or cost.

- Example: Instead of “Improve recommendation engine,” use “Increase AOV by 5% via cross-selling.”

2. Calculating Total Cost of Ownership (TCO) for ML Projects

TCO is your best friend. It includes:

- Data Costs: Collection, cleaning, labeling, and storage.

- Compute Costs: GPU hours for training, CPU hours for inference.

- Labor Costs: Data scientists, ML engineers, DevOps, and domain experts.

- Maintenance Costs: Monitoring, drift detection, and retraining.

💡 ChatBench Insight: Don’t forget the “Cloud Bill Shock.” Using AWS SageMaker or Google Vertex AI can lead to unexpected costs if you don’t set strict budgets and alerts.

3. Quantifying Hard ROI: Revenue Uplift and Cost Reduction Metrics

Hard ROI is the easiest to measure because it’s in dollars.

- Cost Reduction:

Formula: (Hours Saved × Hourly Wage) - Cost of AI Implementation

Example: Automating invoice processing saves 100 hours/month at $50/hour = $5,000/month in savings.

- Revenue Uplift:

Formula: (New Revenue - Baseline Revenue) - Cost of AI Implementation

Example: A fraud detection model prevents $1M in losses annually. If the AI costs $200k, the Net Gain is $800k.
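
The formulas above give a net gain in dollars; dividing by cost turns that into the ROI ratio, (Gain - Cost) / Cost. A minimal sketch, assuming 100 hours saved per month at $50/hour for the invoice example and $1M in prevented losses against a $200k cost for the fraud example (function names are ours):

```python
def labor_savings(hours_saved, hourly_wage):
    """Gross savings from automated hours, before the AI's own cost."""
    return hours_saved * hourly_wage


def net_gain(benefit, cost):
    return benefit - cost


def roi(benefit, cost):
    """ROI as a ratio: (Gain - Cost) / Cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


monthly_savings = labor_savings(hours_saved=100, hourly_wage=50)
fraud_net = net_gain(benefit=1_000_000, cost=200_000)
fraud_roi = roi(benefit=1_000_000, cost=200_000)
print(f"Invoice automation saves ${monthly_savings:,}/month before costs")
print(f"Fraud model: net gain ${fraud_net:,}, ROI {fraud_roi:.0%}")
```

Reporting both numbers helps: net gain tells the CFO how big the win is, while the ratio lets them compare it against other uses of the same budget.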

4. Capturing Soft ROI: Efficiency Gains and Customer Satisfaction Scores

Soft ROI is harder to pin down but critical for long-term success.

- Customer Satisfaction (CSAT/NPS): AI chatbots that resolve issues in <2 minutes vs. 10 minutes.

- Employee Productivity: In GitHub’s own controlled study, developers using GitHub Copilot completed a coding task about 55% faster.

- Risk Reduction: Avoiding GDPR fines or brand damage from biased outputs.

How to Quantify Soft ROI:

- Survey employees on time saved.

- Track CSAT scores pre- and post-AI deployment.

- Estimate the cost of avoided risks (e.g., average fine size × probability of occurrence).
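
The last bullet is an expected-value calculation. A small sketch, with the twist that what you can claim as ROI is the drop in probability the AI delivers, not the whole risk. The fine size and both probabilities below are placeholders.

```python
def expected_avoided_loss(fine, p_before, p_after):
    """Expected annual loss avoided when the chance of a fine drops."""
    if not (0 <= p_after <= p_before <= 1):
        raise ValueError("need 0 <= p_after <= p_before <= 1")
    return fine * (p_before - p_after)


# Hypothetical: a $2M fine whose yearly probability falls from 5% to 2%.
avoided = expected_avoided_loss(fine=2_000_000, p_before=0.05, p_after=0.02)
print(f"Expected loss avoided per year: ${avoided:,.0f}")
```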

5. Establishing Baselines and Control Groups for Accurate Attribution

You cannot measure impact without a baseline.

- Control Group: Keep a segment of users or processes without the AI.

- A/B Testing: Run the AI on 50% of traffic and compare metrics against the 50% without it.

- Statistical Significance: Ensure your sample size is large enough to rule out chance. Use tools like Statistical Significance Calculators to verify your results. 📈
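
If you would rather check significance in code than in an online calculator, a two-proportion z-test is one common choice for conversion-style metrics. This is a sketch using only the standard library; the test choice, function name, and traffic numbers are our assumptions, and other metrics (for example handle time) call for a different test.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - normal_cdf)


# Hypothetical 50/50 split: control converts 500 of 10,000, AI arm 580 of 10,000.
z, p_value = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value says the lift is unlikely to be chance; it says nothing about whether the lift is large enough to pay for the model, so pair it with the ROI math.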

6. Tracking Model Drift and Its Impact on Long-Term Value

Models decay. Data drift (changes in the input data) and concept drift (changes in the relationship between input and output) mean your ROI will drop over time if you don’t monitor it.

- The Trap: A model with 95% accuracy in January might be at 70% accuracy in July.

- The Fix: Implement MLOps pipelines to monitor drift and trigger retraining. Factor retraining costs into your ROI timeline.
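
One lightweight way to monitor data drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks in production. The article does not prescribe a specific drift metric, so treat this as one option among several; the bin counts are invented, and the commonly quoted 0.1 and 0.25 thresholds are rules of thumb rather than hard limits.

```python
from math import log


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index over pre-binned counts of one feature."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both inputs must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / e_total, eps)  # eps avoids log(0) on empty bins
        a_pct = max(a / a_total, eps)
        score += (a_pct - e_pct) * log(a_pct / e_pct)
    return score


# Hypothetical counts per bin: training data vs. last month's traffic.
drift = psi([250, 250, 250, 250], [400, 300, 200, 100])
status = "retrain candidate" if drift > 0.25 else "watch" if drift > 0.1 else "stable"
print(f"PSI = {drift:.3f} -> {status}")
```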

7. Integrating MLOps Metrics into Financial Reporting

ROI isn’t a one-time calculation; it’s a continuous process.

- Monitor: Model performance, latency, cost per inference, and business KPIs.

- Report: Monthly ROI dashboards for stakeholders.

- Adjust: If ROI drops, investigate whether it’s a technical issue (drift) or a business issue (changing market conditions).
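
A monthly ROI dashboard can start as something this small. The sketch below assumes you can already attribute a dollar value to the model each month; the class, field names, and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MonthlyReport:
    month: str
    requests: int
    infra_cost: float      # serving + monitoring spend for the month
    business_value: float  # value attributed to the model for the month

    @property
    def cost_per_inference(self):
        return self.infra_cost / self.requests

    @property
    def roi(self):
        return (self.business_value - self.infra_cost) / self.infra_cost


def flag_declines(reports):
    """Months whose ROI fell compared with the previous month."""
    return [cur.month for prev, cur in zip(reports, reports[1:])
            if cur.roi < prev.roi]


reports = [
    MonthlyReport("Jan", 1_000_000, 7_000, 21_000),
    MonthlyReport("Feb", 1_200_000, 8_000, 22_000),
    MonthlyReport("Mar", 1_100_000, 7_500, 24_000),
]
for r in reports:
    print(f"{r.month}: ROI {r.roi:.2f}, ${r.cost_per_inference:.4f}/inference")
print("Investigate:", flag_declines(reports))
```

A flagged month is only a prompt to investigate; whether the cause is drift or a market shift still takes a human look at the technical and business metrics side by side.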

8. Avoiding the “Pilot Purgatory” Trap in ROI Realization

Pilot Purgatory is where AI projects go to die. They stay in “proof of concept” mode forever because they never scale.

- Why it Happens: Lack of clear ROI metrics, poor data infrastructure, organizational resistance.

- The Fix: Set a go/no-go decision point at the end of the pilot. If the ROI isn’t positive or projected to be positive within 6 months, kill the project or pivot. 🪓
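
The go/no-go gate can be written down as a simple breakeven projection. This sketch assumes flat monthly benefits and costs, which real pilots rarely have, and all figures are illustrative.

```python
import math


def months_to_breakeven(upfront_cost, monthly_benefit, monthly_cost):
    """Months until cumulative net benefit covers the upfront spend, or None."""
    net = monthly_benefit - monthly_cost
    if net <= 0:
        return None  # never pays back at the current run rate
    return math.ceil(upfront_cost / net)


def go_no_go(upfront_cost, monthly_benefit, monthly_cost, max_months=6):
    months = months_to_breakeven(upfront_cost, monthly_benefit, monthly_cost)
    return "go" if months is not None and months <= max_months else "no-go"


# Hypothetical pilots with the same run rate but different upfront spend.
print(go_no_go(upfront_cost=30_000, monthly_benefit=12_000, monthly_cost=5_000))
print(go_no_go(upfront_cost=100_000, monthly_benefit=12_000, monthly_cost=5_000))
```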

🚀 Strategies for Optimizing ROI in Agentic AI and GenAI Deployments

Generative AI and Agentic AI are changing the game. They’re not just predicting; they’re acting. This creates new ROI opportunities—and new risks.

The Agentic AI Advantage

Agentic AI can perform complex, multi-step tasks autonomously.

- Use Case: An AI agent that researches competitors, drafts a report, and emails it to stakeholders.

- ROI Driver: Massive time savings for high-skilled workers.

- Risk: Hallucinations and lack of oversight.

Strategies for Maximizing GenAI ROI

- Start with High-Volume, Low-Risk Tasks: Content summarization, code generation, and customer support triage.

- Use RAG (Retrieval-Augmented Generation): Reduce hallucinations and improve accuracy by grounding LLMs in your proprietary data.

- Monitor Token Usage: GenAI costs are tied to tokens. Optimize prompts to reduce token count without sacrificing quality.
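
To put a number on prompt optimization, here is a back-of-the-envelope token cost estimate. The per-1k-token prices, request volume, and token counts are placeholders, not any provider's actual rates.

```python
def monthly_token_cost(requests, input_tokens, output_tokens,
                       price_in_per_1k, price_out_per_1k):
    """Monthly spend given average tokens per request and per-1k-token prices."""
    per_request = (input_tokens / 1000 * price_in_per_1k
                   + output_tokens / 1000 * price_out_per_1k)
    return requests * per_request


# Hypothetical: trimming the prompt from 1,200 to 800 input tokens.
before = monthly_token_cost(100_000, 1_200, 300, 0.001, 0.002)
after = monthly_token_cost(100_000, 800, 300, 0.001, 0.002)
print(f"Before: ${before:,.2f}  After: ${after:,.2f}  "
      f"Saved: ${before - after:,.2f}/month")
```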

💡 ChatBench Insight: For Agentic AI, measure ROI by the number of tasks completed autonomously and the error rate. If the agent makes more errors than it saves time, the ROI is negative.
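
That tradeoff can be sketched as net hours saved: time the agent saves minus time humans spend fixing its mistakes. The parameter names and numbers are assumptions for illustration.

```python
def agent_net_hours(tasks, minutes_saved_per_task, error_rate,
                    minutes_to_fix_error):
    """Net hours saved after subtracting rework on the agent's errors."""
    saved = tasks * minutes_saved_per_task
    rework = tasks * error_rate * minutes_to_fix_error
    return (saved - rework) / 60


def breakeven_error_rate(minutes_saved_per_task, minutes_to_fix_error):
    """Error rate above which the agent costs more time than it saves."""
    return minutes_saved_per_task / minutes_to_fix_error


# Hypothetical: 1,000 tasks, 12 minutes saved each, 90 minutes to fix an error.
print(f"5% errors:  {agent_net_hours(1_000, 12, 0.05, 90):+.0f} hours")
print(f"20% errors: {agent_net_hours(1_000, 12, 0.20, 90):+.0f} hours")
print(f"Breakeven error rate: {breakeven_error_rate(12, 90):.1%}")
```

The breakeven rate is a handy target for the pilot: if fixing an error costs far more than a successful task saves, even a modest error rate wipes out the benefit.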

⚖️ Hard ROI vs. Soft ROI: Balancing the Scales of AI Investment

As we discussed, Hard ROI is king, but Soft ROI is the queen. You need both to rule the kingdom.

Hard ROI: The Bottom Line

- Pros: Easy to measure, easy to justify.

- Cons: Ignores long-term strategic value.

- Examples: Cost savings, revenue increase, error reduction.

Soft ROI: The Strategic Edge

- Pros: Captures long-term value, employee morale, and brand strength.

- Cons: Hard to quantify, subjective.

- Examples: Brand reputation, employee satisfaction, agility.

The Balance: Use Hard ROI to get the budget, and Soft ROI to justify the long-term investment. 🤝

🛠️ Overcoming Technical Debt and Organizational Friction in ML Projects

Technical debt is the hidden ROI killer.

- Code Debt: Poorly written ML code that’s hard to maintain.

- Data Debt: Messy, unstructured data that requires constant cleaning.

- Model Debt: Models that are outdated and need retraining.

Organizational Friction

- Siloed Data: Data trapped in different departments.

- Resistance to Change: Employees afraid of being replaced by AI.

- Lack of Expertise: Not enough ML engineers on staff.

The Fix: Invest in MLOps infrastructure and change management training. Make AI a team sport, not a data science silo. 🤝

📈 From Data Science to CFO: Bridging the Communication Gap

Data scientists speak in “accuracy” and “loss functions.” CFOs speak in “revenue” and “cost.” You need a translator.

- For Data Scientists: Learn to speak business. Translate “F1 Score of 0.95” into “10% reduction in customer churn.”

- For CFOs: Learn to speak AI. Understand that “model drift” is like “inventory spoilage”—it costs money if ignored.

The Fix: Create cross-functional teams with both data scientists and business leaders. Align on KPIs from day one. 🤝

💡 Real-World Case Studies: How Top Brands Measure ML Success

Let’s look at some real examples.

Case Study 1: PayPal’s Fraud Detection

- Challenge: Rising fraud rates as payment volume grew.

- Solution: Deep learning models to detect fraudulent transactions in real-time.

- ROI: Reduced fraud losses by 50% while doubling payment volume. The ROI was massive because the cost of fraud was high, and the AI prevented it at scale. 💳

Case Study 2: Netflix’s Recommendation Engine

- Challenge: Customer churn due to content overload.

- Solution: Personalized recommendations using ML.

- ROI: Netflix estimates that their recommendation engine saves $1 billion annually by reducing churn. That’s pure Hard ROI. 📺

Case Study 3: A Failed Pilot (Swell Investing)

- Challenge: Robo-advisor with high customer acquisition costs.

- Outcome: Failed to achieve scale. High CAC ($350/person) vs. low fees (0.75%).

- Lesson: ROI must account for Customer Acquisition Cost (CAC). If CAC > Lifetime Value (LTV), the model fails. 📉
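
A simplified version of that CAC vs. LTV check for a fee-based product is sketched below. The $350 CAC and 0.75% fee come from the case above; the average balance and retention period are hypothetical inputs, not Swell’s actual figures, and the LTV model ignores discounting and servicing costs.

```python
def lifetime_value(avg_balance, annual_fee_rate, years_retained):
    """Fee revenue from one customer over the time they stay."""
    return avg_balance * annual_fee_rate * years_retained


def breakeven_balance(cac, annual_fee_rate, years_retained):
    """Average balance needed for LTV to equal CAC."""
    return cac / (annual_fee_rate * years_retained)


CAC, FEE = 350, 0.0075
ltv = lifetime_value(avg_balance=5_000, annual_fee_rate=FEE, years_retained=3)
needed = breakeven_balance(CAC, FEE, years_retained=3)
print(f"LTV ${ltv:,.2f} vs CAC ${CAC}: {'viable' if ltv > CAC else 'not viable'}")
print(f"Balance needed to break even: ${needed:,.0f}")
```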

🔮 Future-Proofing Your AI Strategy: Trends Beyond the Hype Cycle

The AI landscape is evolving rapidly. Here’s what’s next for ROI measurement.

Trend 1: AI Benchmarking as a Service

Just as we benchmark hardware, we’ll soon benchmark AI models for business value. Look for platforms that offer ROI benchmarking for specific industries.

Trend 2: Automated ROI Tracking

New MLOps tools are starting to integrate financial metrics. Imagine a dashboard that shows you the real-time ROI of your model as it runs. 📊

Trend 3: Ethical AI as an ROI Driver

Ethical AI isn’t just good for the planet; it’s good for the bottom line. Avoiding bias reduces legal risk and brand damage. Ethical AI is a cost-saving measure. ⚖️

Trend 4: The Rise of “AI ROI Engineers”

A new role is emerging: the AI ROI Engineer. This person bridges the gap between data science and finance, ensuring that every AI project is financially viable. 🤝

✅ Conclusion