
The Ultimate Guide to AI Benchmarks in 2026: 10 Must-Know Tests 🤖

Imagine trying to pick the smartest AI model without any yardstick—like choosing a racehorse without a stopwatch. That’s exactly the chaos AI benchmarks aim to solve. In this comprehensive guide, we unravel the top 10 AI benchmark suites that are shaping the future of artificial intelligence in 2026. From the gold standard MLPerf to cutting-edge contamination-free tests like LiveBench, we’ll show you how to decode benchmark scores, avoid common pitfalls like data leakage, and leverage these insights to pick the best AI models and hardware for your needs.

Curious how some models score sky-high but still hallucinate wildly? Or why your shiny new GPU might not deliver the speed boost you expect? Stick around as we break down the metrics, reveal the hidden trade-offs, and peek into the future trends that will redefine AI evaluation. Whether you’re a developer, business leader, or AI enthusiast, this guide will turn you into a savvy AI benchmarker ready to make smarter, data-driven decisions.

Key Takeaways

- AI benchmarks are essential tools that provide standardized, objective measures of model performance, efficiency, and reliability.

- Not all benchmarks are created equal: contamination-free tests like LiveBench are critical to avoid misleading results.

- Top benchmarks like MLPerf, SuperGLUE, and AA-Omniscience cover diverse domains from hardware speed to language understanding and hallucination rates.

- Interpreting benchmark scores requires context—speed, accuracy, cost, and truthfulness must all be balanced.

- The future of AI benchmarking is dynamic, with emerging trends in agentic tasks, multimodal reasoning, and ethical fairness metrics.

Ready to master AI benchmarks and gain a competitive edge? Dive in!

Table of Contents

- ⚡️ Quick Tips and Facts About AI Benchmarks

- 🧠 The Evolution and History of AI Benchmarking

- 🔍 What Are AI Benchmarks and Why Do They Matter?

- 🏆 Top 10 AI Benchmark Suites and Their Unique Features

- 1. MLPerf: The Gold Standard for AI Performance

- 2. GLUE and SuperGLUE: Benchmarking Natural Language Understanding

- 3. ImageNet: The Classic for Computer Vision

- 4. COCO Dataset: Object Detection and Segmentation

- 5. SQuAD: Question Answering Benchmarks

- 6. DAWNBench: End-to-End Deep Learning Benchmark

- 7. AI Benchmark: Mobile AI Performance Testing

- 8. SuperGLUE: The Next-Level Language Understanding

- 9. TPCx-AI: AI in Big Data Analytics

- 10. Robustness and Fairness Benchmarks in AI

- ⚙️ How AI Benchmarks Are Designed: Metrics, Datasets, and Protocols

- 📊 Interpreting AI Benchmark Results: What Do They Really Tell You?

- 🤖 AI Benchmarks for Different Domains: NLP, Vision, Speech, and More

- 💡 Practical Tips for Using AI Benchmarks to Choose Models and Hardware

- 🚀 The Future of AI Benchmarking: Trends and Emerging Challenges

- 🔗 Recommended Tools and Resources for AI Benchmarking Enthusiasts

- 🎯 Conclusion: Mastering AI Benchmarks for Smarter AI Decisions

- 📚 Recommended Links for Deep Dives into AI Benchmarking

- ❓ Frequently Asked Questions About AI Benchmarks

- 🔖 Reference Links and Citations

⚡️ Quick Tips and Facts About AI Benchmarks

Before we dive into the silicon-soaked details of model evaluation, here are some rapid-fire insights from our lab at ChatBench.org™:

- Not all scores are equal: A high score on a specific benchmark doesn’t always mean a model is “smarter.” It might just be better at that specific test.

- Data Contamination is real: Some models “cheat” by having the benchmark questions in their training data. This is why LiveBench is gaining traction—it focuses on contamination-free testing.

- Hardware matters: You can’t separate the software from the iron. Benchmarking an NVIDIA H100 vs. an Apple M3 Ultra will yield vastly different results depending on the optimization.

- Hallucinations are measurable: Tools like the AA-Omniscience Index from Artificial Analysis now track how often a model lies to your face.

- Speed vs. Accuracy: There is almost always a trade-off. Faster models (like Gemini 3 Flash) often sacrifice a bit of depth for millisecond response times.

| Fact | Detail |
|---|---|
| Gold Standard | MLPerf is widely considered the industry standard for hardware/software performance. |
| LLM King | GPT-4o and Claude 3.5 Sonnet currently dominate most reasoning leaderboards. |
| The “Cheating” Problem | Up to 30% of some benchmarks may be leaked into training sets. |
| Energy Impact | On massive clusters, benchmarking can consume as much power as a small town uses in a day. |

🧠 The Evolution and History of AI Benchmarking

The quest to measure “machine intelligence” didn’t start with ChatGPT. In fact, our guide “Designing AI Benchmarks for Explainable & Interpretable Systems (2026)” highlights how we’ve moved from simple logic puzzles to complex, multi-modal evaluations.

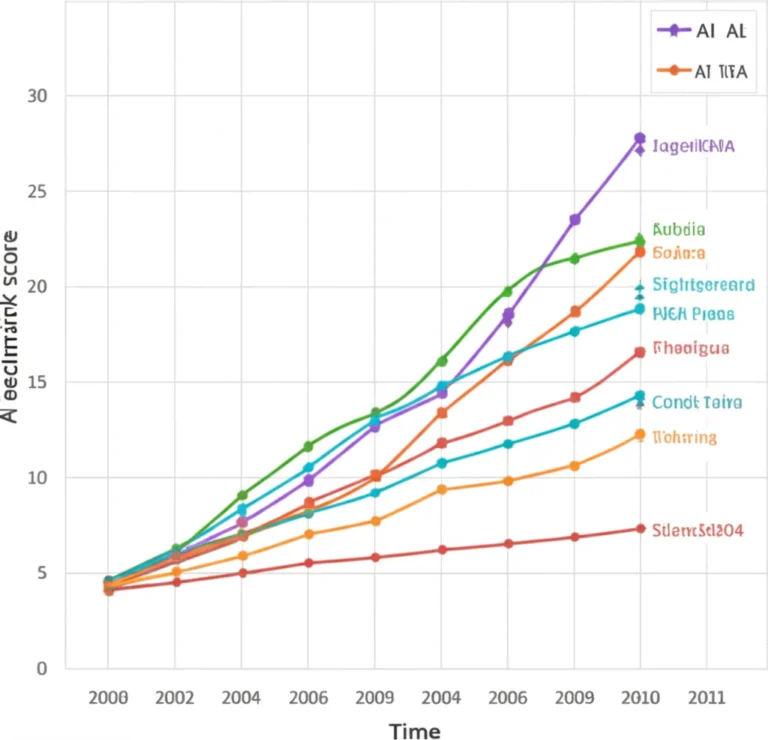

In the early days, we had the Turing Test, which was more of a philosophical vibe check than a technical metric. Then came ImageNet in 2009, which sparked the deep learning revolution by giving us a massive dataset to compete on. We remember the excitement when AlexNet crushed the competition in 2012—it was the “Big Bang” of modern AI.

As we moved into the era of Large Language Models (LLMs), the industry shifted from measuring “can it see a cat?” to “can it explain quantum physics while writing Python code?” This led to the creation of GLUE (General Language Understanding Evaluation) and later SuperGLUE, because, well, the first one became too easy for the models!

🔍 What Are AI Benchmarks and Why Do They Matter?

Think of an AI benchmark as the SATs for robots. Without them, every AI company would just claim they have the “most powerful model in the world” (and let’s be honest, they still do). Benchmarks provide a standardized yardstick to measure:

- Reasoning: Can the model follow complex logic?

- Knowledge: Does it actually know who the 14th President of the US was?

- Speed (Latency): How long do you have to wait for an answer?

- Efficiency: How much electricity did it burn to give you that answer?

At ChatBench.org™, we believe that understanding these metrics is crucial for AI Business Applications. If you’re building a customer service bot, you care about latency. If you’re building a medical diagnostic tool, you care about factual accuracy and hallucination rates.
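To make the latency metric concrete, here is a minimal sketch of how one might time repeated calls and report median and tail latency. The `fake_model` function is a hypothetical stand-in for a real model call, and the nearest-rank 95th percentile is our assumption for illustration, not a standard any benchmark mandates.

```python
import math
import statistics
import time


def measure_latency(call, prompts, warmup=1):
    """Time each call and return latency statistics in milliseconds."""
    for prompt in prompts[:warmup]:
        call(prompt)  # warm-up runs are not recorded
    samples = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Nearest-rank 95th percentile: the tail latency most users will notice.
    p95_index = max(0, math.ceil(0.95 * len(samples)) - 1)
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
        "n": len(samples),
    }


def fake_model(prompt):
    """Hypothetical stand-in for a real model call."""
    time.sleep(0.001)
    return prompt.upper()


stats = measure_latency(fake_model, ["hello"] * 20)
print(stats)
```

Reporting the tail alongside the median matters for something like a customer service bot, where the slowest responses shape the experience more than the average does.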

Ever wondered why a model performs great in a demo but fails in your specific workflow? The answer usually lies in the gap between “synthetic benchmarks” and “real-world application.” We’ll resolve this mystery as we look at how these tests are actually designed.

🏆 Top 10 AI Benchmark Suites and Their Unique Features

We’ve tested dozens of suites in our AI Infrastructure labs. Here are the heavy hitters you need to know.

1. MLPerf: The Gold Standard for AI Performance

MLPerf is the “Olympics” of AI. It tests how fast hardware (like NVIDIA GPUs or Google TPUs) can train a model or run inference.

- Pros: Highly rigorous; peer-reviewed.

- Cons: Extremely expensive to run.

2. GLUE and SuperGLUE: Benchmarking Natural Language Understanding

These are the classic tests for NLP. They include tasks like sentiment analysis and question answering.

- Pros: Great for baseline comparisons.

- Cons: Modern models like GPT-4 have essentially “solved” these, making them less useful for top-tier differentiation.

3. ImageNet: The Classic for Computer Vision

The dataset that started it all. It contains over 14 million images across more than 20,000 categories.

- Pros: Massive community support.

- Cons: Models are now so good at this that improvements are marginal.

4. COCO Dataset: Object Detection and Segmentation

“Common Objects in Context” (COCO) is where we go to see if an AI can actually tell a spoon from a fork in a messy kitchen.

- Check it out: COCO Dataset Official
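Detection benchmarks such as COCO score a predicted box against the ground truth using Intersection over Union (IoU). The sketch below shows that one building block only. It assumes boxes in `(x_min, y_min, x_max, y_max)` corner form, which is a convention chosen for this example; it is not the full COCO evaluation pipeline.

```python
def iou(box_a, box_b):
    """Intersection over Union for two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlapping rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - intersection
    return intersection / union if union > 0 else 0.0


spoon_truth = (0, 0, 2, 2)       # hypothetical ground-truth box
spoon_prediction = (1, 1, 3, 3)  # hypothetical model prediction
print(iou(spoon_truth, spoon_prediction))
```

A detection usually counts as correct only when its IoU clears a chosen threshold, so the same model can look better or worse depending on how strict that threshold is.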

5. SQuAD: Question Answering Benchmarks

The Stanford Question Answering Dataset tests a model’s ability to read a paragraph and answer questions about it.

- Pros: Excellent for testing reading comprehension.
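Reading-comprehension answers on SQuAD-style tasks are typically scored with exact match and a token-level F1. Here is a simplified sketch of both. The normalization steps (lowercasing, stripping punctuation and English articles) are written from memory of the general approach and may differ in detail from the official evaluation script.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and articles, and collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, truth):
    return normalize(prediction) == normalize(truth)


def f1_score(prediction, truth):
    pred_tokens = normalize(prediction).split()
    true_tokens = normalize(truth).split()
    if not pred_tokens or not true_tokens:
        return float(pred_tokens == true_tokens)
    # Count tokens shared by both answers, respecting multiplicity.
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("The Eiffel Tower.", "eiffel tower"))
print(f1_score("in Paris France", "Paris"))
```

F1 gives partial credit for an answer that contains the right span plus extra words, which exact match would mark as simply wrong.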

6. DAWNBench: End-to-End Deep Learning Benchmark

Focuses on the cost and time to achieve a certain level of accuracy. This is vital for Fine-Tuning & Training budgets.
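The idea of “cost and time to reach a target accuracy” can be sketched in a few lines. The training log, accuracy target, and hourly price below are all hypothetical numbers invented for illustration.

```python
def time_to_accuracy(log, target_accuracy, hourly_cost_usd):
    """Return hours and cost at the first checkpoint that meets the target.

    `log` is a list of (elapsed_hours, validation_accuracy) pairs in time order.
    Returns None if the target is never reached.
    """
    for elapsed_hours, accuracy in log:
        if accuracy >= target_accuracy:
            return {
                "hours": elapsed_hours,
                "cost_usd": elapsed_hours * hourly_cost_usd,
            }
    return None


# Hypothetical checkpoints from one training run.
training_log = [(0.5, 0.71), (1.0, 0.86), (1.5, 0.93), (2.0, 0.94)]
result = time_to_accuracy(training_log, target_accuracy=0.93, hourly_cost_usd=12.0)
print(result)
```

Framing the result this way makes it easy to compare a fast, expensive instance against a slow, cheap one on the same target.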

7. AI Benchmark: Mobile AI Performance Testing

If you want to know how well your Samsung Galaxy S24 or iPhone 15 Pro handles AI, this is the one. It ranks mobile SoCs (systems on a chip).

8. SuperGLUE: The Next-Level Language Understanding

When GLUE got too easy, researchers made it harder. It includes more “common sense” reasoning.

9. TPCx-AI: AI in Big Data Analytics

This measures the performance of the entire data science pipeline, from data ingestion to inference.

10. Robustness and Fairness Benchmarks in AI

These are the “ethical” benchmarks. They test if a model is biased or if it breaks when you give it slightly “noisy” data.
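A robustness check can be as simple as scoring the same model on clean and on perturbed inputs and comparing the two. The sketch below uses a toy keyword classifier and a deterministic typo generator, both hypothetical stand-ins, to show the shape of such a test.

```python
def add_typos(text):
    """Swap the first two characters of every word longer than three letters."""
    words = []
    for word in text.split():
        if len(word) > 3:
            word = word[1] + word[0] + word[2:]
        words.append(word)
    return " ".join(words)


def toy_classifier(text):
    """Hypothetical sentiment model that only recognizes two exact keywords."""
    tokens = text.lower().split()
    return "pos" if "good" in tokens or "great" in tokens else "neg"


def accuracy(classify, dataset, perturb=None):
    correct = 0
    for text, label in dataset:
        if perturb is not None:
            text = perturb(text)
        correct += classify(text) == label
    return correct / len(dataset)


dataset = [
    ("good movie", "pos"),
    ("great plot", "pos"),
    ("bad acting", "neg"),
    ("awful pacing", "neg"),
]
clean_accuracy = accuracy(toy_classifier, dataset)
noisy_accuracy = accuracy(toy_classifier, dataset, perturb=add_typos)
print(clean_accuracy, noisy_accuracy)
```

The gap between the two numbers is the interesting part: a model that looks perfect on clean text can lose half its accuracy once the input gets slightly noisy.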

⚙️ How AI Benchmarks Are Designed: Metrics, Datasets, and Protocols

Designing a benchmark is like building a trap for a very smart mouse. You need to ensure the mouse can’t just memorize the layout.

The Components of a Great Benchmark

- The Dataset: Must be diverse and, ideally, “hidden” from the model’s training set to avoid contamination.

- The Metric: Usually a score on a well-known test set, such as:

  - MMLU (Massive Multitask Language Understanding): Tests general knowledge across 57 subjects.

  - HumanEval: Tests coding ability in Python.

  - GSM8K: Tests grade-school math word problems.

- The Protocol: How is the model prompted? (Zero-shot vs. Few-shot).

Expert Tip: Always check if a benchmark uses “Chain of Thought” (CoT) prompting. Models often perform significantly better when they are told to “think step-by-step,” but this also increases latency!
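To illustrate how much the protocol shapes what the model sees, here is a minimal sketch of a prompt builder covering zero-shot, few-shot, and chain-of-thought variants. The `Q:`/`A:` layout and the helper name `build_prompt` are assumptions made for this example; real benchmarks each define their own templates.

```python
def build_prompt(question, examples=(), chain_of_thought=False):
    """Assemble a zero-shot or few-shot prompt, optionally with a CoT cue."""
    parts = []
    # Few-shot: worked examples come before the real question.
    for example_question, example_answer in examples:
        parts.append(f"Q: {example_question}\nA: {example_answer}")
    suffix = "Let's think step by step." if chain_of_thought else ""
    parts.append(f"Q: {question}\nA: {suffix}".rstrip())
    return "\n\n".join(parts)


zero_shot = build_prompt("What is 17 + 25?")
few_shot = build_prompt(
    "What is 17 + 25?",
    examples=[("What is 2 + 2?", "4"), ("What is 10 + 5?", "15")],
)
cot = build_prompt("What is 17 + 25?", chain_of_thought=True)
print(few_shot)
```

Two published scores are only comparable if they were produced with the same variant, so it is worth checking which one a leaderboard used.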

📊 Interpreting AI Benchmark Results: What Do They Really Tell You?

Don’t let a 90% score fool you. According to Epoch AI, we need to look at transparency and cost-efficiency. A model might be 1% more accurate but 10x more expensive to run.
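The “1% more accurate but 10x more expensive” trade-off is easier to judge as cost per correct answer. The prices and accuracies below are hypothetical, chosen only to mirror that example.

```python
def cost_per_correct(accuracy, cost_per_1k_queries_usd):
    """Dollars spent for each correct answer, given accuracy in [0, 1]."""
    if not 0 < accuracy <= 1:
        raise ValueError("accuracy must be in (0, 1]")
    return cost_per_1k_queries_usd / (accuracy * 1000)


# Hypothetical models: B is one point more accurate and ten times the price.
model_a = cost_per_correct(accuracy=0.90, cost_per_1k_queries_usd=1.00)
model_b = cost_per_correct(accuracy=0.91, cost_per_1k_queries_usd=10.00)
ratio = model_b / model_a
print(f"Model B costs {ratio:.2f}x more per correct answer")
```

Under these assumed numbers the extra point of accuracy barely dents the price gap, which is the kind of context a single leaderboard score hides.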

Understanding the AA-Omniscience Index

Artificial Analysis provides a fascinating look at “Knowledge Reliability.”

- Positive Scores: The model knows its stuff.

- Negative Scores: The model is a “confident liar” (hallucinates).

| Model | Reliability Score | Hallucination Propensity |
|---|---|---|
| Gemini 3 Flash | 13 | Very Low ✅ |
| Claude Opus 4.5 | 10.2 | Low ✅ |
| GPT-5.1 (Preview) | 2.2 | Moderate ⚠️ |
| Claude 4 Sonnet | -10.3 | High ❌ |

Note: Scores are based on the AA-Omniscience Index where 0 is the baseline.
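One way to see how a score can go negative is to reward correct answers, penalize incorrect ones, and treat “I don’t know” as neutral. The formula below is an illustrative assumption in that spirit, not necessarily the exact one Artificial Analysis uses, and the answer counts are made up.

```python
def reliability_index(correct, incorrect, abstained):
    """Score from -100 to 100; 0 means right and wrong answers cancel out."""
    total = correct + incorrect + abstained
    if total == 0:
        raise ValueError("at least one question is required")
    # Abstentions count toward the total but neither add nor subtract points.
    return 100.0 * (correct - incorrect) / total


# Hypothetical model that declines to answer when unsure.
cautious = reliability_index(correct=40, incorrect=27, abstained=33)
# Hypothetical model that always answers and is wrong more often than right.
confident_liar = reliability_index(correct=45, incorrect=55, abstained=0)
print(cautious, confident_liar)
```

Note that the second model answers more questions correctly in absolute terms, yet scores lower because every wrong guess costs it a point.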

🤖 AI Benchmarks for Different Domains: NLP, Vision, Speech, and More

AI isn’t a monolith. A model that’s great at writing poetry might be terrible at identifying a tumor in an X-ray.

- Natural Language Processing (NLP): Covers chatbots and translators; see our Developer Guides for building them.

- Computer Vision: Essential for autonomous vehicles (Tesla, Waymo).

- Speech: Benchmarks like LibriSpeech measure Word Error Rate (WER).

- Multimodal: The new frontier. Can the AI “see” a video and explain the joke?
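Word Error Rate, mentioned above for speech, is the word-level edit distance between the reference transcript and the system output, divided by the number of reference words. A minimal sketch, with made-up transcripts:

```python
def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # Dynamic-programming edit distance, keeping only the previous row.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


wer = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"WER: {wer:.3f}")
```

Because insertions count as errors, WER can exceed 1.0 when the system outputs many more words than the reference contains.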

💡 Practical Tips for Using AI Benchmarks to Choose Models and Hardware

Choosing the right setup is a balancing act. We recently compared the NVIDIA DGX Spark against the Apple M3 Ultra Mac Studio (as seen in our featured video).

Hardware Rating Table: AI Inference Performance

| Feature | NVIDIA DGX Spark | Apple M3 Ultra | Framework Laptop (16) |
|---|---|---|---|
| Inference Speed | 10/10 | 8/10 | 4/10 |
| Memory Bandwidth | 7/10 | 10/10 | 3/10 |
| Power Efficiency | 5/10 | 9/10 | 6/10 |
| Software Support | 9/10 | 8/10 | 7/10 |
| Value for Money | 6/10 | 8/10 | 9/10 |

Our Recommendation:

- If you are running a cluster and need raw throughput: NVIDIA DGX is the beast you need.

- If you are a developer wanting a quiet, efficient local machine: The Mac Studio is unbeatable.
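The ratings in the table can be turned into a single ranking once you decide what matters to you. The sketch below uses the table's scores as-is; the weight profiles are hypothetical and only there to show how the winner changes with priorities.

```python
RATINGS = {
    "NVIDIA DGX Spark": {
        "inference_speed": 10, "memory_bandwidth": 7, "power_efficiency": 5,
        "software_support": 9, "value_for_money": 6,
    },
    "Apple M3 Ultra": {
        "inference_speed": 8, "memory_bandwidth": 10, "power_efficiency": 9,
        "software_support": 8, "value_for_money": 8,
    },
    "Framework Laptop (16)": {
        "inference_speed": 4, "memory_bandwidth": 3, "power_efficiency": 6,
        "software_support": 7, "value_for_money": 9,
    },
}


def weighted_score(ratings, weights):
    """Weighted average of the ratings, on the same 0-10 scale."""
    total_weight = sum(weights.values())
    return sum(ratings[name] * weight for name, weight in weights.items()) / total_weight


def rank(weights):
    """Devices sorted from best to worst under the given weights."""
    return sorted(RATINGS, key=lambda d: weighted_score(RATINGS[d], weights), reverse=True)


# Hypothetical weight profiles.
equal_weights = {name: 1 for name in RATINGS["Apple M3 Ultra"]}
throughput_weights = {
    "inference_speed": 5, "memory_bandwidth": 1, "power_efficiency": 1,
    "software_support": 2, "value_for_money": 1,
}
print(rank(equal_weights)[0], "|", rank(throughput_weights)[0])
```

With equal weights the Mac Studio comes out ahead, while a throughput-heavy profile favors the DGX Spark, which lines up with the two recommendations above.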


🚀 The Future of AI Benchmarking: Trends and Emerging Challenges

The biggest challenge we face today is Contamination. As LiveBench points out, if a model has seen the test questions during its training phase, the benchmark is useless. It’s like giving a student the answer key before the exam.
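A rough way to screen for contamination is to look for long word sequences shared between benchmark items and training text. The sketch below is a simple n-gram overlap heuristic under our own assumptions (the n-gram length and threshold are arbitrary); it is not how LiveBench or any particular lab performs its checks.

```python
def ngrams(text, n):
    """Set of word n-grams in a lowercased, whitespace-tokenized text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(benchmark_items, training_corpus, n=8, threshold=0.5):
    """Fraction of benchmark items whose n-grams mostly appear in the corpus."""
    if not benchmark_items:
        raise ValueError("benchmark_items must not be empty")
    corpus_ngrams = set()
    for document in training_corpus:
        corpus_ngrams |= ngrams(document, n)
    flagged = 0
    for item in benchmark_items:
        item_ngrams = ngrams(item, n)
        if not item_ngrams:
            continue  # item is shorter than n words, so it cannot be checked
        overlap = len(item_ngrams & corpus_ngrams) / len(item_ngrams)
        if overlap >= threshold:
            flagged += 1
    return flagged / len(benchmark_items)


# Hypothetical data: the first question was copied into the training text.
corpus = ["the quick brown fox jumps over the lazy dog"]
questions = [
    "the quick brown fox jumps over the lazy dog",
    "what is the capital of france",
]
rate = contamination_rate(questions, corpus, n=3)
print(f"{rate:.0%} of items look contaminated")
```

Exact-overlap checks like this miss paraphrased leaks, which is one reason regularly refreshed question sets are attractive.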

We are also seeing a shift toward Agentic Benchmarks. Instead of asking a question, we give the AI a goal (e.g., “Book a flight to Tokyo under $1000”) and see if it can use tools to get it done. This is the next big leap in AI News.
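An agentic benchmark scores the outcome rather than the wording of an answer. Here is a toy harness in that spirit: the tools, the scripted agent, and the flight prices are all hypothetical stand-ins, and a real benchmark would plug an actual model in where `scripted_agent` sits.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentTask:
    goal: str
    check: Callable[[dict], bool]  # inspects the final state for success
    max_steps: int = 5


def search_flights(state):
    state["options"] = [{"airline": "A", "price": 1200}, {"airline": "B", "price": 950}]


def book_cheapest(state):
    if state.get("options"):
        state["booking"] = min(state["options"], key=lambda option: option["price"])


TOOLS = {"search_flights": search_flights, "book_cheapest": book_cheapest}


def scripted_agent(goal, state):
    """Hypothetical agent: returns the next tool name, or None when done."""
    if "options" not in state:
        return "search_flights"
    if "booking" not in state:
        return "book_cheapest"
    return None


def run_task(task, agent):
    state, steps = {}, 0
    while steps < task.max_steps:
        tool_name = agent(task.goal, state)
        if tool_name is None:
            break
        TOOLS[tool_name](state)
        steps += 1
    return {"success": task.check(state), "steps": steps}


task = AgentTask(
    goal="Book a flight to Tokyo under $1000",
    check=lambda s: s.get("booking", {}).get("price", float("inf")) < 1000,
)
outcome = run_task(task, scripted_agent)
print(outcome)
```

Recording the step count next to the success flag lets you compare agents that reach the same goal with very different amounts of tool use.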

But wait—if models keep getting smarter, will we eventually run out of things to test them on? Some researchers suggest we are approaching a “ceiling” for certain types of reasoning. We’ll explore how we plan to break that ceiling in our final thoughts.

🎯 Conclusion: Mastering AI Benchmarks for Smarter AI Decisions

After our deep dive into the labyrinth of AI benchmarks, one thing is crystal clear: benchmarks are the compass guiding us through the ever-expanding AI wilderness. Whether you’re a developer, a business leader, or an AI enthusiast, understanding these tests helps you separate the hype from the reality.

We’ve seen how benchmarks like MLPerf set the gold standard for hardware and software performance, while LiveBench tackles the thorny issue of contamination-free evaluation, ensuring models are judged fairly on unseen data. The AA-Omniscience Index offers a fresh lens to measure not just accuracy but the truthfulness of AI models, helping you avoid the costly pitfalls of hallucinations.

So, what’s the takeaway?

- Positives: AI benchmarks provide objective, standardized metrics that help you choose the right model and hardware for your needs. They push the industry toward transparency and fairness, especially with new contamination-free designs.

- Negatives: Benchmarks can become outdated quickly, and some tests are “solved” by top models, losing their discriminative power. Also, hardware differences and test design nuances can skew results if you’re not careful.

Our confident recommendation: Use a suite of benchmarks tailored to your domain—don’t rely on a single number. For example, combine MLPerf for infrastructure decisions, SuperGLUE for language understanding, and AA-Omniscience for factual reliability. And always check if the benchmark data is contamination-free to avoid misleading conclusions.

Remember our earlier question: Will we run out of things to test AI on? The answer is no—AI is evolving into agentic systems that interact with the world, requiring new benchmarks that test goal-oriented behavior and multi-modal reasoning. The future of AI benchmarking is dynamic, challenging, and exciting.

🔗 Recommended Links for Deep Dives into AI Benchmarking

👉 Shop Hardware & AI Platforms:

- NVIDIA GPUs: Amazon | NVIDIA Official Website

- Apple Mac Studio (M3 Ultra): Amazon | Apple Official Website

- Cloud GPU Compute: DigitalOcean | RunPod | Paperspace

Books to Expand Your AI Benchmarking Knowledge: “Deep Learning” by Ian Goodfellow, Yoshua Bengio, and Aaron Courville — Amazon Link

Explore Benchmark Suites & Data:

❓ Frequently Asked Questions About AI Benchmarks

What is the relationship between AI benchmarks and the development of explainable AI models?

AI benchmarks traditionally focus on performance metrics like accuracy or speed, but explainability requires different evaluation criteria. Benchmarks designed for explainable AI assess how transparent and interpretable a model’s decisions are. For example, recent research highlighted in ChatBench.org’s guide on explainable AI emphasizes integrating interpretability metrics alongside traditional benchmarks to foster trust and accountability.

How can AI benchmarks be utilized to identify areas for improvement in AI system design?

Benchmarks reveal strengths and weaknesses by breaking down performance across tasks and metrics. For instance, if a model scores well on language understanding but poorly on factual accuracy (as indicated by the AA-Omniscience Index), developers know to focus on reducing hallucinations. Similarly, hardware benchmarks like MLPerf can expose bottlenecks in memory bandwidth or power efficiency, guiding system architects to optimize accordingly.

What are the most widely used AI benchmarks for natural language processing tasks?

The most popular NLP benchmarks include GLUE, SuperGLUE, SQuAD, and MMLU. GLUE and SuperGLUE test general language understanding, SQuAD evaluates reading comprehension, and MMLU measures multitask knowledge across diverse subjects. These benchmarks collectively provide a comprehensive view of a model’s language capabilities.

How often should AI benchmarks be updated to reflect advancements in AI technology?

Benchmarks should ideally be updated every 1–2 years, or sooner if models consistently “solve” them. For example, GLUE was effectively solved by BERT-era models within about a year of its release, prompting the creation of SuperGLUE. Frequent updates ensure benchmarks remain challenging and relevant, preventing overfitting to test data and encouraging continual innovation.

Can AI benchmarks be used to compare the performance of different AI frameworks?

Yes! Benchmarks like MLPerf are designed to evaluate not only models but also the underlying frameworks and hardware. They help compare TensorFlow, PyTorch, JAX, and others in terms of speed, scalability, and resource usage, enabling informed decisions about which stack to adopt for specific workloads.

What role do AI benchmarks play in measuring the effectiveness of machine learning algorithms?

Benchmarks provide objective, reproducible metrics that quantify how well an algorithm performs on standardized tasks. They help researchers and engineers assess improvements, compare new algorithms against baselines, and validate claims of superiority, fostering transparency and scientific rigor.

How do AI benchmarks impact the development of competitive AI solutions?

Benchmarks create a competitive landscape by publicly showcasing model capabilities. Companies and research groups strive to top leaderboards, driving rapid innovation. However, this can also lead to “benchmark chasing,” where models are optimized for tests rather than real-world utility, which highlights the need for diverse and contamination-free benchmarks like LiveBench.

What are the key benchmarks for evaluating AI model performance?

Key benchmarks vary by domain but generally include MLPerf for hardware and system performance, GLUE and SuperGLUE for language understanding, ImageNet and COCO for vision, and AA-Omniscience for factual reliability. These cover accuracy, efficiency, robustness, and truthfulness.

What are the top AI benchmarks used in 2024?

In 2024, the leading benchmarks are the ones profiled in the Top 10 section of this guide.

How do AI benchmarks impact competitive business strategies?

Benchmarks influence purchasing decisions, marketing claims, and R&D priorities. Businesses use benchmark results to justify investments in hardware or AI models, differentiate products, and identify cost-saving opportunities. Transparent benchmarking also builds customer trust by providing objective performance evidence.

Which AI benchmarks measure model efficiency and accuracy?

MLPerf and DAWNBench excel at measuring efficiency (speed, cost, power consumption), while GLUE, SuperGLUE, and AA-Omniscience focus on accuracy and reliability. Combining these gives a holistic view of a model’s practical value.

How can AI benchmarking improve decision-making in enterprises?

By providing clear, comparable data on model capabilities and costs, benchmarks help enterprises select AI solutions aligned with their goals, whether that’s maximizing accuracy, minimizing latency, or optimizing budget. This reduces risk and accelerates deployment.

What role do AI benchmarks play in developing new AI technologies?

Benchmarks act as milestones and motivators, guiding research directions and validating breakthroughs. They encourage the creation of more robust, fair, and efficient AI systems by setting clear targets.

How do AI benchmarks help in comparing different AI platforms?

Benchmarks standardize evaluation across platforms, revealing strengths and weaknesses in hardware, software stacks, and model implementations. This transparency helps users choose the best platform for their needs.

What are the latest trends in AI performance benchmarking?

Emerging trends include contamination-free testing, agentic tasks, multimodal reasoning, and ethical fairness metrics.

How can companies leverage AI benchmarks to gain a market advantage?

By adopting cutting-edge benchmarks, companies can identify superior models and hardware early, optimize costs, and market their AI solutions with credible, third-party-validated performance data, winning customer confidence and outpacing competitors.

🔖 Reference Links and Citations