
How Organizations Use AI Benchmarks to Boost Strategy & Win 🏆 (2025)

Imagine launching an AI-powered product you’re sure will revolutionize your market—only to find out your competitors’ models outperform yours by a wide margin. That’s exactly what happened to our team at ChatBench.org™ early on. Our sentiment analysis model, which we thought was cutting-edge, lagged behind open-source giants by nearly 10% accuracy. Ouch! But this “failure” became a turning point, proving the transformative power of AI benchmarking.

In this article, we’ll reveal how organizations can harness AI benchmarks to identify precise areas for improvement in their AI strategy, from model accuracy and efficiency to ethical fairness and user adoption. We’ll walk you through the entire benchmarking process, share real-world case studies, and expose common pitfalls to avoid. By the end, you’ll know exactly how to turn benchmarking insights into a sustainable competitive advantage that keeps your AI initiatives ahead of the curve.

Curious about which AI metrics matter most or how top companies like Google and Amazon leverage benchmarking to innovate? Stick around — we’ve got you covered.

Key Takeaways

- AI benchmarking transforms guesswork into data-driven insights, enabling organizations to pinpoint strengths and weaknesses in their AI models and strategies.

- Measuring multiple dimensions—performance, efficiency, fairness, explainability, and user experience—is critical for a holistic AI evaluation.

- A structured benchmarking process—from defining objectives to iterative action planning—ensures meaningful improvements and continuous innovation.

- Avoid common pitfalls like chasing every new benchmark or ignoring context to maximize the value of your benchmarking efforts.

- Real-world examples demonstrate how benchmarking accelerates innovation, mitigates risks, and boosts ROI across industries like finance, healthcare, and tech.

Ready to turn your AI strategy into a powerhouse? Let’s dive in!

Table of Contents

- ⚡️ Quick Tips and Facts: Supercharging Your AI Strategy with Benchmarks

- 🚀 The AI Benchmarking Revolution: A Brief History and Its Strategic Imperative

- 🤔 What Exactly Is AI Benchmarking, Anyway? Beyond the Buzzword!

- 🎯 Pinpointing Your AI’s Strengths and Weaknesses: Key Areas for Improvement

- 1. Model Performance & Accuracy: Are You Hitting the Mark?

- 2. Efficiency & Resource Utilization: The Cost of Intelligence

- 3. Robustness & Reliability: Can Your AI Handle the Real World?

- 4. Scalability & Deployment: From Prototype to Production Powerhouse

- 5. Responsible AI & Ethics: Fairness, Bias, and Trustworthiness

- 6. Data Quality & Governance: The Foundation of All AI Success

- 7. Explainability & Interpretability (XAI): Understanding the “Why”

- 8. User Experience & Adoption: Is Your AI Actually Helping People?

- 🔍 The AI Benchmarking Process: Your Step-by-Step Guide to Strategic Clarity

- Phase 1: Defining Your Objectives & Scope (What Are We Measuring, Anyway?)

- Phase 2: Selecting the Right Benchmarks & Metrics (No One-Size-Fits-All!)

- Phase 3: Data Collection & Preparation (Garbage In, Garbage Out, Right?)

- Phase 4: Execution & Evaluation (Let the AI Models Battle!)

- Phase 5: Analysis & Interpretation (What Do These Numbers Mean for Us?)

- Phase 6: Action Planning & Iteration (Time to Make Some Changes!)

- 📊 Types of AI Benchmarking: Internal, External, and the Quest for Competitive Edge

- 🛠️ Essential Tools & Platforms for AI Benchmarking: Our Top Picks

- 🏆 Leveraging AI Benchmarks for a Sustainable Competitive Advantage

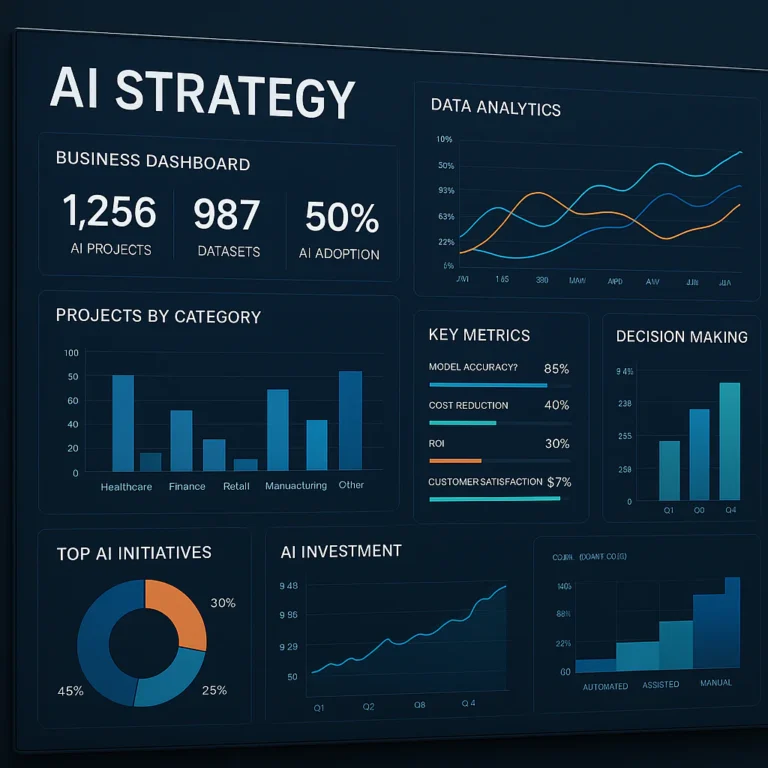

- Informed Decision-Making: From Guesswork to Data-Driven AI Strategy

- Optimizing Resource Allocation: Smarter Spending, Better Results

- Accelerating Innovation: Benchmarks as a Catalyst for Breakthroughs

- Mitigating Risks: Proactive Problem Solving in AI Development

- Boosting ROI: Proving the Value of Your AI Investments

- 🚧 Common Pitfalls & How to Dodge Them: Our Hard-Earned Lessons

- ❌ The “Shiny Object” Syndrome: Chasing Every New Benchmark

- ❌ Ignoring Context: Why a High Score Isn’t Always a Win

- ❌ Data Drift & Model Decay: The Silent Killers of AI Performance

- ❌ Lack of Clear Objectives: Benchmarking Without a North Star

- ❌ Over-Reliance on Single Metrics: The Danger of Tunnel Vision

- 💡 Real-World Impact: Case Studies of Organizations Crushing It with AI Benchmarks

- 🔮 The Future of AI Benchmarking: What’s Next on the Horizon?

- ✅ Your Action Plan: How to Implement AI Benchmarking Today

- Conclusion: Don’t Just Build AI, Build Better AI!

- Recommended Links

- FAQ: Your Burning Questions About AI Benchmarking Answered

- Reference Links


⚡️ Quick Tips and Facts: Supercharging Your AI Strategy with Benchmarks

Welcome, fellow AI enthusiasts and business leaders! You’re here because you know AI isn’t just magic; it’s a powerful tool that needs tuning, measuring, and constant improvement. Before we dive deep, here are some quick takeaways and fascinating facts to get your gears turning.

| Quick Fact 💡 | Why It Matters for Your AI Strategy 🎯 |
| --- | --- |
| Benchmarking isn’t just for competitors. You can benchmark internally between departments or against industry-wide standards to foster collaboration and identify best practices within your own walls. | ✅ This helps create a culture of continuous improvement and allows different parts of your organization to learn from each other’s successes. |
| Data quality is non-negotiable. The old saying “garbage in, garbage out” is doubly true for AI. Your benchmark results are only as reliable as the data you use to generate them. | ❌ Without clean, relevant, and high-quality data, your AI models will underperform, and your benchmarking efforts will be misleading. Prioritize a robust data management strategy! |
| AI can boost revenue significantly. According to a report cited by FIU, Amazon attributes up to 35% of its revenue to cross-selling and up-selling, much of which is powered by AI-driven recommendation engines. | ✅ This shows the direct line between a well-tuned AI strategy (hello, personalization benchmarks!) and your bottom line. It’s not just a cost center; it’s a revenue driver. |
| It’s a continuous process. Benchmarking isn’t a one-and-done project. As one expert puts it, “Continuous evaluation and adjustment are crucial to successful implementation.” | 🔄 The AI landscape, your data, and your competitors are constantly changing. Regular benchmarking ensures you stay agile and don’t get left behind. |

🚀 The AI Benchmarking Revolution: A Brief History and Its Strategic Imperative

Let’s be real for a second. The term “benchmarking” has been around the business world forever. But when you slap “AI” in front of it, everything changes. We’re not just comparing sales figures anymore; we’re measuring the very intelligence of the systems we build.

Historically, AI benchmarks were the domain of academics, focused on tasks like image recognition (remember the ImageNet challenge?) or chess (Deep Blue vs. Kasparov, anyone?). These were about proving what was possible. Today, it’s about proving what’s profitable and practical. As one FIU Business article notes, “Embracing and understanding artificial intelligence (AI) is essential for businesses aiming to prosper in this transformative era.” This isn’t just another tech trend; it’s a fundamental shift in how business is done.

Here at ChatBench.org™, we’ve seen this evolution firsthand. What started as niche LLM Benchmarks has exploded into a critical component of mainstream AI Business Applications. Organizations are no longer asking if they should use AI, but how well they’re using it compared to everyone else. And that, my friends, is where the revolution truly begins.

🤔 What Exactly Is AI Benchmarking, Anyway? Beyond the Buzzword!

Okay, let’s cut through the jargon. At its core, AI benchmarking is a systematic process of measuring your AI models, systems, and strategies against a standard. That standard could be your own past performance, a direct competitor, or an industry-wide best practice.

Think of it like a fitness tracker for your AI. It tells you how fast you’re running (performance), how many calories you’re burning (efficiency), and whether you’re getting stronger over time (improvement). A guide from Comparables.ai defines it perfectly: “Benchmarking analysis is a systematic process used to compare and evaluate an organization’s performance against industry standards or best practices.”

Why AI Performance Metrics Matter for Organizational Growth

Without benchmarks, you’re flying blind. You might feel like your new chatbot is a hit, but how do you know for sure? Benchmarks provide the hard data needed to:

- Identify Performance Gaps: Discover exactly where you’re lagging behind the competition.

- Drive Continuous Improvement: Create a data-driven culture focused on getting better every single day.

- Enhance Competitive Advantage: Uncover what the top players are doing right and adapt those strategies to your own business.

The ChatBench.org™ Perspective: Our Journey with AI Evaluation

We’ll let you in on a little secret. Early in our journey, we developed a sentiment analysis model we were incredibly proud of. It felt revolutionary! But when we finally benchmarked it against an established open-source model, we got a rude awakening. Our accuracy was nearly 10% lower on a standard dataset. Ouch. But that “failure” was the best thing that could have happened. It forced us to go back, refine our Fine-Tuning & Training processes, and ultimately build something far superior. That’s the power of benchmarking: it replaces assumptions with facts.

🎯 Pinpointing Your AI’s Strengths and Weaknesses: Key Areas for Improvement

So, you’re sold on the “why.” But what exactly should you be measuring? Your AI strategy is a complex machine with many moving parts. Benchmarking helps you inspect each one to see if it’s a well-oiled component or a rusty bolt about to break. Here are the eight critical areas we always examine.

1. Model Performance & Accuracy: Are You Hitting the Mark?

This is the most obvious one. How well does your AI do its job? Whether it’s a recommendation engine, a fraud detection system, or a large language model, you need to measure its core competency.

- Key Metrics: Precision, Recall, F1-Score, Accuracy, Mean Absolute Error (MAE), Root Mean Square Error (RMSE).

- Why it Matters: An inaccurate model can be worse than no model at all, leading to bad business decisions, frustrated customers, and lost revenue.
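To make those metric names concrete, here is a minimal sketch in plain Python. The function names and the tiny label lists are our own illustrative assumptions, not output from any real model; in practice you would likely reach for an established metrics library instead of hand-rolling these.

```python
import math

def classification_metrics(y_true, y_pred, positive=1):
    """Precision, recall, F1, and accuracy for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"precision": precision, "recall": recall,
            "f1": f1, "accuracy": (tp + tn) / len(pairs)}

def regression_errors(y_true, y_pred):
    """Mean absolute error and root mean square error."""
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return {"mae": mae, "rmse": rmse}

# Made-up labels and predictions, purely for illustration.
clf = classification_metrics([1, 0, 1, 1, 0, 0, 1, 0],
                             [1, 0, 0, 1, 0, 1, 1, 0])
reg = regression_errors([3.0, 5.0, 2.0], [2.0, 5.0, 4.0])
print(clf)
print(reg)
```

Notice that RMSE comes out higher than MAE on the regression example because squaring punishes the single large miss more heavily, which is exactly why it pays to report both.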

2. Efficiency & Resource Utilization: The Cost of Intelligence

An AI model that’s 99% accurate but costs a fortune to run might not be a winner. Efficiency benchmarks look at the computational resources required to train and run your models.

- Key Metrics: Inference Latency (how fast it gives an answer), Training Time, GPU/CPU Usage, Model Size.

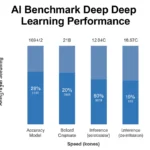

- Why it Matters: Inefficient models drive up operational costs and can be slow to respond, harming the user experience. This is a huge focus of benchmarks like MLPerf.
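As a rough illustration of measuring inference latency, the sketch below times a stand-in `toy_model` function (a hypothetical placeholder for your real predict call) and reports the mean, median, and 95th percentile. It is a single-process, sequential measurement, so treat the throughput figure as a lower-bound estimate and not as a substitute for a standardized suite like MLPerf.

```python
import math
import statistics
import time

def toy_model(text):
    """Hypothetical stand-in for a real model's predict call."""
    return sum(ord(ch) for ch in text) % 2

def measure_latency(predict, inputs, warmup=3):
    # Warm-up calls are excluded so one-time setup costs do not skew results.
    for x in inputs[:warmup]:
        predict(x)
    samples_ms = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    ordered = sorted(samples_ms)
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    total_seconds = sum(samples_ms) / 1000.0
    return {
        "mean_ms": statistics.mean(samples_ms),
        "p50_ms": statistics.median(samples_ms),
        "p95_ms": ordered[p95_index],
        # Sequential, single-worker throughput only.
        "throughput_rps": (len(samples_ms) / total_seconds
                           if total_seconds > 0 else float("inf")),
    }

stats = measure_latency(toy_model, ["sample input %d" % i for i in range(200)])
print(stats)
```

Tail latency (p95) is usually the number to watch: a fast average can hide the slow requests your users actually remember.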

3. Robustness & Reliability: Can Your AI Handle the Real World?

The real world is messy. Data is noisy, inputs are unexpected, and users are unpredictable. A robust AI model can handle this chaos without breaking.

- Key Metrics: Performance on adversarial examples, behavior with missing or corrupted data, consistency of outputs.

- Why it Matters: A brittle model that fails when it sees something new is a liability. Reliability is key to building trust with users and stakeholders.
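One simple way to probe robustness is to corrupt your test inputs and see how far accuracy falls. The sketch below assumes a hypothetical keyword-based `toy_sentiment_model` and a basic "drop random characters" perturbation; both are illustrative stand-ins, and real adversarial testing goes well beyond this.

```python
import random

def toy_sentiment_model(text):
    """Hypothetical stand-in model: positive if the text contains 'good'."""
    return 1 if "good" in text.lower() else 0

def add_typos(text, rate, rng):
    """Simulate noisy input by randomly dropping characters."""
    return "".join(ch for ch in text if rng.random() >= rate)

def accuracy(model, texts, labels):
    return sum(model(t) == y for t, y in zip(texts, labels)) / len(texts)

def robustness_report(model, texts, labels, rate=0.15, seed=0):
    rng = random.Random(seed)  # fixed seed keeps the comparison repeatable
    noisy_texts = [add_typos(t, rate, rng) for t in texts]
    clean_acc = accuracy(model, texts, labels)
    noisy_acc = accuracy(model, noisy_texts, labels)
    return {"clean_accuracy": clean_acc,
            "noisy_accuracy": noisy_acc,
            "accuracy_drop": clean_acc - noisy_acc}

# Tiny made-up evaluation set, for illustration only.
texts = ["This is good", "Really good service",
         "Terrible experience", "Not what I wanted"]
labels = [1, 1, 0, 0]
report = robustness_report(toy_sentiment_model, texts, labels)
print(report)
```

A large `accuracy_drop` at a modest noise rate is a hint that the model is leaning on brittle surface patterns.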

4. Scalability & Deployment: From Prototype to Production Powerhouse

That amazing model you built on your laptop needs to serve thousands, maybe millions, of users. Can your infrastructure handle it?

- Key Metrics: Throughput (requests per second), performance under load, ease of deployment and updates.

- Why it Matters: A model that can’t scale is a science project, not a business solution. Your MLOps pipeline is just as important as the model itself.

5. Responsible AI & Ethics: Fairness, Bias, and Trustworthiness

This is no longer a “nice-to-have.” An AI model that exhibits bias can do real harm to both people and your brand’s reputation.

- Key Metrics: Fairness metrics (e.g., demographic parity, equalized odds), bias detection scores, transparency reports.

- Why it Matters: Unfair AI can lead to discriminatory outcomes, legal trouble, and a complete loss of customer trust. It’s a business-critical issue.
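For a feel of what the two fairness metrics named above actually compute, here is a minimal sketch. Demographic parity compares how often each group receives a positive prediction; equalized odds compares true-positive and false-positive rates across groups. The data and group labels are invented for illustration, and a dedicated toolkit will cover far more cases than this.

```python
def rate(values):
    """Share of 1s in a list of 0/1 values (0.0 for an empty list)."""
    return sum(values) / len(values) if values else 0.0

def group_rates(y_true, y_pred, groups, group):
    rows = [(t, p) for t, p, g in zip(y_true, y_pred, groups) if g == group]
    selection_rate = rate([p for _, p in rows])
    true_positive_rate = rate([p for t, p in rows if t == 1])
    false_positive_rate = rate([p for t, p in rows if t == 0])
    return selection_rate, true_positive_rate, false_positive_rate

def fairness_gaps(y_true, y_pred, groups, group_a, group_b):
    sel_a, tpr_a, fpr_a = group_rates(y_true, y_pred, groups, group_a)
    sel_b, tpr_b, fpr_b = group_rates(y_true, y_pred, groups, group_b)
    return {
        # Gap in how often each group gets a positive prediction.
        "demographic_parity_diff": abs(sel_a - sel_b),
        # Worst-case gap in error behaviour between the groups.
        "equalized_odds_diff": max(abs(tpr_a - tpr_b), abs(fpr_a - fpr_b)),
    }

# Invented example: four applicants in each of two groups.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gaps = fairness_gaps(y_true, y_pred, groups, "a", "b")
print(gaps)
```

Both gaps are 0 for a perfectly balanced model; what counts as an acceptable gap is a policy decision, not something the code can answer for you.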

6. Data Quality & Governance: The Foundation of All AI Success

As we said before, your AI is only as good as your data. Benchmarking your data processes is a crucial, often overlooked, step.

- Key Metrics: Data completeness, accuracy, timeliness, signal-to-noise ratio.

- Why it Matters: Poor data leads to poor models. Period. A robust data strategy is the bedrock of a competitive AI strategy.
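Two of these data metrics, completeness and timeliness, are easy to sketch. The record layout, field names, and 30-day freshness window below are assumptions we made up for the example; adapt them to your own schema.

```python
from datetime import datetime, timedelta

def completeness(records, required_fields):
    """Share of required field values that are present (not None or empty)."""
    total = len(records) * len(required_fields)
    filled = sum(1 for r in records for f in required_fields
                 if r.get(f) not in (None, ""))
    return filled / total if total else 0.0

def timeliness(records, timestamp_field, now, max_age):
    """Share of records whose timestamp falls within the allowed age."""
    fresh = sum(1 for r in records
                if r.get(timestamp_field) is not None
                and now - r[timestamp_field] <= max_age)
    return fresh / len(records) if records else 0.0

# Hypothetical records; a fixed "now" keeps the example deterministic.
now = datetime(2025, 1, 31)
records = [
    {"text": "great", "label": 1, "created_at": now - timedelta(days=1)},
    {"text": "", "label": 0, "created_at": now - timedelta(days=40)},
    {"text": "bad", "label": None, "created_at": now - timedelta(days=2)},
]
completeness_score = completeness(records, ["text", "label"])
timeliness_score = timeliness(records, "created_at", now, timedelta(days=30))
print(completeness_score, timeliness_score)
```

Tracking these scores over time gives you a data-quality benchmark to sit alongside your model benchmarks.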

7. Explainability & Interpretability (XAI): Understanding the “Why”

Can you explain why your AI made a particular decision? In many industries, like finance and healthcare, this is a regulatory requirement.

- Key Metrics: SHAP (SHapley Additive exPlanations) values, LIME (Local Interpretable Model-agnostic Explanations) outputs, feature importance rankings.

- Why it Matters: Black box models are risky. Explainability builds trust, aids in debugging, and ensures accountability.
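SHAP and LIME need their own libraries, so as a lighter illustration of a feature importance ranking, here is a sketch of permutation importance, a different and simpler technique: shuffle one feature at a time and see how much accuracy drops. The `toy_model` and the six-row dataset are hypothetical.

```python
import random

def toy_model(row):
    """Hypothetical model that only looks at feature 0."""
    return 1 if row[0] > 0.5 else 0

def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(X)

def permutation_importance(model, X, y, n_repeats=10, seed=0):
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle column j only, leaving every other feature untouched.
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:]
                      for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Made-up data: two features per row, binary label.
X = [[0.9, 0.1], [0.8, 0.7], [0.2, 0.3],
     [0.1, 0.9], [0.7, 0.5], [0.3, 0.2]]
y = [1, 1, 0, 0, 1, 0]
importances = permutation_importance(toy_model, X, y)
print(importances)
```

Because the toy model ignores feature 1, shuffling it never changes a prediction, so its importance is zero. A real model will rarely be that clean, but the ranking logic is the same.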

8. User Experience & Adoption: Is Your AI Actually Helping People?

You can have the most technically brilliant AI in the world, but if nobody uses it or finds it helpful, it’s a failure.

- Key Metrics: User satisfaction scores (CSAT), task completion rates, adoption rates, user engagement metrics.

- Why it Matters: The ultimate goal of most business AI is to improve the human experience, whether for customers or employees. Don’t lose sight of the people you’re trying to help.

🔍 The AI Benchmarking Process: Your Step-by-Step Guide to Strategic Clarity

Alright, you know what to measure. But how do you actually do it? Following a structured process is key to getting meaningful results. We’ve adapted the classic benchmarking process with an AI-specific spin.

Phase 1: Defining Your Objectives & Scope (What Are We Measuring, Anyway?)

Before you collect a single data point, ask: What business goal are we trying to achieve? Are you trying to improve customer retention, reduce operational costs, or increase sales? Your objective will determine everything that follows.

Phase 2: Selecting the Right Benchmarks & Metrics (No One-Size-Fits-All!)

Once you have your objective, choose the KPIs that align with it. If your goal is to improve your customer service chatbot, you’ll focus on metrics like First Contact Resolution and Customer Satisfaction. If you’re building a stock trading algorithm, you’ll care more about prediction accuracy and latency. This is also where you decide who to benchmark against: a competitor, an industry standard, or your own previous version. Because the same benchmark can be used to compare different AI frameworks and models, picking the right reference point matters as much as picking the right metric.

Phase 3: Data Collection & Preparation (Garbage In, Garbage Out, Right?)

This is the heavy lifting. You need to gather clean, relevant data for both your own systems and your chosen benchmark. This could come from internal logs, customer surveys, financial reports, or public datasets used in industry benchmarks like SuperGLUE.

Phase 4: Execution & Evaluation (Let the AI Models Battle!)

Run the tests! This involves running your AI models on the prepared datasets and carefully recording the performance metrics you defined in Phase 2. Ensure a controlled environment to get a true apples-to-apples comparison. Our Developer Guides often cover the technical nitty-gritty of this phase.
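The "apples-to-apples" idea boils down to one rule: every model sees the same inputs and is scored by the same metric. A minimal harness might look like the sketch below, where the two keyword models and the five-example dataset are hypothetical placeholders for your real systems.

```python
def accuracy(labels, predictions):
    return sum(l == p for l, p in zip(labels, predictions)) / len(labels)

def run_benchmark(models, dataset, metric):
    """Evaluate every model on the identical dataset with the identical metric."""
    inputs = [x for x, _ in dataset]
    labels = [y for _, y in dataset]
    results = {}
    for name, model in models.items():
        predictions = [model(x) for x in inputs]
        results[name] = metric(labels, predictions)
    return results

def baseline_model(text):
    """Hypothetical baseline: positive only if 'good' appears."""
    return 1 if "good" in text else 0

def candidate_model(text):
    """Hypothetical candidate: more keywords plus naive negation handling."""
    positive = any(word in text for word in ("great", "good", "love"))
    return 1 if positive and "not" not in text else 0

# Invented evaluation set, for illustration only.
dataset = [("great product", 1), ("awful support", 0), ("really good", 1),
           ("not good at all", 0), ("love it", 1)]
results = run_benchmark(
    {"baseline": baseline_model, "candidate": candidate_model},
    dataset, accuracy)
print(results)
```

Keeping the dataset and metric inside one function call makes it much harder to accidentally score two models under different conditions.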

Phase 5: Analysis & Interpretation (What Do These Numbers Mean for Us?)

Data is just data until you turn it into insight. Analyze the results to identify performance gaps. Where are you excelling? Where are you falling short? Dig deep to understand the root causes of these gaps. Is your competitor’s model better because they have more data, a better algorithm, or more efficient hardware?
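Gap analysis gets easier once every metric is expressed the same way. The sketch below, using invented numbers and metric names, converts each metric into a relative gap where a positive value always means "we are behind the reference," then ranks the gaps from largest to smallest.

```python
def performance_gaps(ours, reference, higher_is_better):
    """Relative gap per metric; positive means we trail the reference."""
    gaps = {}
    for metric, our_value in ours.items():
        ref_value = reference[metric]
        if higher_is_better[metric]:
            gap = ref_value - our_value
        else:
            gap = our_value - ref_value
        # Normalise by the reference so different units are comparable.
        gaps[metric] = gap / abs(ref_value) if ref_value else gap
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)

# Hypothetical numbers for our model and a competitor's.
ours = {"accuracy": 0.82, "p95_latency_ms": 180.0}
reference = {"accuracy": 0.91, "p95_latency_ms": 150.0}
direction = {"accuracy": True, "p95_latency_ms": False}
ranked = performance_gaps(ours, reference, direction)
print(ranked)
```

In this made-up case the latency gap (20% slower) outranks the accuracy gap (roughly 10% lower), which is a useful prompt for the root-cause questions above.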

Phase 6: Action Planning & Iteration (Time to Make Some Changes!)

This is where the magic happens. Use your insights to develop a concrete action plan. This could involve retraining your model, optimizing your code, investing in better hardware, or even rethinking your entire AI strategy. Then, you implement those changes and… you guessed it… you benchmark again! It’s a continuous cycle of improvement.

📊 Types of AI Benchmarking: Internal, External, and the Quest for Competitive Edge

Not all benchmarking is about staring down your competitors. There are several flavors, each offering unique insights. Understanding them helps you build a more holistic view of your performance.

Internal Benchmarking: Self-Improvement Starts at Home

This involves comparing different teams, projects, or even different versions of the same AI model within your own organization.

- Example: Your US data science team has a fraud detection model with 95% accuracy. Your European team’s model is at 92%. Internal benchmarking helps the EU team learn from the US team’s best practices.

- Benefit: Fosters collaboration and is often easier to execute since you control all the data.

Competitive Benchmarking: Peeking Over the Fence at Your Rivals

This is the classic approach: comparing your AI’s performance directly against your top competitors. This is crucial for understanding your position in the market.

- Example: You run your new language model against a public leaderboard and see how it stacks up against models from Google, OpenAI, and Anthropic. Our Model Comparisons live and breathe this stuff.

- Benefit: Provides a clear picture of your competitive standing and highlights market trends you need to follow.

Industry Standard Benchmarking: Are You Keeping Up with the Joneses?

This involves measuring your performance against established, public benchmarks and best practices for your entire industry.

- Example: A self-driving car company measures its perception system’s accuracy on the nuScenes dataset to see how it compares with published results from the rest of the field.

- Benefit: Helps you set realistic performance goals and ensures you’re not falling behind the broader technological curve.

Ethical & Responsible AI Benchmarking: Beyond Performance, Towards Trust

A newer but critically important category. This focuses on measuring fairness, bias, and transparency, often using specialized toolkits and frameworks.

- Example: Using tools like IBM’s AI Fairness 360 to audit your loan approval algorithm to ensure it doesn’t discriminate based on gender or race.

- Benefit: Mitigates legal and reputational risk, builds customer trust, and frankly, is the right thing to do.

🛠️ Essential Tools & Platforms for AI Benchmarking: Our Top Picks

You don’t have to build your benchmarking infrastructure from scratch. There’s a whole ecosystem of tools out there to help. Here are some of our team’s favorites.

Open-Source Powerhouses: Hugging Face, MLPerf, and More

- Hugging Face: The undisputed king for all things NLP. Their `evaluate` library is a go-to for quickly calculating dozens of standard metrics. Their public leaderboards, like the Open LLM Leaderboard, are the de facto standard for competitive benchmarking.

- MLCommons (MLPerf): If you care about the performance and efficiency of your hardware and software stack, MLPerf is the industry benchmark. It provides standardized tests for training and inference across a variety of AI tasks.

- EleutherAI Language Model Evaluation Harness: A powerful framework for testing LLMs on a massive range of benchmarks, essential for anyone serious about language model development.

Cloud Provider Offerings: AWS, Azure, Google Cloud’s Benchmarking Capabilities

The big three cloud providers have built-in tools to help you monitor and evaluate your models.

- Amazon SageMaker Model Monitor: Automatically monitors your models in production for things like data drift and performance degradation.

- Google Cloud Vertex AI (the successor to AI Platform): Offers a suite of MLOps tools, including model evaluation services that help you understand model performance and explainability.

- Azure Machine Learning: Includes features for responsible AI, such as interpretability and fairness assessment dashboards, which are crucial for modern benchmarking.

Specialized AI Evaluation Frameworks

Beyond the big names, there are specialized startups and platforms focused entirely on AI evaluation and validation. These can offer deeper, more domain-specific insights. Keep an eye on this rapidly growing space!

Ready to run your own benchmarks? Check out these platforms for the compute power you’ll need:

- NVIDIA GPUs on: DigitalOcean | Paperspace | RunPod

- Cloud AI Platforms: AWS SageMaker | Google Cloud AI | Azure ML

🏆 Leveraging AI Benchmarks for a Sustainable Competitive Advantage

Let’s bring it all home. How does this meticulous process of measuring and comparing translate into winning in the market? It’s about turning data into decisions that give you an edge.

Informed Decision-Making: From Guesswork to Data-Driven AI Strategy

Benchmarking replaces “we think” with “we know.” Instead of guessing which AI project to fund, you can allocate resources based on hard data about which models provide the best performance, efficiency, and potential ROI.

Optimizing Resource Allocation: Smarter Spending, Better Results

Are you spending too much on cloud computing for a model that’s only marginally better than a smaller, cheaper one? Benchmarks on efficiency and resource utilization can save you a fortune and allow you to reinvest those savings into other innovations.

Accelerating Innovation: Benchmarks as a Catalyst for Breakthroughs

When your team has a clear, objective target to hit—beating a competitor’s score or reaching an industry standard—it focuses their efforts and sparks creativity. Public leaderboards have been a massive driver of innovation in the AI community for this very reason.

Mitigating Risks: Proactive Problem Solving in AI Development

Benchmarking for things like bias, fairness, and robustness isn’t just about ethics; it’s about risk management. Identifying these issues early, before a model is deployed to millions of users, can save you from regulatory fines, lawsuits, and brand-damaging PR nightmares.

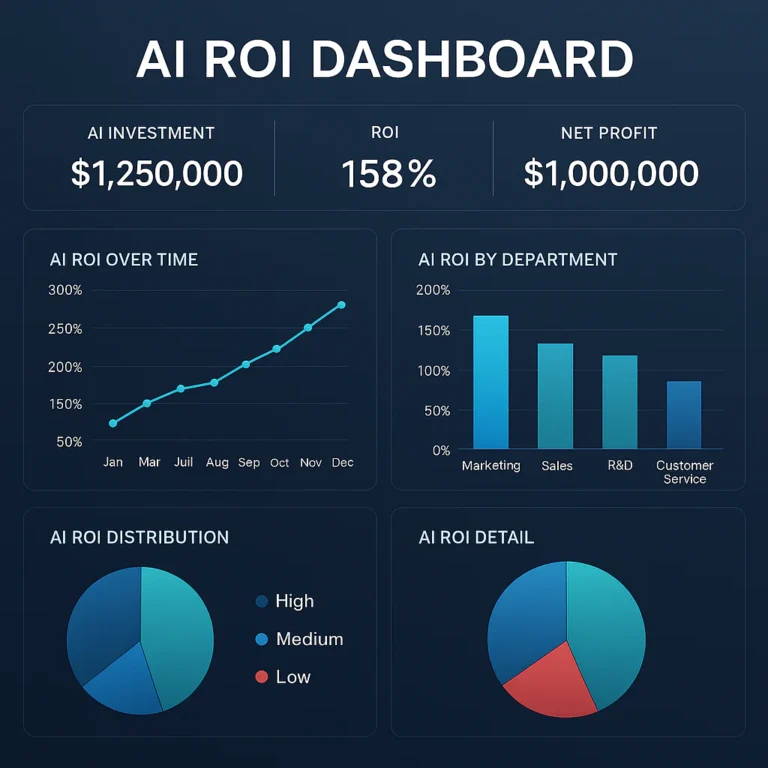

Boosting ROI: Proving the Value of Your AI Investments

Finally, benchmarks give you the language to communicate the value of your AI initiatives to the C-suite. When you can say, “We implemented this new model, and our customer satisfaction benchmark improved by 15%, leading to a 5% reduction in churn,” you’re proving the tangible business impact of your work.

🚧 Common Pitfalls & How to Dodge Them: Our Hard-Earned Lessons

We’ve made a few mistakes along the way so you don’t have to. Benchmarking is powerful, but it’s not foolproof. Here are some common traps to avoid.

❌ The “Shiny Object” Syndrome: Chasing Every New Benchmark

A new benchmark or leaderboard seems to pop up every week. Don’t feel obligated to chase all of them.

- The Fix: Stay focused on the benchmarks and metrics that align with your specific business objectives (see Phase 1 of the process!).

❌ Ignoring Context: Why a High Score Isn’t Always a Win

Your model might top a public leaderboard, but if it’s too slow or expensive for your specific use case, that high score is just a vanity metric.

- The Fix: Always evaluate performance in the context of your production environment and business constraints. A holistic view is key.

❌ Data Drift & Model Decay: The Silent Killers of AI Performance

The world changes, and so does your data. A model benchmarked six months ago may be performing much worse today due to “data drift.”

- The Fix: Implement continuous monitoring and regular re-benchmarking of your models in production. As the experts behind the featured video in this article point out, “AI generates real-time benchmarks by continuously updating performance metrics,” a practice essential for staying relevant.
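One common way to put a number on data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a reference sample versus a current one. The sketch below is a minimal version with equal-width bins; the bin count, the smoothing constant, and the sample data are our own assumptions, and the alert threshold you choose is a judgment call.

```python
import math

def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor at eps so empty bins do not cause log(0).
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))

# Invented samples: the "current" data has shifted upward by 0.3.
reference_sample = [i / 100 for i in range(100)]
shifted_sample = [0.3 + i / 100 for i in range(100)]
print(psi(reference_sample, reference_sample))
print(psi(reference_sample, shifted_sample))
```

Identical samples score zero and the score grows as the distributions pull apart, so a rising PSI on key input features is a cue to re-benchmark before performance quietly decays.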

❌ Lack of Clear Objectives: Benchmarking Without a North Star

If you don’t know why you’re benchmarking, you’ll drown in data without gaining any real insight.

- The Fix: Never start a benchmarking project without a clear, measurable business goal.

❌ Over-Reliance on Single Metrics: The Danger of Tunnel Vision

Focusing solely on accuracy can hide other problems, like severe bias against a particular user group or terrible latency.

- The Fix: Use a balanced scorecard of metrics that covers performance, efficiency, fairness, and robustness to get a complete picture of your AI’s health.

💡 Real-World Impact: Case Studies of Organizations Crushing It with AI Benchmarks

Theory is great, but what does this look like in practice?

Google’s AI Research: Pushing the Boundaries with Public Benchmarks

Companies like Google and Meta constantly publish research where they benchmark new models against existing state-of-the-art (SOTA) results on public datasets. This not only validates their work but pushes the entire industry forward by setting a new bar for everyone else to clear.

Financial Services: Enhancing Fraud Detection with Continuous Evaluation

A major bank we know of uses a “challenger model” framework. Their primary fraud detection model is constantly benchmarked against several “challenger” models in a live environment. If a challenger consistently outperforms the champion on key metrics like precision and recall, it gets promoted, ensuring their system is always evolving and improving.
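We don’t know how that bank implements its promotion rule, but the idea can be sketched simply: promote the challenger only if it beats the champion by a minimum margin across several consecutive evaluation windows. The margin, the streak length, and the score histories below are all hypothetical.

```python
def should_promote(champion_scores, challenger_scores,
                   min_margin=0.01, required_streak=3):
    """True if the challenger won each of the most recent windows by min_margin."""
    if len(champion_scores) != len(challenger_scores):
        raise ValueError("score histories must cover the same windows")
    if len(champion_scores) < required_streak:
        return False  # not enough evidence yet
    recent = list(zip(champion_scores, challenger_scores))[-required_streak:]
    return all(challenger - champion >= min_margin
               for champion, challenger in recent)

# Hypothetical weekly F1 scores for each model.
champion_f1 = [0.90, 0.91, 0.90, 0.89]
steady_challenger_f1 = [0.89, 0.93, 0.92, 0.92]
erratic_challenger_f1 = [0.95, 0.95, 0.90, 0.95]

promote_steady = should_promote(champion_f1, steady_challenger_f1)
promote_erratic = should_promote(champion_f1, erratic_challenger_f1)
print(promote_steady, promote_erratic)
```

Requiring a streak instead of a single win is what turns "outperforms" into "consistently outperforms," and it guards against promoting a model on one lucky week.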

Healthcare: Improving Diagnostic Accuracy Through Rigorous Benchmarking

AI models that read medical scans (like MRIs or X-rays) undergo some of the most rigorous benchmarking in the world. They are tested against datasets annotated by multiple expert radiologists to ensure their diagnostic accuracy is on par with, or even superior to, human experts before they are ever considered for clinical use. This process is critical for patient safety and regulatory approval from bodies like the FDA.

🔮 The Future of AI Benchmarking: What’s Next on the Horizon?

The world of AI evaluation is moving at lightning speed. Here’s what our team at ChatBench.org™ is keeping a close eye on.

Towards Holistic AI Evaluation: Beyond Pure Performance

The future isn’t just about who has the highest accuracy score. It’s about a more holistic view of a model’s capabilities. We’re seeing the rise of benchmarks that measure things like a model’s reasoning ability, its resistance to manipulation, and its ethical alignment.

Automated Benchmarking & MLOps Integration: The Dream of Seamless Evaluation

Imagine a world where every time a developer commits new code, a full suite of performance, efficiency, and fairness benchmarks are automatically run, and the results are delivered right back to them. This deep integration into the MLOps pipeline is the future, making continuous evaluation a seamless part of the development lifecycle.
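A small step toward that dream is a threshold gate your CI job can run after the benchmark suite finishes. The sketch below assumes benchmark results arrive as a plain dictionary; the metric names and limits are hypothetical, and wiring it into a specific CI system is left out.

```python
def check_thresholds(results, thresholds):
    """Return human-readable failures; an empty list means the gate passes."""
    failures = []
    for metric, (kind, limit) in thresholds.items():
        value = results.get(metric)
        if value is None:
            failures.append(f"{metric}: missing from results")
        elif kind == "min" and value < limit:
            failures.append(f"{metric}: {value} is below minimum {limit}")
        elif kind == "max" and value > limit:
            failures.append(f"{metric}: {value} is above maximum {limit}")
    return failures

# Hypothetical limits covering performance, efficiency, and fairness.
thresholds = {
    "accuracy": ("min", 0.90),
    "p95_latency_ms": ("max", 200.0),
    "demographic_parity_diff": ("max", 0.10),
}
# Hypothetical results from the latest commit's benchmark run.
results = {"accuracy": 0.93, "p95_latency_ms": 240.0,
           "demographic_parity_diff": 0.04}

failures = check_thresholds(results, thresholds)
# A CI job would exit with a non-zero status when this is 1.
exit_code = 1 if failures else 0
for line in failures:
    print("FAILED", line)
```

Here the commit improves accuracy but blows the latency budget, so the gate fails. That is the balanced-scorecard idea enforced automatically instead of by memory.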

The Rise of Synthetic Data for Benchmarking: New Frontiers

What happens when you don’t have enough real-world data to test for rare “edge cases”? The answer is synthetic data. We’re seeing incredible advances in using generative AI to create realistic, challenging test datasets that can probe for model weaknesses in ways that were previously impossible.

✅ Your Action Plan: How to Implement AI Benchmarking Today

Feeling inspired? Here’s a simple, four-step plan to get started right now.

- Start Small: You don’t need to benchmark your entire AI portfolio at once. Pick one critical AI model or system that has a direct impact on a key business metric.

- Define One Clear Objective: What is the single most important thing you want to improve? Is it response time for your chatbot? Accuracy for your recommendation engine? Write it down.

- Choose Your Benchmark: Select a simple benchmark to start. It could be an internal one (last quarter’s performance) or an external one (a well-known open-source model).

- Measure, Analyze, Act, Repeat: Follow the process. Gather the data, analyze the gap, make one or two targeted changes, and then measure again to see if you moved the needle. Congratulations, you’re now in the continuous improvement loop!

Conclusion: Don’t Just Build AI, Build Better AI!

Phew! That was quite the journey through the fascinating world of AI benchmarking. If you started this article wondering, “How can organizations use AI benchmarks to identify areas for improvement and stay competitive?” — now you have a clear roadmap.

AI benchmarking is not just a technical exercise; it’s a strategic imperative. It transforms guesswork into insight, assumptions into data, and isolated efforts into continuous improvement cycles that keep your AI strategy sharp and market-ready.

From measuring core model performance and efficiency to tackling the thorny issues of fairness and explainability, benchmarking covers all the bases. It helps you spot where your AI shines and where it stumbles, so you can make targeted improvements that matter.

Remember our ChatBench.org™ story about the sentiment analysis model? That initial “failure” was a blessing in disguise. It’s a perfect example of how benchmarking replaces ego with evidence — and that’s the secret sauce to building truly competitive AI.

So, whether you’re a startup launching your first AI-powered product or a Fortune 500 company refining a sprawling AI portfolio, embracing benchmarking is your ticket to sustainable success.

Now go forth, measure boldly, improve relentlessly, and watch your AI strategy soar! 🚀

Recommended Links

Ready to dive deeper or get hands-on with some of the tools and resources we mentioned? Here are some curated links to help you get started:

Benchmarking & AI Tools Platforms

- Hugging Face: Hugging Face Official Website

- MLPerf: MLCommons Official Site

- EleutherAI Evaluation Harness: GitHub Repository

- Amazon SageMaker: Amazon SageMaker on AWS

- Google Cloud Vertex AI: Google Cloud AI Platform

- Azure Machine Learning: Azure ML Official Site

Compute Platforms for Benchmarking

- DigitalOcean GPU Instances: DigitalOcean GPU Cloud

- Paperspace GPU Cloud: Paperspace

- RunPod GPU Cloud: RunPod

Recommended Books on AI Strategy & Benchmarking

- AI Superpowers by Kai-Fu Lee — Amazon Link

- Prediction Machines: The Simple Economics of Artificial Intelligence by Ajay Agrawal, Joshua Gans, and Avi Goldfarb — Amazon Link

- Human Compatible: Artificial Intelligence and the Problem of Control by Stuart Russell — Amazon Link

FAQ: Your Burning Questions About AI Benchmarking Answered

What are the key AI benchmarks organizations should track to enhance their AI strategy?

Organizations should focus on a balanced set of benchmarks covering:

- Model Performance: Accuracy, precision, recall, F1-score, depending on the task.

- Efficiency: Inference latency, training time, resource utilization.

- Robustness: Ability to handle noisy or adversarial data.

- Fairness and Ethics: Bias detection metrics and fairness audits.

- Explainability: Metrics that assess how interpretable the model’s decisions are.

- User Experience: Adoption rates, satisfaction scores, and engagement metrics.

Tracking these ensures a comprehensive understanding of AI effectiveness beyond just raw accuracy, aligning AI outputs with business goals and ethical standards.

How do AI benchmarks help companies measure the effectiveness of their AI initiatives?

Benchmarks provide objective, quantifiable data that allow companies to:

- Identify performance gaps relative to competitors or industry standards.

- Evaluate the impact of new AI models or updates before full deployment.

- Monitor ongoing model health to detect issues like data drift or bias.

- Communicate AI value to stakeholders with clear metrics tied to business outcomes.

- Prioritize improvements based on evidence rather than intuition.

This structured measurement turns AI from a black box into a transparent, manageable asset.

In what ways can benchmarking AI performance drive innovation and market competitiveness?

Benchmarking fosters innovation by:

- Setting clear performance targets that motivate teams to push boundaries.

- Highlighting best practices and emerging trends from industry leaders.

- Encouraging experimentation through iterative testing and evaluation.

- Accelerating time-to-market by identifying bottlenecks early.

- Reducing risk by detecting ethical or operational issues before deployment.

Companies that benchmark effectively can adapt faster and deliver superior AI-powered products, gaining a sustainable competitive edge.

How can businesses leverage AI benchmarking data to prioritize technology investments?

Benchmarking data reveals where AI investments yield the highest returns by:

- Highlighting underperforming models or systems that need upgrades.

- Identifying resource inefficiencies that can be optimized for cost savings.

- Showing which AI capabilities most directly impact customer satisfaction or revenue.

- Informing decisions on hardware upgrades, cloud services, or talent acquisition.

- Supporting business cases for AI projects with concrete performance evidence.

By aligning investments with benchmark insights, companies maximize ROI and avoid costly missteps.

Additional FAQs

How often should organizations perform AI benchmarking?

Continuous benchmarking is ideal, especially for models in production. Regular intervals (monthly or quarterly) help catch performance degradation early. Automated monitoring tools like Amazon SageMaker Model Monitor or Google Cloud’s Vertex AI can facilitate this.

Can benchmarking help with regulatory compliance?

Absolutely. Benchmarks on fairness, explainability, and robustness support compliance with emerging AI regulations (e.g., EU AI Act). They provide documented evidence that AI systems meet required standards.

What role does data quality play in AI benchmarking?

Data quality is foundational. Poor data leads to misleading benchmarks and flawed conclusions. Organizations must invest in data governance, cleaning, and validation to ensure benchmarking results are reliable.

Reference Links

- The Competitive Advantage of Using AI in Business | FIU College of Business

- MLCommons MLPerf Benchmarking

- Hugging Face Official Site

- IBM AI Fairness 360 Toolkit

- FDA on AI/ML Software as a Medical Device

- EleutherAI Language Model Evaluation Harness

- Amazon SageMaker Model Monitor

- Google Cloud Vertex AI

- Azure Machine Learning

We hope this comprehensive guide empowers you to harness AI benchmarking as a powerful lever for growth and innovation. For more expert insights, visit ChatBench.org™. Happy benchmarking!