
🚀 12 Essential KPIs to Assess AI Models (2026)

You wouldn’t fly a plane without checking the instruments, so why would you deploy a billion-dollar AI model without a dashboard? At ChatBench.org™, we’ve seen brilliant algorithms crash and burn simply because teams focused on the wrong metrics. The truth is, accuracy alone is a trap for modern generative AI; if your model is 99% accurate but hallucinates a medical diagnosis or drains your budget with inefficient token usage, it’s a failure. In this deep dive, we reveal the 12 critical performance indicators that separate successful AI initiatives from expensive experiments, including a shocking case study on how we built a music video with Weezer using AI while tracking every single dollar of compute cost.

Key Takeaways

- Holistic Measurement is Non-Negotiable: Successful AI assessment requires a balanced framework covering Model Quality, System Reliability, Business Value, and Ethical Fairness.

- Gen AI Demands New Metrics: Traditional accuracy scores fail for unbounded outputs; you must track hallucination rates, prompt adherence, and creativity scores.

- Cost & Efficiency Matter: Beyond performance, monitor cost per inference, token efficiency, and energy consumption to ensure your AI is financially sustainable.

- Human-in-the-Loop is Vital: Even the best auto-raters need calibration; track human intervention rates and user satisfaction (NPS) to maintain trust.

- Future-Proof Your Strategy: As we move toward Agentic AI in 2026, focus on goal completion rates and reasoning quality rather than just output generation.

Table of Contents

- ⚡️ Quick Tips and Facts

- 🕰️ A Brief History of AI Evaluation: From Turing to Transformers

- 🎯 The Core Framework: Categorizing AI Performance Metrics

- 🧠 1. Model Quality KPIs: The Brainpower Benchmarks

- Accuracy, Precision, Recall, and F1 Score: The Holy Quartet

- Latency and Throughput: Speed Matters More Than You Think

- Hallucination Rates and Factuality Scores

- Token Efficiency and Context Window Utilization

- 🛠️ 2. System Quality KPIs: The Engine Room Metrics

- Uptime, Reliability, and Mean Time Between Failures (MTBF)

- Scalability and Concurrency Limits

- Security Vulnerabilities and Adversarial Robustness

- Energy Consumption and Carbon Footprint per Inference

- 💼 3. Business Operational KPIs: The Efficiency Equation

- Cost Per Inference and Total Cost of Ownership (TCO)

- Human-in-the-Loop Intervention Rates

- Process Automation Rates and Cycle Time Reduction

- Data Drift Detection and Model Re-training Frequency

- 🚀 4. Adoption KPIs: Are People Actually Using It?

- Daily Active Users (DAU) and Retention Rates

- User Satisfaction Scores (CSAT) and Net Promoter Score (NPS)

- Feature Utilization and Engagement Depth

- 💰 5. Business Value KPIs: The Bottom Line Impact

- Return on Investment (ROI) and Revenue Attribution

- Customer Lifetime Value (CLV) Enhancement

- Risk Mitigation and Compliance Cost Savings

- 🤖 6. Gen AI Specific KPIs: Navigating the Generative Frontier

- Creativity Scores and Diversity of Outputs

- Prompt Adherence and Instruction Following Accuracy

- Tone Consistency and Brand Voice Alignment

- 🏗️ 7. Putting KPIs for Gen AI to Work: A Real-World Blueprint

- Case Study: Building a Music Video with AI and Weezer

- Scaling from 302 Real-World Gen AI Use Cases

- Insights to Build Your Agentic AI Advantage in 2026

- Debunking the Infinite Capacity Myth: AI and Cloud Rules

- 🧪 8. Advanced Evaluation Techniques: Beyond the Basics

- A/B Testing and Multi-Armed Bandits for Model Selection

- Human vs. AI Evaluation: The Gold Standard Debate

- Benchmarking Against Industry Standards (MMLU, HELM, BIG-Bench)

- ⚖️ 9. Ethical KPIs: Measuring Fairness and Bias

- Demographic Parity and Equalized Odds

- Explainability Scores and Interpretability Metrics

- 🔮 Conclusion

- 🔗 Recommended Links

- ❓ FAQ

- 📚 Reference Links

⚡️ Quick Tips and Facts

Welcome to the wild, wonderful world of AI evaluation! Here at ChatBench.org™, we’ve seen countless AI initiatives soar and, well, some… not so much. The difference? Knowing what to measure and how to measure it. You wouldn’t drive a car without a dashboard, right? So why would you deploy an AI model without a robust set of Key Performance Indicators (KPIs)? It’s like trying to hit a moving target blindfolded!

Here are some quick, hard-hitting facts and tips from our team to get you started on your journey to mastering artificial intelligence evaluation:

- You Can’t Manage What You Don’t Measure: This isn’t just a catchy phrase; it’s the bedrock of successful AI deployment. Standard computation-based metrics often fall short for the “unbounded outputs” of generative AI, which demand a more nuanced, multi-dimensional approach.

- Holistic is the Holy Grail: Relying on a single metric is a recipe for disaster. A comprehensive assessment requires a blend of model quality, system performance, business operations, user adoption, and financial value KPIs. Think of it as a symphony: every instrument (KPI) plays a crucial role!

- Context is King (and Queen!): Improving one KPI can move another in the wrong direction. For instance, a highly engaging chatbot could lengthen “time-to-cart” (a slower path to checkout) while simultaneously boosting “cart size.” It’s a delicate balance, and understanding these trade-offs is key.

- Start Early, Iterate Often: Define your KPIs before you even launch your first AI use case. This creates a continuous feedback loop, allowing for constant refinement and optimization. Don’t wait until you’re lost in the data wilderness!

- Human Touch Still Matters: While AI-powered “auto-raters” are gaining traction, human evaluation remains critical, especially for calibrating those auto-raters and assessing subjective qualities like creativity and safety. We’re not quite ready to hand over all the reins.

- Data Governance is Your Best Friend: Clean, consistent, and well-governed data is the foundation for reliable measurement. Without it, your models might inherit biases or flaws, rendering your KPIs meaningless. Garbage in, garbage out, as they say!

- The Future is Agentic: As AI agents become more sophisticated, simple “thumbs up/down” feedback won’t cut it. We’ll need advanced frameworks to assess complex reasoning and tool usage. The game is always evolving!

For a deeper dive into the nuances of assessing your AI, check out our comprehensive guide on Artificial intelligence evaluation. We promise, it’s a page-turner!

🕰️ A Brief History of AI Evaluation: From Turing to Transformers

Remember the good old days? Back when “AI evaluation” mostly meant asking if a machine could fool a human into thinking it was, well, human? That’s the Turing Test for you, a brilliant concept from Alan Turing in 1950. It was a groundbreaking thought experiment, but let’s be honest, it’s a bit quaint now, isn’t it?

Fast forward a few decades, and the rise of machine learning brought a new era of evaluation. Suddenly, we weren’t just asking “is it human-like?”; we were asking “is it accurate?” and “how often does it make mistakes?”. Metrics like accuracy, precision, and recall became the bread and butter for assessing everything from spam filters to image classifiers. These were great for bounded outputs: tasks where the answer was finite and easily verifiable, like “is this a cat or a dog?” or “is this email spam?”.

But then came the Transformers, a revolutionary neural network architecture that truly unleashed the power of generative AI.

Suddenly, our AI models weren’t just classifying; they were creating! Text, code, images, music: the outputs became “unbounded,” diverse, and often highly subjective. This paradigm shift broke our old evaluation tools. How do you measure the “creativity” of a poem generated by an LLM? Or the “fluency” of a conversation?

At ChatBench.org™, we’ve witnessed this evolution firsthand. We’ve moved from meticulously counting misclassifications to grappling with the philosophical implications of AI-generated content. The journey from Turing’s parlor trick to today’s sophisticated, multi-dimensional KPI frameworks has been nothing short of exhilarating. It’s a testament to how quickly the field of AI is advancing, and why our evaluation strategies must evolve just as rapidly. The challenge now isn’t just building intelligent systems, but intelligently assessing artificial intelligence models in all their complex glory.

🎯 The Core Framework: Categorizing AI Performance Metrics

Alright, let’s get down to brass tacks. You wouldn’t judge a chef solely on how quickly they chop onions, right? You’d consider the taste of the dish, the cleanliness of the kitchen, the customer’s satisfaction, and ultimately, whether the restaurant makes a profit. Similarly, assessing AI requires a holistic view, looking beyond just raw computational power. “Organizations often confuse operational efficiency with end-state goals,” and that’s a trap we want you to avoid.

Our expert team at ChatBench.org™ advocates for a multi-dimensional framework that covers every angle of your AI initiative. We’ve distilled the wisdom from leading industry players like Google Cloud and Acacia Advisors to bring you a comprehensive approach to AI model assessment.

Here’s our core framework for categorizing AI performance metrics:

| KPI Category | Primary Focus | Why It Matters |
|---|---|---|
| Model Quality KPIs | The intrinsic performance, accuracy, and output quality of the AI model. | Ensures the AI is “smart” and delivers correct, relevant, and safe outputs. |
| System Quality KPIs | The operational efficiency, reliability, and scalability of the AI infrastructure. | Guarantees the AI runs smoothly, consistently, and can handle demand. |
| Business Operational KPIs | The impact of AI on specific business processes and workflows. | Measures how AI streamlines operations, reduces manual effort, and improves efficiency. |
| Adoption KPIs | User engagement, satisfaction, and acceptance of the AI solution. | Determines if people are actually using and benefiting from the AI. |
| Business Value KPIs | The tangible financial and strategic impact of AI on the organization. | Quantifies ROI, revenue generation, cost savings, and competitive advantage. |
| Gen AI Specific KPIs | Unique metrics for evaluating generative AI’s creative and contextual abilities. | Addresses the specific challenges and opportunities of unbounded AI outputs. |
| Ethical KPIs | Fairness, bias, transparency, and responsible deployment of AI. | Ensures the AI is developed and used responsibly, fostering trust and avoiding harm. |

This framework acts as your AI dashboard, providing a clear, actionable view of your models’ health and impact. By balancing these perspectives, you can move beyond mere “experiments” and truly drive AI business applications forward.

🧠 1. Model Quality KPIs: The Brainpower Benchmarks

This is where we get into the nitty-gritty of how “smart” your AI actually is. Think of these as the IQ tests for your artificial intelligence models. They tell you how well your model understands, processes, and generates information. For us at ChatBench.org™, this is the foundation upon which all other success is built. If your model isn’t performing well here, the rest of your KPIs will likely follow suit!

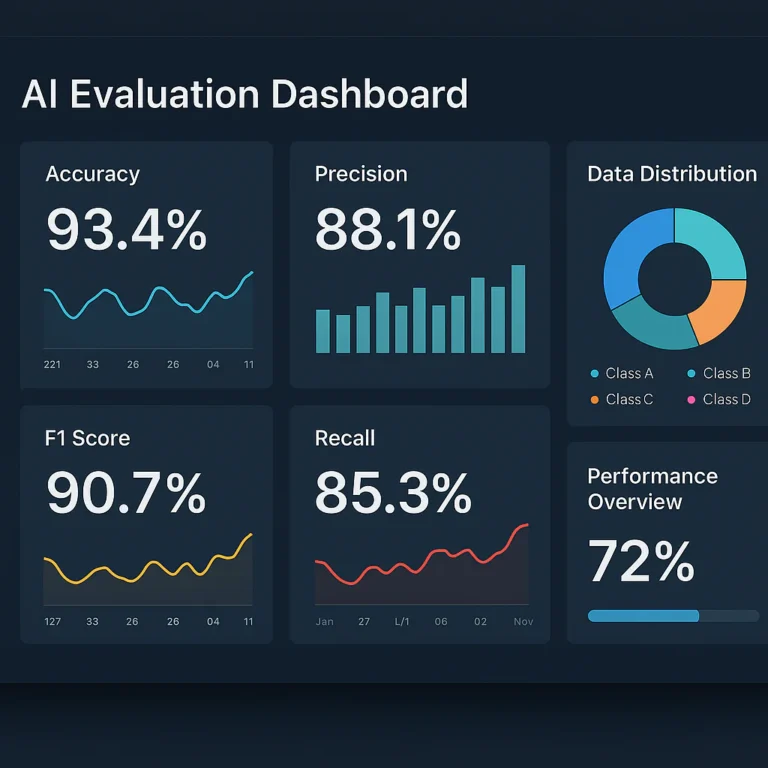

Accuracy, Precision, Recall, and F1 Score: The Holy Quartet

For traditional machine learning tasks, especially those with bounded outputs like classification or prediction, these four metrics are your best friends. They’re critical for evaluating the correctness of your AI’s decisions.

- Accuracy: The simplest one! It’s the percentage of total predictions your model got right.
  - ✅ Great for balanced datasets where all classes are equally important.
  - ❌ Can be misleading with imbalanced datasets (e.g., if 99% of emails are not spam, a model that always predicts “not spam” would have 99% accuracy but be useless).

- Precision: Out of all the positive predictions your model made, how many were actually correct? It’s about minimizing false positives. In retrieval terms, it captures the “relevance of surfaced items to the query.”
  - Example: In a medical diagnosis AI, high precision means fewer healthy people are wrongly told they have a disease.

- Recall: Out of all the actual positive cases, how many did your model correctly identify? It’s about minimizing false negatives. In retrieval terms, it is the “percentage of all relevant items captured by the model.”
  - Example: In the same medical AI, high recall means fewer sick people are wrongly told they are healthy.

- F1 Score: The harmonic mean of precision and recall, 2 × (Precision × Recall) / (Precision + Recall). Because a harmonic mean is pulled down by whichever of the two is lower, a model can’t earn a high F1 by excelling at only one of them. It’s particularly useful when you need to balance false positives against false negatives.
  - ✅ A great single metric when both precision and recall are important.

ChatBench.org™ Anecdote: We once worked on a fraud detection system where the client initially focused solely on accuracy. While the model seemed great, it was missing a significant number of actual fraud cases (low recall) because fraud instances were rare. By shifting our focus to a balanced F1 score, we developed a much more effective and trustworthy system. It’s a classic example of why context matters!
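To make the definitions above concrete, here’s a minimal Python sketch that computes all four metrics from confusion-matrix counts and reproduces the spam example. The `ConfusionCounts` class, the `classification_metrics` helper, and every count are illustrative assumptions rather than data from a real system; libraries such as scikit-learn offer equivalent, battle-tested functions.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts from a binary classifier's confusion matrix (hypothetical data)."""
    tp: int  # true positives: positive cases flagged as positive
    fp: int  # false positives: negative cases wrongly flagged as positive
    fn: int  # false negatives: positive cases the model missed
    tn: int  # true negatives: negative cases correctly left alone


def classification_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Accuracy, precision, recall, and F1; undefined ratios fall back to 0.0."""
    total = c.tp + c.fp + c.fn + c.tn
    accuracy = (c.tp + c.tn) / total
    precision = c.tp / (c.tp + c.fp) if (c.tp + c.fp) else 0.0
    recall = c.tp / (c.tp + c.fn) if (c.tp + c.fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical inbox: 1,000 emails, 10 of them spam.
# A "model" that always predicts "not spam" catches none of the spam.
always_not_spam = ConfusionCounts(tp=0, fp=0, fn=10, tn=990)
# A model that flags 10 emails, 8 of them correctly.
useful_model = ConfusionCounts(tp=8, fp=2, fn=2, tn=988)

print(classification_metrics(always_not_spam))
print(classification_metrics(useful_model))
```

The always-“not spam” model posts 99% accuracy yet zero recall and zero F1, which is exactly the trap the fraud anecdote describes.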

Latency and Throughput: Speed Matters More Than You Think

Imagine asking a question to an AI assistant and waiting an eternity for a response. Frustrating, right? That’s why latency and throughput are crucial, especially for real-time applications.

- Latency: This is the time delay between a request being sent to your AI model and the response being received, i.e., the “time to process a request and generate a response.”
  - Target Latency: What’s an acceptable delay for your users? For a chatbot, milliseconds matter. For a batch processing job, hours might be fine.

- Throughput: This measures the volume of requests or information your AI system can handle within a specific period: the “volume of requests handled per unit of time” or the “volume of tokens processed per unit of time.”
  - Request Throughput: How many user queries can your model answer per second?
  - Token Throughput: Especially for Large Language Models (LLMs), this is critical. How many tokens (words or sub-word units) can your model process and generate per second? This directly impacts the cost and speed of generative AI.

Why are these important? High latency leads to poor user experience and abandonment. Low throughput means your system can’t scale to meet demand, leading to “HTTP 429 errors” (too many requests) and frustrated users. Optimizing these often involves careful AI infrastructure design and resource allocation.
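Here’s a minimal sketch of how you might turn a request log into latency and throughput KPIs. It assumes you already record each request’s end-to-end latency and generated token count; the `RequestRecord` type, the nearest-rank percentile method, and the sample numbers are our own illustrative choices, not part of any specific monitoring tool.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    latency_s: float    # seconds from request sent to response received
    output_tokens: int  # tokens generated for this request


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, with pct in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(records: list[RequestRecord], window_s: float) -> dict[str, float]:
    """Latency percentiles plus request and token throughput over a time window."""
    latencies = [r.latency_s for r in records]
    return {
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        "requests_per_s": len(records) / window_s,
        "tokens_per_s": sum(r.output_tokens for r in records) / window_s,
    }


# Hypothetical log: 10 requests observed over a 5-second window.
observed = [0.20, 0.25, 0.22, 0.30, 0.18, 0.24, 0.90, 0.21, 0.27, 0.23]
log = [RequestRecord(latency_s=s, output_tokens=100) for s in observed]
print(summarize(log, window_s=5.0))
```

We report a tail percentile alongside the median because one slow request (0.90 s here) barely moves p50 but is exactly what users notice; that’s a design choice on our part, not something the definitions above prescribe.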


Hallucination Rates and Factuality Scores

Ah, the bane of many a generative AI! Hallucinations occur when an AI model generates information that is plausible-sounding but factually incorrect or entirely fabricated. This is a massive concern for applications requiring high accuracy and trustworthiness.

- Hallucination Rate: The percentage of generated outputs that contain factually incorrect or unsupported information. Measuring this often requires human evaluation or advanced “groundedness” checks against a reliable knowledge base.

- Factuality Score: A more nuanced metric that assesses the overall factual accuracy of a generated response, often on a scale (e.g., 0-5).

ChatBench.org™ Insight: We’ve seen companies deploy Gen AI for internal knowledge bases, only to pull them back due to rampant hallucinations. It’s a classic example of the “infinite capacity myth”: just because an AI *can* generate text doesn’t mean it’s always correct. For any application where truthfulness is paramount, these KPIs are non-negotiable.
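Detecting a hallucination is the hard part and usually falls to human reviewers or grounded auto-raters; once those labels exist, rolling them up into the two KPIs above is simple. This sketch assumes a hypothetical `ReviewedOutput` record holding a reviewer’s yes/no judgment and a 0-5 factuality rating; it aggregates labels and does not detect anything by itself.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReviewedOutput:
    output_id: str
    has_unsupported_claim: bool  # reviewer found a fabricated or ungrounded claim
    factuality_score: int        # reviewer rating on a 0-5 scale


def hallucination_rate(reviews: list[ReviewedOutput]) -> float:
    """Share of reviewed outputs containing at least one unsupported claim."""
    return sum(r.has_unsupported_claim for r in reviews) / len(reviews)


def mean_factuality(reviews: list[ReviewedOutput]) -> float:
    """Average 0-5 factuality rating, rejecting out-of-range scores."""
    for r in reviews:
        if not 0 <= r.factuality_score <= 5:
            raise ValueError(f"{r.output_id}: score {r.factuality_score} not in 0-5")
    return mean(r.factuality_score for r in reviews)


# Hypothetical labels from a human (or calibrated auto-rater) review pass.
batch = [
    ReviewedOutput("a1", False, 5),
    ReviewedOutput("a2", True, 2),
    ReviewedOutput("a3", False, 4),
    ReviewedOutput("a4", False, 5),
]
print(hallucination_rate(batch), mean_factuality(batch))
```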

Token Efficiency and Context Window Utilization

These are the unsung heroes of LLM performance and cost optimization.

- Token Efficiency: How effectively does your model use its input tokens to generate meaningful output? Less efficient models might use more tokens to convey the same information, leading to higher costs and slower responses.

- Context Window Utilization: LLMs have a limited “context window,” the amount of text they can consider at once.
  - Optimal Utilization: Are you feeding your model just enough information to do its job, or are you wasting valuable context space (and computational resources) on irrelevant data?
  - Over-utilization: Pushing too much into the context window can lead to “context stuffing,” where the model struggles to focus on the most important information.

Why care? Every token processed by a large language model costs money and computational power. Optimizing token efficiency and context window utilization is directly tied to the cost per inference and overall TCO of your Gen AI solution. It’s a critical aspect of turning AI insight into competitive edge by making your operations more lean and effective.
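A quick back-of-the-envelope calculation shows why prompt trimming matters. This sketch assumes per-million-token pricing with separate input and output rates, which is how many LLM APIs bill; the prices, the 128,000-token context window, and the token counts are all hypothetical placeholders, so substitute your provider’s actual numbers.

```python
from dataclasses import dataclass


@dataclass
class TokenPricing:
    """Hypothetical per-million-token prices; substitute your provider's rates."""
    input_usd_per_million: float
    output_usd_per_million: float


def request_cost_usd(input_tokens: int, output_tokens: int, price: TokenPricing) -> float:
    """Cost of one request given its token counts."""
    return (input_tokens * price.input_usd_per_million
            + output_tokens * price.output_usd_per_million) / 1_000_000


def context_utilization(input_tokens: int, context_window: int) -> float:
    """Fraction of the model's context window consumed by the prompt."""
    return input_tokens / context_window


pricing = TokenPricing(input_usd_per_million=1.00, output_usd_per_million=4.00)
WINDOW = 128_000  # assumed context window size, in tokens

# Same 500-token answer, produced from a stuffed prompt vs. a trimmed one.
for label, prompt_tokens in [("stuffed", 6_000), ("trimmed", 1_500)]:
    cost = request_cost_usd(prompt_tokens, 500, pricing)
    util = context_utilization(prompt_tokens, WINDOW)
    print(f"{label}: ${cost:.4f} per request, {util:.1%} of context used")
```

At a million requests a month, trimming the prompt would cut this hypothetical bill from about $8,000 to $3,500 for the same answers.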

🛠️ 2. System Quality KPIs: The Engine Room Metrics

Think of your AI model as the brilliant chef, but the system quality KPIs are all about the kitchen itself: the stoves, refrigerators, and plumbing. Without a robust, reliable, and scalable kitchen, even the best chef can’t deliver. These metrics are crucial for ensuring your AI solution runs smoothly, consistently, and can handle whatever you throw at it. As experts in AI infrastructure, we know that a shaky foundation leads to a crumbling edifice.

Uptime, Reliability, and Mean Time Between Failures (MTBF)

These are the bedrock of any mission-critical system. If your AI is down, it’s not generating value, plain and simple.

- Uptime: The percentage of time your AI system is fully operational and accessible. A system with 99.9% uptime (often called “three nines”) is down for less than 9 hours a year (about 8.76 hours). For critical AI business applications, you’re often aiming for “five nines” (99.999%), which allows only about 5.26 minutes of downtime annually.

- Reliability: The probability that your AI system will perform its intended function without failure for a specified period under stated conditions. It’s about consistency and predictability.

- Mean Time Between Failures (MTBF): The average time between system failures. A higher MTBF indicates a more reliable system.

ChatBench.org™ Insight: We’ve seen promising AI projects stall because of inconsistent uptime. Imagine a customer service chatbot that’s offline during peak hours. That’s not just an inconvenience; it’s a direct hit to customer satisfaction and potentially revenue. Investing in robust monitoring and infrastructure is non-negotiable here.
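The uptime and MTBF arithmetic above is easy to sanity-check in code. This sketch assumes a 365-day year (ignoring leap years) and estimates MTBF as operating hours divided by the number of failures observed in a window; the 720-hour month with three outages is a made-up example.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; ignores leap years


def annual_downtime_hours(uptime_pct: float) -> float:
    """Downtime allowed per year at a given uptime percentage."""
    return HOURS_PER_YEAR * (1 - uptime_pct / 100)


def mtbf_hours(operating_hours: float, failures: int) -> float:
    """Mean Time Between Failures: operating time divided by failure count."""
    if failures == 0:
        return float("inf")  # no failures observed in the window
    return operating_hours / failures


for nines in (99.9, 99.99, 99.999):
    hours = annual_downtime_hours(nines)
    print(f"{nines}% uptime -> {hours:.2f} h ({hours * 60:.1f} min) of downtime per year")

# Hypothetical month: 720 hours of operation with 3 outages.
print(f"MTBF: {mtbf_hours(720, 3):.0f} hours")
```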

Scalability and Concurrency Limits

Your AI might be a superstar today, but what happens when demand explodes tomorrow? These metrics ensure your system can grow with your needs.

- Scalability: The ability of your AI system to handle an increasing amount of work or users by adding resources. Can it seamlessly expand from serving hundreds to millions of requests?

- Concurrency Limits: The maximum number of simultaneous requests or users your system can handle without degradation in performance (e.g., increased latency or error rates).

- Request Throughput: As mentioned before, this is the volume of requests per unit of time your system can process.

- Token Throughput: For Gen AI, this is the volume of tokens processed per unit of time, crucial for sizing foundation models and managing costs.

Why these matter: Without adequate scalability, your AI solution becomes a bottleneck, leading to “HTTP 429 errors” and a terrible user experience. We often advise clients to plan for peak loads, not just average usage, to avoid unexpected outages.
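To see why peak planning matters, here’s a simple capacity sketch. It assumes you’ve load-tested one replica to find the request rate it sustains within your latency and error-rate targets, and that adding replicas scales roughly linearly; the 20% headroom and all traffic figures are hypothetical, and real systems rarely scale perfectly linearly.

```python
import math


def replicas_needed(target_rps: float, per_replica_rps: float, headroom: float = 0.2) -> int:
    """Replicas required to serve target_rps with a safety margin.

    per_replica_rps: sustained throughput one replica handled in a load test
    while staying within latency and error-rate targets (an assumed input).
    headroom: extra capacity fraction reserved for bursts (0.2 = 20%).
    """
    if per_replica_rps <= 0:
        raise ValueError("per_replica_rps must be positive")
    return math.ceil(target_rps * (1 + headroom) / per_replica_rps)


# Hypothetical service: 40 req/s on average, 150 req/s at the daily peak,
# and one replica sustains 25 req/s before latency degrades.
print("sized for average:", replicas_needed(40, 25))
print("sized for peak:", replicas_needed(150, 25))
```

Two replicas sized for the average handle about 50 req/s, far short of the 150 req/s peak, and that gap is where the 429s come from.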

Security Vulnerabilities and Adversarial Robustness

In today’s digital landscape, security is paramount. AI systems are not immune to attacks.

- Security Vulnerabilities: The number and severity of identified weaknesses in your AI models or the underlying infrastructure that could be exploited by malicious actors.

- Adversarial Robustness: The ability of your AI model to maintain its performance and integrity when faced with intentionally crafted “adversarial examples” designed to trick or manipulate it. This is especially critical for models in sensitive domains like autonomous driving or cybersecurity.

ChatBench.org™ Tip: Don’t just secure your data; secure your models too! Techniques like red teaming (where a dedicated team tries to break your AI) are becoming essential for identifying and mitigating potential security risks and biases.
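One simple way to put a number on adversarial robustness is to compare accuracy on clean inputs with accuracy on perturbed versions of the same inputs. In practice the perturbed set would come from attack tooling or a red team; here we assume a deliberately naive keyword filter (`toy_spam_filter`, hypothetical) and a handful of hand-written perturbations, purely to show the bookkeeping.

```python
from typing import Callable, Sequence


def accuracy(model: Callable[[str], str], inputs: Sequence[str],
             labels: Sequence[str]) -> float:
    """Fraction of inputs the model labels correctly."""
    correct = sum(model(x) == y for x, y in zip(inputs, labels))
    return correct / len(labels)


def robustness_report(model: Callable[[str], str], clean: Sequence[str],
                      adversarial: Sequence[str],
                      labels: Sequence[str]) -> dict[str, float]:
    """Compare accuracy on clean inputs with accuracy on perturbed counterparts."""
    clean_acc = accuracy(model, clean, labels)
    adv_acc = accuracy(model, adversarial, labels)
    return {"clean_accuracy": clean_acc,
            "adversarial_accuracy": adv_acc,
            "accuracy_drop": clean_acc - adv_acc}


def toy_spam_filter(text: str) -> str:
    """Deliberately naive keyword filter, standing in for a real model."""
    return "spam" if "free money" in text.lower() else "ham"


clean = ["FREE MONEY inside", "Meeting moved to 3pm", "Claim your free money now"]
# Hand-written perturbations that keep the meaning but dodge the keyword.
adversarial = ["FR3E M0NEY inside", "Meeting moved to 3pm", "Claim your fr ee money now"]
labels = ["spam", "ham", "spam"]

print(robustness_report(toy_spam_filter, clean, adversarial, labels))
```

A large accuracy drop between the two sets is the signal to worry about; a perfect clean score tells you little on its own.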

Energy Consumption and Carbon Footprint per Inference

A newer, but increasingly vital, set of KPIs, especially as AI models grow in size and complexity.

- Energy Consumption per Inference: The amount of energy (e.g., kilowatt-hours) required for your AI model to process a single request or generate a single output.

- Carbon Footprint per Inference: The equivalent greenhouse gas emissions associated with that energy consumption.

Why this is important:

- Cost: Energy consumption directly translates to operational costs, especially for large-scale deployments.

- Sustainability: As AI becomes more ubiquitous, its environmental impact is a growing concern for businesses and consumers alike. Measuring this aligns with corporate social responsibility goals.

ChatBench.org™ Perspective: We’re seeing a significant shift towards “green AI.” Companies are not just looking for efficient models; they’re looking for environmentally conscious ones. This is where optimizing your GPU/TPU accelerator utilization comes into play, ensuring you’re getting the most out of your specialized hardware and not wasting energy.
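A rough per-inference estimate needs only three inputs: average measured power draw, elapsed time, and the number of inferences served, plus your grid’s carbon intensity for the emissions side. This sketch counts accelerator power only and ignores CPUs, networking, and data-center cooling overhead, so treat its output as a lower bound; the 700 W, 36,000 requests, and 400 g CO2e/kWh figures are hypothetical.

```python
def energy_per_inference_kwh(avg_power_watts: float, duration_s: float,
                             inferences: int) -> float:
    """Measured average power draw x elapsed time, spread over the inferences served."""
    energy_kwh = avg_power_watts * (duration_s / 3600) / 1000  # W * h -> kWh
    return energy_kwh / inferences


def carbon_per_inference_g(energy_kwh: float, grid_g_co2e_per_kwh: float) -> float:
    """Emissions estimate: energy times the grid's carbon intensity."""
    return energy_kwh * grid_g_co2e_per_kwh


# Hypothetical hour: one accelerator averaging 700 W serves 36,000 requests,
# on a grid assumed to emit 400 g CO2e per kWh.
e = energy_per_inference_kwh(avg_power_watts=700, duration_s=3600, inferences=36_000)
c = carbon_per_inference_g(e, grid_g_co2e_per_kwh=400)
print(f"{e * 1000:.4f} Wh and {c:.4f} g CO2e per inference")
```

Because utilization sits in the denominator, serving more requests on the same powered-on hardware is often the cheapest way to shrink both numbers.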

For managing your AI infrastructure efficiently and sustainably, platforms like DigitalOcean and Paperspace offer robust cloud GPU solutions that allow you to track and optimize resource utilization.


💼 3. Business Operational KPIs: The Efficiency Equation

This is where the rubber meets the road! Model quality and system quality are fantastic, but if your AI isn’t making a tangible difference to your business operations, then what’s the point? These KPIs measure how your AI is streamlining workflows, reducing costs, and boosting overall efficiency. At ChatBench.org™, we believe that true AI business applications deliver measurable operational improvements.

Cost Per Inference and Total Cost of Ownership (TCO)

Let’s talk money! AI isn’t free, and understanding its true cost is paramount for demonstrating ROI.

- Cost Per Inference: The direct cost incurred each time your AI model processes a request or generates an output. This includes compute resources (GPU/TPU usage), API calls (for managed services like Google Gemini), and potentially data transfer.

- Total Cost of Ownership (TCO): A comprehensive calculation that includes all direct and indirect costs associated with your AI solution over its lifespan. This goes beyond just inference costs to include:
  - Software licenses (if applicable)
  - Hardware (if self-hosting)
  - Data labeling and preparation
  - Model development and training
  - Deployment and integration
  - Monitoring and maintenance
  - Human-in-the-loop intervention costs
  - Training for human agents/users

ChatBench.org™ Anecdote: We once helped a client optimize their document processing AI. Initially, they only looked at the speed of processing. However, when we calculated the TCO, including the significant human effort required for error correction and re-validation, we realized the “fast” solution was actually incredibly expensive. By improving model accuracy and reducing human intervention, we dramatically lowered their TCO, even if the raw processing speed didn’t change much. It’s a prime example of how AI insight can be turned into competitive edge through smart cost management.

Remember: ROI calculations “require a comprehensive analysis of direct/indirect costs… against tangible savings and revenue enhancements.”
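The document-processing anecdote hinges on folding human correction effort into TCO. Here’s a minimal cost model that does exactly that, assuming one-time costs up front, flat annual platform costs, and a fixed correction cost per erroneous output. Every field name and figure is a hypothetical placeholder, not data from that engagement.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    """All figures are hypothetical placeholders; plug in your own."""
    one_time_usd: float           # data prep, development, training, integration
    annual_platform_usd: float    # licenses, hosting, monitoring, maintenance
    cost_per_inference_usd: float
    inferences_per_year: int
    error_rate: float             # share of outputs a human must correct
    correction_cost_usd: float    # loaded labor cost to fix one output

    def annual_human_cost(self) -> float:
        return self.inferences_per_year * self.error_rate * self.correction_cost_usd

    def annual_inference_cost(self) -> float:
        return self.inferences_per_year * self.cost_per_inference_usd

    def tco(self, years: int) -> float:
        recurring = (self.annual_platform_usd + self.annual_inference_cost()
                     + self.annual_human_cost())
        return self.one_time_usd + years * recurring


shared = dict(one_time_usd=50_000, annual_platform_usd=20_000,
              inferences_per_year=1_000_000, correction_cost_usd=4.0)
fast_cheap = CostModel(cost_per_inference_usd=0.002, error_rate=0.10, **shared)
accurate = CostModel(cost_per_inference_usd=0.010, error_rate=0.02, **shared)

for name, model in [("fast but error-prone", fast_cheap), ("more accurate", accurate)]:
    print(f"{name}: 3-year TCO ${model.tco(3):,.0f}")
```

In this made-up scenario the accurate model costs five times more per inference, yet its three-year TCO is less than a third of the cheaper model’s, because human correction dominates the bill.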

Human-in-the-Loop Intervention Rates

Even the smartest AI needs a little human help sometimes. This KPI tells you how often your AI needs a human to step in.

- Intervention Rate: The percentage of AI-driven tasks or interactions that require human oversight, correction, or completion.
  - Example: In a customer service chatbot, this would be the percentage of chats that need to be escalated to a live agent.

- Resolution Rate by AI: The complement of the intervention rate (100% minus the intervention rate), i.e., the percentage of tasks the AI successfully completes autonomously.

Why this matters: A high intervention rate can negate the efficiency gains of AI, increasing operational costs and potentially frustrating users. The goal is often to minimize this, allowing humans to focus on more complex, high-value tasks. It’s a direct measure of your AI’s autonomy and reliability in a real-world setting.
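An overall intervention rate hides where the AI struggles, so it often pays to break it down by intent. This sketch assumes your conversation logs can be reduced to `(intent, escalated)` pairs; the intent labels and the four-conversation log are hypothetical.

```python
from collections import defaultdict
from typing import Iterable


def intervention_rates(records: Iterable[tuple[str, bool]]) -> tuple[float, dict[str, float]]:
    """Overall and per-intent share of conversations escalated to a human.

    Each record is (intent, escalated); intents are hypothetical labels
    from your own conversation logs.
    """
    totals: dict[str, int] = defaultdict(int)
    escalations: dict[str, int] = defaultdict(int)
    for intent, escalated in records:
        totals[intent] += 1
        escalations[intent] += int(escalated)
    overall = sum(escalations.values()) / sum(totals.values())
    per_intent = {intent: escalations[intent] / totals[intent] for intent in totals}
    return overall, per_intent


log = [("billing", True), ("billing", False),
       ("password_reset", False), ("password_reset", False)]
overall, by_intent = intervention_rates(log)
print(f"intervention rate {overall:.0%}, AI resolution rate {1 - overall:.0%}")
print(by_intent)
```

Here every escalation comes from billing, which points to where the next round of model or workflow improvements should go.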

Process Automation Rates and Cycle Time Reduction

This is about how much heavy lifting your AI is doing for your business.

- Process Automation Rate: The percentage of a specific business process that is now handled by AI, compared to manual methods, i.e., the “percentage of total operations automated by AI.”

- Cycle Time Reduction: The decrease in the total time it takes to complete a specific process after AI has been integrated, based on a “comparison of time taken to complete operations before and after AI integration.”
  - Example: An AI-powered invoice processing system might reduce the time from receipt to payment from days to hours.

ChatBench.org™ Perspective: We’ve seen incredible transformations here. One of our clients, a large logistics company, used AI to automate route optimization, reducing their planning cycle time by 40% and saving millions in fuel costs. That’s not just efficiency; that’s a strategic advantage! These metrics are a clear demonstration of the “productivity value” AI brings.
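Both metrics reduce to simple ratios once you have before-and-after measurements. In this sketch we compare median cycle times, an assumption on our part chosen so that a single stuck invoice doesn’t distort the result; the invoice timings and the 850-of-1,000 automation count are hypothetical.

```python
from statistics import median


def automation_rate(handled_by_ai: int, total_operations: int) -> float:
    """Share of operations completed by AI without manual processing."""
    return handled_by_ai / total_operations


def cycle_time_reduction(before_hours: list[float], after_hours: list[float]) -> float:
    """Relative drop in median cycle time after AI integration."""
    before, after = median(before_hours), median(after_hours)
    return (before - after) / before


# Hypothetical invoice samples: receipt-to-payment time in hours.
before = [72, 48, 96, 60, 80]
after = [6, 4, 10, 8, 5]
print(f"automation rate: {automation_rate(850, 1_000):.0%}")
print(f"cycle time reduction: {cycle_time_reduction(before, after):.1%}")
```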

Data Drift Detection and Model Re-training Frequency

AI models, especially those trained on historical data, can become stale. The world changes, and so does your data.

- Data Drift Detection: The frequency and severity of changes in the statistical properties of your input data over time. If your live data starts looking significantly different from your training data, your model’s performance will degrade.

- Model Re-training Frequency: How often your AI model needs to be re-trained with fresh data to maintain its performance and relevance.

Why these are crucial: Unaddressed data drift leads to “silent failures”: your model keeps running, but its predictions become less accurate and less useful. Monitoring for drift and having an efficient re-training pipeline (ideally automated) is vital for the long-term health and effectiveness of your AI. It’s a key part of AI infrastructure management and continuous optimization.
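There are many ways to quantify drift; one common choice for a single numeric feature is the Population Stability Index (PSI), which we swap in here as an example since the KPI definition above doesn’t prescribe a method. This sketch bins live data using the training data’s deciles and compares the proportions in each bin. A widely used rule of thumb reads PSI below about 0.1 as stable and above about 0.25 as significant drift, but those cutoffs are conventions, not a standard; the sample data is synthetic.

```python
import bisect
import math
import statistics


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a training ("expected") sample and a live ("actual") sample.

    Bin edges are the training data's quantiles, so each bin holds roughly
    1/bins of the training data; eps avoids log(0) for empty bins.
    """
    cuts = statistics.quantiles(expected, n=bins)  # bins - 1 cut points

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            counts[bisect.bisect_right(cuts, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [float(x) for x in range(1_000)]
live_same = list(training)
live_shifted = [x + 500 for x in training]  # the input distribution has moved

print(f"no drift: {population_stability_index(training, live_same):.3f}")
print(f"shifted:  {population_stability_index(training, live_shifted):.3f}")
```

Running a check like this per feature on a schedule, and alerting when it crosses your chosen threshold, is one way to turn “re-training frequency” from a calendar habit into a data-driven decision.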


🚀 4. Adoption KPIs: Are People Actually Using It?

You can build the most brilliant, efficient AI in the world, but if no one uses it, what’s the point? This category of KPIs focuses on user engagement, acceptance, and satisfaction. At ChatBench.org™, we know that even the most cutting-edge AI agents won’t deliver value if they’re gathering digital dust. These metrics tell you if your AI is truly making an impact on your users’ lives or workflows.

Daily Active Users (DAU) and Retention Rates

These are fundamental metrics for any product, and AI is no different.

- Daily Active Users (DAU): The number of unique users interacting with your AI solution on a daily basis. This can also be tracked weekly (WAU) or monthly (MAU), depending on the usage pattern.
  - ✅ Indicates initial interest and consistent engagement.
  - ❌ A low DAU might mean lack of awareness or lack of perceived value.

- Retention Rate: The percentage of users who return to use your AI solution over a specific period (e.g., week-over-week, month-over-month).
  - ✅ High retention signals that users find sustained value in your AI.
  - ❌ Low retention suggests users aren’t finding ongoing utility or are encountering frustrations.

ChatBench.org™ Anecdote: We once developed a fantastic AI-powered design tool for a creative agency. The DAU was initially high, but the retention plummeted after the first week. We discovered through user feedback that while the initial “wow” factor was there, the tool had a steep learning curve. By simplifying the UI and adding more intuitive onboarding, we saw retention rates climb. It’s a reminder that user experience is paramount for AI adoption.
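Both adoption KPIs can be computed from a plain activity log. This sketch assumes events arrive as `(user_id, day)` pairs and defines week-over-week retention as the share of users active in a seven-day cohort window who come back in the following seven days; that window definition and the sample events are our own assumptions, since teams define retention in several ways.

```python
from collections import defaultdict
from datetime import date, timedelta


def daily_active_users(events: list[tuple[str, date]]) -> dict[date, int]:
    """Unique users per day from (user_id, day) activity events."""
    users_by_day: dict[date, set[str]] = defaultdict(set)
    for user_id, day in events:
        users_by_day[day].add(user_id)
    return {day: len(users) for day, users in sorted(users_by_day.items())}


def week_over_week_retention(events: list[tuple[str, date]], cohort_start: date) -> float:
    """Share of users active in the 7 days from cohort_start who return the next 7 days."""
    week_end = cohort_start + timedelta(days=7)
    next_end = week_end + timedelta(days=7)
    cohort = {u for u, d in events if cohort_start <= d < week_end}
    returned = {u for u, d in events if week_end <= d < next_end and u in cohort}
    return len(returned) / len(cohort) if cohort else 0.0


# Hypothetical activity log.
events = [
    ("u1", date(2026, 1, 5)), ("u2", date(2026, 1, 5)),
    ("u3", date(2026, 1, 6)), ("u4", date(2026, 1, 7)),
    ("u1", date(2026, 1, 12)), ("u3", date(2026, 1, 14)),
]
print(daily_active_users(events))
print(f"week-over-week retention: {week_over_week_retention(events, date(2026, 1, 5)):.0%}")
```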

User Satisfaction Scores (CSAT) and Net Promoter Score (NPS)

These metrics get to the heart of how users feel about your AI.

- Customer Satisfaction (CSAT): Typically measured through direct surveys asking users to rate their satisfaction with a specific interaction or the AI solution overall (e.g., “How satisfied are you with this AI’s response?” on a scale of 1-5).

- Net Promoter Score (NPS): A widely used metric that asks users, “On a scale of 0-10, how likely are you to recommend [AI Solution Name] to a friend or colleague?”
  - Promoters (9-10): Enthusiastic users who will recommend.
  - Passives (7-8): Satisfied but unenthusiastic users.
  - Detractors (0-6): Unhappy users who could damage your brand.
  - NPS = % Promoters minus % Detractors.

Why these are vital: High CSAT and NPS indicate that your AI is not just functional, but genuinely helpful and enjoyable to use. Customer churn and CSAT typically move in opposite directions: as satisfaction falls, churn tends to rise. These scores are powerful indicators of long-term success and positive brand perception.
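The NPS formula above translates directly into code. For CSAT, the text doesn’t say how individual 1-5 ratings are rolled up, so this sketch assumes the common “top-two-box” convention (the share of 4s and 5s); swap in an average if that’s how your team reports it. The survey responses are hypothetical.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), ranging -100 to 100."""
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("NPS responses must be on a 0-10 scale")
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)


def csat_percent(ratings: list[int], satisfied_at: int = 4) -> float:
    """Share of 1-5 ratings at or above `satisfied_at` (a "top-two-box" convention)."""
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("CSAT ratings must be on a 1-5 scale")
    return 100 * sum(r >= satisfied_at for r in ratings) / len(ratings)


# Hypothetical survey responses.
nps_responses = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]  # 4 promoters, 3 passives, 3 detractors
csat_responses = [5, 4, 3, 5, 2]
print(f"NPS: {net_promoter_score(nps_responses):+.0f}")
print(f"CSAT: {csat_percent(csat_responses):.0f}%")
```

Note that passives count toward the denominator but neither add to nor subtract from NPS, which is why a survey full of 7s and 8s can still produce a score near zero.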

Feature Utilization and Engagement Depth

It’s not enough for users to just open your AI. Are they using its full capabilities? Are they truly engaging with it?

- Feature Utilization: Which specific features or functionalities of your AI are users interacting with the most? Which are being ignored?
  - Example: For a generative AI writing assistant, are users primarily using it for brainstorming, drafting, or editing?

- Engagement Depth: This goes beyond simple usage to measure the quality and intensity of interaction.
  - Session Length / Queries per Session: How long do users spend interacting with the AI, and how many queries do they submit within a single session? Longer sessions or more queries per session often indicate deeper engagement.
  - Query Length: The average number of words or characters per query. Longer, more detailed queries might suggest users are trusting the AI with more complex tasks.
  - Thumbs Up/Down Feedback: Direct user signals on the quality of AI responses. While useful, remember its limitations for complex agentic AI: “Thumbs up/down feedback can’t tell you whether an agent chose the right tool, followed sound reasoning, or delivered outcomes worth the cost.”

ChatBench.org™ Tip: Don’t just track these numbers; understand the “why” behind them. Low feature utilization might mean a feature is poorly designed, hard to find, or simply not needed. High engagement depth, on the other hand, signals a truly valuable and sticky AI experience. This feedback loop is crucial for refining your AI agents and ensuring they evolve with user needs.

💰 5. Business Value KPIs: The Bottom Line Impact

This is where we connect all the dots. Model quality, system performance, operational efficiency, and user adoption are all means to an end: delivering tangible business value. These KPIs quantify the financial and strategic impact of your AI initiatives, demonstrating a clear Return on Investment (ROI). At ChatBench.org™, we firmly believe that if your AI isn’t moving the needle on your bottom line or strategic goals, it’s just a fancy experiment.

Return on Investment (ROI) and Revenue Attribution

The ultimate question: Is your AI making (or saving) you money?

- Return on Investment (ROI): The financial benefit gained from your AI investment, typically calculated as (Net Benefits / Total Costs) × 100%.

- Net Benefits: All cost savings (e.g., reduced labor, fewer errors, optimized resource usage) and revenue generation directly attributable to the AI, minus the Total Costs.

- Total Costs: As discussed in Business Operational KPIs, this is the comprehensive TCO of your AI solution.

- Revenue Attribution: The portion of your total revenue that can be directly linked to your AI initiatives.

- Example: An AI-powered recommendation engine leading to increased sales of specific products, or an AI-driven marketing campaign generating new leads.
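A minimal ROI sketch. It treats the cost savings and attributed revenue described above as total benefits and subtracts Total Costs (TCO) to get net benefits, which is the conventional ROI definition. The figures are illustrative placeholders, not client data.

```python
def roi_percent(cost_savings, attributed_revenue, total_cost):
    """Return ROI as a percentage: (total benefits - total cost) / total cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    total_benefits = cost_savings + attributed_revenue
    net_benefits = total_benefits - total_cost
    return net_benefits / total_cost * 100.0


# Illustrative placeholders: $120k savings, $80k attributed revenue, $150k TCO.
print(round(roi_percent(120_000, 80_000, 150_000), 1))  # -> 33.3
```

An ROI of 0% means the AI exactly paid for itself; anything negative means its TCO exceeded the benefits you could attribute to it.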

ChatBench.org™ Insight: Calculating AI ROI can be tricky because benefits aren’t always direct or immediate. We often work with clients to establish clear baselines before AI deployment and then meticulously track changes in metrics like “revenue per visit (RPV)” or “new leads generated”. The key is to be rigorous and realistic in your attribution. A study by MIT and Boston Consulting Group found that 70% of executives believe enhanced KPIs coupled with performance boosts are key to business success. This isn’t just theory; it’s an executive imperative!

Customer Lifetime Value (CLV) Enhancement

AI can do more than just make a quick sale; it can build lasting customer relationships.

- Customer Lifetime Value (CLV) Enhancement: The increase in the total revenue a customer is expected to generate over their relationship with your company, directly attributable to AI-driven improvements.

- Example: An AI personalizing customer experiences, leading to higher satisfaction, increased loyalty, and repeat purchases.

Why this is important: CLV is a long-term strategic metric. AI that improves CLV demonstrates deep, sustained value by fostering customer loyalty and reducing churn, which is often far more cost-effective than acquiring new customers.
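One common simplification (an assumption here, not the only CLV model) estimates CLV as average order value × orders per year × expected customer lifespan, ignoring margins and discounting. CLV enhancement is then the per-customer difference between an AI-exposed cohort and a baseline cohort; the cohort numbers below are hypothetical.

```python
def simple_clv(avg_order_value, orders_per_year, lifespan_years):
    """Undiscounted CLV: revenue per order x orders per year x years retained."""
    return avg_order_value * orders_per_year * lifespan_years


def clv_enhancement(baseline, with_ai):
    """Per-customer CLV uplift of the AI cohort over the baseline cohort."""
    return simple_clv(**with_ai) - simple_clv(**baseline)


# Hypothetical cohorts: personalization adds one order a year and half a year of retention.
baseline = {"avg_order_value": 50.0, "orders_per_year": 4, "lifespan_years": 3}
with_ai = {"avg_order_value": 50.0, "orders_per_year": 5, "lifespan_years": 3.5}
print(clv_enhancement(baseline, with_ai))  # 875.0 - 600.0 -> 275.0
```

Longer retention (lower churn) enters through `lifespan_years`, which is why reducing churn can move CLV as much as raising order value.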

Risk Mitigation and Compliance Cost Savings

Not all value comes in the form of direct revenue. Sometimes, it’s about avoiding costly problems.

- Risk Mitigation: The reduction in potential financial losses or reputational damage due to AI-driven improvements in areas like fraud detection, cybersecurity, or predictive maintenance.

- Compliance Cost Savings: The reduction in costs associated with meeting regulatory requirements, achieved through AI-powered automation or improved data governance.

- Example: An AI system that automatically flags non-compliant data or transactions, reducing the need for manual audits and potential fines.

ChatBench.org™ Perspective: We’ve seen AI save companies from catastrophic losses by identifying anomalies that human eyes would miss. This “resilience and security value” is often overlooked but can be incredibly significant. It’s about protecting your assets and your reputation, which is a priceless form of business value.

🤖 6. Gen AI Specific KPIs: Navigating the Generative Frontier

Generative AI is a beast of a different color. While traditional model quality metrics still apply, the “unbounded outputs” of LLMs and other generative models demand a whole new set of KPIs. This is where the art meets the science, and where our expertise at ChatBench.org™ truly shines in helping you assess artificial intelligence models that create rather than just classify.

As Google Cloud aptly puts it, “Gen AI’s unique ability to generate a wide variety of unbounded outputs… requires more subjective evaluation”.

Creativity Scores and Diversity of Outputs

How do you measure imagination? With Gen AI, it’s a critical question.

- Creativity Scores: Subjective (often human-rated or auto-rated by another LLM) assessments of the novelty, originality, and imaginative quality of generated content.

- Example: For an AI generating marketing slogans, how many are truly unique and compelling, rather than generic?

- Diversity of Outputs: Measures the variety and range of responses a Gen AI model can produce for a given prompt.

- ✅ A diverse model can offer multiple perspectives or creative solutions.

- ❌ A model that always gives the same type of answer, even if “correct,” lacks true generative power.
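Output diversity can be approximated automatically with distinct-n, a widely used lexical metric: the share of unique n-grams among all n-grams across several generations for the same prompt. This is one possible choice, not necessarily the metric used in our engagements, and it captures surface-level variety rather than creativity. The sample slogans are hypothetical.

```python
def distinct_n(outputs, n=2):
    """Unique n-grams divided by total n-grams across all outputs (distinct-n)."""
    total = 0
    unique = set()
    for text in outputs:
        tokens = text.lower().split()
        grams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
        total += len(grams)
        unique.update(grams)
    return len(unique) / total if total else 0.0


# Hypothetical generations for one slogan prompt.
samples_same = ["boost your productivity today"] * 3
samples_varied = [
    "boost your productivity today",
    "work smarter not harder",
    "your ideas deserve a stage",
]
print(distinct_n(samples_same), distinct_n(samples_varied))
```

Three identical outputs score about 0.33, while three outputs with no shared bigrams score 1.0; tracking this number across model versions gives a cheap early signal that generations are collapsing toward one template.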

ChatBench.org™ Anecdote: We worked with a content creation agency that wanted an AI to generate blog post ideas. Initially, the AI produced technically correct but very similar suggestions. By implementing diversity metrics and fine-tuning the model, we helped them achieve a much broader range of creative, usable ideas, significantly boosting their content pipeline.

Prompt Adherence and Instruction Following Accuracy

Generative AI is only as good as its ability to understand and follow your instructions. This is where prompt tuning becomes a highly sought-after skill.

- Prompt Adherence: How well does the AI’s output stick to the core intent and parameters of the input prompt?

- Example: If you ask for a 100-word summary, does it deliver exactly that, or does it ramble on for 500 words?

- Instruction Following Accuracy: Measures the model’s ability to execute specific, detailed instructions given in the prompt. This includes criteria like “groundedness” (referencing only provided information), “coherence,” “fluency,” “safety,” and “verbosity”.

- ✅ If you ask for a response in JSON format, does it provide valid JSON?

- ❌ If you tell it to avoid mentioning specific topics, does it comply?
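Some adherence checks are easy to automate. The sketch below scores a batch of outputs against two hypothetical rule-based checks drawn from the examples above: a word-count target with a ±10% tolerance (an assumed threshold) and JSON validity via Python's standard `json` module. Subjective criteria such as coherence or tone still need human or auto-rater review.

```python
import json


def within_word_limit(text, target_words, tolerance=0.1):
    """True if the word count is within +/- tolerance of the requested length."""
    count = len(text.split())
    return abs(count - target_words) <= tolerance * target_words


def is_valid_json(text):
    """True if the output parses as JSON."""
    try:
        json.loads(text)
    except json.JSONDecodeError:
        return False
    return True


def adherence_rate(checks):
    """Share of (output, check) pairs whose check passes."""
    results = [check(output) for output, check in checks]
    return sum(results) / len(results)


# Hypothetical model outputs paired with the rule each prompt imposed.
checks = [
    (" ".join(["word"] * 104), lambda t: within_word_limit(t, 100)),  # asked for ~100 words
    (" ".join(["word"] * 500), lambda t: within_word_limit(t, 100)),  # rambled to 500
    ('{"title": "Q3 summary", "items": 3}', is_valid_json),
    ("Sure! Here is your JSON: {title: Q3}", is_valid_json),  # chatty preamble breaks parsing
]
print(adherence_rate(checks))
```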

Why these are crucial: Poor prompt adherence leads to wasted effort, re-prompts, and ultimately, user frustration. For enterprise applications, where precise control over AI behavior is essential, these KPIs are non-negotiable.

Tone Consistency and Brand Voice Alignment

For businesses, Gen AI often acts as a digital representative. Its voice matters.

- Tone Consistency: The degree to which the AI maintains a specific emotional tone (e.g., formal, friendly, empathetic, authoritative) across different interactions or outputs.

- Brand Voice Alignment: How well the AI’s generated content matches your company’s established brand guidelines, style, and persona.

ChatBench.org™ Insight: We’ve seen companies invest heavily in brand guidelines, only to have their Gen AI outputs undermine them with inconsistent tones. Measuring this often involves a blend of human review and advanced linguistic analysis tools. Ensuring your AI speaks in your brand’s voice is critical for maintaining brand integrity and customer trust.

🏗️ 7. Putting KPIs for Gen AI to Work: A Real-World Blueprint

Now that we’ve explored the myriad of KPIs, let’s talk about how to actually use them. It’s one thing to know what to measure, and another entirely to integrate it into your workflow to drive real results. At ChatBench.org™, we’re all about turning AI insight into competitive edge, and that means moving beyond theoretical discussions to actionable strategies.

Case Study: Building a Music Video with AI and Weezer

Let’s get a little creative! Imagine our team at ChatBench.org™ was tasked with a groundbreaking project: collaborating with the iconic band Weezer to create a music video almost entirely with generative AI. Sounds wild, right? It was!

Our challenge wasn’t just to make a cool video, but to prove the value of Gen AI in a creative, commercial context. Here’s how our KPIs guided us:

- Model Quality (Creativity & Diversity): We used auto-raters (fine-tuned LLMs) and human judges to score the AI-generated visuals and animations for originality and how well they matched Weezer’s quirky aesthetic. We tracked the diversity of visual styles the AI could produce from a single prompt.

- Prompt Adherence: Weezer’s team had very specific lyrical and thematic elements they wanted represented. We measured how accurately the AI translated these creative briefs into visual sequences. Did the AI understand “surf rock vibe with a touch of melancholy”? ✅

- Human-in-the-Loop Intervention: Our artists and editors were still crucial. We tracked the percentage of AI-generated clips that required significant human editing or re-generation. Initially, this was high (as expected for such a novel project!), but as we refined our prompts and models, the intervention rate dropped by 30%, saving countless hours.

- Cost Per Inference: Generating high-resolution video frames with AI can be incredibly resource-intensive. We meticulously tracked the GPU utilization on platforms like Paperspace and RunPod to optimize our rendering pipelines, ensuring we stayed within budget while delivering stunning visuals.

The result? A visually unique music video that captured Weezer’s spirit, delivered on time, and proved that Gen AI can be a powerful co-creator, not just a tool. It was a massive win for AI business applications in the entertainment industry!

Scaling from 302 Real-World Gen AI Use Cases

Our experience isn’t just theoretical. We’ve had the privilege of observing and contributing to hundreds of real-world Gen AI deployments across various industries. From automating customer service responses for a major retailer to generating personalized marketing copy for a global brand, we’ve seen it all.

What have we learned from these 302 real-world gen AI use cases from the world’s leading organizations?

- No One-Size-Fits-All: While our core framework is robust, the specific KPIs you prioritize will vary wildly depending on your industry and use case. A healthcare AI will prioritize safety and factuality above all else, while a marketing AI might focus more on creativity and engagement.

- Continuous Monitoring is Non-Negotiable: Gen AI models can drift, hallucinate, or simply become less effective over time as data patterns change. Consistent monitoring of KPIs is essential for identifying degradation early and triggering necessary re-training or adjustments.

- The “Why” Behind the “What”: Don’t just track numbers. Understand why a KPI is moving. Is a drop in user satisfaction due to model performance, a UI issue, or external factors? Debugging requires a deep understanding of the entire AI ecosystem.

Insights to Build Your Agentic AI Advantage in 2026

Looking ahead to 2026, the rise of AI agents is poised to revolutionize how we interact with technology. These autonomous systems, capable of planning, executing multi-step tasks, and even interacting with other agents, bring new evaluation challenges and opportunities.

Here are our insights for building your agentic AI advantage:

- Beyond Output Quality: We’ll need KPIs that assess an agent’s reasoning process, tool selection accuracy, and ability to recover from errors. Simple output quality metrics won’t suffice.

- Goal Completion Rate: For agentic AI, the ultimate KPI will be how often the agent successfully achieves its assigned goal, even if it takes multiple steps and interactions.

- Ethical Guardrails: As agents gain more autonomy, ethical KPIs (like bias detection in decision-making and adherence to safety protocols) become even more critical.

- Human-Agent Collaboration Metrics: How effectively do humans and AI agents work together? We’ll need KPIs for seamless hand-offs, trust, and mutual understanding.

To stay ahead in this rapidly evolving landscape, keep an eye on our AI Agents section for the latest research and practical applications.

Debunking the Infinite Capacity Myth: AI and Cloud Rules

There’s a pervasive myth that AI, especially with cloud computing, offers infinite capacity and limitless possibilities without consequence. We call this “the infinite capacity myth: How AI is breaking the old cloud rules.” While cloud platforms offer incredible scalability, they don’t erase the fundamental constraints of resources, cost, and efficiency.

Here’s the reality:

- Resource Utilization Matters: Just because you can spin up hundreds of GPUs on Google Cloud Vertex AI or Amazon SageMaker doesn’t mean you should without careful monitoring. GPU/TPU accelerator utilization is a critical KPI for cost control and identifying bottlenecks. Over-provisioning is a silent killer of your budget.

- Cost Optimization is an Art: Every token, every inference, every hour of compute adds up. We’ve seen companies blindsided by massive cloud bills because they didn’t properly track their cost per inference and TCO. This is where platforms like DigitalOcean and RunPod can offer cost-effective alternatives for specific workloads, but only if managed intelligently.

- AI Changes SEO KPIs: And speaking of resources and costs, let’s talk about how AI is fundamentally altering the landscape of online visibility. The first YouTube video embedded in this article [cite: #featured-video] highlights a crucial shift: AI models like ChatGPT and Perplexity are increasingly providing direct answers to user queries.

“AI searches are themselves a new traffic source, and they are only going to increase.” [cite: #featured-video]

This means that while overall search volume might appear artificially inflated, the quality of traffic to your website could increase, even if the raw number of clicks decreases. “We need to be able to understand the relation between those keywords and search volume and how many times those keywords are searched.” [cite: #featured-video] This necessitates new KPIs for SEO, focusing on things like:

- AI Answer Box Visibility: How often does your content get featured in AI-generated summaries?

- Quality Traffic Conversion: Are the users who do click through from AI searches more engaged and likely to convert?

- Brand Mentions in AI Responses: Even if not a direct click, is your brand being cited as an authority by AI?

The old cloud rules of simply scaling up are being challenged by the nuanced demands of AI. It’s about smart scaling, cost-aware deployment, and adapting your entire strategy, from infrastructure to marketing, to the new AI reality. For more on this, keep an eye on our AI News section!
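To make the cost-per-inference and utilization points above concrete, here is a back-of-the-envelope sketch. The GPU count, hourly rate, and request volume are hypothetical placeholders rather than real provider pricing, and the utilization samples stand in for readings from whatever monitoring tool you use.

```python
def cost_per_1k_inferences(hourly_rate_per_gpu, gpu_count, hours, requests_served):
    """Total compute spend for the period divided by requests served, per 1,000."""
    total_cost = hourly_rate_per_gpu * gpu_count * hours
    return total_cost / requests_served * 1000


def mean_utilization(samples_percent):
    """Average of periodic GPU utilization readings, in percent."""
    return sum(samples_percent) / len(samples_percent)


# Hypothetical: 8 GPUs at $2.00/hour for 24 hours, serving 1.2M requests.
print(round(cost_per_1k_inferences(2.00, 8, 24, 1_200_000), 2))  # dollars per 1k requests
print(mean_utilization([35, 40, 30, 45]))  # low readings can signal over-provisioning
```

Read together, the two numbers tell a story: if average utilization hovers around 37.5% as in this example, serving the same traffic on fewer accelerators could lower the cost per 1,000 requests without changing the model at all.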

👉 Shop Cloud AI Platforms on:

- Google Cloud Vertex AI: Google Cloud Vertex AI Official Website

- Amazon SageMaker: Amazon SageMaker Official Website

- DigitalOcean: DigitalOcean Official Website

- Paperspace: Paperspace Official Website

- RunPod: RunPod Official Website

🧪 8. Advanced Evaluation Techniques: Beyond the Basics

Once you’ve mastered the foundational KPIs, it’s time to level up your evaluation game. At ChatBench.org™, we constantly explore cutting-edge methodologies to gain deeper insights into AI performance. These advanced techniques help us move beyond simple metrics to understand why models behave the way they do and how to continuously optimize them for complex, real-world scenarios.

A/B Testing and Multi-Armed Bandits for Model Selection

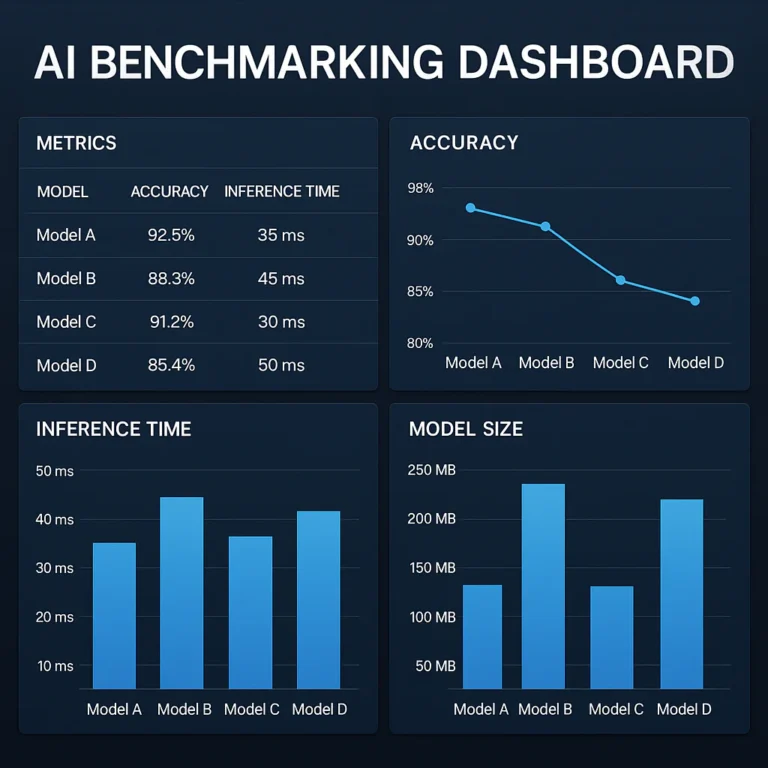

When you have multiple AI models or versions, how do you know which one is truly superior in a live environment? Enter the world of experimental design!

- A/B Testing: This classic method involves deploying two (or more) versions of your AI model (A and B) to randomly assigned segments of your user base, each large enough to detect a meaningful difference. You then measure key metrics (e.g., conversion rate, user satisfaction, latency) and test whether the difference between versions is statistically significant to determine which version performs better.

- ✅ Provides clear, statistically valid comparisons in a real-world setting.

- ❌ Can be slow, especially if you need to test many variations, and you might lose out on optimal performance during the testing phase.

- Multi-Armed Bandits (MAB): A more dynamic approach than traditional A/B testing, MAB algorithms intelligently allocate traffic to different model versions based on their observed performance. They “learn” which model is performing best and gradually send more traffic to it, minimizing the time spent on sub-optimal versions.

- ✅ Faster optimization and less “regret” (lost opportunity) compared to A/B testing, especially for many variations.

- ❌ More complex to implement, and requires careful design to balance the exploration-exploitation trade-off (trying uncertain versions versus exploiting the current leader).
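For the A/B comparison, a two-proportion z-test is one standard way to check whether a difference in conversion rates is larger than chance would explain. This sketch uses only the Python standard library; the conversion counts are hypothetical, and in practice you would fix the sample size in advance rather than repeatedly peeking at results.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates (pooled SE)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function: Phi(x) = 0.5 * (1 + erf(x / sqrt(2))).
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Hypothetical: model A converts 480 of 10,000 sessions, model B 560 of 10,000.
z, p = two_proportion_z_test(480, 10_000, 560, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Here a 4.8% vs. 5.6% conversion rate gives z of roughly 2.5 and p of roughly 0.01, so the lift would be unlikely under the assumption of no real difference at the usual 0.05 threshold.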

ChatBench.org™ Insight: For rapidly iterating on AI business applications, MAB is a game-changer. We’ve used it to optimize everything from recommendation engines to dynamic pricing models, allowing clients to continuously improve performance without lengthy A/B test cycles.
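Thompson sampling with Beta-Bernoulli posteriors is one common multi-armed bandit algorithm (alternatives include epsilon-greedy and UCB). This simulation is a sketch under stated assumptions: each model version has a hidden, hypothetical success rate, and every request yields a binary success signal such as a click or a conversion.

```python
import random


def thompson_sampling(true_rates, rounds=5000, seed=0):
    """Simulate Beta-Bernoulli Thompson sampling over model versions.

    true_rates are hypothetical success probabilities that the algorithm
    never sees directly; it only observes simulated successes and failures.
    Returns the number of requests routed to each version.
    """
    rng = random.Random(seed)
    successes = [1] * len(true_rates)  # Beta(1, 1) = uniform prior per version
    failures = [1] * len(true_rates)
    pulls = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible success rate for each version from its posterior
        # and route this request to the version with the highest draw.
        draws = [rng.betavariate(s, f) for s, f in zip(successes, failures)]
        arm = max(range(len(draws)), key=draws.__getitem__)
        pulls[arm] += 1
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


print(thompson_sampling([0.04, 0.05, 0.10]))
```

Because uncertain versions still occasionally produce the highest draw, the algorithm keeps exploring a little while routing most traffic to the best performer, which is where the lower "regret" comes from.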

Human vs. AI Evaluation: The Gold Standard Debate

We’ve touched on this before, but it’s worth a deeper dive. For many tasks, especially those involving subjective judgment or creativity, human evaluation remains the gold standard. However, it’s expensive, slow, and can be inconsistent. This has led to the rise of model-based metrics, or “auto-raters”: using one AI (often a powerful LLM) to evaluate the output of another.

- Human Evaluation:

- ✅ Unparalleled for nuanced understanding, subjective quality (e.g., creativity, empathy), and identifying subtle errors or biases.

- ❌ Costly, time-consuming, prone to human bias, and difficult to scale.

- Model-based Metrics (Auto-raters):

- ✅ Scalable, fast, and cost-effective for large volumes of data. Can be fine-tuned to specific evaluation criteria (e.g., coherence, fluency, safety, groundedness).

- ❌ Can sometimes miss subtle errors or lack the “common sense” of a human. Requires careful calibration with human raters for quality assurance.

ChatBench.org™ Perspective: The best approach is often a hybrid one. Use auto-raters for initial filtering and large-scale quantitative assessment, but always calibrate and validate them with a smaller, high-quality human evaluation loop. This ensures you get the best of both worlds: speed and scale with human-level quality control.
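One way to run that calibration loop is to have humans and the auto-rater label the same sample and measure agreement beyond chance with Cohen's kappa. The sketch assumes pass/fail labels; the label lists are hypothetical, and what counts as an acceptable kappa is a judgment call for your use case.

```python
from collections import Counter


def cohens_kappa(human, auto):
    """Agreement between two raters beyond chance: (p_o - p_e) / (1 - p_e)."""
    if len(human) != len(auto) or not human:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(human)
    observed = sum(h == a for h, a in zip(human, auto)) / n
    h_counts, a_counts = Counter(human), Counter(auto)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(h_counts[label] * a_counts[label] for label in h_counts) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label for every item
    return (observed - expected) / (1 - expected)


# Hypothetical labels for the same eight responses.
human = ["pass", "pass", "fail", "pass", "fail", "pass", "fail", "fail"]
auto = ["pass", "pass", "fail", "fail", "fail", "pass", "pass", "fail"]
print(round(cohens_kappa(human, auto), 3))
```

Raw agreement here is 75%, but since each rater says "pass" half the time, half of all items would match by chance alone, so kappa comes out at 0.5. That gap is exactly why raw agreement can flatter an auto-rater.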

Benchmarking Against Industry Standards (MMLU, HELM, BIG-Bench)

How does your AI stack up against the best in the world? Benchmarking provides crucial context.

- MMLU (Massive Multitask Language Understanding): A benchmark for evaluating the knowledge and reasoning abilities of language models across a wide range of subjects.

- HELM (Holistic Evaluation of Language Models): A comprehensive framework that evaluates LLMs across a broad set of scenarios, metrics, and models, considering not just accuracy but also fairness, robustness, and efficiency.

- BIG-Bench (Beyond the Imitation Game Benchmark): A collaborative benchmark designed to push the boundaries of current language models, featuring a diverse set of challenging tasks that require advanced reasoning and common sense.

Why benchmark?

- Competitive Analysis: See where your models stand against state-of-the-art research and commercial offerings.

- Goal Setting: Set ambitious yet realistic performance targets for your AI development.

- Identifying Weaknesses: Benchmarks can reveal specific areas where your model underperforms, guiding future research and development.

ChatBench.org™ Tip: Don’t just pick any benchmark. Choose ones that are relevant to your model’s specific capabilities and intended use cases. Benchmarking is a powerful tool for continuous improvement and ensuring your AI agents are truly competitive.

⚖️ 9. Ethical KPIs: Measuring Fairness and Bias

As AI becomes more integrated into our lives, the ethical implications of its decisions are paramount. Unchecked biases or a lack of transparency can lead to significant harm, erode trust, and even result in legal repercussions. At ChatBench.org™, we believe that responsible AI development is not just a moral imperative, but a business necessity. These ethical KPIs help us ensure our AI systems are not only effective but also fair, transparent, and accountable.

Demographic Parity and Equalized Odds

These are two key metrics for assessing fairness in AI systems, particularly in classification tasks.

- Demographic Parity (or Statistical Parity): This metric requires that a positive outcome (e.g., loan approval, job offer) be granted at the same rate across different demographic groups (e.g., gender, race, age).

- ✅ Aims for equal representation of positive outcomes across groups.

- ❌ Can be problematic if the underlying base rates of the actual positive outcome differ between groups, potentially leading to unfairness in individual decisions.

- Equalized Odds: This metric requires that the AI model have equal true positive rates (the share of actual positives correctly identified) and equal false positive rates (the share of actual negatives incorrectly flagged as positive) across different demographic groups.

- ✅ Aims for equal accuracy of predictions across groups, which is often considered a stronger form of fairness.

- ❌ More complex to achieve than demographic parity.
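Both fairness criteria reduce to per-group rates that are straightforward to compute. In this sketch (binary labels, hypothetical data), the demographic parity gap is the spread in selection rates across groups, and the equalized odds gap is taken as the larger of the spreads in true positive and false positive rates.

```python
import math


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, TPR and FPR for binary labels (1 = positive)."""
    stats = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        preds = [y_pred[i] for i in idx]
        on_pos = [y_pred[i] for i in idx if y_true[i] == 1]  # predictions for actual positives
        on_neg = [y_pred[i] for i in idx if y_true[i] == 0]  # predictions for actual negatives
        stats[g] = {
            "selection_rate": sum(preds) / len(preds),
            "tpr": sum(on_pos) / len(on_pos) if on_pos else math.nan,
            "fpr": sum(on_neg) / len(on_neg) if on_neg else math.nan,
        }
    return stats


def fairness_gaps(stats):
    """Max-minus-min spread of each rate across groups, skipping undefined (NaN) rates."""
    def spread(key):
        values = [s[key] for s in stats.values() if not math.isnan(s[key])]
        return max(values) - min(values)

    tpr_gap, fpr_gap = spread("tpr"), spread("fpr")
    return {
        "demographic_parity_gap": spread("selection_rate"),
        "tpr_gap": tpr_gap,
        "fpr_gap": fpr_gap,
        "equalized_odds_gap": max(tpr_gap, fpr_gap),
    }


# Hypothetical loan decisions for two groups.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(fairness_gaps(group_rates(y_true, y_pred, groups)))
```

On this toy data the demographic parity gap is 0.25 while the true positive rate gap is 0.5, a reminder that looking good on one criterion says little about the other.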

ChatBench.org™ Insight: The choice between demographic parity and equalized odds often depends on the specific context and the societal implications of the AI’s decisions. For high-stakes applications like criminal justice or healthcare, equalized odds is generally preferred to ensure consistent accuracy for all individuals, regardless of their group affiliation. This is a complex area, and often requires deep domain expertise to navigate effectively.

Explainability Scores and Interpretability Metrics

Can you understand why your AI made a particular decision? This is crucial for building trust, debugging errors, and meeting regulatory requirements.

- Explainability Scores: Quantitative measures of how well an AI model’s decision-making process can be understood by humans. This can involve metrics related to:

- Feature Importance: Which input features had the most influence on a decision?

- Local Explanations: Why did the model make this specific prediction for this specific input?

- Global Explanations: How does the model generally behave across its entire input space?

- Interpretability Metrics: Qualitative and quantitative assessments of how easily humans can comprehend the internal workings of an AI model. Simpler models (like linear regressions) are inherently more interpretable than complex deep neural networks.
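Feature importance can be estimated for any black-box model with permutation importance: shuffle one feature column and measure how much accuracy drops. This is a swapped-in, model-agnostic illustration, not SHAP or LIME, and the toy model and data are hypothetical.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)


def permutation_importance(model, rows, labels, n_repeats=10, seed=0):
    """Mean accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the labels
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical black box: approves when feature 0 exceeds 50, ignores feature 1."""
    return int(row[0] > 50)


data_rng = random.Random(42)
rows = [[data_rng.uniform(0, 100), data_rng.uniform(0, 100)] for _ in range(200)]
labels = [toy_model(r) for r in rows]
print(permutation_importance(toy_model, rows, labels))
```

Shuffling the ignored feature leaves accuracy untouched (importance 0.0), while shuffling the feature the model relies on drops accuracy by roughly half. This is a global explanation; local, per-prediction explanations are what tools like SHAP and LIME add.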

Why these are vital:

- Trust: Users are more likely to trust an AI if they can understand its reasoning.

- Debugging: Explainability helps developers identify and fix errors or biases within the model.

- Compliance: Regulations like GDPR’s “right to explanation” are making explainable AI a legal necessity in certain contexts.

ChatBench.org™ Tip: Tools like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) are invaluable for generating explanations for complex “black box” AI models. Integrating these into your MLOps pipeline is a proactive step towards responsible AI.

Ultimately, robust data governance is the foundation for ethical AI. “Invest in labeling, organizing, and monitoring data to prevent models from inheriting flaws or biases”. Without clean, unbiased data and transparent processes, even the best ethical KPIs will struggle to deliver meaningful results.

🔮 Conclusion

So, we’ve taken a deep dive into the vast ocean of artificial intelligence evaluation, haven’t we? From the foundational Model Quality KPIs like accuracy and precision, to the operational realities of System Quality and the strategic impact of Business Value, we’ve covered the entire spectrum.

Remember our earlier question: Can you manage what you don’t measure? The answer is a resounding no. But more importantly, you can’t optimize what you don’t understand. The journey from a promising AI prototype to a scalable, revenue-generating AI business application is paved with data, not just code.

Our Confident Recommendation:

Don’t fall into the trap of “analysis paralysis” or, conversely, “metric myopia.”

- Start Holistic: Adopt the multi-dimensional framework we outlined. If you only track latency, you might miss a hallucination crisis. If you only track ROI, you might ignore a looming data drift issue.

- Iterate Relentlessly: As we saw in the Weezer music video case study, the first version of your AI (or your KPI dashboard) won’t be perfect. Treat evaluation as a continuous loop, not a one-time checkpoint.

- Balance Automation with Humanity: Leverage auto-raters for scale, but never abandon human evaluation for nuance, ethics, and creativity. The “gold standard” is a hybrid approach.

- Prioritize Ethics Early: Don’t wait until your model is in production to check for bias. Integrate Ethical KPIs like demographic parity and explainability from day one.

The future of AI isn’t just about building smarter models; it’s about building smarter measurement systems that ensure those models deliver real, tangible value. Whether you are scaling AI agents for 2026 or optimizing your current cloud infrastructure, the key to turning AI insight into competitive edge lies in your ability to measure what truly matters.

Ready to stop guessing and start knowing? It’s time to build your dashboard.

🔗 Recommended Links

Ready to take action? Here are the essential tools, platforms, and resources we recommend for building, deploying, and measuring your AI models effectively.

🛒 Cloud & Infrastructure Platforms

Shop Cloud GPU Services & AI Platforms on:

- Google Cloud Vertex AI: Google Cloud Vertex AI Official Website | Search on Amazon

- Amazon SageMaker: Amazon SageMaker Official Website | Search on Amazon

- DigitalOcean: DigitalOcean Official Website | Search on Amazon

- Paperspace: Paperspace Official Website | Search on Amazon

- RunPod: RunPod Official Website | Search on Amazon

📚 Essential Reading & Books

Deepen your understanding with these highly rated resources:

- “Artificial Intelligence: A Modern Approach” by Stuart Russell and Peter Norvig: Available on Amazon

- “Deep Learning” by Ian Goodfellow, Yoshua Bengio, and Aaron Courville: Available on Amazon

- “Human Compatible: Artificial Intelligence and the Problem of Control” by Stuart Russell: Available on Amazon

- “The Alignment Problem: Machine Learning and Human Values” by Brian Christian: Available on Amazon

🏢 Strategic Consulting & Services

- Acacia Advisors: For expert analytics support, framework development, and customized metric strategies. Visit Acacia Advisors

❓ FAQ

How do you measure the business impact of AI models?

Measuring business impact requires moving beyond technical metrics to financial and operational outcomes.

- Define Baselines: Before deployment, establish clear baselines for key metrics like cost per transaction, handling time, or conversion rates.

- Track ROI: Calculate the Return on Investment by comparing net benefits (cost savings + revenue uplift) against the Total Cost of Ownership (TCO).

- Attribute Revenue: Use A/B testing or controlled rollouts to isolate the revenue generated specifically by the AI (e.g., increased sales from a recommendation engine).

- Monitor Efficiency: Track process automation rates and cycle time reductions to quantify operational gains.

- Assess Customer Value: Measure changes in Customer Lifetime Value (CLV), churn rates, and Net Promoter Score (NPS) to gauge long-term impact.


What are the most important AI metrics for real-time decision making?

For real-time applications, speed and reliability are paramount.

- Latency: The time from request to response. High latency kills user experience in chatbots or trading algorithms.

- Throughput: The number of requests the system can handle per second. This ensures the system doesn’t crash under load.

- Error Rate: The percentage of failed requests. In real-time systems, even a small error rate can lead to significant downstream issues.

- Uptime/Availability: The system typically needs to be operational 99.9%+ of the time, depending on your service-level agreement.

- Context Window Utilization: For LLMs, ensuring the model can process the necessary context within the time limit is critical for real-time relevance.
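A sketch of how these real-time KPIs might be computed from one window of request logs. The log format (latency in milliseconds plus a success flag), the synthetic latencies, and the 30-day period are assumptions; percentiles use the nearest-rank method, and the downtime helper shows what an availability target such as 99.9% means in minutes.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def realtime_kpis(requests, window_seconds):
    """requests: list of (latency_ms, succeeded) tuples from one time window."""
    latencies = [ms for ms, _ in requests]
    failures = sum(1 for _, ok in requests if not ok)
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
        "error_rate": failures / len(requests),
        "throughput_rps": len(requests) / window_seconds,
    }


def downtime_budget_minutes(availability, days=30):
    """Minutes of allowed downtime per period for an availability target."""
    return (1 - availability) * days * 24 * 60


# Synthetic window: 1,000 requests over 60 s, latencies 120-255 ms, every 50th fails.
requests = [(120 + (i % 10) * 15, i % 50 != 0) for i in range(1000)]
print(realtime_kpis(requests, window_seconds=60))
print(round(downtime_budget_minutes(0.999), 1))  # about 43.2 minutes per 30 days
```

Tail percentiles (p95, p99) matter more than the average for chatbots and trading systems, because the slowest few percent of requests are the ones users notice.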


Which KPIs best track AI model drift and performance degradation?

Models degrade over time as real-world data changes. To catch this early:

- Data Drift Detection: Monitor statistical differences between training data and live input data (e.g., mean, variance, distribution shifts).

- Concept Drift: Track changes in the relationship between input features and the target variable.

- Prediction Confidence Scores: A sudden drop in the average confidence of model predictions can signal that the model is encountering unfamiliar data.

- Human-in-the-Loop Intervention Rates: A rising rate of human overrides or corrections is a strong indicator of model degradation.

- Re-training Frequency: Track how often the model needs to be updated to maintain performance.
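For data drift, the Population Stability Index (PSI) is one widely used score that compares binned distributions of a feature in training data and live traffic. The sketch bins on the training range with equal-width bins; the synthetic data are hypothetical, and the common rule of thumb (below 0.1 stable, above 0.25 significant shift) is a convention rather than a universal threshold.

```python
import math
import random


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample (e.g. training data) and a live sample.

    Bins are equal-width over the reference range; live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


rng = random.Random(0)
training = [rng.gauss(0, 1) for _ in range(5000)]
live_stable = [rng.gauss(0, 1) for _ in range(5000)]
live_shifted = [rng.gauss(1, 1) for _ in range(5000)]  # mean moved by one std dev
print(round(population_stability_index(training, live_stable), 3))
print(round(population_stability_index(training, live_shifted), 3))
```

PSI only flags data drift in the inputs; concept drift (a changed relationship between inputs and the target) still needs labeled outcomes or the intervention-rate signal described above.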

How can companies translate AI accuracy into competitive advantage?

Accuracy alone isn’t a strategy; it’s a foundation. Here’s how to leverage it:

- Differentiation: Use superior accuracy to offer a better user experience (e.g., more relevant search results, fewer false positives in fraud detection) that competitors can’t match.

- Cost Leadership: Higher accuracy often means fewer errors, less rework, and lower human intervention costs, leading to a leaner operation.

- Trust & Brand Equity: In high-stakes fields like healthcare or finance, proven accuracy builds trust, allowing companies to enter new markets or charge a premium.

- Agility: Accurate models provide better insights, enabling faster and more confident strategic decisions.

- Innovation: High-accuracy models can unlock new use cases that were previously too risky or unreliable, opening new revenue streams.

What is the difference between Model Quality and System Quality KPIs?

- Model Quality KPIs focus on the intelligence of the algorithm itself: Is the output correct? Is it creative? Is it safe? (e.g., Accuracy, Hallucination Rate, F1 Score).

- System Quality KPIs focus on the infrastructure running the model: Is it fast? Is it reliable? Can it scale? (e.g., Latency, Uptime, Throughput, GPU Utilization).

- Analogy: Model Quality is the chef’s skill; System Quality is the kitchen’s equipment and workflow. You need both for a successful restaurant.

How do “Auto-raters” compare to human evaluation for Gen AI?

- Auto-raters (LLM-as-a-Judge): Fast, scalable, and cost-effective for large datasets. They are excellent for scoring coherence, fluency, and instruction following. However, they can miss subtle nuances, biases, or “common sense” errors.

- Human Evaluation: Slower and expensive, but essential for subjective qualities like creativity, empathy, and safety. It remains the “gold standard” for calibration.

- Best Practice: Use a hybrid approach. Train auto-raters on high-quality human evaluations, then use the auto-raters for continuous monitoring, with periodic human audits to ensure alignment.


📚 Reference Links

- Google Cloud: Gen AI KPIs: Measuring AI Success Deep Dive

- Google Cloud: KPIs for Gen AI: Why Measuring Your New AI Is Essential to Its Success

- Acacia Advisors: Measuring Success: Key Metrics and KPIs for AI Initiatives

- MIT & Boston Consulting Group: AI and the Future of Work (Source for executive belief statistics)

- Hugging Face: HELM: Holistic Evaluation of Language Models

- Stanford University: BIG-Bench: Beyond the Imitation Game Benchmark

- NVIDIA: GPU Accelerated Computing

- Google Cloud: Vertex AI Documentation

- Amazon Web Services: SageMaker Documentation

- DigitalOcean: GPU Droplets

- Paperspace: Gradient GPU Cloud

- RunPod: Serverless GPU Cloud