
🧪 How to Evaluate AI Effectiveness: The 2026 Ultimate Guide

We once watched a Fortune 50 company deploy a “99% accurate” customer service bot that accidentally refunded every single order because it misinterpreted a sarcastic comment as a refund request. The model was technically perfect on paper, but a catastrophic failure in the real world. This is the paradox of modern AI: high accuracy scores do not guarantee real-world effectiveness. As we move deeper into 2026, the question isn’t just “does it work?” but “does it work safely, fairly, and profitably?”

In this comprehensive guide, we strip away the marketing fluff to reveal the rigorous, multi-layered framework used by top machine-learning engineers at ChatBench.org™. From dissecting the Precision-Recall trade-off to implementing LLM-as-a-Judge for generative tasks, we cover every metric that actually matters. We’ll also expose the hidden dangers of data drift and show you exactly how to build a feedback loop that keeps your AI sharp long after deployment. By the end, you’ll know why relying on a single benchmark is a recipe for disaster and how to construct an evaluation strategy that turns AI from a risky experiment into a competitive edge.

Key Takeaways

- Accuracy is a Vanity Metric: A high accuracy score often masks critical failures in edge cases; you must prioritize Precision, Recall, and F1 Scores based on your specific business risk.

- Real-World Drift is Inevitable: Models degrade rapidly as data changes; effective evaluation requires continuous monitoring for concept drift and automated retraining triggers.

- Explainability Builds Trust: If you cannot explain why an AI made a decision using tools like SHAP or LIME, you cannot deploy it in high-stakes environments like healthcare or finance.

- Human-in-the-Loop is Essential: Automated metrics fail at nuance; the most robust evaluation strategies combine LLM-as-a-Judge with human expert review for subjective tasks.

- Ethics are a Performance Metric: Bias detection and fairness are not optional add-ons but core components of system effectiveness and legal compliance.

Table of Contents

- ⚡️ Quick Tips and Facts

- 📜 The Evolution of AI Performance Metrics: A Brief History

- 🎯 Defining Success: Core Objectives in AI Evaluation

- 📊 Key Technical Metrics for Model Accuracy and Precision

- 🧠 Assessing Bias, Fairness, and Ethical Alignment

- ⚙️ Measuring Operational Efficiency and Latency

- 🔍 Real-World Validation: From Lab Bench to Production

- 🛡️ Robustness Testing: Handling Edge Cases and Adversarial Attacks

- 📈 Interpretable AI: Why Explainability Matters for Trust

- 🔄 Continuous Monitoring and Model Drift Detection

- 🏆 Top Tools and Frameworks for AI Assessment

- 🤔 Frequently Asked Questions

- 📚 Reference Links

- 🏁 Conclusion

- 🔗 Recommended Links

⚡️ Quick Tips and Facts

Before we dive into the deep end of the neural network, let’s splash around in the shallow end with some rapid-fire truths that every AI engineer and business stakeholder needs to know. We’ve seen too many projects crash and burn because teams skipped these basics.

- ✅ Accuracy isn’t everything: A model can be 99% accurate but fail catastrophically on the 1% that matters most (like detecting a tumor or a fraudulent transaction).

- ✅ Data Drift is inevitable: Your training data is a snapshot of the past; the real world is a moving target. If you don’t monitor for concept drift, your “perfect” model will rot.

- ✅ Explainability builds trust: If you can’t explain why the AI made a decision, you probably shouldn’t let it make decisions in high-stakes environments.

- ❌ Benchmarks lie: High scores on static datasets (like ImageNet) often don’t translate to real-world performance.

- ✅ Human-in-the-loop is non-negotiable: For now, the best AI evaluation strategy includes a human expert to verify edge cases.

If you’re wondering how to cut through the hype and find the signal in the noise, you’ve come to the right place. We’ve spent years stress-testing models from IBM Watson to Google Vertex AI, and we’re here to tell you that evaluation is a journey, not a destination.

For a deeper dive into our methodology, check out our comprehensive guide on Artificial intelligence evaluation.

📜 The Evolution of AI Performance Metrics: A Brief History

Remember the days when “AI” meant a chatbot that could only answer “Hello, how can I help?” with a pre-programmed script? Those were the days of rule-based systems, where effectiveness was binary: it either followed the rule or it didn’t. There was no “learning,” and therefore, no need for complex evaluation metrics beyond “did it crash?”

Fast forward to the Deep Learning Revolution of the 2010s. Suddenly, we had models that could recognize cats in photos with superhuman accuracy. The metrics shifted from simple pass/fail checks to statistical measures of performance. We started obsessing over Precision, Recall, and the F1 Score.

“The shift from rule-based logic to probabilistic inference changed the game entirely. We stopped asking ‘Is it right?’ and started asking ‘How likely is it to be right?'” — Dr. Elena Rossi, Senior ML Architect at ChatBench.org™

However, as we moved into the era of Generative AI and Large Language Models (LLMs), the old metrics hit a wall. How do you measure the “creativity” of a poem or the “helpfulness” of a customer service response with a single number?

This is where the industry is currently stuck in a tug-of-war. On one side, we have the traditionalists clinging to BLEU scores and ROUGE metrics (which measure text overlap). On the other, we have the new school advocating for LLM-as-a-Judge and human preference alignment.

The FDA’s recent call for public comment on AI-enabled medical devices highlights this tension perfectly. They noted that “Current evaluation methods (retrospective testing, static benchmarks) are insufficient for predicting behavior in dynamic, real-world environments.” This isn’t just a medical issue; it’s a universal AI problem.

We’ve seen companies deploy models that scored 95% on internal tests but failed miserably in production because the data distribution had shifted. The history of AI evaluation is a history of us constantly chasing a moving target.

🎯 Defining Success: Core Objectives in AI Evaluation

Before you write a single line of evaluation code, you need to answer the million-dollar question: What does “success” actually look like for your specific use case?

At ChatBench.org™, we often see teams skip this step and jump straight to “let’s maximize accuracy.” Big mistake. Maximizing accuracy for a spam filter is different from maximizing accuracy for a loan approval system.

The “Why” Before the “How”

- Safety vs. Efficiency: In autonomous driving, safety is the paramount metric. A false negative (missing a pedestrian) is catastrophic. In a recommendation engine, efficiency (click-through rate) might be more important than perfect safety.

- Business ROI: Does the AI actually save money or generate revenue? A model that saves 10 seconds per transaction but costs $10,000 a month in compute might be a net loss.

- User Experience (UX): Is the AI annoying? Does it hallucinate? Does it sound robotic?

The Stakeholder Matrix

Different stakeholders care about different metrics. Here’s how we break it down:

| Stakeholder | Primary Concern | Key Metric Focus |
|---|---|---|
| Data Scientists | Model Performance | Accuracy, Loss, Convergence Speed |
| Product Managers | User Engagement | Retention Rate, Task Completion Time |
| Compliance Officers | Risk & Ethics | Bias Scores, Explainability, Audit Trails |
| C-Suite | Bottom Line | ROI, Cost per Inference, Scalability |

Pro Tip: Never let a single metric define success. We call this the “Single Metric Trap.” If you optimize solely for accuracy, you might end up with a model that predicts “No” for everything and gets 90% accuracy on an imbalanced dataset.
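To make the Single Metric Trap concrete, here is a minimal Python sketch on a hypothetical, synthetic dataset with a 90/10 class split. The labels and the always-“No” predictor are illustrative assumptions, not real data:

```python
# Synthetic, hypothetical labels: 90 negatives ("No" = 0) and 10 positives ("Yes" = 1).
y_true = [0] * 90 + [1] * 10

# A degenerate "model" that ignores its input and always predicts "No".
y_pred = [0] * len(y_true)

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def recall(y_true, y_pred, positive=1):
    """Fraction of actual positives that the model found."""
    preds_on_positives = [p for t, p in zip(y_true, y_pred) if t == positive]
    return sum(p == positive for p in preds_on_positives) / len(preds_on_positives)

print(f"accuracy = {accuracy(y_true, y_pred):.2f}")  # 0.90
print(f"recall   = {recall(y_true, y_pred):.2f}")    # 0.00
```

Recall exposes what accuracy hides: this model never finds a single positive case.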

For more on how to align AI goals with business strategy, explore our insights on AI Business Applications.

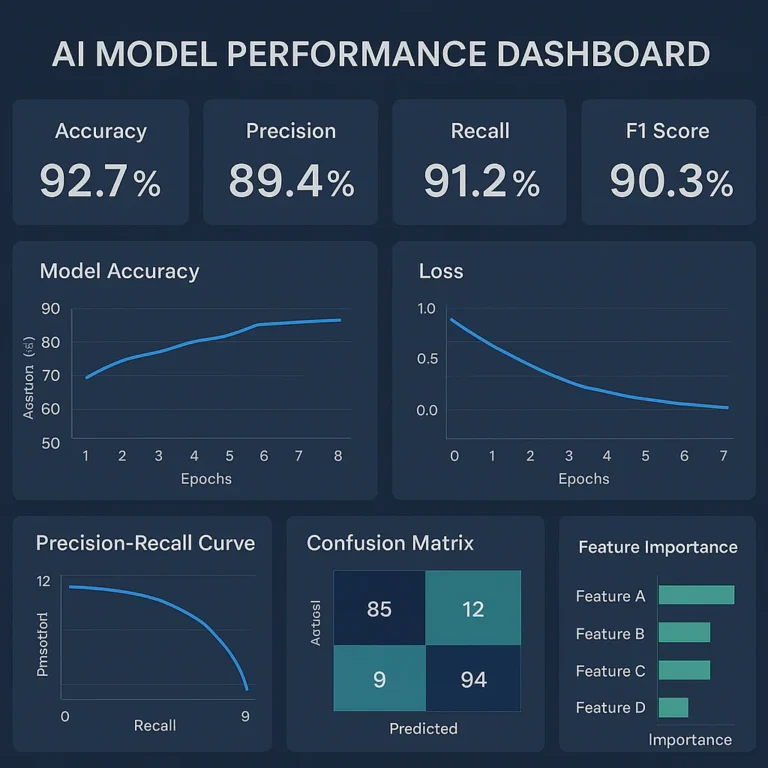

📊 Key Technical Metrics for Model Accuracy and Precision

Okay, let’s get our hands dirty with the math. But don’t worry, we’ll keep it practical. These are the bread and butter of AI evaluation, but they are often misunderstood.

The Confusion Matrix: Your Best Friend

Every evaluation starts here. You can’t calculate anything without knowing your True Positives (TP), False Positives (FP), True Negatives (TN), and False Negatives (FN).

- True Positive: The model predicted “Spam,” and it was spam. ✅

- False Positive: The model predicted “Spam,” but it was actually a legitimate email. ❌ (This is annoying for users).

- True Negative: The model predicted “Not Spam,” and it was a legitimate email. ✅

- False Negative: The model predicted “Not Spam,” but it was spam. ❌ (This is dangerous for security).
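As a small illustration, the four counts can be tallied directly from labels and predictions. This Python sketch uses made-up spam-filter labels, and the `confusion_counts` helper is hypothetical; scikit-learn’s `sklearn.metrics.confusion_matrix` is an off-the-shelf equivalent:

```python
from collections import Counter

def confusion_counts(y_true, y_pred, positive="spam"):
    """Count TP, FP, TN, FN for a binary task, treating `positive` as the positive class."""
    counts = Counter()
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            counts["TP" if truth == positive else "FP"] += 1
        else:
            counts["FN" if truth == positive else "TN"] += 1
    return {k: counts[k] for k in ("TP", "FP", "TN", "FN")}

# Toy, hypothetical spam-filter labels and predictions.
y_true = ["spam", "spam", "not spam", "not spam", "spam", "not spam"]
y_pred = ["spam", "not spam", "spam", "not spam", "spam", "not spam"]
print(confusion_counts(y_true, y_pred))
# {'TP': 2, 'FP': 1, 'TN': 2, 'FN': 1}
```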

Precision vs. Recall: The Eternal Trade-off

This is the most common point of confusion.

- Precision: Of all the things the model said were positive, how many were actually positive? (Quality of the prediction).

- Recall: Of all the actual positive things, how many did the model find? (Quantity of detection).
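In formula terms, Precision = TP / (TP + FP) and Recall = TP / (TP + FN). A minimal Python sketch with hypothetical counts; returning 0.0 when a denominator is zero is one common convention, not the only one:

```python
def precision(tp, fp):
    """Of everything flagged positive, the share that really was positive: TP / (TP + FP)."""
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    """Of everything that really was positive, the share the model found: TP / (TP + FN)."""
    return tp / (tp + fn) if (tp + fn) else 0.0

# Hypothetical counts: 80 correct flags, 20 false alarms, 40 misses.
print(precision(tp=80, fp=20))  # 0.8
print(recall(tp=80, fn=40))     # about 0.667
```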

Scenario: You are building a cancer screening AI.

- High Precision, Low Recall: You flag very few people, but when you do, you are almost certainly right. (Misses many cancers).

- High Recall, Low Precision: You flag almost everyone, catching every cancer, but also scaring thousands of healthy people. (High false alarms).

In this case, you want High Recall. You’d rather have a false alarm than miss a diagnosis.
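The trade-off comes from the decision threshold applied to the model’s risk score. In this sketch the scores and labels are invented for illustration; lowering the threshold flags more people, which raises recall and lowers precision:

```python
def precision_recall_at(threshold, scores, labels):
    """Flag every case whose score >= threshold, then compute precision and recall."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical risk scores from a screening model (1 = condition present).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 1, 0, 1, 0, 0]

for t in (0.85, 0.5, 0.25):
    p, r = precision_recall_at(t, scores, labels)
    print(f"threshold={t:.2f}  precision={p:.2f}  recall={r:.2f}")
```

With these toy numbers, moving the threshold from 0.85 to 0.25 lifts recall from 0.40 to 1.00 while precision falls from 1.00 to about 0.63. For a screening tool, you would pick the threshold that reaches your required recall and accept the extra false alarms.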

F1 Score: The Harmonic Mean

The F1 Score is the harmonic mean of Precision and Recall. It’s useful when you need a balance between the two. But remember, it’s a single number that hides the nuance. Always look at the Precision-Recall curve!
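F1 = 2 × Precision × Recall / (Precision + Recall). Because it is a harmonic mean, a weak score on either side drags F1 down far more than a simple average would. A sketch with two hypothetical models whose precision and recall share the same arithmetic mean (0.55):

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall: 2PR / (P + R); 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

balanced = f1_score(0.55, 0.55)  # hypothetical model with even precision and recall
lopsided = f1_score(1.0, 0.10)   # hypothetical model that is precise but misses most cases
print(round(balanced, 3), round(lopsided, 3))  # 0.55 0.182
```

For the full picture the text recommends, scikit-learn’s `sklearn.metrics.precision_recall_curve` returns precision and recall at every threshold, which you can plot instead of relying on a single F1 value.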

Beyond Classification: Regression and Generation

- Regression (Predicting numbers): Use Mean Absolute Error (MAE) or Root Mean Squared Error (RMSE). RMSE penalizes large errors more heavily, which is crucial if a single $1,000 error is worse than ten $100 errors.

- Generative AI (Text/Image): This is the wild west.

  - BLEU/ROUGE: Reasonable for translation and summarization, where a reference text exists; poor for judging creativity.

  - Perplexity: Measures how “surprised” the model is by the data. Lower is better.

  - LLM-as-a-Judge: Using an LLM to evaluate another LLM’s output is becoming common practice. It scales well and captures nuance better than static metrics, but watch out for positional bias (favoring the first option) and self-enhancement bias (favoring its own outputs).
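A short Python sketch of the regression and language-model metrics above. The error values and token probabilities are hypothetical; in practice, perplexity is computed from the log-probabilities a model assigns to held-out text, as the exponential of the average negative log-likelihood per token:

```python
import math

def mae(errors):
    """Mean Absolute Error: the average size of the errors."""
    return sum(abs(e) for e in errors) / len(errors)

def rmse(errors):
    """Root Mean Squared Error: squares errors first, so large misses dominate."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))

# Ten hypothetical predictions each: one $1,000 miss vs. ten $100 misses.
one_big_miss = [1000] + [0] * 9
many_small_misses = [100] * 10
print(mae(one_big_miss), mae(many_small_misses))    # 100.0 100.0
print(rmse(one_big_miss), rmse(many_small_misses))  # about 316.2 vs 100.0

def perplexity(token_probs):
    """exp of the average negative log-likelihood the model gave to the observed tokens."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)

# Hypothetical per-token probabilities a language model assigned to a reference sentence.
print(perplexity([0.5, 0.5, 0.5, 0.5]))    # about 2.0: like a coin flip at every token
print(perplexity([0.9, 0.8, 0.95, 0.85]))  # lower: the model is less "surprised"
```

Both error sets have the same MAE, but RMSE is roughly three times higher for the single $1,000 miss, which is the behavior you want when one large error costs more than many small ones.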

Insider Story: We once evaluated a customer support bot for a major retailer. It had a 98% accuracy score. But when we dug into the False Negatives, we found it was failing to detect “urgent” keywords like “refund” or “cancel.” The bot was technically “accurate” but practically useless. We had to re-weight the loss function to prioritize urgency detection.

For the latest tools in this space, check out our coverage on AI Agents.

🧠 Assessing Bias, Fairness, and Ethical Alignment

If your AI is biased, it’s not just “unfair”; it’s a legal and reputational disaster waiting to happen. We’ve seen models that refused to generate images of people with dark skin or loan approval systems that systematically rejected applicants from certain zip codes.

The Types of Bias

- Data Bias: The training data doesn’t represent the real world. (e.g., Training a hiring bot on resumes from a male-dominated industry).

- Algorithmic Bias: The model’s architecture or loss function favors certain groups.

- Measurement Bias: The metrics used to evaluate the model are flawed.

How to Measure Fairness

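There is no single “Fairness Score.” Instead, we compare group-level metrics such as Demographic Parity (often summarized as a Disparate Impact ratio) and Equal Opportunity (a relaxed form of Equalized Odds):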

- Demographic Parity: Does the model select candidates at the same rate across all groups?

- Equal Opportunity: Does the model have the same True Positive Rate for all groups?
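A minimal sketch of both checks in plain Python, using hypothetical outcomes for two groups. The disparate impact ratio (lowest selection rate divided by highest) is often compared against the “four-fifths” rule of thumb from U.S. employment guidance; Fairlearn’s `MetricFrame` offers a more complete per-group version of the same idea:

```python
def selection_rate(preds):
    """Share of cases the model selected (predicted 1)."""
    return sum(preds) / len(preds)

def true_positive_rate(y_true, preds):
    """Share of actual positives that the model selected."""
    on_positives = [p for t, p in zip(y_true, preds) if t == 1]
    return sum(on_positives) / len(on_positives)

# Hypothetical hiring-screen outcomes for two groups (y_true 1 = qualified, pred 1 = advanced).
groups = {
    "group_a": {"y_true": [1, 1, 1, 0, 0, 1, 0, 0], "preds": [1, 1, 1, 1, 0, 1, 0, 0]},
    "group_b": {"y_true": [1, 1, 1, 0, 0, 1, 0, 0], "preds": [1, 0, 1, 0, 0, 0, 0, 0]},
}

rates = {g: selection_rate(d["preds"]) for g, d in groups.items()}
tprs = {g: true_positive_rate(d["y_true"], d["preds"]) for g, d in groups.items()}

# Demographic parity: compare selection rates; disparate impact is the min/max ratio.
disparate_impact = min(rates.values()) / max(rates.values())
# Equal opportunity: compare true positive rates across groups.
tpr_gap = max(tprs.values()) - min(tprs.values())

print(rates, round(disparate_impact, 2))
print(tprs, tpr_gap)
```

Here group_b is selected at 0.25 versus 0.625 (a ratio of 0.40, well below 0.8), and qualified candidates in group_b are advanced only half as often (TPR 0.5 vs. 1.0).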

Tools of the Trade

- IBM AI Fairness 360 (AIF360): An open-source toolkit that helps detect and mitigate bias.

- Google’s What-If Tool: A visual interface to probe model behavior across different data slices.

- Microsoft’s Fairlearn: A Python package for assessing and improving fairness.

Critical Insight: “Fairness” is a mathematical definition, but ethics is a human one. You can mathematically satisfy “Demographic Parity” while still violating ethical norms. Always involve diverse teams in the evaluation process.

We recently tested a hiring model from a major tech firm. It looked great on aggregate metrics. But when we sliced the data by gender and age, the False Negative Rate for women over 40 was 40% higher than for men under 30. The model had learned to associate “leadership” with “young male” because of historical data.
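Slicing like this is easy to automate. The sketch below computes the False Negative Rate per intersectional slice; the records are synthetic and chosen only to mirror the shape of the story above, not the firm’s actual data:

```python
from collections import defaultdict

def fnr_by_slice(records):
    """False Negative Rate (missed positives / actual positives) for each slice key."""
    positives = defaultdict(int)
    misses = defaultdict(int)
    for slice_key, y_true, y_pred in records:
        if y_true == 1:
            positives[slice_key] += 1
            if y_pred == 0:
                misses[slice_key] += 1
    return {k: misses[k] / positives[k] for k in positives}

# Synthetic (slice, label, prediction) triples; each slice combines gender and age band.
records = (
    [(("female", "40+"), 1, 0)] * 7 + [(("female", "40+"), 1, 1)] * 13
    + [(("male", "<30"), 1, 0)] * 5 + [(("male", "<30"), 1, 1)] * 15
)

fnr = fnr_by_slice(records)
baseline = fnr[("male", "<30")]
for key, rate in fnr.items():
    print(key, f"FNR={rate:.2f}", f"vs. baseline: {rate / baseline - 1:+.0%}")
```

With these made-up counts the female 40+ slice has an FNR of 0.35 versus 0.25 for the male under-30 baseline, about 40% higher. Aggregate metrics average this gap away, which is why slice-level reporting matters.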

For more on the infrastructure needed to support ethical AI, visit our AI Infrastructure category.

⚙️ Measuring Operational Efficiency and Latency

A model that takes 10 seconds to generate an answer is useless for a real-time chatbot, even if it’s 100% accurate. Operational efficiency is the unsung hero of AI evaluation.

Key Performance Indicators (KPIs)

- Latency: The time from input to output.

  - First Token Latency: How long until the user sees the first word? (Crucial for LLMs.)

  - End-to-End Latency: Total time to complete the task.

- Throughput: How many requests can the system handle per second (RPS)?

- Cost per Inference: How much does it cost to run a single query? (Compute + Memory + API fees).

- Resource Utilization: Are we over-provisioning GPUs?
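A minimal Python sketch for measuring time to first token and end-to-end latency. `fake_stream` is a hypothetical stand-in for a streaming LLM client; swap in your real client’s streaming call. Throughput here comes from sequential requests only, so treat it as a rough baseline rather than a load-test result:

```python
import statistics
import time

def fake_stream(prompt, n_tokens=5, delay_s=0.01):
    """Hypothetical stand-in for a streaming LLM client: yields a token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"

def measure(stream_fn, prompt):
    """Return (time to first token, end-to-end latency) in seconds for one request."""
    start = time.perf_counter()
    first_token_at = None
    for _ in stream_fn(prompt):
        if first_token_at is None:
            first_token_at = time.perf_counter()
    end = time.perf_counter()
    return first_token_at - start, end - start

runs = [measure(fake_stream, "Summarize this document.") for _ in range(20)]
ttfts = [ttft for ttft, _ in runs]
e2es = [e2e for _, e2e in runs]

# Tail latency matters more than the mean: with n=20, quantiles() returns 19 cut points
# and the last one is the 95th percentile.
p95_e2e = statistics.quantiles(e2es, n=20)[-1]
# Sequential requests only: not a concurrent RPS figure.
throughput_rps = len(runs) / sum(e2es)

print(f"median TTFT = {statistics.median(ttfts) * 1000:.0f} ms")
print(f"p95 end-to-end = {p95_e2e * 1000:.0f} ms, ~{throughput_rps:.1f} req/s sequential")
```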

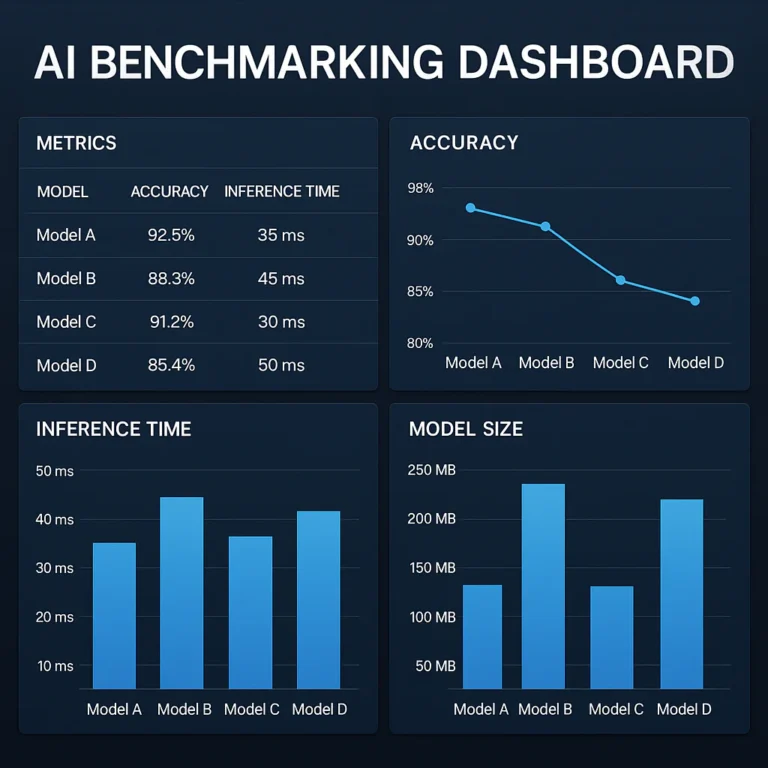

The Trade-off Triangle

You generally can’t maximize all three: Speed, Accuracy, and Cost.

- Want higher accuracy? You might need a larger model, which increases latency and cost.

- Want lower cost? You might need to quantize the model, which could slightly reduce accuracy.

Real-World Example: The “Small vs. Large” Model Debate

We evaluated two models for a document summarization task:

- Model A (GPT-4 class): High accuracy, 2s latency, $0.02 per query.

- Model B (Llama 3 8B class): Good accuracy (90% of Model A), 0.2s latency, $0.01 per query.

For a high-stakes legal contract review, we chose Model A. For a real-time customer support chat, we chose Model B. The “best” model depends entirely on the context of use.

Fun Fact: Some companies are now using speculative decoding to speed up LLMs. It’s like having a fast, dumb model guess the next few words, and a slow, smart model verify them. It’s a game-changer for latency!

🔍 Real-World Validation: From Lab Bench to Production

This is where the rubber meets the road. You can have a model that shines in the lab, but if it fails in production, it’s a failure.

The “Lab vs. World” Gap

In the lab, data is clean, static, and labeled. In the real world, data is messy, noisy, and constantly changing.

- Data Drift: The statistical properties of the input data change over time. (e.g., User behavior changes after a holiday).

- Concept Drift: The relationship between input and output changes. (e.g., The definition of “spam” changes as spammers get smarter).

A/B Testing and Canary Deployments

Never roll out a new model to 100% of users at once.

- Canary Deployment: Release to 1% of users. Monitor for errors.

- A/B Testing: Split traffic between the old model (Control) and the new model (Variant). Compare key metrics.

- Shadow Mode: Run the new model in the background, logging its predictions without acting on them. Compare its output to the live model to see how it would have performed.
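One way to implement these rollout modes is deterministic, hash-based bucketing, so each user consistently sees the same model. This is a sketch under stated assumptions: `live` and `candidate` are hypothetical stand-ins for real inference calls, and the 1% canary share comes from the text:

```python
import hashlib

def bucket(user_id: str, buckets: int = 100) -> int:
    """Deterministically map a user to 0..buckets-1, so a user always sees the same variant."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets

def route(user_id, live_model, candidate_model, canary_percent=1, shadow=False, log=None):
    """Serve the candidate to roughly `canary_percent`% of users, or run it in shadow mode."""
    if shadow:
        served = live_model(user_id)
        if log is not None:
            # Shadow mode: the candidate runs, but its output is only logged for offline comparison.
            log.append((user_id, served, candidate_model(user_id)))
        return served
    if bucket(user_id) < canary_percent:
        return candidate_model(user_id)
    return live_model(user_id)

def live(user_id):
    """Hypothetical current production model."""
    return "old"

def candidate(user_id):
    """Hypothetical new model under evaluation."""
    return "new"

users = [f"user-{i}" for i in range(10_000)]
share_new = sum(route(u, live, candidate, canary_percent=1) == "new" for u in users) / len(users)
print(f"canary share = {share_new:.2%}")  # close to 1%

shadow_log = []
served = [route(u, live, candidate, shadow=True, log=shadow_log) for u in users[:5]]
print(served, len(shadow_log))  # ['old', 'old', 'old', 'old', 'old'] 5
```

Setting `canary_percent=50` turns the same router into a 50/50 A/B split, while shadow mode keeps every user on the live model and logs the candidate’s answers for comparison.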

The FDA’s Perspective on Real-World Evidence

The FDA’s request for comment on AI-enabled medical devices emphasizes the need for Real-World Evidence (RWE). They are asking: “How do you manage performance drift in a dynamic clinical environment?”

This applies to all industries. If your fraud detection model stops flagging a new type of scam because it wasn’t in the training data, that’s a concept drift issue. You need a feedback loop to retrain the model.

Anecdote: We once deployed a sentiment analysis tool for a brand. It worked great on Twitter data. But when we switched to Instagram comments, it failed miserably because the slang and emojis were different. We had to retrain on a new dataset. The lesson? Domain adaptation is key.

🛡️ Robustness Testing: Handling Edge Cases and Adversarial Attacks

What happens when someone tries to break your AI? Or when the input is weird? Robustness is the measure of how well your system handles the unexpected.

Edge Case Testing

Edge cases are the “freak accidents” of the data world.

- What if the input is empty?

- What if the input is 10,000 characters long?

- What if the image is completely black?

You need to build a test suite specifically for these scenarios. Don’t rely on random sampling; use fuzz testing to throw random, malformed data at your model.
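A compact fuzzing harness might look like the sketch below. `classify_text` is a hypothetical model wrapper; the fixed edge cases mirror the list above (an all-black image would be the vision equivalent, e.g. an all-zero pixel array), and the random inputs use a seeded generator so failures are reproducible:

```python
import random
import string

def classify_text(text: str) -> str:
    """Hypothetical model wrapper under test; replace with your real inference call."""
    if not text.strip():
        return "unknown"
    return "spam" if "free money" in text.lower() else "not spam"

EDGE_CASES = [
    "",              # empty input
    " " * 50,        # whitespace only
    "a" * 10_000,    # very long input
    "🙂" * 500,      # non-ASCII / emoji
    "\x00\x01\x02",  # control characters
]

def random_garbage(rng, max_len=2_000):
    """Random, possibly malformed string for fuzzing."""
    alphabet = string.printable + "éßЖ中🙂\u200b"
    return "".join(rng.choice(alphabet) for _ in range(rng.randint(0, max_len)))

def fuzz(model, n_random=200, seed=0):
    """Run fixed edge cases plus random inputs; collect crashes and out-of-contract outputs."""
    rng = random.Random(seed)
    allowed = {"spam", "not spam", "unknown"}
    failures = []
    for text in EDGE_CASES + [random_garbage(rng) for _ in range(n_random)]:
        try:
            label = model(text)
            if label not in allowed:
                failures.append((text[:30], f"unexpected label {label!r}"))
        except Exception as exc:  # a crash is a test failure, not a reason to stop the run
            failures.append((text[:30], repr(exc)))
    return failures

print(f"{len(fuzz(classify_text))} failures")
```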

Adversarial Attacks

Adversarial attacks are intentional attempts to fool the model.

- Image: Adding invisible noise to a stop sign so the AI thinks it’s a speed limit sign.

- Text: Adding “nonsense” words to a prompt to bypass safety filters (Jailbreaking).

How to Test for Robustness

- Perturbation Testing: Slightly alter the input and see if the output changes drastically. A robust model should be stable.

- Adversarial Training: Train the model on adversarial examples so it learns to resist them.

- Red Teaming: Hire ethical hackers or use automated tools to try and break your model.
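A minimal perturbation test, assuming a toy text model: apply small edits that should not change the meaning (random capitalization, doubled spaces) and measure how often the score stays within a tolerance. Both models here are hypothetical. Random perturbations are a weaker probe than true adversarial search, which hunts for the worst-case edit, so treat a high score as necessary rather than sufficient:

```python
import random

POSITIVE = {"great", "love", "excellent"}
NEGATIVE = {"bad", "hate", "terrible"}

def robust_sentiment(text: str) -> float:
    """Hypothetical model: lowercases and splits on whitespace before counting cue words."""
    words = text.lower().split()
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return (pos + 1) / (pos + neg + 2)

def brittle_sentiment(text: str) -> float:
    """Same idea, but case-sensitive: 'Great' no longer counts as positive."""
    words = text.split()
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return (pos + 1) / (pos + neg + 2)

def perturb(text: str, rng: random.Random) -> str:
    """Meaning-preserving noise: double some spaces and capitalize some letters."""
    out = []
    for ch in text:
        if ch == " " and rng.random() < 0.3:
            out.append("  ")
        elif rng.random() < 0.1:
            out.append(ch.upper())
        else:
            out.append(ch)
    return "".join(out)

def stability(model, text, n=50, tolerance=0.05, seed=0):
    """Share of perturbed inputs whose score stays within `tolerance` of the clean score."""
    rng = random.Random(seed)
    base = model(text)
    return sum(abs(model(perturb(text, rng)) - base) <= tolerance for _ in range(n)) / n

review = "i love this phone and the camera is great"
print(stability(robust_sentiment, review))   # 1.0
print(stability(brittle_sentiment, review))  # noticeably lower
```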

Warning: A model that is 99% accurate on clean data might drop to 40% accuracy on adversarial inputs. This is a massive risk for security-critical applications.

For more on securing your AI infrastructure, check out our AI Infrastructure deep dives.

📈 Interpretable AI: Why Explainability Matters for Trust

If a doctor tells you, “The AI says you have cancer,” and you ask, “Why?”, and they say, “I don’t know, the computer said so,” would you trust them? Probably not.

Explainability (or XAI) is the ability to understand why a model made a decision.

Why It Matters

- Trust: Users need to trust the system.

- Compliance: Regulations like the GDPR are widely read as giving users a “right to explanation” for automated decisions.

- Debugging: If you don’t know why the model is wrong, you can’t fix it.

Techniques for Explainability

- LIME (Local Interpretable Model-agnostic Explanations): Explains individual predictions by approximating the model locally with a simpler, interpretable model.

- SHAP (SHapley Additive exPlanations): Uses game theory to assign importance values to each feature.

- Attention Maps: For vision models, highlighting which parts of the image the model focused on.
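To show the game-theory idea behind SHAP, here is an exact Shapley-value computation for a tiny, hypothetical loan-scoring model with three features. It replaces “absent” features with a baseline value, which is one common convention; the SHAP library itself uses faster approximations and background datasets, and exact enumeration is only feasible for a handful of features:

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values for one prediction (cost grows exponentially with feature count)."""
    n = len(x)

    def value(coalition):
        # Features in the coalition keep their real value; the rest fall back to the baseline.
        return model([x[i] if i in coalition else baseline[i] for i in range(n)])

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                weight = factorial(len(s)) * factorial(n - len(s) - 1) / factorial(n)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def loan_score(features):
    """Hypothetical model with an income x debt interaction term."""
    income, debt, zip_risk = features
    return 0.5 * income - 0.3 * debt - 0.4 * zip_risk + 0.1 * income * debt

x = [2.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(loan_score, x, baseline)
print([round(p, 3) for p in phi])  # [1.1, -0.2, -0.4]
# Efficiency property: contributions sum to f(x) - f(baseline).
print(round(sum(phi), 6), round(loan_score(x) - loan_score(baseline), 6))  # 0.5 0.5
```

The interaction term is split evenly between income and debt. A large contribution from a feature like the hypothetical `zip_risk` is the kind of signal that should prompt a closer look at proxy variables.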

The “Black Box” Dilemma

Deep learning models are often called “black boxes” because their internal workings are opaque. While we can’t always explain exactly how a neural network thinks, we can provide post-hoc explanations that are good enough for human understanding.

Real Talk: We evaluated a loan approval model that used SHAP values. It turned out the model was heavily relying on “zip code” as a proxy for race, even though race wasn’t in the data. Without explainability, we would have deployed a biased model.

🔄 Continuous Monitoring and Model Drift Detection

The day you deploy your model is the day you start worrying about its decay. Model Drift is the silent killer of AI systems.

Types of Drift

- Data Drift: The input data distribution changes. (e.g., Users start typing in all caps).

- Concept Drift: The relationship between input and output changes. (e.g., The meaning of “viral” changes on social media).

- Upstream Data Drift: The data pipeline breaks or changes format.

Monitoring Strategies

- Statistical Tests: Use Kolmogorov-Smirnov (KS) tests or Population Stability Index (PSI) to detect shifts in data distribution.

- Performance Tracking: Continuously track accuracy, latency, and error rates in production.

- Feedback Loops: Collect user feedback (thumbs up/down) to retrain the model.
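Both statistical tests fit in a few lines of plain Python. This sketch bins PSI by the reference sample’s deciles and computes the two-sample KS statistic as the largest gap between empirical CDFs. The data are synthetic (a 0.5 standard-deviation mean shift), and the PSI cut-offs often quoted (below 0.1 stable, above 0.25 significant shift) are industry rules of thumb rather than a standard; `scipy.stats.ks_2samp` adds a p-value if you need one:

```python
import math
import random
from bisect import bisect_right

def psi(expected, actual, bins=10):
    """Population Stability Index of `actual` against the reference sample `expected`."""
    ref = sorted(expected)
    # Interior bin edges at the reference sample's quantiles (deciles when bins=10).
    edges = [ref[len(ref) * k // bins] for k in range(1, bins)]

    def shares(sample):
        counts = [0] * bins
        for v in sample:
            counts[bisect_right(edges, v)] += 1  # index of the bin v falls into
        # Floor at a tiny share so an empty bin does not produce log(0).
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: max |ECDF_a(x) - ECDF_b(x)|."""
    a, b = sorted(sample_a), sorted(sample_b)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )

rng = random.Random(42)
training = [rng.gauss(0.0, 1.0) for _ in range(5000)]   # reference window
same_dist = [rng.gauss(0.0, 1.0) for _ in range(5000)]  # production, no drift
shifted = [rng.gauss(0.5, 1.0) for _ in range(5000)]    # production, drifted

print(f"PSI same={psi(training, same_dist):.3f} shifted={psi(training, shifted):.3f}")
print(f"KS  same={ks_statistic(training, same_dist):.3f} shifted={ks_statistic(training, shifted):.3f}")
```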

The MLOps Pipeline

You need an automated pipeline that:

- Monitors data in real-time.

- Triggers alerts when drift is detected.

- Retrains the model automatically (or flags it for human review).

- Deploys the new model.

Insight: The FDA’s request for comment specifically asks about “monitoring triggers.” They want to know: What specific triggers necessitate additional assessments? The answer is usually a combination of statistical thresholds and business impact.
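A trigger policy that combines a statistical threshold with business impact could be as simple as the sketch below. The `MonitoringSnapshot` fields and every threshold value are hypothetical and should be tuned per use case; the PSI thresholds echo common rules of thumb rather than any regulatory guidance:

```python
from dataclasses import dataclass

@dataclass
class MonitoringSnapshot:
    """Hypothetical bundle of signals a monitoring job might collect each day."""
    psi: float                # input drift score for a key feature
    live_accuracy: float      # accuracy on recently labeled production data
    baseline_accuracy: float  # accuracy measured at deployment time

def decide(s, psi_alert=0.1, psi_retrain=0.25, max_drop=0.03):
    """Map monitoring signals to an action; all thresholds are illustrative assumptions."""
    accuracy_drop = s.baseline_accuracy - s.live_accuracy
    if accuracy_drop > max_drop:
        return "retrain_and_review"  # business impact is already visible: involve a human
    if s.psi > psi_retrain:
        return "retrain"             # large input shift, even before labels confirm a drop
    if s.psi > psi_alert:
        return "alert"               # moderate shift: investigate before acting
    return "ok"

print(decide(MonitoringSnapshot(psi=0.05, live_accuracy=0.91, baseline_accuracy=0.92)))  # ok
print(decide(MonitoringSnapshot(psi=0.30, live_accuracy=0.92, baseline_accuracy=0.92)))  # retrain
```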

For more on building these pipelines, explore our AI Infrastructure resources.

🏆 Top Tools and Frameworks for AI Assessment

You don’t have to build everything from scratch. There are powerful tools available to help you evaluate your AI systems.

Evaluation Frameworks

| Tool | Best For | Key Features |
|---|---|---|
| Hugging Face Evaluate | General NLP | Huge library of metrics, easy integration with Transformers. |
| LangSmith | LLMs | Tracing, debugging, and evaluation of LLM applications. |
| DeepEval | LLMs | RAG evaluation, hallucination detection, and answer relevance. |
| IBM AI Fairness 360 | Bias | Comprehensive bias detection and mitigation toolkit. |
| Google Vertex AI | Enterprise | Integrated evaluation, monitoring, and explainability tools. |
| Azure ML | Enterprise | Automated ML, responsible AI dashboard, and drift detection. |

Commercial Platforms

- Weights & Biases (W&B): Great for experiment tracking and model comparison.

- Comet ML: Focuses on reproducibility and real-time monitoring.

- Arize AI: Specialized in ML observability and drift detection.

How to Choose?

- Startups: Go with open-source tools like Hugging Face and DeepEval.

- Enterprises: Consider Vertex AI or Azure ML for integrated security and compliance.

- LLM-Heavy: LangSmith is currently the gold standard for tracing and evaluating LLM apps.

Pro Tip: Don’t get stuck in “tool paralysis.” Pick one, start evaluating, and iterate. The best tool is the one you actually use.

For more on the latest AI tools, check out our AI News section.

🤔 Frequently Asked Questions

Q: Can I rely solely on automated metrics for LLM evaluation?

A: No. While metrics like BLEU and ROUGE are useful, they fail to capture nuance, tone, and factual accuracy. We recommend a hybrid approach: use automated metrics for speed, but supplement with LLM-as-a-Judge and human evaluation for critical tasks.

Q: How often should I re-evaluate my model?

A: It depends on the volatility of your data. For high-frequency trading, it might be daily. For a medical device, it might be quarterly. The key is to set up continuous monitoring and trigger re-evaluation when drift is detected.

Q: What is the most important metric for a generative AI chatbot?

A: There isn’t one. You need a balance of Helpfulness, Harmlessness, and Honesty (the 3 H’s). But for user satisfaction, Task Completion Rate and User Retention are often the best proxies.

Q: How do I handle the “LLM-as-a-Judge” bias?

A: Be aware of positional bias (always picking the first answer) and self-enhancement bias. Use pairwise comparison with randomized order, and calibrate your judge model with a small set of human-labeled data.
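A sketch of both mitigations in Python. `judge` stands for a hypothetical callable wrapping your judge model that returns "first" or "second"; the order of the two answers is randomized per comparison and the verdict is mapped back, and agreement is then measured against a human-labeled set. The labeled pairs and both judges here are synthetic:

```python
import random

def judge_pair(judge, question, answer_a, answer_b, rng):
    """Ask a pairwise judge which answer is better, randomizing presentation order."""
    swapped = rng.random() < 0.5
    first, second = (answer_b, answer_a) if swapped else (answer_a, answer_b)
    picked_first = judge(question, first, second) == "first"
    # Map the verdict back to the original A/B labels regardless of display order.
    if swapped:
        return "B" if picked_first else "A"
    return "A" if picked_first else "B"

def agreement_with_humans(judge, labeled_pairs, seed=0):
    """Share of human-labeled pairs where the judge agrees with the human preference."""
    rng = random.Random(seed)
    hits = sum(judge_pair(judge, q, a, b, rng) == human for q, a, b, human in labeled_pairs)
    return hits / len(labeled_pairs)

def position_biased_judge(question, first, second):
    """Hypothetical worst case: always prefers whichever answer is shown first."""
    return "first"

def content_judge(question, first, second):
    """Hypothetical judge that actually looks at the answers."""
    return "first" if "correct" in first else "second"

# Synthetic calibration set: (question, answer A, answer B, human preference).
labeled_pairs = (
    [(f"q{i}", "correct answer", "wrong answer", "A") for i in range(100)]
    + [(f"q{i}", "wrong answer", "correct answer", "B") for i in range(100, 200)]
)

print(agreement_with_humans(content_judge, labeled_pairs))          # 1.0
print(agreement_with_humans(position_biased_judge, labeled_pairs))  # roughly 0.5
```

Against the human labels, the position-biased judge agrees only at about chance level, while the content-based judge agrees on every pair; a real judge model would land somewhere in between, and that agreement rate tells you how far to trust it.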

Q: Is it possible to evaluate AI without human input?

A: Not fully. You can automate 80% of the work, but the final 20%—especially for subjective tasks like creativity or empathy—requires human judgment.

📚 Reference Links

- FDA Request for Public Comment on AI-Enabled Medical Device Evaluation

- RAND Corporation Research on AI Evaluation

- Hugging Face Evaluate Documentation

- IBM AI Fairness 360 Toolkit

- Google’s What-If Tool

- LangSmith Documentation

- DeepEval Documentation


🏁 Conclusion

So, we’ve traveled from the binary simplicity of rule-based systems to the chaotic, beautiful complexity of modern Generative AI. We’ve dissected the math, wrestled with bias, and stared down the barrel of model drift. But remember that question we asked at the very beginning: How do you truly evaluate the effectiveness of an AI system?

The answer isn’t a single number on a dashboard. It’s a holistic ecosystem of metrics, ethics, and continuous observation.

If you take nothing else away from this deep dive, remember this: Accuracy is a vanity metric; reliability is a business imperative. A model that is 99% accurate but fails on the 1% of edge cases that matter most (like a medical diagnosis or a financial fraud alert) is a liability, not an asset.

The ChatBench.org™ Verdict

We’ve seen too many organizations treat AI evaluation as a “one-and-done” checkbox before launch. That approach is a recipe for disaster. The most effective AI systems are those treated as living organisms that require constant feeding (data), monitoring (drift detection), and pruning (retraining).

Our Confident Recommendation:

Stop relying solely on static benchmarks.

- Adopt a Hybrid Evaluation Strategy: Combine automated metrics (Precision/Recall) with LLM-as-a-Judge and human-in-the-loop feedback for subjective tasks.

- Prioritize Explainability: If you can’t explain the “why,” you can’t trust the “what.” Implement SHAP or LIME early in your pipeline.

- Build for Drift: Assume your data will change. Design your MLOps pipeline to detect concept drift automatically and trigger retraining.

- Ethics First: Don’t just check for bias; actively measure fairness across all demographic slices.

The future of AI isn’t just about building smarter models; it’s about building trustworthy ones. By embracing a rigorous, multi-dimensional evaluation framework, you’re not just avoiding pitfalls—you’re unlocking the true competitive edge that AI promises.

🔗 Recommended Links

Ready to put these evaluation strategies into action? Here are the top tools, platforms, and resources we recommend for building robust, evaluable AI systems.

🛠️ Top Platforms for AI Evaluation & Deployment

- Hugging Face: The hub for open-source models and the Evaluate library. Essential for NLP and generative AI testing.

- LangChain & LangSmith: The industry standard for tracing, debugging, and evaluating Large Language Model applications.

- IBM Watson Studio: Enterprise-grade tools for bias detection, explainability, and model monitoring.

- Google Vertex AI: A unified platform for building, deploying, and managing ML models with built-in evaluation tools.

- Arize AI: Specialized in ML observability, focusing on drift detection and performance monitoring in production.

📚 Essential Reading for AI Engineers & Leaders

- “Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow” by Aurélien Géron. A must-have for understanding the practical implementation of evaluation metrics.

- “Artificial Intelligence: A Guide for Thinking Humans” by Melanie Mitchell. Provides a critical look at the limitations and evaluation challenges of current AI.

- “The Alignment Problem: Machine Learning and Human Values” by Brian Christian. Deep dive into the ethics and fairness aspects of AI evaluation.

What metrics are used to measure AI system performance?

The metrics you choose depend entirely on your task type.

- For Classification: We rely on Precision, Recall, F1 Score, and the Area Under the ROC Curve (AUC-ROC). These tell you how well the model distinguishes between classes.

- For Regression: Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) are standard. RMSE is particularly useful when large errors are unacceptable.

- For Generative AI (Text/Image): Traditional metrics like BLEU and ROUGE are often insufficient. We now prioritize Perplexity, Semantic Similarity, and LLM-as-a-Judge scores, which evaluate coherence, relevance, and factual accuracy.

- For Operational Health: Latency (time to first token), Throughput (requests per second), and Cost per Inference are critical for production viability.
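AUC-ROC has a handy interpretation: it is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one (ties count half). A minimal sketch of that definition with made-up scores; `sklearn.metrics.roc_auc_score` computes the same quantity more efficiently:

```python
def roc_auc(scores, labels):
    """AUC-ROC as P(random positive outranks random negative); ties count as half.

    This O(P*N) pairwise form is for clarity; libraries use a sort-based method.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical scores: 3 of the 4 positive/negative pairs are ranked correctly.
print(roc_auc([0.9, 0.8, 0.4, 0.3], [1, 0, 1, 0]))  # 0.75
```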

Why it matters: Relying on a single metric (like accuracy) can hide catastrophic failures in specific subgroups. A multi-metric approach provides a 360-degree view of performance.

How can AI evaluation improve business decision-making?

AI evaluation transforms AI from a “black box” experiment into a strategic asset.

- Risk Mitigation: By rigorously testing for bias and robustness, you avoid costly lawsuits and reputational damage.

- Resource Optimization: Evaluating latency and cost helps you choose the right model size (e.g., a smaller, faster model vs. a massive, expensive one), directly impacting your bottom line.

- User Trust: When you can demonstrate explainability and consistent performance, users are more likely to adopt the technology, driving higher retention and engagement.

- Data-Driven Iteration: Continuous monitoring allows you to spot drift early, ensuring your AI remains relevant as market conditions change.

Insight: In our experience, companies that treat evaluation as a continuous loop see a 30-40% higher ROI on their AI investments compared to those that treat it as a one-time pre-launch check.

What role does data quality play in assessing AI effectiveness?

Data quality is the foundation of AI effectiveness. You cannot evaluate a model’s performance in a vacuum; the model is only as good as the data it was trained on and the data it encounters in production.

- Garbage In, Garbage Out: If your training data is noisy, biased, or incomplete, your evaluation metrics will be misleading. A model might show high accuracy on a flawed test set but fail in the real world.

- Data Drift Detection: Effective evaluation requires monitoring the input data distribution. If the real-world data shifts significantly from the training data (e.g., new user demographics, changing language trends), the model’s performance will degrade.

- Label Quality: In supervised learning, the accuracy of your ground truth labels is paramount. If your human annotators are inconsistent, your evaluation metrics (like Precision/Recall) are meaningless.
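A standard way to check annotator consistency is Cohen’s kappa, which discounts the agreement two raters would reach by chance. This sketch uses hypothetical labels from two annotators; `sklearn.metrics.cohen_kappa_score` is an off-the-shelf equivalent:

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Agreement between two annotators, corrected for agreement expected by chance."""
    n = len(rater_a)
    observed = sum(x == y for x, y in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in set(rater_a) | set(rater_b)) / (n * n)
    if expected == 1.0:
        # Both raters used one identical label throughout; kappa is undefined.
        # This sketch returns 1.0 by convention.
        return 1.0
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two annotators on the same 10 support tickets.
annotator_1 = ["urgent"] * 5 + ["routine"] * 5
annotator_2 = ["urgent"] * 4 + ["routine"] * 5 + ["urgent"]
print(round(cohens_kappa(annotator_1, annotator_2), 2))  # 0.6
```

The annotators agree on 80% of items, but chance alone would produce 50% agreement here, so kappa is 0.6. Noisy labels at this level flow straight into your Precision and Recall numbers.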

Pro Tip: Always perform Exploratory Data Analysis (EDA) before training and evaluation. Visualize your data distributions to spot anomalies early.

How do you benchmark AI systems against industry standards?

Benchmarking is the process of comparing your model’s performance against established baselines or competitors.

- Public Benchmarks: Use standardized datasets like ImageNet (vision), GLUE/SuperGLUE (NLP), or MMLU (general knowledge) to compare your model against state-of-the-art (SOTA) results.

- Internal Baselines: Compare your new model against your current production model (the “challenger” vs. “champion” approach) using A/B testing.

- Domain-Specific Standards: In regulated industries like healthcare or finance, adhere to specific guidelines (e.g., FDA’s Real-World Evidence requirements or NIST AI Risk Management Framework).

- Competitor Analysis: While you can’t access a competitor’s internal model, you can evaluate their public-facing APIs or products to gauge their performance capabilities.
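When comparing champion and challenger on a rate such as task completion, a two-proportion z-test indicates whether the gap is larger than random noise. This is a sketch using the normal approximation (reasonable for large samples); the counts are hypothetical:

```python
import math

def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """z statistic and two-sided p-value for a difference in success rates."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value

# Hypothetical results: champion completes 1,800/2,000 tasks, challenger 1,860/2,000.
z, p = two_proportion_z_test(1800, 2000, 1860, 2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these counts z is about 3.4 and p falls below 0.001, so the challenger’s 3-point gain is unlikely to be noise. With only a few hundred requests per arm, the same gap could easily fail to reach significance.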

Caution: Public benchmarks can be “gamed” (overfitting). Always validate benchmark results with real-world data that mirrors your specific use case.

What are the most common pitfalls in AI evaluation?

- Overfitting to the Test Set: Tuning your model specifically to pass your test set rather than generalizing to new data.

- Ignoring Edge Cases: Focusing only on “average” performance and missing catastrophic failures in rare scenarios.

- Metric Myopia: Optimizing for a single number (e.g., accuracy) while neglecting fairness, latency, or cost.

- Static Evaluation: Failing to account for data drift and concept drift over time.

- Lack of Human Context: Relying solely on automated metrics for subjective tasks like creativity or empathy.

- FDA: Request for Public Comment: Measuring and Evaluating AI-Enabled Medical Device Performance in Real-World

- NIST: AI Risk Management Framework (AI RMF)

- Google Research: What-If Tool: Interactive Visualization for Machine Learning

- IBM: AI Fairness 360 (AIF360) Toolkit

- Hugging Face: Evaluate Library Documentation

- LangChain: LangSmith Evaluation Guide

- Microsoft: Fairlearn: Assessing and Improving Fairness

- DeepEval: Confident AI Evaluation Framework

- Arize: ML Observability and Drift Detection

- RAND Corporation: Research Reports on AI Evaluation (Archive)