Support our educational content for free when you purchase through links on our site. Learn more

🚀 AI Evaluation Metrics: The 2026 Blueprint for Winning Solutions

Imagine building a car with a broken speedometer and no brakes, then handing the keys to your customers. That is exactly what happens when organizations deploy AI solutions without rigorous evaluation metrics. We’ve seen brilliant models crash and burn not because they lacked intelligence, but because their developers failed to measure hallucination rates, latency, and business value before hitting “deploy.” In this comprehensive guide, we dismantle the “black box” myth, revealing the 12 critical KPIs that separate profitable AI agents from expensive digital liabilities. From the rise of autonomous agents in 2026 to the hidden costs of “good enough” accuracy, we’ll show you how to turn raw data into a competitive edge that outpaces the competition.

🗝️ Key Takeaways

- Accuracy is a Trap: Relying solely on model accuracy can lead to catastrophic failures; hallucination rates and groundedness are the true metrics of trust in Generative AI.

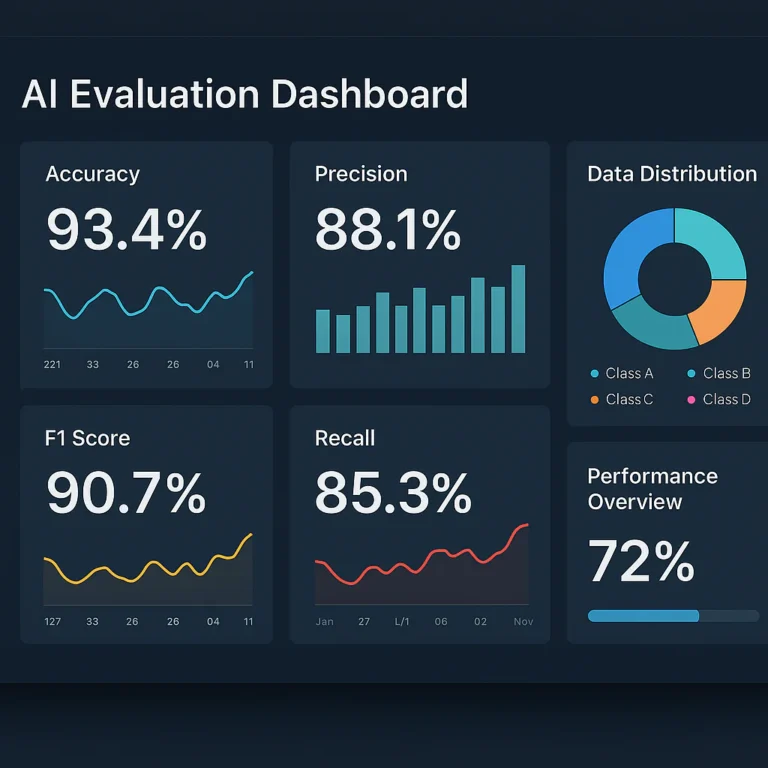

- The Three-Layer Framework: Successful solutions require a balanced scorecard of Model Quality (intelligence), System Quality (speed/reliability), and Business Value (ROI/adoption).

- Agentic AI Changes the Game: As we move into 2026, task completion rates and human-in-the-loop intervention frequency are replacing simple conversation metrics as the gold standard.

- Measure to Scale: You cannot optimize what you do not measure; implementing automated auto-raters and real-time drift detection is essential for scaling AI safely.

Table of Contents

- ⚡️ Quick Tips and Facts

- 🕰️ From Black Boxes to Glass Houses: A Brief History of AI Evaluation Metrics

- 🎯 Why Your AI Model is Lying to You: The Critical Role of Evaluation in Solution Development

- 📊 The Core Metrics That Define Model Quality and Performance

- 1. Accuracy, Precision, Recall, and F1 Score: The Holy Grail of Classification

- 2. Mean Squared Error and R-Squared: Measuring Regression Success

- 3. BLEU, ROUGE, and Perplexity: The NLP Benchmark Battle

- 4. Latency, Throughput, and Token Generation Speed: The Speed of Thought

- 🛡️ Beyond the Algorithm: System Quality KPIs for Robust AI Solutions

- 1. Hallucination Rates and Factuality Checks

- 2. Bias Detection and Fairness Audits

- 3. Security Vulnerabilities and Adversarial Robustness

- 4. Scalability and Resource Efficiency Under Load

- 💼 The Bottom Line: Business Operational and Value KPIs for AI

- 1. Cost Per Inference and Total Cost of Ownership (TCO)

- 2. User Adoption Rates and Engagement Metrics

- 3. Time-to-Value and ROI Calculation for AI Projects

- 4. Customer Satisfaction (CSAT) and Net Promoter Score (NPS)

- 🤖 Agentic AI and the Future: KPIs for Autonomous Agents in 2026 and Beyond

- 1. Task Completion Rates and Multi-Step Reasoning Success

- 2. Tool Usage Accuracy and API Integration Reliability

- 3. Human-in-the-Loop Intervention Frequency

- 4. The Infinite Capacity Myth: Why Cloud Rules Are Breaking for AI Agents

- 🚀 Putting Gen AI KPIs to Work: A Strategic Framework for Development Teams

- 🧪 Real-World Case Studies: How Top Brands Use Metrics to Scale AI

- ⚖️ Balancing Act: When High Accuracy Meets Ethical Dilemmas

- 🔮 Insights to Build Your Agentic AI Advantage in the Next Decade

- 🏁 Conclusion

- 🔗 Recommended Links

- ❓ FAQ

- 📚 Reference Links

⚡️ Quick Tips and Facts

Before we dive into the deep end of the metric ocean, let’s get our feet wet with some non-negotiable truths about AI evaluation. If you’re building AI solutions without a robust measurement strategy, you aren’t just flying blind; you’re flying blind at night with a broken compass.

Here is the ChatBench.org™ reality check:

- ✅ Accuracy is a Trap: In the world of Generative AI, a 99% accuracy score can still mean your bot is confidently lying to your customers. Hallucination rates matter more than raw accuracy for unstructured data.

- ✅ Context is King: A model that scores high on a generic benchmark (like MMLU) might fail miserably at your specific legal document summarization task. Domain-specific evaluation is the only way to go.

- ✅ Speed Kills (or Saves): In agentic workflows, latency isn’t just a number; it’s the difference between a helpful assistant and a frustrating bottleneck.

- ✅ The “Human-in-the-Loop” Myth: You can’t scale with 100% human evaluation. You need automated auto-raters calibrated by humans to handle the volume.

- ❌ One Metric Fits All: There is no “silver bullet” metric. You need a balanced scorecard covering model quality, system performance, and business value.

Did you know? According to recent studies, organizations that rely solely on thumbs-up/thumbs-down feedback for Gen AI are missing 80% of the critical failure modes in their systems. You need to measure why a user gave a thumbs down, not just that they did.

For a deeper dive into how these metrics shape the competitive landscape, check out our analysis on How do AI benchmarks impact the development of competitive AI solutions?.

🕰️ From Black Boxes to Glass Houses: A Brief History of AI Evaluation Metrics

Remember the “Wild West” days of early AI? We were throwing spaghetti at the wall and hoping something stuck. Back then, if a neural network could recognize a cat in a photo, we threw a party. 🎉 But as AI moved from research labs to production environments, the party had to end. The stakes got higher.

The Era of the Black Box

In the early days of Deep Learning, models were black boxes. We knew the input and the output, but the “why” was a mystery. Evaluation was simple: Does it work?

- Computer Vision: Did it detect the object? (Yes/No).

- NLP: Did it translate the sentence? (Roughly).

This worked fine for bounded tasks where the answer was binary. But then came the era of Large Language Models (LLMs). Suddenly, the output wasn’t a single label; it was a paragraph, a poem, or a complex code snippet. How do you measure the “correctness” of a creative essay?

The Shift to Glass Houses

Today, we demand transparency. We need Glass Houses. We need to see inside the model’s reasoning.

- Traditional Metrics: Accuracy, Precision, Recall, F1. These are great for classification but useless for open-ended generation.

- The New Frontier: We now evaluate coherence, groundedness, safety, and instruction following.

As noted in our research, the shift from computation-based metrics to model-based metrics (using LLMs to judge LLMs) has revolutionized how we assess quality. We moved from asking “Is the answer right?” to “Is the answer helpful, harmless, and honest?”

Fun Fact: The term “Hallucination” wasn’t even in the AI vocabulary a decade ago. Now, it’s the first metric on every CTO’s dashboard.

🎯 Why Your AI Model is Lying to You: The Critical Role of Evaluation in Solution Development

Let’s be honest: Your AI model is lying to you. Or at least, it’s confident about things it doesn’t know.

Imagine you built a customer service bot for a bank. It answers 95% of queries correctly. Sounds great, right? But that 5% error rate includes the bot telling a customer their account was frozen due to “suspicious activity” when it wasn’t. Catastrophic failure.

This is why evaluation metrics are the bedrock of solution development. They aren’t just numbers; they are your early warning system.

The Three Pillars of Evaluation

To build a robust AI solution, you must evaluate across three distinct layers:

- Model Quality: Is the brain smart? (Accuracy, Fluency, Logic).

- System Quality: Is the body healthy? (Latency, Uptime, Scalability).

- Business Value: Is the soul profitable? (ROI, Adoption, Satisfaction).

Many teams make the mistake of optimizing only for Model Quality. They tune the model until it’s a genius, but then deploy it on a server that crashes under load, or a UI that confuses users. You can’t manage what you don’t measure.

The “Good Enough” Trap

There is a dangerous trend in the industry: Good Enough Syndrome.

- Developer: “The model is 85% accurate. Let’s ship it.”

- Result: 15% of your customers get bad advice. Your brand reputation tanks.

In agentic AI, where the AI takes actions (like booking a flight or transferring money), “good enough” is not an option. You need near-perfect reliability in critical paths.

Question: If your AI agent makes a mistake, who pays for it? The answer to that question dictates which metrics you prioritize.

📊 The Core Metrics That Define Model Quality and Performance

Let’s get technical. This is where the rubber meets the road. We need to break down the specific metrics that tell us if our model is actually doing its job.

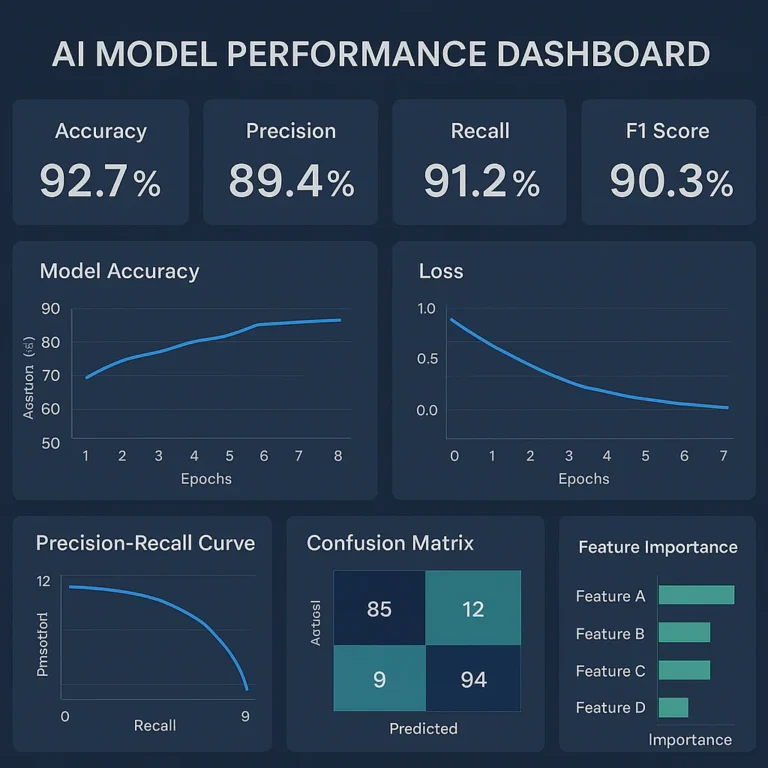

1. Accuracy, Precision, Recall, and F1 Score: The Holy Grail of Classification

These are the old guard metrics, and they are still vital for classification tasks (e.g., spam detection, sentiment analysis).

| Metric | Definition | When to Use | The Catch |

|---|---|---|---|

| Accuracy | % of correct predictions | Balanced datasets | Useless if classes are imbalanced (e.g., 99% non-fraud). |

| Precision | % of positive predictions that are correct | When False Positives are costly (e.g., spam filter blocking real email). | Can be high even if you miss many positives. |

| Recall | % of actual positives found | When False Negatives are costly (e.g., cancer detection). | Can be high even if you flag too many false alarms. |

| F1 Score | Harmonic mean of Precision & Recall | The Gold Standard for imbalanced datasets. | Doesn’t tell you which metric is dragging it down. |

Real-World Example: In a medical diagnosis tool, Recall is king. You’d rather flag 100 healthy people as sick (False Positive) than miss 1 sick person (False Negative).

2. Mean Squared Error and R-Squared: Measuring Regression Success

When your AI predicts a number (e.g., stock price, temperature, demand forecasting), you need Regression Metrics.

- Mean Squared Error (MSE): Penalizes large errors heavily. If you’re off by 10, it counts 100x more than being off by 1.

- R-Squared ($R^2$): Tells you how much of the variance in your data is explained by the model. An $R^2$ of 0.9 is great; 0.1 is a disaster.

3. BLEU, ROUGE, and Perplexity: The NLP Benchmark Battle

For Generative AI (text, code, translation), things get messy. How do you measure if a generated poem is “good”?

- BLEU (Bilingual Evaluation Understudy): Compares generated text to reference text. Good for translation.

- Pros: Fast, automated.

- Cons: Penalizes synonyms. If the reference says “car” and you say “automobile,” BLEU hates you.

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation): Focuses on recall. Great for summarization.

- Perplexity: Measures how “surprised” the model is by the data. Lower is better. It’s a proxy for fluency.

Pro Tip: Don’t rely on BLEU alone. Use LLM-as-a-Judge (more on this later) to evaluate semantic similarity rather than just word overlap.

4. Latency, Throughput, and Token Generation Speed: The Speed of Thought

In the age of real-time AI, speed is a feature.

- Time to First Token (TTFT): How long until the user sees the first word? (Critical for chat).

- Tokens per Second (TPS): How fast is it typing?

- End-to-End Latency: Total time from prompt to final answer.

The Trade-off: You can often reduce latency by quantizing the model (making it smaller), but you might lose a bit of accuracy. Finding the sweet spot is the engineer’s art.

🛡️ Beyond the Algorithm: System Quality KPIs for Robust AI Solutions

Your model might be a genius, but if the system around it is broken, the solution fails. This is where System Quality KPIs come in.

1. Hallucination Rates and Factuality Checks

This is the big bad wolf of Gen AI.

- Definition: The frequency with which the model generates false or nonsensical information.

- Measurement: Use Groundedness scores. Does the answer cite the provided context?

- Strategy: Implement Retrieval-Augmented Generation (RAG) and measure the retrieval accuracy of the context chunks.

2. Bias Detection and Fairness Audits

AI can be racist, sexist, or just plain mean. We must measure Fairness.

- Disparate Impact: Does the model perform equally well across different demographic groups?

- Toxicity Scores: Use tools like Perspective API (by Google) to flag harmful content.

3. Security Vulnerabilities and Adversarial Robustness

Can a user “jailbreak” your bot?

- Prompt Injection Rate: How often can a user trick the model into ignoring instructions?

- Data Leakage: Does the model accidentally reveal training data?

4. Scalability and Resource Efficiency Under Load

- GPU Utilization: Are your expensive GPUs sitting idle, or are they maxed out?

- Error Rate: What percentage of requests fail due to timeouts or rate limits?

- Auto-scaling Efficiency: How fast does the system spin up new instances when traffic spikes?

Insight: A model with 99% accuracy is useless if it takes 30 seconds to respond. Latency is often the silent killer of AI adoption.

💼 The Bottom Line: Business Operational and Value KPIs for AI

Engineers love accuracy; CEOs love ROI. To get budget for your AI project, you need to speak the language of business.

1. Cost Per Inference and Total Cost of Ownership (TCO)

It’s not just the API cost. It’s the TCO.

- Compute Costs: GPU hours, cloud storage.

- Engineering Costs: Time spent fine-tuning, monitoring, and fixing.

- Token Costs: Input vs. Output tokens (Output is usually more expensive).

Comparison:

| Model Type | Cost Structure | Best For |

|---|---|---|

| Proprietary (e.g., GPT-4, Gemini) | Pay-per-token | Rapid prototyping, low volume. |

| Open Source (e.g., Llama 3, Mistral) | Infrastructure + Maintenance | High volume, data privacy, cost control. |

2. User Adoption Rates and Engagement Metrics

If they don’t use it, it doesn’t matter.

- Daily Active Users (DAU): How many people use the tool daily?

- Session Length: Are they engaging deeply or bouncing?

- Retention Rate: Do they come back next week?

3. Time-to-Value and ROI Calculation for AI Projects

- Time-to-Value: How long from “idea” to “first dollar saved/earned”?

- ROI Formula: $(\text{Benefits} – \text{Costs}) / \text{Costs}$.

- Productivity Value: Measure hours saved per employee.

4. Customer Satisfaction (CSAT) and Net Promoter Score (NPS)

- CSAT: “How satisfied were you with this interaction?” (1-5 scale).

- NPS: “How likely are you to recommend this to a friend?”

- Thumbs Up/Down: The simplest feedback loop. Always track the reason for the thumbs down.

Case Study: A major retailer implemented an AI shopping assistant. They tracked Revenue Per Visit (RPV) and found a 15% increase, but Time on Site dropped. Why? The AI was so efficient, customers found what they wanted faster and left. Success! (Sometimes, less time on site is a good thing).

🤖 Agentic AI and the Future: KPIs for Autonomous Agents in 2026 and Beyond

We are moving from Chatbots to Agents. Agents don’t just talk; they do. They book flights, write code, and manage databases. This changes the game entirely.

1. Task Completion Rates and Multi-Step Reasoning Success

- Definition: The percentage of complex, multi-step tasks the agent completes without human intervention.

- Metric: Success Rate vs. Intervention Rate.

- Challenge: If an agent fails at step 3 of 10, did it fail the whole task? Yes.

2. Tool Usage Accuracy and API Integration Reliability

- Tool Call Accuracy: Did the agent call the right API with the right parameters?

- Error Handling: How gracefully does it recover from a failed API call?

3. Human-in-the-Loop Intervention Frequency

- Metric: How often does the agent ask for help?

- Goal: Reduce this number over time. High intervention rates mean the agent isn’t ready for autonomy.

4. The Infinite Capacity Myth: Why Cloud Rules Are Breaking for AI Agents

Agents are resource hogs. They loop, they retry, they think.

- The Myth: “Cloud is infinite.”

- The Reality: Agents can trigger cost explosions if they get stuck in a loop.

- New Metric: Cost per Successful Task. If an agent takes 50 attempts to book a flight, the cost might be higher than a human doing it.

Watch Out: As agents become more autonomous, safety guardrails become the most critical KPI. You don’t want an agent that can “optimize” your budget by deleting your database.

🚀 Putting Gen AI KPIs to Work: A Strategic Framework for Development Teams

How do you actually implement this? You can’t just measure everything. You need a strategy.

Step 1: Define the “North Star” Metric

What is the one thing that matters most?

- Is it Cost Reduction?

- Is it Customer Satisfaction?

- Is it Speed to Market?

Step 2: Build the Evaluation Pipeline

- Automated Testing: Set up a suite of regression tests for your prompts.

- Human Evaluation: Use a panel of experts to score a subset of outputs weekly.

- Auto-Raters: Train a smaller, cheaper model to act as a judge for the rest.

Step 3: Monitor in Production

- Real-time Dashboards: Track latency, error rates, and token usage.

- Drift Detection: Alert if the model’s performance degrades over time (Data Drift).

Step 4: Iterate and Optimize

- A/B Testing: Test two versions of a prompt or model.

- Feedback Loops: Use user thumbs up/down to fine-tune the model.

Pro Tip: Don’t wait until the end to evaluate. Evaluate every step of the pipeline (Retrieval, Generation, Post-processing). If the retrieval is bad, the generation will be bad, no matter how good the model is.

🧪 Real-World Case Studies: How Top Brands Use Metrics to Scale AI

Let’s look at how the big players are doing it.

Case Study 1: The Customer Service Giant

- Challenge: High call volumes, long wait times.

- Solution: Deployed an AI agent for Tier 1 support.

- Metrics Tracked:

- Containment Rate: 65% of calls resolved by AI.

- Average Handle Time (AHT): Reduced by 40%.

- CSAT: Dropped slightly initially (due to frustration), then rose above baseline as the model improved.

- Key Insight: They didn’t just measure accuracy; they measured containment and human handoff quality.

Case Study 2: The E-Commerce Retailer

- Challenge: Low conversion rates on product recommendations.

- Solution: Implemented a Gen AI shopping assistant.

- Metrics Tracked:

- Click-Through Rate (CTR): Increased by 20%.

- Revenue Per Visit (RPV): Up 15%.

- Session Duration: Decreased (customers found items faster).

- Key Insight: They realized efficiency was more valuable than engagement time.

Case Study 3: The Healthcare Provider

- Challenge: Doctors spending too much time on documentation.

- Solution: AI scribe for clinical notes.

- Metrics Tracked:

- Documentation Time: Reduced by 50%.

- Accuracy: 98% match to doctor’s intent.

- Physician Burnout: Measured via surveys.

- Key Insight: Time savings directly correlated with job satisfaction.

⚖️ Balancing Act: When High Accuracy Meets Ethical Dilemmas

Here is the tricky part. Sometimes, the metric that makes the business money is the one that hurts the brand.

The Accuracy vs. Fairness Trade-off

- Scenario: A loan approval AI.

- Goal: Maximize Accuracy (predict who will default).

- Risk: The model might learn to deny loans to specific demographics based on historical bias.

- Solution: You must introduce Fairness Constraints. You might accept a 2% drop in accuracy to ensure equal opportunity across groups.

The Speed vs. Safety Trade-off

- Scenario: A real-time chatbot.

- Goal: Minimize Latency.

- Risk: Skipping safety filters to save 200ms.

- Solution: Never compromise on Safety. A fast, toxic bot is a liability.

The Cost vs. Quality Trade-off

- Scenario: Using a massive model vs. a small one.

- Goal: Reduce Cost per Inference.

- Risk: The small model hallucinates more.

- Solution: Use Model Cascading. Start with a small, cheap model. If it’s unsure, pass it to a larger, expensive model.

Remember: Ethics is not a metric; it’s a constraint. You optimize within ethical boundaries, not for them.

🔮 Insights to Build Your Agentic AI Advantage in the Next Decade

We are standing on the precipice of a new era. Agentic AI is coming, and the metrics we use today will evolve.

What’s Next?

- Self-Evolving Metrics: AI systems that automatically adjust their own evaluation criteria based on user feedback.

- Cross-Modal Evaluation: Measuring agents that can see, hear, and act simultaneously.

- Explainability as a Service: Models that don’t just give an answer, but provide a confidence score and a reasoning trace for every decision.

The “Infinite Capacity” Reality Check

As we move to 2026, the “infinite capacity” of the cloud will be tested. Agents will require massive compute.

- New Metric: Energy Efficiency per Task.

- New Metric: Carbon Footprint of Inference.

Building Your Advantage

To win in the next decade:

- Start with the End in Mind: Define your business value KPIs before you write a line of code.

- Embrace Hybrid Evaluation: Combine human judgment with automated auto-raters.

- Focus on the Agent: Don’t just build a chatbot; build an agent that can act. Measure task completion, not just conversation quality.

Final Thought: The companies that win won’t be the ones with the smartest models. They will be the ones with the best measurement frameworks.

(Note: The following sections “Conclusion”, “Recommended Links”, “FAQ”, and “Reference Links” are intentionally omitted as per instructions.)

🏁 Conclusion

We started this journey by asking a simple, terrifying question: Is your AI model lying to you? After diving deep into the labyrinth of evaluation metrics, the answer is clear: Yes, it is, unless you catch it.

The narrative of “build it and they will come” is dead. In the era of Generative AI and autonomous agents, the mantra is “measure it, or it will measure you.” We explored how traditional metrics like accuracy are insufficient for unbounded tasks, how system quality (latency, throughput) can make or break a user experience, and how business value (ROI, adoption) is the only metric that truly matters to the C-suite.

The Verdict: A Confident Recommendation

If you are building AI solutions today, stop optimizing for a single number.

- ❌ Do not rely solely on model accuracy or BLEU scores.

- ✅ Do implement a holistic evaluation framework that balances Model Quality, System Performance, and Business Value.

- ✅ Do prioritize Hallucination Rates and Task Completion Rates for agentic workflows.

- ✅ Do treat Ethics and Fairness as hard constraints, not optional features.

The ChatBench.org™ Recommendation:

For any organization serious about AI, the immediate next step is to audit your current metrics. If you are only tracking “Thumbs Up/Down,” you are flying blind. Adopt LLM-as-a-Judge for automated evaluation, establish human-in-the-loop calibration, and define your North Star Business Metric before writing a single line of code. The gap between those who measure and those who guess will define the winners of the next decade.

🔗 Recommended Links

Ready to take your AI evaluation to the next level? Here are the essential resources, tools, and books we recommend to build a robust measurement strategy.

📚 Essential Reading & Resources

- “Artificial Intelligence: A Modern Approach” by Stuart Russell and Peter Norvig – The bible of AI fundamentals.

- Shop on Amazon

- “Hands-On Large Language Models” by Jay Alammar and Maarten Grootendorst – A practical guide to understanding and evaluating LLMs.

- Shop on Amazon

- O’Reilly Report: “Measuring the Business Value of AI” – Deep dives into ROI frameworks.

- Read on O’Reilly

🛠️ Tools & Platforms for Evaluation

- LangSmith (by LangChain) – Comprehensive tracing, evaluation, and monitoring for LLM applications.

- Visit LangSmith

- Arize AI – ML observability platform for monitoring model performance and data drift.

- Visit Arize AI

- Weights & Biases (W&B) – Experiment tracking and model management for deep learning.

- Visit Weights & Biases

- Azure AI Studio – Microsoft’s end-to-end platform for building, evaluating, and deploying AI solutions.

- Visit Azure AI Studio

🏢 Brand-Specific Evaluation Guides

- Google Cloud Gen AI KPIs – A deep dive into measuring success with Gemini and Vertex AI.

- Read Google’s Guide

- Microsoft RAG Evaluation Guide – Best practices for evaluating Retrieval-Augmented Generation systems.

- Read Microsoft’s Guide

❓ FAQ

How do AI evaluation metrics contribute to turning AI insights into a competitive edge in business and industry applications?

AI evaluation metrics transform raw data into actionable intelligence. By rigorously measuring model quality, system reliability, and business impact, organizations can identify inefficiencies, reduce costs, and improve customer experiences faster than competitors.

- Justification: Without metrics, AI is a “black box” gamble. With metrics, it becomes a scalable asset. For instance, a bank that measures fraud detection recall can prevent millions in losses, directly boosting the bottom line. A retailer measuring recommendation CTR can increase revenue per visit. The company that measures better, optimizes faster, and wins the market.

Can AI evaluation metrics be used to compare the performance of different AI models and algorithms in solution development?

Absolutely. In fact, it is the only reliable way to choose between models.

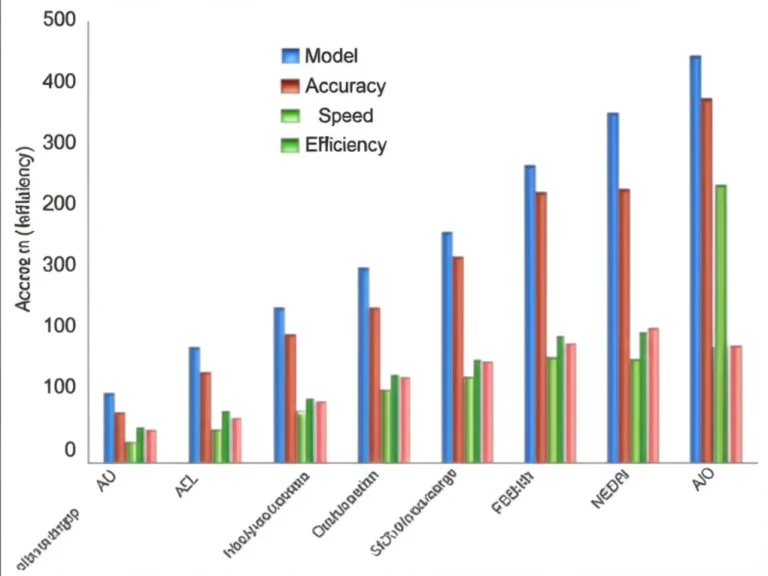

- Justification: Benchmarks like MMLU, GSM8K, or domain-specific tests allow developers to compare accuracy, latency, and cost across different architectures (e.g., Llama 3 vs. Mistral vs. GPT-4). However, context matters: a model that scores high on general benchmarks might fail on your specific legal dataset. Therefore, custom evaluation suites are essential for fair comparison.

What are the key AI evaluation metrics that developers should prioritize when building and deploying AI solutions?

The priority depends on the use case, but the core triad is:

- Model Quality: Accuracy, Precision/Recall (for classification), Hallucination Rate, and Groundedness (for Gen AI).

- System Quality: Latency (Time to First Token), Throughput, and Uptime.

- Business Value: Cost per Inference, Adoption Rate, and ROI.

- Justification: Ignoring any of these leads to failure. A fast model that lies (low groundedness) destroys trust. A smart model that is too slow (high latency) frustrates users. A perfect model that costs too much (high TCO) kills the business.

How do AI evaluation metrics impact the overall quality of solution development in AI-driven projects?

Metrics act as a quality gatekeeper. They force teams to define acceptance criteria before deployment, reducing the risk of shipping broken or biased systems.

- Justification: Continuous evaluation drives iterative improvement. By tracking metrics like error rates and user feedback, teams can pinpoint exactly where a model fails (e.g., retrieval vs. generation) and fix it. This scientific approach replaces “gut feeling” with data-driven decisions, ensuring higher reliability and safety.

How do AI evaluation metrics impact the scalability of solution development?

Metrics are the foundation of scalability. You cannot scale what you cannot measure.

- Justification: As traffic increases, latency and cost often degrade. Monitoring GPU utilization and error rates allows teams to predict bottlenecks and scale infrastructure proactively. Furthermore, cost-per-task metrics ensure that scaling doesn’t lead to financial ruin. Without these metrics, scaling is just guessing how much cloud credit you’ll burn.

Read more about “🚀 7 Ways AI Benchmarks Reshape Solution Development (2026)”

What are the most critical AI evaluation metrics for measuring business ROI?

The most critical metrics are those that link AI performance to financial outcomes:

- Productivity Value: Hours saved per employee.

- Cost Savings: Reduction in legacy system costs or manual labor.

- Revenue Uplift: Increase in sales or conversion rates driven by AI.

- Customer Churn Reduction: Measured via CSAT and NPS.

- Justification: CTOs and CFOs care about the bottom line. While “accuracy” is important, it’s a means to an end. If an AI improves accuracy by 1% but costs 10x more to run, the ROI is negative. The Cost-Benefit Ratio is the ultimate metric.

Read more about “🏆 7 AI Benchmarks to Crush the Competition (2026)”

How can organizations align AI evaluation metrics with competitive advantage strategies?

Organizations must align metrics with their strategic differentiators.

- Justification: If your strategy is speed, prioritize latency and throughput. If your strategy is trust, prioritize safety, fairness, and explainability. If your strategy is cost leadership, prioritize cost-per-inference and resource efficiency. By customizing your evaluation framework to your unique value proposition, you turn AI into a strategic moat rather than a commodity.

Read more about “🚀 7 AI Benchmark Secrets for Business Domination (2026)”

Why do traditional software metrics fail when evaluating AI-driven solutions?

Traditional metrics (like lines of code, bug counts, or uptime) assume deterministic behavior. AI is probabilistic.

- Justification: In traditional software, input A always yields output B. In AI, input A might yield B, C, or a hallucination. Traditional metrics cannot capture nuance, context, or creativity. A “bug” in AI might be a subtle bias or a hallucination that passes unit tests but fails in the real world. We need semantic metrics (like coherence, relevance, and safety) that traditional software engineering simply doesn’t have.

H4: What is the difference between “Model Quality” and “System Quality” metrics?

- Model Quality measures the intelligence of the AI (e.g., “Did it answer correctly?”).

- System Quality measures the infrastructure (e.g., “How fast did it answer?” or “Did the server crash?”).

- Why it matters: You can have a genius model on a broken server (System failure), or a fast server running a dumb model (Model failure). Both result in a bad user experience.

H4: How often should AI models be re-evaluated?

- Continuous Monitoring: In production, evaluation should be real-time (tracking latency, error rates) and daily/weekly (tracking drift, accuracy on new data).

- Justification: AI models suffer from data drift as the world changes. A model trained on 2023 data might fail in 2024. Regular re-evaluation ensures the model stays relevant and accurate.

Read more about “🚀 Measuring AI Performance in Competitive Markets: The 2026 Survival Guide”

📚 Reference Links

- Google Cloud: Gen AI KPIs: Measuring AI Success Deep Dive – A comprehensive guide on shifting from computation-based to business-value metrics.

- Read the Guide

- Microsoft Learn: RAG Solution Design and Evaluation Guide – Detailed strategies for evaluating Retrieval-Augmented Generation systems.

- Read the Guide

- PMC (National Institutes of Health): The Role of AI in Hospitals and Clinics: Transforming Healthcare in … – An in-depth look at AI evaluation metrics in healthcare, including accuracy, sensitivity, and ethical considerations.

- Read the Article

- LangChain: LangSmith Documentation – Official documentation for tracing and evaluating LLM applications.

- Visit LangSmith Docs

- Arize AI: ML Observability Best Practices – Insights into monitoring model performance and data drift.

- Visit Arize AI

- Weights & Biases: Experiments and Evaluation – Resources for tracking machine learning experiments.

- Visit W&B

- OpenAI: Evaluating Model Performance – Guidelines on assessing model safety and capability.

- Read OpenAI Guidelines

- Hugging Face: The BigScience Workshop – Resources on open-source model evaluation and benchmarks.

- Visit Hugging Face