Benchmarking Language Models for Business Applications in 2025 🚀

Choosing the right language model for your business can feel like navigating a labyrinth blindfolded. With giants like GPT-4 dominating headlines and a flood of new models hitting the market, how do you know which AI will actually deliver results tailored to your unique enterprise needs? Spoiler alert: bigger isn’t always better. In fact, our research at ChatBench.org™ reveals that fine-tuned, domain-specific models often outperform massive out-of-the-box giants when it comes to real-world business tasks.

In this comprehensive guide, we’ll unravel the mysteries of language model benchmarking, dissect the limitations of popular academic tests, and introduce you to cutting-edge enterprise benchmarks like Moveworks’ proprietary evaluation framework. Curious how a smaller 7-billion parameter model can beat GPT-4 on your company’s support tickets? Stick around — we’ll show you why custom benchmarks and fine-tuning are your secret weapons for AI success.

Key Takeaways

- Fine-tuned, enterprise-specific models consistently outperform larger general-purpose LLMs on real business tasks, delivering better accuracy, speed, and cost-efficiency.

- Standard academic benchmarks like GLUE and MMLU often fail to reflect enterprise realities, making custom or proprietary benchmarks essential for meaningful evaluation.

- A holistic benchmarking approach—including accuracy, latency, cost, trust, and sustainability—is critical for selecting the right model.

- Building your own benchmark with real business data is the ultimate test and provides actionable insights to optimize AI deployment.

- Smaller, specialized models reduce costs and improve user experience, debunking the myth that “bigger is always better.”

Ready to benchmark your way to smarter AI? Let’s dive in!

Table of Contents

- ⚡️ Quick Tips and Facts About Benchmarking Language Models for Business

- 📜 The Evolution of Language Models in Business Applications

- 🔍 Understanding Language Model Benchmarks: What, Why, and How?

- 1️⃣ Top 10 Language Model Benchmarks for Enterprise Use Cases

- ⚔️ Challenges and Pitfalls of Existing Benchmarks in Business Contexts

- 🚀 Introducing the Moveworks Enterprise Language Model Benchmark: A Game Changer?

- 📊 Deep Dive: Results and Insights from the Moveworks Enterprise Benchmark

- 💡 Key Lessons and Strategic Takeaways for Business Leaders

- 🤖 Fine-Tuned vs. Out-of-the-Box: Which Language Model Wins in Enterprise Tasks?

- 🛠️ How to Choose the Right Language Model Benchmark for Your Business Needs

- 📈 Future Trends: The Next Frontier in Language Model Benchmarking for Enterprises

- 🔗 The AI Assistant Platform: Empowering Your Entire Workforce with Language Models

- 📰 Subscribe to Our Insights Blog for the Latest in AI and Business

- 🎯 Conclusion: Benchmarking Language Models to Boost Your Business Edge

- 🔗 Recommended Links for Further Exploration

- ❓ Frequently Asked Questions About Language Model Benchmarking

- 📚 Reference Links and Credible Sources

⚡️ Quick Tips and Facts About Benchmarking Language Models for Business

Welcome, fellow AI enthusiasts and business trailblazers! You’re standing at the edge of a revolution, and Large Language Models (LLMs) are the engine. But how do you pick the right one? Here at ChatBench.org™, we live and breathe this stuff. Before we dive deep, here are some juicy tidbits to get you started:

- Bigger Isn’t Always Better: A shocking truth we’ve seen firsthand! A smaller, fine-tuned model can often run circles around a massive, general-purpose model like GPT-4 for specific business tasks. The secret sauce? Enterprise-specific data.

- One Size Fits Nobody: Moveworks, a leader in the space, puts it perfectly: “LLMs will not be one-size-fits-all.” Your business has unique jargon, processes, and data. Your LLM should too.

- Standard Benchmarks Can Lie (to Businesses): Popular academic benchmarks like GLUE, SuperGLUE, and MMLU are fantastic for measuring general knowledge but often fail to predict performance on real-world business tasks. They’re like using a road car’s specs to predict how it will perform in a swamp.

- Metrics Are More Than Just Accuracy: When evaluating an LLM for your business, you need a holistic view. Think about:

  - ✅ Cost: How much will millions of API calls set you back?

  - ✅ Speed (Latency): Can it keep up with real-time customer interactions?

  - ✅ Trust & Safety: Does it avoid bias and protect private information?

  - ✅ Sustainability: What’s the environmental footprint of running this model at scale?

- Fine-Tuning is Your Superpower: The most significant performance gains for enterprise applications come from fine-tuning a model on your own high-quality, domain-specific data. This is a core part of our Fine-Tuning & Training philosophy.
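To make the cost question concrete, here is a minimal back-of-the-envelope sketch. The `Pricing` class, the per-token prices, and the traffic figures are all placeholder assumptions for illustration, not real vendor quotes; substitute the numbers from your own provider and logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in USD per 1,000 tokens (not real vendor quotes)."""
    input_per_1k: float
    output_per_1k: float


def monthly_cost(requests_per_month: int, avg_input_tokens: int,
                 avg_output_tokens: int, pricing: Pricing) -> float:
    """Estimate monthly spend for a fixed request volume and average token counts."""
    input_cost = requests_per_month * avg_input_tokens / 1000 * pricing.input_per_1k
    output_cost = requests_per_month * avg_output_tokens / 1000 * pricing.output_per_1k
    return input_cost + output_cost


# Placeholder price points for a large hosted model and a small fine-tuned one.
large_model = Pricing(input_per_1k=0.01, output_per_1k=0.03)
small_model = Pricing(input_per_1k=0.0005, output_per_1k=0.0015)

for name, pricing in [("large", large_model), ("small", small_model)]:
    cost = monthly_cost(2_000_000, 500, 200, pricing)
    print(f"{name}: ${cost:,.2f} per month")
```

Even a rough estimate like this shows how per-token price differences compound at scale. It ignores hosting, fine-tuning, and engineering costs, which matter for a full total-cost-of-ownership comparison.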

Ready to go beyond the hype and find a model that actually works for your business? Let’s get into it.

📜 The Evolution of Language Models in Business Applications

Remember the clunky, keyword-based chatbots of yesteryear? We’ve come a long, long way. The journey from simple statistical models to the sophisticated neural networks of today has been nothing short of breathtaking.

From Clippy to ChatGPT: A Brief History

In the early days, “AI” in business often meant rigid, rule-based systems. Think automated phone menus that made you want to tear your hair out. Then came statistical models that could predict the next word in a sentence—a neat trick, but hardly revolutionary for complex business needs.

The real game-changer was the arrival of the Transformer architecture, which powers modern marvels like Google’s Gemini and OpenAI’s GPT series. These models can understand context, nuance, and long-range dependencies in text, unlocking unprecedented capabilities. Since ChatGPT burst onto the scene, businesses have been scrambling to integrate LLMs into everything from customer service to code generation.

But this explosion of options created a new problem: the paradox of choice. With a “vast array of LLM solutions” on the market, how does a business leader choose the right tool for the job? That, our friends, is where benchmarking becomes not just useful, but absolutely essential.

🔍 Understanding Language Model Benchmarks: What, Why, and How?

So, what exactly is benchmarking? Think of it as a standardized exam for AI models. It’s a systematic process of evaluating an LLM’s effectiveness, efficiency, and suitability for specific tasks by comparing it against standardized metrics and datasets. This process is vital for making informed, strategic decisions about which AI to deploy.

ChatBench.org™ Anecdote: We once worked with a retail client who was convinced they needed the largest, most expensive model on the market for their customer service bot. We ran a benchmark using their actual customer support logs. The result? A much smaller, open-source model, fine-tuned on their data, not only performed better but also responded faster and cost them a fraction of the price. They were stunned. This is a classic example of why real-world, business-specific benchmarking is a non-negotiable step in any AI Business Applications strategy.

Why Is Benchmarking So Critical?

- Drives Strategic Decisions: Benchmarking provides the hard data you need to choose the most suitable and cost-effective model for your specific needs.

- Creates Competitive Advantage: Understanding the strengths and weaknesses of different models allows you to innovate faster and optimize operations more effectively.

- Ensures Reliability and Trust: Rigorous testing helps identify potential issues like bias, toxicity, and hallucinations before a model interacts with your customers.

The process involves running models through standardized tasks and measuring their performance on key metrics like accuracy, speed, and resource consumption.

1️⃣ Top 10 Language Model Benchmarks for Enterprise Use Cases

While we’ve cautioned against relying solely on academic benchmarks, it’s crucial to know the landscape. These are the standardized tests that model creators use to tout their superiority. Understanding them helps you see what’s being measured—and more importantly, what isn’t. For a deeper dive, check out our guide on the most widely used AI benchmarks for natural language processing tasks.

Here’s a rundown of some of the most well-known benchmarks, with our expert take on their relevance for business.

| Benchmark | Primary Focus | Business Relevance | Key Takeaway for Business |
|---|---|---|---|
| HELM | Holistic evaluation across many scenarios & metrics. | High | Developed by Stanford, it’s one of the most comprehensive, looking at accuracy, fairness, bias, and efficiency. A great starting point. |
| MMLU | Massive Multitask Language Understanding. | Medium | Tests knowledge across 57 subjects (from history to law). Good for gauging general knowledge, but not specific business acumen. |
| SuperGLUE | Difficult language understanding tasks. | Medium | A tougher version of GLUE, it pushes models on complex reasoning. Useful, but still academic. |
| GLUE | General Language Understanding Evaluation. | Low | The original benchmark. Most top models have saturated it, making it less useful for differentiating state-of-the-art LLMs. |
| ARC | AI2 Reasoning Challenge. | Low | A collection of 7,787 science exam questions. Unlikely to reflect your business’s daily challenges. |
| HumanEval | Code generation capabilities. | High (for tech) | Essential if you’re using an LLM for software development, like a copilot. |
| TruthfulQA | Measures a model’s tendency to generate falsehoods. | Very High | Crucial for any application where factual accuracy is paramount, helping to avoid brand-damaging hallucinations. |
| Salesforce CRM Benchmark | CRM-specific tasks (e.g., lead nurturing, case summaries). | Very High (for CRM) | The first of its kind, it evaluates models on real-world business workflows, a huge step in the right direction. |
| Moveworks Enterprise Benchmark | Enterprise support tasks (e.g., IT tickets, HR questions). | Very High (for Ops) | A proprietary benchmark built on real enterprise data, showing the power of domain-specific evaluation. |
| Your Own Custom Benchmark | Your specific business processes and data. | CRITICAL | The ultimate test. Nothing beats evaluating a model on the exact tasks it will perform for you. |

⚔️ Challenges and Pitfalls of Existing Benchmarks in Business Contexts

So, you’ve seen the leaderboards. A new model from Google or Anthropic just crushed MMLU. Time to switch providers, right? Hold your horses! 🤠

Relying solely on these scores can be dangerously misleading. Here’s why standard benchmarks often fail the enterprise reality check.

The Core Problems

- ❌ Lack of Enterprise Specificity: Academic benchmarks test general knowledge, like science questions or pronoun resolution. Your business needs a model that understands “Q3 ARR reports,” “submitting a PTO request,” or your company’s unique product names, not the capital of Kyrgyzstan. This gap between general knowledge and domain-specific jargon is where out-of-the-box models stumble.

- ❌ Stagnant and Flawed Datasets: Language evolves, but benchmarks often don’t. They can become outdated, making it hard to evaluate newer, more capable models. Worse, some studies have found errors and biases baked right into the benchmark datasets themselves.

- ❌ “Teaching to the Test”: Models can be trained on data that overlaps with benchmark questions, leading to inflated scores that reflect memorization, not true comprehension.

- ❌ Mismatch with Real-World Tasks: As one report notes, benchmarks with doctorate-level physics questions don’t reflect how most people use LLMs—for tasks like drafting emails or summarizing meetings. The evaluation must match the application.
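The "teaching to the test" problem also applies to your own evaluation: if examples from your test set leak into your fine-tuning data, scores will be inflated. A rough way to check is to measure n-gram overlap between each test item and the training corpus. The sketch below assumes you have access to the training text (usually true only for data you fine-tune on yourself); the function names and the 8-token window are illustrative choices, not a standard.

```python
import re


def ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """Lowercase word n-grams of a text; empty if the text has fewer than n words."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_docs: list[str], n: int = 8) -> float:
    """Fraction of the item's n-grams that also appear somewhere in the corpus."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    corpus_grams: set[tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)
```

Items with a high ratio are candidates for removal from the test set. This only catches near-verbatim overlap; paraphrased leakage needs a fuzzier comparison.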

As Teradata’s experts wisely state, “benchmarks might favor models trained on specific types of data or those optimized for particular metrics, such as accuracy, at the expense of other important factors, like fairness or robustness.” This is a critical insight for any business leader.

🚀 Introducing the Moveworks Enterprise Language Model Benchmark: A Game Changer?

Frustrated by the limitations of existing benchmarks, some companies are taking matters into their own hands. One of the most compelling examples is the Moveworks Enterprise LLM Benchmark.

Moveworks, a company specializing in AI for employee support, built a benchmark from the ground up using its massive, proprietary dataset of real-world enterprise data. This includes support tickets, internal knowledge base articles, and employee-bot conversations—the messy, jargon-filled language of actual businesses.

What Makes It Different?

- Real-World Data: The benchmark is built on a corpus of 500 million enterprise support tickets, ensuring it reflects the tasks employees actually need help with.

- Enterprise-Centric Tasks: It includes 14 tasks that are highly relevant to businesses, such as:

  - Generating API calls from natural language (e.g., “What’s our Q3 revenue?”).

  - Classifying the intent of support tickets.

  - Extracting specific information from long, unstructured text.

- Focus on Structured Output: Many business applications require an LLM to produce a structured output, like JSON, that another system can use. The Moveworks benchmark explicitly tests for this capability.
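To illustrate what testing for structured output can look like in your own benchmark, here is a minimal sketch that checks whether a model's raw response is valid JSON with the expected fields. The schema in `REQUIRED_FIELDS` and the function name are hypothetical; this is not the Moveworks implementation, which is proprietary.

```python
import json

# Hypothetical schema for an intent-classification response.
REQUIRED_FIELDS = {"intent": str, "category": str}


def validate_output(raw: str) -> tuple[bool, str]:
    """Return (is_valid, reason) for a raw model response expected to be a JSON object."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(payload, dict):
        return False, "top-level value is not an object"
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in payload:
            return False, f"missing field: {field}"
        if not isinstance(payload[field], expected_type):
            return False, f"wrong type for field: {field}"
    return True, "ok"
```

Tracking the share of responses that pass this check, separately from whether the values are correct, tells you if a model fails on format or on understanding.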

This approach represents a major shift from academic theory to practical application, providing a blueprint for how businesses should think about evaluating LLMs.

📊 Deep Dive: Results and Insights from the Moveworks Enterprise Benchmark

So what happened when Moveworks pitted giant, general-purpose models against their own specialized, fine-tuned model, MoveLM™? The results were a wake-up call for the entire industry.

The key finding was unambiguous: “LLMs fine-tuned on the enterprise dataset surpassed their larger counterparts.”

Let’s break down a couple of stunning examples:

Example 1: Stakeholder Analytics Intent Classification

- The Task: An employee submits a ticket: “I need to get a form to request a new laptop.”

- GPT-4’s Response: Misclassified the intent as “Manage” and the category as “Hardware.” This is logical in a general sense, but incorrect in an enterprise context.

- MoveLM™’s Response: Correctly classified the intent as “Provision” and the category as “Information.” It understood the specific enterprise workflow: the user isn’t managing hardware, they’re requesting information (the form) to provision new hardware.

Example 2: Generating API Calls from Natural Language

- The Task: A user asks, “What’s Moveworks ARR?”

- GPT-4’s Response: Failed to generate the correct API call, getting confused by the context.

- MoveLM™’s Response: Successfully generated the precise API call needed to retrieve the information.

These examples aren’t edge cases; they represent the daily reality of business operations. The Moveworks benchmark rigorously validated that their specialized training allowed MoveLM to achieve “outstanding performance on critical enterprise tasks.”

💡 Key Lessons and Strategic Takeaways for Business Leaders

The evidence is clear, and the message is powerful. If you’re a business leader looking to leverage AI, you need to shift your evaluation strategy. Here are the key takeaways from our research and experience at ChatBench.org™.

- Embrace Domain-Specific Evaluation: Stop relying solely on public leaderboards. The most meaningful test is how a model performs on your data and your workflows. As Salesforce notes, a good benchmark should be a reliable indicator of how a model will perform “in the wild.”

- Prioritize Fine-Tuning: The single biggest lever you can pull to improve performance for business applications is fine-tuning. It’s essential for achieving the accuracy, latency, and cost-effectiveness required for enterprise-grade AI.

- Adopt a Holistic Scorecard: Move beyond just accuracy. Use a multi-metric approach like the one proposed by Salesforce AI Research, which evaluates models on Accuracy, Cost, Speed, Trust, and Sustainability. This provides a much more complete picture for making strategic decisions.

- Don’t Underestimate Smaller Models: The Moveworks benchmark proved that a 7-billion parameter model (MoveLM) could consistently outperform models 10 times its size on enterprise tasks. This has massive implications for cost and efficiency.

- Treat Benchmarking as a Continuous Process: The world of AI is not static. New models are released constantly. Your evaluation framework should be a living process, adapting to new technologies and evolving business needs.

🤖 Fine-Tuned vs. Out-of-the-Box: Which Language Model Wins in Enterprise Tasks?

Let’s settle the debate. You have a choice: use a massive, off-the-shelf model like GPT-4, or invest in fine-tuning a smaller, more specialized model. Which path leads to victory? For business applications, the answer is overwhelmingly in favor of fine-tuning.

As Moveworks concluded, “task-specific models are more reliable than off-the-shelf alternatives.”

Here’s a head-to-head comparison based on what truly matters for an enterprise.

| Feature | Out-of-the-Box LLM (e.g., GPT-4) | Fine-Tuned Enterprise LLM (e.g., MoveLM™) | Winner for Business |
|---|---|---|---|
| Domain Knowledge | 🌍 General world knowledge. Struggles with company-specific jargon and processes. | 🏢 Deeply understands your business’s unique vocabulary, workflows, and data. | Fine-Tuned |
| Accuracy on Specific Tasks | ✅ Good, but often makes logical errors in a business context. Can hallucinate. | ✅✅ Excellent. Higher accuracy and reliability on the tasks it was trained for. | Fine-Tuned |
| Latency (Speed) | 🐢 Slower. Larger models take more time to generate responses. | 🚀 Faster. Smaller, optimized models provide quicker answers, crucial for user experience. | Fine-Tuned |
| Cost | 💰 High. Can be very expensive to run at scale due to the computational power required. | 💵 Lower. More cost-effective due to smaller size and greater efficiency. | Fine-Tuned |
| Customizability & Control | ❌ Limited. You are dependent on the provider’s updates and safety filters. | ✅✅ High. You have full control over the training data, behavior, and output format. | Fine-Tuned |
| Ease of Initial Setup | ✅✅ Very Easy. Just need an API key to get started. | ❌ More Complex. Requires data preparation, training infrastructure, and expertise. | Out-of-the-Box |

The Verdict: While out-of-the-box models are great for rapid prototyping and general tasks, a fine-tuned LLM is the undisputed champion for serious, scalable enterprise applications. The initial investment in data preparation and training pays massive dividends in performance, cost, and reliability.

🛠️ How to Choose the Right Language Model Benchmark for Your Business Needs

Feeling empowered to build your own evaluation process? Fantastic! Here’s a step-by-step guide from the experts at ChatBench.org™ to help you create a benchmarking framework that delivers real, actionable insights. This is a key part of our Developer Guides.

Step 1: Define Your Business Objectives

Before you write a single line of code, ask the most important question: What problem are we trying to solve?

- Are you automating customer support?

- Summarizing legal documents?

- Generating marketing copy?

- Powering an internal search engine?

Your goal will determine the metrics that matter most. For a customer-facing chatbot, speed and safety are critical. For document analysis, factual accuracy is paramount.

Step 2: Curate a “Golden” Dataset

This is your secret weapon. Assemble a diverse, high-quality dataset that is representative of the real-world scenarios your LLM will face.

- Collect Real Examples: Pull from customer emails, support tickets, internal documents, and chat logs.

- Include Edge Cases: Don’t just test the easy stuff. Include tricky, ambiguous, and complex queries.

- Ensure Diversity: Your dataset should reflect the full range of topics, user types, and language styles your model will encounter.
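One possible shape for such a dataset is a JSONL file with one labeled example per line, plus a quick coverage report to check diversity before you run anything. The field names below (`source`, `category`, `is_edge_case`, and so on) are assumptions chosen for illustration; adapt them to your own data.

```python
import json
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenExample:
    """One labeled example; all field names are illustrative."""
    example_id: str
    source: str          # e.g. "support_ticket", "chat_log"
    category: str        # e.g. "IT", "HR"
    prompt: str
    expected: str
    is_edge_case: bool = False


def load_jsonl(lines) -> list[GoldenExample]:
    """Parse an iterable of JSON lines, skipping blank lines."""
    return [GoldenExample(**json.loads(line)) for line in lines if line.strip()]


def coverage_report(examples: list[GoldenExample]) -> dict:
    """Summarize how examples are spread across sources, categories, and edge cases."""
    total = len(examples)
    return {
        "total": total,
        "by_source": dict(Counter(e.source for e in examples)),
        "by_category": dict(Counter(e.category for e in examples)),
        "edge_case_share": sum(e.is_edge_case for e in examples) / total if total else 0.0,
    }
```

If one source or category dominates the report, or the edge-case share is near zero, the dataset probably does not yet reflect what the model will see in production.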

Step 3: Select Your Metrics (The Holistic Scorecard)

Don’t just measure accuracy. Create a balanced scorecard that aligns with your business objectives, drawing inspiration from frameworks like Salesforce’s and HELM.

- Performance Metrics:

  - Accuracy: How often is the answer correct? (e.g., F1-score, Exact Match).

  - Completeness: Does the answer contain all the necessary information?

  - Conciseness: Is the answer direct and to the point?

- Operational Metrics:

  - Latency: How fast is the response?

  - Throughput: How many requests can it handle at once?

  - Cost: What is the total cost of ownership?

- Trust and Safety Metrics:

  - Hallucination Rate: How often does it make things up?

  - Bias & Fairness: Does it perform equally well across different demographic groups?

  - Toxicity: Does it avoid generating harmful or inappropriate content?
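For the accuracy metrics named above, here is a minimal sketch of Exact Match and a token-level F1-score, in the style commonly used for question-answering evaluation. The normalization rules (lowercasing, stripping punctuation) are an assumption; choose rules that fit your own answers.

```python
import re
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    text = re.sub(r"[^\w\s]", " ", text.lower())
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall between prediction and reference."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    if not pred_tokens or not ref_tokens:
        return float(pred_tokens == ref_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)
```

Exact Match suits classification-style outputs such as intents, while token F1 gives partial credit for free-text answers that are close but not identical.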

Step 4: Run the Benchmark and Analyze the Results

Test a variety of models, including both large, general-purpose options and smaller, fine-tuned candidates.

- Standardize Everything: Use the exact same prompts and datasets for every model to ensure a fair comparison.

- Look for Trade-offs: Model A might be the most accurate but also the slowest and most expensive. Model B might be slightly less accurate but much faster and cheaper. Which is better for your use case?

- Qualitative Analysis: Don’t just look at the numbers. Have human experts review the responses to catch nuances that automated metrics might miss.
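The points above can be sketched as a small harness that feeds identical prompts to every candidate and records accuracy and latency, followed by a simple weighted score to make trade-offs explicit. Each model is represented by a hypothetical callable that takes a prompt and returns text; in practice you would wrap your provider's client in such a function. The default weights are arbitrary placeholders.

```python
import time
from statistics import mean
from typing import Callable

ModelFn = Callable[[str], str]  # hypothetical wrapper around a model API


def run_benchmark(models: dict[str, ModelFn],
                  dataset: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """Run every model on the same (prompt, expected) pairs."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    results = {}
    for name, model in models.items():
        correct, latencies = 0, []
        for prompt, expected in dataset:
            start = time.perf_counter()
            answer = model(prompt)
            latencies.append(time.perf_counter() - start)
            # Case-insensitive exact match; swap in a metric that fits your task.
            correct += int(answer.strip().lower() == expected.strip().lower())
        results[name] = {"accuracy": correct / len(dataset),
                         "mean_latency_s": mean(latencies)}
    return results


def weighted_scores(results: dict[str, dict[str, float]],
                    accuracy_weight: float = 0.7,
                    latency_weight: float = 0.3) -> dict[str, float]:
    """Combine accuracy with latency relative to the fastest model (higher is better)."""
    fastest = min(r["mean_latency_s"] for r in results.values())
    scores = {}
    for name, r in results.items():
        latency = r["mean_latency_s"]
        latency_score = fastest / latency if latency > 0 else 1.0
        scores[name] = accuracy_weight * r["accuracy"] + latency_weight * latency_score
    return scores
```

A single weighted number is only a starting point for discussion. The weights encode a business judgment, so set them with stakeholders and keep the per-metric results visible alongside the combined score.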

By following this structured approach, you can move beyond the hype and make a data-driven decision that truly aligns with your business goals.

📈 Future Trends: The Next Frontier in Language Model Benchmarking for Enterprises

The world of LLM evaluation is evolving as rapidly as the models themselves. Here at ChatBench.org™, we’re keeping a close eye on the trends that will shape the future of how businesses measure AI performance.

- Rise of Agentic Benchmarks: The focus is shifting from evaluating simple Q&A to assessing complex, multi-step reasoning. Can an AI agent complete a task that requires using multiple tools, making decisions, and adapting to new information? Benchmarks like Salesforce’s CRMArena-Pro are leading the way, testing how agents perform in real business workflows. However, early results show that even top models struggle, with success rates dropping significantly in longer conversations.

- Holistic and Ethical Evaluation: There’s a growing consensus that traditional metrics are not enough. The future of benchmarking will incorporate a more holistic set of criteria, including fairness, interpretability, and environmental impact. We’ll see more emphasis on measuring a model’s alignment with ethical standards and societal values.

- Increased Automation and “LLM-as-Judge”: Evaluating LLMs can be slow and expensive. To scale the process, we’ll see greater use of automated platforms and the “LLM-as-judge” approach, where one powerful LLM is used to score the output of another. This allows for faster, more nuanced evaluation than simple benchmarks, though it requires careful calibration.

- Hyper-Specialization: As the “one-size-fits-all” myth continues to crumble, we’ll see an explosion of domain-specific benchmarks for industries like finance, healthcare, and law. These will become the gold standard for proving a model’s worth in a particular vertical.
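On the calibration point for "LLM-as-judge": one common way to check a judge is to have humans label a sample of the same outputs and measure agreement beyond chance, for example with Cohen's kappa. The sketch below assumes both the judge and the human reviewers assign simple categorical labels such as "pass" and "fail"; the label names are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label]
                   for label in set(counts_a) | set(counts_b)) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


human = ["pass", "pass", "fail", "fail"]
judge = ["pass", "pass", "fail", "pass"]
print(f"kappa = {cohens_kappa(human, judge):.2f}")
```

A kappa near 1 suggests the judge tracks human reviewers closely, while a value near 0 means agreement is no better than chance and the judge prompt or rubric needs rework before its scores are trusted.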

The future is specialized, holistic, and automated. Businesses that adapt their evaluation strategies to these trends will be the ones who unlock the true potential of generative AI.

🔗 The AI Assistant Platform: Empowering Your Entire Workforce with Language Models

Ultimately, benchmarking isn’t just an academic exercise; it’s the foundation for building powerful, reliable AI tools that can transform your business. One of the most impactful applications of a well-benchmarked LLM is the creation of an AI assistant platform or enterprise copilot.

Companies like Moveworks are demonstrating how a finely-tuned language model can act as a central bridge, connecting employees to information locked away in hundreds of different business systems. An effective AI assistant, powered by a model that truly understands your business, can:

- Instantly Resolve Support Issues: Answer IT and HR questions, provision software, and troubleshoot problems without human intervention.

- Democratize Access to Information: Allow any employee to query complex business data using natural language.

- Automate Tedious Tasks: Help employees draft emails, summarize meetings, fill out forms, and take action across different applications.

The key to success is the underlying model’s ability to understand intent, access the right information, and take the correct action—all capabilities that must be rigorously tested and validated through enterprise-specific benchmarking. This is a core focus of our Model Comparisons research.

📰 Subscribe to Our Insights Blog for the Latest in AI and Business

Enjoying this deep dive? This is just a taste of the insights we share every week. The world of AI is moving at lightning speed, and staying ahead of the curve is the key to maintaining your competitive edge.

Subscribe to the ChatBench.org™ Insights blog to get expert analysis, practical guides, and the latest research delivered straight to your inbox. Don’t just follow the AI revolution—lead it.

🎯 Conclusion: Benchmarking Language Models to Boost Your Business Edge

We’ve journeyed through the fascinating, sometimes bewildering world of benchmarking language models for business applications. If you take one thing away from this deep dive, let it be this: the smartest AI investment is not the biggest model, but the right model—fine-tuned, rigorously benchmarked, and tailored to your unique business needs.

The Moveworks Enterprise LLM Benchmark has shown us a compelling truth: fine-tuned, domain-specific models like MoveLM™ can outperform larger, general-purpose giants like GPT-4 on real enterprise tasks. This isn’t just a technical curiosity—it’s a strategic imperative. Businesses that rely on out-of-the-box models risk paying more for less accuracy, slower responses, and frustrating user experiences.

But beware the siren song of public leaderboards and academic benchmarks. They’re helpful starting points but don’t tell the whole story. Your business deserves a customized benchmarking framework that evaluates models on the metrics that matter most to you—accuracy, speed, cost, trust, and sustainability.

By embracing this approach, you unlock the true potential of AI: transforming raw insight into a competitive edge that drives innovation, efficiency, and customer delight.

So, what about the unresolved question we teased earlier—which model should you pick? Our confident recommendation: start with a smaller, open-source or proprietary model that you can fine-tune on your own data. Use a rigorous, enterprise-specific benchmark like Moveworks’ or build your own. This path offers the best balance of performance, cost, and control.

At ChatBench.org™, we’re here to help you navigate this exciting frontier. Ready to benchmark your way to AI success? Let’s get started!

🔗 Recommended Links for Further Exploration

Looking to explore or deploy the models and tools we discussed? Here are some curated shopping and resource links to get you started:

- OpenAI GPT Models: Amazon Search: GPT-4 | OpenAI Official Website

- Moveworks MoveLM™: Moveworks Official Website

- EleutherAI GPT-J: EleutherAI GitHub | Amazon Search: GPT-J

- Stability AI StableLM: Stability AI Official | Amazon Search: StableLM

- Databricks Dolly: Databricks Dolly Official

- MosaicML MPT: MosaicML Official | Amazon Search: MPT

- Teradata ClearScape Analytics™: Teradata Official

❓ Frequently Asked Questions About Language Model Benchmarking

What are the key metrics for benchmarking language models in business?

Benchmarking language models for business involves a multi-dimensional evaluation beyond simple accuracy. Key metrics include:

- Accuracy: How well the model’s output matches expected results, often measured by exact match or F1-score.

- Latency: The time it takes for the model to generate a response, critical for real-time applications.

- Cost: Total cost of running the model at scale, including compute and API expenses.

- Trust and Safety: Measures of hallucination rates, bias, fairness, and toxicity to ensure reliable and ethical outputs.

- Sustainability: Environmental impact, often approximated by energy consumption or response time.

- Scalability: Ability to maintain performance under increasing loads.

These metrics help businesses balance performance with operational constraints and ethical considerations.
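As one example of turning a trust metric into a number, the sketch below computes accuracy per group and the largest gap between groups, a simple proxy for the fairness question. What counts as a "group" (user type, language style, region) is an assumption you define from your own data, and the function names are illustrative.

```python
from collections import defaultdict


def accuracy_by_group(records) -> dict[str, float]:
    """records: iterable of (group, is_correct) pairs from a benchmark run."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        hits[group] += int(is_correct)
    return {group: hits[group] / totals[group] for group in totals}


def max_gap(per_group: dict[str, float]) -> float:
    """Difference between the best and worst group accuracy."""
    values = list(per_group.values())
    return max(values) - min(values) if values else 0.0
```

A large gap does not prove bias on its own, since groups can differ in task difficulty, but it flags where human reviewers should look first.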

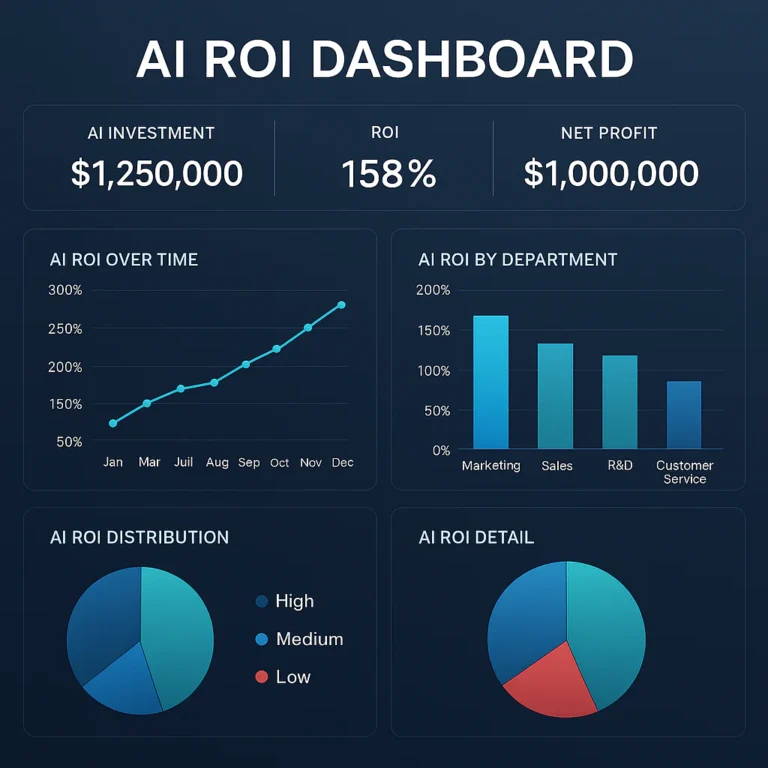

How can benchmarking language models improve AI-driven business strategies?

Benchmarking provides data-driven insights into which models perform best for specific tasks, enabling businesses to:

- Optimize Costs: Choose models that deliver the best ROI by balancing accuracy and resource consumption.

- Enhance User Experience: Select models with low latency and high trustworthiness to improve customer satisfaction.

- Drive Innovation: Identify strengths and weaknesses to tailor AI solutions that unlock new capabilities.

- Mitigate Risks: Detect biases and hallucinations early, protecting brand reputation and compliance.

- Inform Roadmaps: Guide investment in fine-tuning, infrastructure, and integration based on empirical evidence.

In essence, benchmarking turns AI from a black box into a strategic asset.

Which language models perform best for customer service applications?

For customer service, fine-tuned, domain-specific models consistently outperform large, general-purpose models. For example:

- Moveworks MoveLM™ excels in understanding enterprise-specific jargon and workflows, delivering accurate intent classification and API call generation.

- OpenAI’s GPT-4 offers strong general language understanding but may misinterpret specialized business contexts without fine-tuning.

- Open-source models like GPT-J or StableLM, when fine-tuned on customer support data, provide cost-effective alternatives with competitive performance.

The key is to fine-tune models on your own customer interaction data and benchmark them rigorously to ensure they meet your accuracy, latency, and trust requirements.

How does benchmarking language models help turn AI insight into competitive advantage?

Benchmarking transforms AI from a theoretical capability into actionable business intelligence by:

- Revealing Real-World Performance: It shows how models behave on your actual data, not just academic tests.

- Enabling Customization: Insights from benchmarking guide fine-tuning and prompt engineering to tailor models precisely.

- Reducing Deployment Risks: By identifying weaknesses early, benchmarking prevents costly failures and user dissatisfaction.

- Accelerating Innovation Cycles: Continuous benchmarking fosters rapid iteration and improvement.

- Supporting Strategic Decisions: It provides objective data to justify AI investments and align them with business goals.

This rigorous approach ensures AI initiatives deliver measurable value and sustainable competitive differentiation.

Additional FAQ Topics

What are the limitations of current public LLM benchmarks for enterprises?

Most public benchmarks focus on general knowledge and zero-shot tasks, lacking enterprise-specific jargon, workflows, and structured outputs. They often become outdated quickly and may not reflect real-world business complexity, leading to misleading conclusions.

How important is fine-tuning in improving LLM performance for business?

Fine-tuning on domain-specific data is crucial. It significantly boosts accuracy, reduces hallucinations, and improves latency and cost-efficiency. Without fine-tuning, even the most powerful models can underperform on specialized tasks.

Can smaller models really outperform giants like GPT-4?

Yes! When fine-tuned properly, smaller models (e.g., 7B parameters) can outperform much larger models on specific business tasks, offering faster responses and lower costs without sacrificing accuracy.

📚 Reference Links and Credible Sources

- Moveworks Enterprise LLM Benchmark: https://www.moveworks.com/us/en/resources/blog/moveworks-enterprise-llm-benchmark-evaluates-large-language-models-for-business-applications

- Teradata on LLM Benchmarking for Business Success: https://www.teradata.com/insights/ai-and-machine-learning/llm-benchmarking-business-success

- Salesforce AI Research CRM Benchmark: https://www.salesforceairesearch.com/crm-benchmark

- OpenAI GPT-4: https://openai.com/index/gpt-4/

- Moveworks Official Site: https://www.moveworks.com/

- EleutherAI GPT-J: https://github.com/sleekmike/Finetune_GPT-J_6B_8-bit

- Stability AI StableLM: https://stability.ai/news/introducing-stable-lm-2-12b

- Databricks Dolly: https://www.databricks.com/blog/2023/03/24/hello-dolly-democratizing-magic-chatgpt-open-models.html

- MosaicML MPT: https://www.mosaicml.com/blog/mpt-7b

- Teradata ClearScape Analytics™: https://www.teradata.com/platform/clearscape-analytics

For more on CRM-specific generative AI benchmarking, see Salesforce AI Research’s Generative AI Benchmark for CRM.

Ready to benchmark your AI models and turn insight into impact? Stay curious, stay informed, and keep pushing the boundaries of what AI can do for your business! 🚀