
Benchmarking Deep Learning Frameworks for Optimal Performance 🚀 (2025)

Choosing the right deep learning framework can feel like navigating a maze blindfolded. With giants like TensorFlow, PyTorch, and the rising star JAX all promising blazing speeds and seamless workflows, how do you know which one truly delivers the best bang for your buck? At ChatBench.org™, we’ve rolled up our sleeves and put these frameworks through their paces—measuring training throughput, inference latency, memory usage, and more—to uncover the real winners in the race for optimal performance.

In this comprehensive guide, we don’t just stop at raw speed. We reveal surprising insights from our benchmarking adventures, like how PyTorch’s seemingly slower epochs can sometimes converge faster than JAX’s lightning-fast iterations. Plus, we’ll walk you through setting up your own robust benchmarking environment, avoiding common pitfalls, and squeezing every ounce of performance from your hardware and software stack. Ready to turn AI insight into your competitive edge? Let’s dive in!

Key Takeaways

- Benchmarking is essential to move beyond marketing hype and make informed framework choices tailored to your specific models and hardware.

- TensorFlow excels in production environments with its mature ecosystem, while PyTorch dominates research thanks to its dynamic graphs and ease of use.

- JAX offers unmatched speed for advanced users through JIT compilation and functional programming but requires a steeper learning curve.

- Comprehensive metrics matter: training throughput, inference latency, memory footprint, GPU utilization, and scalability all paint a fuller picture.

- Avoid common benchmarking pitfalls like data loading bottlenecks and inconsistent hyperparameters to ensure trustworthy results.

- Integrate benchmarking into your MLOps pipeline for continuous performance monitoring and regression detection.

Curious about how mixed precision training or distributed strategies can boost your models even further? Stick around—we’ve got you covered!

Table of Contents

- ⚡️ Quick Tips and Facts: Your Deep Learning Performance Cheat Sheet

- 🚀 The Great Framework Face-Off: A Brief History of Deep Learning Frameworks

- 🎯 Why Benchmark? Unlocking Peak Performance and Informed Decisions

- 🥊 The Contenders: A Deep Dive into Popular Deep Learning Frameworks

- 🛠️ Setting the Stage: Essential Components of a Robust Benchmarking Environment

- 📊 The Art of Measurement: Key Metrics for Deep Learning Performance Benchmarking

  - Training Throughput: How Fast Can You Learn?

  - Inference Latency and Throughput: Real-Time Responsiveness

  - Memory Footprint: Managing Your GPU/CPU RAM Usage

  - GPU Utilization and Power Consumption: Efficiency Matters

  - Model Convergence Speed: Reaching Optimal Performance Faster

  - Scalability: Multi-GPU and Distributed Training Performance

- 📝 Crafting Your Battle Plan: A Step-by-Step Guide to Benchmarking Deep Learning Frameworks

  - Define Your Objectives: What Are You Trying to Optimize?

  - Choose Your Models and Datasets: Real-World Scenarios for Relevant Benchmarks

  - Standardize Your Environment: Eliminate Variables for Reproducible Results

  - Implement Consistent Code Across Frameworks: Apples-to-Apples Comparison

  - Warm-Up Your Engines: Pre-Run Considerations for Stable Metrics

  - Collect Comprehensive Metrics: Don’t Miss a Beat with Performance Profiling

  - Analyze and Visualize Your Results: Unlocking Insights from Performance Data

  - Iterate and Optimize: The Continuous Improvement Loop for Deep Learning Performance

- ⚠️ Common Pitfalls and How to Dodge Them: Benchmarking Blunders to Avoid

  - Ignoring Data Loading Bottlenecks: The Silent Performance Killer

  - Inconsistent Hyperparameters: Ensuring a Fair Fight

  - Not Accounting for Framework Overheads: Beyond Raw Computation

  - Benchmarking on Insufficient Hardware: Garbage In, Garbage Out

  - Misinterpreting Results: Correlation vs. Causation in Performance Analysis

- ✨ Advanced Optimization Techniques: Squeezing Every Ounce of Deep Learning Performance

  - Mixed Precision Training (FP16/BF16): Faster Training, Less Memory

  - Distributed Training Strategies: Scaling Up with Data and Model Parallelism

  - Graph Optimization and JIT Compilation: Unleashing Compiler Magic

  - Efficient Data Pipelines: Mastering tf.data, PyTorch DataLoaders, and More

  - Hardware-Specific Optimizations: Leveraging Tensor Cores, XLA, and Custom Kernels

- 🗣️ Real-World Anecdotes: Our Benchmarking Adventures at ChatBench.org™

- ⚙️ The MLOps Perspective: Integrating Benchmarking into Your Workflow and CI/CD

- 🔮 Future Trends in Deep Learning Performance: What’s Next on the Horizon?

- ✅ Conclusion: Your Path to Peak Deep Learning Performance

- 🔗 Recommended Links: Dive Deeper!

- ❓ FAQ: Your Burning Questions Answered

- 📚 Reference Links: Our Sources and Further Reading


⚡️ Quick Tips and Facts: Your Deep Learning Performance Cheat Sheet

Welcome, fellow AI enthusiasts, to the ChatBench.org™ labs! Before we dive deep into the computational abyss of benchmarking, let’s arm you with a quick cheat sheet. Think of this as the espresso shot you need before a marathon coding session.

Here’s what you absolutely need to know:

- ✅ Benchmarking is not optional; it’s essential for understanding real-world performance beyond what’s written on the hardware box. Can AI benchmarks be used to compare the performance of different AI frameworks? Yes, and it’s a critical practice for making informed decisions.

- ✅ Frameworks are not created equal. TensorFlow, PyTorch, and JAX each have distinct performance profiles. One might excel at training, another at inference, and a third might be the speed demon for specific research tasks.

- ✅ Hardware is more than just a GPU. Yes, your NVIDIA GPU is the star, but the CPU, RAM, and even storage speed can create significant bottlenecks.

- ✅ Mixed-precision training is your friend. Using 16-bit floating-point numbers (FP16/BF16) can dramatically speed up training and reduce memory usage, often with little to no loss in accuracy. Modern NVIDIA GPUs with Tensor Cores are specifically designed for this.

- ✅ Data pipelines can be silent performance killers. If your GPU is waiting for data, you’re wasting expensive compute cycles. Optimize your data loading!

- ❌ Don’t trust marketing numbers blindly. Always run your own benchmarks on your specific models and hardware.

- ❌ Don’t just measure speed. Look at memory usage, GPU utilization, and power consumption for a holistic view.

- ❌ Don’t compare apples to oranges. Ensure your tests use the same dataset, model architecture, and hyperparameters across different frameworks for a fair comparison.

🚀 The Great Framework Face-Off: A Brief History of Deep Learning Frameworks

Ever wonder how we got to the current clash of the titans between TensorFlow and PyTorch? It wasn’t an overnight revolution; it was a slow, gritty evolution, forged in the fires of academic labs and tech giants.

The story of deep learning frameworks is a fascinating journey from raw, hard-to-share code to the polished, powerful tools we have today.

- The Pioneers (Early 2000s-2010s): Before the giants, there were pioneers. Theano, developed at the Montreal Institute for Learning Algorithms (MILA), and Torch, created in 2002, were among the first to provide higher-level interfaces for building and training neural networks. They introduced key concepts like using GPUs to accelerate training, laying the groundwork for everything to come.

- The Rise of the Giants (Mid-2010s): The game changed in 2015 when Google unleashed TensorFlow. Designed for scalability and production, it quickly became an industry standard. Not long after, in 2016, Facebook (now Meta) released PyTorch, which gained immense popularity in the research community for its user-friendly, “Pythonic” approach and dynamic computation graphs.

- The Consolidation Era (Late 2010s-Present): The deep learning landscape saw a period of intense competition, leading to a “duopoly” of TensorFlow and PyTorch, which now account for over 95% of use cases. Other frameworks like Microsoft’s CNTK and Chainer either faded or merged their efforts into the leading platforms.

- The New Contender (2018-Present): Just when things seemed settled, Google Research introduced JAX. It’s not a full-fledged framework in the same vein as the others but a high-performance library for numerical computing, bringing features like automatic differentiation and JIT compilation to NumPy. Its raw speed and flexibility have made it a rising star, especially in the research community.

This history matters because each framework’s DNA influences its strengths and weaknesses today. TensorFlow’s industrial roots make it a powerhouse for deployment, while PyTorch’s research-friendly nature makes it ideal for rapid prototyping. And JAX? It’s the wild card, built for pure, unadulterated speed.

🎯 Why Benchmark? Unlocking Peak Performance and Informed Decisions

“Why bother with all this testing?” you might ask. “Can’t I just pick the most popular framework and get to work?”

Well, you could. But that’s like buying a supercar and never taking it out of first gear. Benchmarking isn’t just an academic exercise; it’s a critical practice that separates amateur efforts from professional, high-impact AI business applications. As the team behind GTDLBench notes, “it still remains a critical task on choosing the optimal framework for specific deep learning models and applications.”

Here at ChatBench.org™, we live by a simple mantra: “If you don’t measure it, you can’t improve it.”

Benchmarking allows you to:

- Make Informed Framework Choices: Is PyTorch really faster than TensorFlow for your specific computer vision model? Does JAX’s JIT compilation give you an edge in training large language models? Benchmarking provides concrete answers, not just anecdotal evidence.

- Identify Performance Bottlenecks: Is your expensive GPU sitting idle 50% of the time? Your bottleneck might not be the framework but your data loading pipeline or CPU preprocessing. Benchmarking tools help you pinpoint these issues.

- Optimize Hardware Utilization and Costs: By understanding how different frameworks and models use resources, you can choose the most cost-effective hardware. A study by Microway demonstrated huge performance differences between GPUs like the NVIDIA Tesla P100 and K80, showing that the right hardware choice can yield speedups of over 30x. This directly impacts your budget, whether you’re buying hardware or renting from cloud providers like DigitalOcean or Paperspace.

- Ensure Reproducibility and Regression Testing: As you update your code, drivers, or the frameworks themselves, performance can change. A solid benchmarking suite, like the open-source MLBench developed by Collabora, acts as a safety net, ensuring your latest “improvement” didn’t accidentally slow things down. This is a cornerstone of modern MLOps.

Ultimately, benchmarking is about moving from guesswork to data-driven decisions. It’s the compass that guides you toward optimal performance, ensuring your models train faster, your inferences are quicker, and your resources are used efficiently.

🥊 The Contenders: A Deep Dive into Popular Deep Learning Frameworks

Alright, let’s get to the main event! Choosing a deep learning framework is a bit like choosing a car. Do you need a reliable sedan for daily commutes (production), a nimble sports car for weekend track days (research), or a custom-built dragster for pure speed (high-performance computing)? Let’s pop the hoods on the main contenders.

TensorFlow: The Industrial Powerhouse and Its Ecosystem

Think of TensorFlow as the Tesla of frameworks: powerful, production-ready, and backed by a massive, automated ecosystem. It was built by Google for scale and has long been the go-to for deploying models in the wild.

Key Features & Benefits:

- ✅ Production-Ready: Tools like TensorFlow Serving and TFX (TensorFlow Extended) provide a robust, end-to-end pipeline for deploying and managing models at scale. This is a huge win for enterprise applications.

- ✅ Scalability: TensorFlow was designed for distributed training from the ground up, making it a strong choice for massive datasets and models.

- ✅ Ecosystem & Tooling: It boasts a mature ecosystem, including the user-friendly Keras API, visualization tools like TensorBoard, and support for mobile and edge devices with TensorFlow Lite.

- ✅ Cross-Platform Compatibility: With Keras 3, you can now write code that runs on TensorFlow, PyTorch, or JAX, offering unprecedented flexibility.

Drawbacks:

- ❌ Steeper Learning Curve: While Keras simplifies things, diving into pure TensorFlow can be less intuitive than PyTorch, with a more complex API and debugging process.

- ❌ Verbosity: The static graphs and session-based execution of TF 1.x felt boilerplate-heavy compared to the eager execution of its rivals. TF 2.x runs eagerly by default, but tracing code into graphs with tf.function can still add complexity.

Our Take: If your primary goal is deploying models reliably in a production environment, TensorFlow’s mature ecosystem is hard to beat. It’s the battle-tested veteran.

PyTorch: The Research Darling and Its Dynamic Charm

If TensorFlow is a Tesla, PyTorch is the agile rally car—flexible, responsive, and beloved by those who like to tinker and experiment. Developed by Meta AI, its “Pythonic” nature and ease of use have made it the dominant force in the research community.

Key Features & Benefits:

- ✅ Ease of Use & Debugging: PyTorch’s eager execution model means operations are run immediately. This makes debugging a breeze, as you can inspect tensors and gradients at any point using standard Python tools.

- ✅ Dynamic Computational Graphs: The graph is defined on the fly, which is a massive advantage for models with dynamic structures, common in Natural Language Processing (NLP).

- ✅ Strong Community & Research Adoption: A vast majority of new research papers are published with PyTorch code, meaning you’ll find cutting-edge models and a vibrant support community.

- ✅ Performance Boosts: With features like `torch.compile()` in PyTorch 2.x, it has significantly closed the performance gap with TensorFlow for training speed.

Drawbacks:

- ❌ Production Ecosystem Still Maturing: While tools like TorchServe are improving, PyTorch’s production deployment story has historically been less comprehensive than TensorFlow’s.

- ❌ Less Comprehensive Mobile Support: While it has options, TensorFlow Lite has traditionally been a more robust solution for on-device inference.

Our Take: For researchers, students, and anyone prioritizing rapid prototyping and a smooth development experience, PyTorch is the undisputed champion. It’s the framework that gets out of your way and lets you build.

JAX: The Future of High-Performance Numerical Computing?

JAX is the Formula 1 car of the group. It’s not for beginners, but in the hands of a skilled driver, it’s unbelievably fast. It combines NumPy’s familiar API with a JIT compiler (XLA), automatic differentiation, and powerful parallelization primitives.

Key Features & Benefits:

- ✅ Blazing Speed: JAX’s use of XLA (Accelerated Linear Algebra) for JIT compilation can result in significant speedups, especially on hardware accelerators like GPUs and TPUs.

- ✅ Functional Programming Paradigm: Its functional nature (e.g., pure functions) makes code easier to reason about, parallelize, and debug in complex scenarios.

- ✅ Powerful Transformations: JAX provides composable function transformations like `grad` (for gradients), `jit` (for compilation), and `vmap`/`pmap` (for automatic vectorization/parallelization) that are incredibly powerful.

- ✅ Growing Adoption: It’s being adopted by major research labs like DeepMind and is seeing increased use within Google itself.

Drawbacks:

- ❌ High Barrier to Entry: JAX requires a solid understanding of functional programming concepts and can be challenging for those accustomed to the object-oriented style of PyTorch or TensorFlow.

- ❌ Not a Full Framework: It lacks the high-level components of a full framework, like data loaders and training loops. You often need to pair it with libraries like Flax or Haiku to be productive.

- ❌ Compilation Overhead: The initial JIT compilation step can make it seem slower for small-scale tests, though this cost is amortized over many iterations in large-scale training.

Our Take: JAX is for performance purists and advanced researchers pushing the boundaries of what’s possible. If you’re working on massive models or novel algorithms and are willing to invest the time, JAX can unlock a new level of performance.

Other Notable Frameworks: Keras, MXNet, ONNX, and More

- Keras: Once a standalone high-level API, Keras is now the default API for TensorFlow and, with Keras 3, can run on top of PyTorch and JAX as well. It’s the best starting point for beginners.

- Apache MXNet: Once known for its excellent scalability and support for multiple languages, MXNet saw its popularity wane. The project was retired to the Apache Attic in 2023 and is no longer actively developed.

- ONNX (Open Neural Network Exchange): Not a framework, but a crucial standard. ONNX allows you to convert models between different frameworks (e.g., train in PyTorch, deploy with ONNX Runtime). This interoperability is key for flexible deployment workflows.

🛠️ Setting the Stage: Essential Components of a Robust Benchmarking Environment

You wouldn’t test a race car on a bumpy dirt road, would you? Similarly, a reliable benchmark requires a carefully controlled and standardized environment. Getting this right is non-negotiable for results you can trust.

Hardware Heroes: GPUs, CPUs, and TPUs – Oh My!

The hardware you run on is the single biggest factor in performance.

- GPUs (Graphics Processing Units): The workhorses of deep learning. When choosing a GPU, don’t just look at the model number. Key specs include:

- VRAM: Determines the size of the models and batches you can handle.

- CUDA Cores/Tensor Cores: More cores generally mean more parallel processing power. Tensor Cores, found in modern NVIDIA GPUs (like the RTX and A-series), are specifically designed to accelerate the matrix operations common in deep learning, especially with mixed precision.

- Memory Bandwidth: How fast data can move in and out of the GPU’s memory. This can be a major bottleneck.

- CPUs (Central Processing Units): Don’t neglect the CPU! It’s responsible for data loading, preprocessing, and orchestrating the GPU. A slow CPU can leave your expensive GPU starved for data.

- TPUs (Tensor Processing Units): Google’s custom ASICs (Application-Specific Integrated Circuits) are designed from the ground up for deep learning. They excel at large-scale training and are a key part of the Google Cloud ecosystem.


Software Stack: Drivers, CUDA, cuDNN, and Beyond for Optimal Performance

Your software environment is just as critical as your hardware. An outdated driver can cripple performance.

- NVIDIA Drivers: The foundational software that allows your OS to communicate with the GPU. Always use the latest stable version recommended for your toolkit.

- CUDA Toolkit: CUDA® is NVIDIA’s parallel computing platform and programming model. It provides the APIs that deep learning frameworks use to run code on the GPU.

- cuDNN (CUDA Deep Neural Network library): This is a GPU-accelerated library of primitives for deep neural networks. It provides highly tuned implementations for standard operations like convolutions and pooling, which frameworks plug into for a massive performance boost.

- Framework Versions: Always benchmark using the latest stable releases of TensorFlow, PyTorch, etc., as they often include significant performance optimizations.

Setting this up can be tricky, but it’s a crucial first step.

Data Dynamics: Preparing Your Datasets for Fair Play and Reproducibility

Your data setup can make or break your benchmark. The goal is to ensure the test is fair and the results are reproducible.

- Standardized Datasets: Use well-known public datasets like ImageNet for vision or GLUE for NLP. This allows you to compare your results against established benchmarks.

- Consistent Data Splits: Always use the exact same training, validation, and test splits for every framework and every run. Any variation here will invalidate your comparisons.

- Pre-loading and Caching: To isolate framework performance from I/O bottlenecks, consider pre-loading your entire dataset into RAM if possible. This ensures the GPU is never waiting on the disk.

- Data Augmentation: Be consistent! Ensure that any data augmentation pipelines are identical across the frameworks you are testing.

By standardizing your hardware, software, and data, you eliminate confounding variables and can be confident that the differences you measure are due to the frameworks themselves.
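A simple way to guarantee identical splits is to generate them once from a fixed seed and save the indices to a file that every framework's script reads. Here's a minimal Python sketch. It assumes your samples can be addressed by integer index; the function name and the 80/10/10 ratios are our own illustrative choices.

```python
import random


def make_splits(num_samples, seed=42, train_frac=0.8, val_frac=0.1):
    """Return reproducible train/val/test index lists for num_samples items."""
    indices = list(range(num_samples))
    # A dedicated Random instance keeps this shuffle independent of global RNG state.
    random.Random(seed).shuffle(indices)
    n_train = int(num_samples * train_frac)
    n_val = int(num_samples * val_frac)
    return {
        "train": indices[:n_train],
        "val": indices[n_train:n_train + n_val],
        "test": indices[n_train + n_val:],  # whatever remains goes to test
    }


splits = make_splits(1000)
print({name: len(idx) for name, idx in splits.items()})  # {'train': 800, 'val': 100, 'test': 100}
```

Dump the returned dict with json.dump once and commit the file next to your benchmark code. Each framework then loads it instead of reshuffling on its own.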

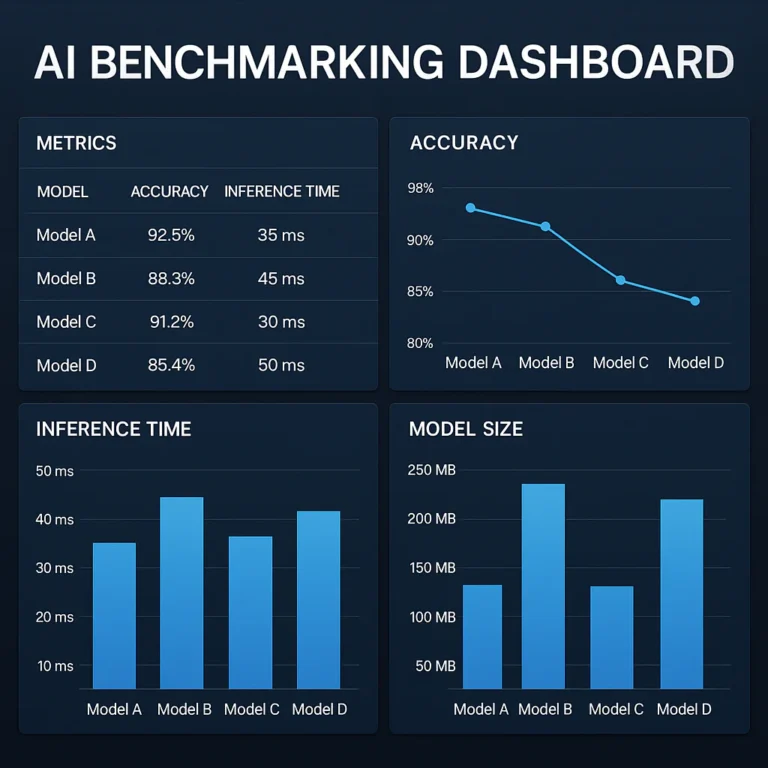

📊 The Art of Measurement: Key Metrics for Deep Learning Performance Benchmarking

So, you’ve set up your pristine environment. Now, what exactly should you measure? Simply timing a training run isn’t enough. A comprehensive benchmark looks at a variety of key performance indicators (KPIs) to paint a complete picture.

Training Throughput: How Fast Can You Learn?

This is the headline metric. It measures the speed of the training process.

- What it is: The number of training samples processed per second (e.g., images/sec, tokens/sec).

- Why it matters: Higher throughput means faster training, allowing you to iterate on your models more quickly. This is a critical factor in fine-tuning and training.

- How to measure: Divide the batch size by the average time it takes to process one batch (forward pass, backward pass, and optimizer step), measured after warm-up.
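As a formula, throughput = batch size / mean step time. Here's a minimal helper. It assumes the step times were recorded in seconds after warm-up. For language models, you could pass batch size × sequence length as the first argument to get tokens per second instead.

```python
import statistics


def training_throughput(batch_size, step_times_s):
    """Samples per second given per-step wall-clock times in seconds.

    Each step time should cover the forward pass, backward pass, and
    optimizer update for one batch, recorded after warm-up.
    """
    return batch_size / statistics.mean(step_times_s)


# Illustrative step times (seconds), not real measurements.
print(training_throughput(256, [0.25, 0.50, 0.75]))  # 512.0
```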

Inference Latency and Throughput: Real-Time Responsiveness

Once a model is trained, its performance in production is key.

- Latency: The time it takes to make a single prediction on one input. This is crucial for real-time applications like autonomous driving or interactive chatbots.

- Throughput: The number of inferences the model can perform per second. This is important for services that handle many user requests simultaneously.

- The Trade-off: Optimizing for low latency (e.g., using a batch size of 1) usually reduces overall throughput. Larger batches raise throughput at the cost of per-request latency.
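For interactive services, tail latency matters as much as the average, so results are commonly reported as percentiles such as p50, p95, and p99. Here's a minimal sketch using the nearest-rank percentile definition. It assumes you've already collected per-request latencies in milliseconds. The throughput figure also assumes requests are served one batch at a time with no concurrency, which is a simplification.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile for p in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def summarize_latency(latencies_ms, batch_size=1):
    """Tail latencies plus the throughput implied by the mean latency.

    Assumes batches are processed sequentially (no concurrent requests).
    """
    mean_ms = sum(latencies_ms) / len(latencies_ms)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "p99_ms": percentile(latencies_ms, 99),
        "throughput_per_s": batch_size * 1000.0 / mean_ms,
    }
```

Comparing summarize_latency at batch size 1 against larger batches makes the trade-off above concrete. Throughput should rise while p95/p99 climb.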

Memory Footprint: Managing Your GPU/CPU RAM Usage

How much memory does your model consume?

- What it is: The amount of VRAM (on the GPU) and RAM (on the CPU) used during training and inference.

- Why it matters: VRAM limits your batch size and model complexity. A model with a smaller memory footprint can be trained with larger batches (improving throughput) or deployed on less expensive hardware.

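- How to measure: Use tools like `nvidia-smi` for GPU memory and system monitoring tools for CPU RAM. Note that nvidia-smi reports memory reserved by the framework’s caching allocator, which can exceed what tensors actually occupy; PyTorch’s torch.cuda.max_memory_allocated() reports the latter.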
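If you'd rather log memory from a script than eyeball nvidia-smi, its query mode prints machine-readable CSV. Here's a minimal sketch, assuming an NVIDIA driver is installed. The query fields are standard nvidia-smi fields, but the parsing helper and its output keys are our own. A GPU that doesn't support a field reports a bracketed placeholder such as [N/A], and the parser maps that to None.

```python
import subprocess

FIELDS = ("index", "memory.used", "memory.total", "utilization.gpu", "power.draw")


def query_nvidia_smi():
    """Run nvidia-smi once and return its raw CSV text (needs an NVIDIA driver)."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS),
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def _number(field):
    """Convert a field to float; unsupported metrics (e.g. '[N/A]') become None."""
    try:
        return float(field)
    except ValueError:
        return None


def parse_gpu_stats(csv_text):
    """Turn nvidia-smi CSV rows into dicts (memory in MiB, power in W)."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total, util, power = (f.strip() for f in line.split(","))
        rows.append({
            "gpu": int(index),
            "mem_used_mib": _number(used),
            "mem_total_mib": _number(total),
            "util_pct": _number(util),
            "power_w": _number(power),
        })
    return rows
```

Call parse_gpu_stats(query_nvidia_smi()) periodically during the measured window, for example once per second from a background thread. That gives you a memory trace to plot, with utilization and power readings along for free.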

GPU Utilization and Power Consumption: Efficiency Matters

Is your GPU actually working hard?

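- GPU Utilization: As reported by nvidia-smi, the percentage of the sampling period during which at least one kernel was running on the GPU. Low utilization is a red flag, often pointing to a data loading bottleneck. High utilization, however, does not guarantee that every core is busy.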

- Power Consumption: Measured in watts, this is increasingly important for both cost and environmental reasons. A more efficient framework/hardware combo can save significant money at scale.

- Performance-per-Watt: A great metric that combines speed and power consumption to measure overall efficiency.
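Performance-per-watt follows from numbers you already have. Dividing throughput (samples/s) by average power (W) gives samples per joule. Here's a minimal sketch, assuming you've polled power and utilization readings during the steady-state part of the run, for example from nvidia-smi. The 70% utilization threshold for flagging a possible input bottleneck is our own arbitrary rule of thumb, not a standard.

```python
import statistics


def efficiency_report(samples_per_s, power_watts, util_pct, low_util_threshold=70.0):
    """Combine throughput with periodic power and utilization readings."""
    mean_power = statistics.mean(power_watts)
    mean_util = statistics.mean(util_pct)
    return {
        # (samples / s) / (J / s) = samples per joule
        "samples_per_joule": samples_per_s / mean_power,
        "mean_util_pct": mean_util,
        "possible_input_bottleneck": mean_util < low_util_threshold,
    }
```

Keep in mind that nvidia-smi's power.draw covers the GPU board, not the whole server. Include CPU and system power if you want a full cost picture.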

Model Convergence Speed: Reaching Optimal Performance Faster

How many epochs does it take for your model to reach a target accuracy?

- What it is: The rate at which the model’s loss decreases and accuracy improves.

- Why it matters: A framework or set of hyperparameters that leads to faster convergence can save a huge amount of training time, even if its per-batch throughput is slightly lower.

- How to measure: Plot the validation accuracy/loss against the number of training steps or epochs.
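One metric ties convergence and throughput together: time-to-accuracy, which is the number of epochs needed to hit a target multiplied by the time per epoch. Here's a small sketch. The accuracy curves and epoch times in the usage lines are hypothetical, not measured results; they show how a run with slower epochs can still finish first.

```python
def time_to_accuracy(val_acc_per_epoch, seconds_per_epoch, target):
    """Wall-clock seconds until validation accuracy first reaches target.

    Returns None if the target is never reached. Assumes a constant epoch time.
    """
    for epoch, acc in enumerate(val_acc_per_epoch, start=1):
        if acc >= target:
            return epoch * seconds_per_epoch
    return None


# Hypothetical runs: A has faster epochs, B converges in fewer of them.
run_a = time_to_accuracy([0.62, 0.75, 0.83, 0.88, 0.90], seconds_per_epoch=60.0, target=0.90)
run_b = time_to_accuracy([0.70, 0.84, 0.91], seconds_per_epoch=80.0, target=0.90)
print(run_a, run_b)  # 300.0 240.0
```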

Scalability: Multi-GPU and Distributed Training Performance

How well does performance increase when you add more hardware?

- What it is: The speedup gained from using multiple GPUs or nodes compared to a single one.

- Why it matters: For training massive models, good scalability is essential. Poor scaling indicates communication overhead is becoming a bottleneck.

- How to measure: Compare the training throughput on 1, 2, 4, and 8 GPUs. Ideal linear scaling would mean 8 GPUs are 8x faster than one.
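Scaling is usually reported as speedup and efficiency. Speedup is throughput on N GPUs divided by throughput on one, and efficiency is speedup divided by N. Here's a minimal sketch; the throughput numbers in the usage lines are placeholders, not measurements.

```python
def scaling_report(throughput_by_gpus):
    """Speedup and efficiency relative to the single-GPU run.

    throughput_by_gpus maps GPU count -> samples/sec and must include 1.
    An efficiency of 1.0 means perfect linear scaling.
    """
    base = throughput_by_gpus[1]
    report = {}
    for n, tput in sorted(throughput_by_gpus.items()):
        speedup = tput / base
        report[n] = {"speedup": speedup, "efficiency": speedup / n}
    return report


# Placeholder numbers for illustration only.
for n, row in scaling_report({1: 100.0, 2: 190.0, 4: 360.0, 8: 640.0}).items():
    print(n, f"speedup={row['speedup']:.2f}", f"efficiency={row['efficiency']:.0%}")
```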

By tracking this diverse set of metrics, you can move beyond a simple “which is fastest” comparison to a nuanced understanding of each framework’s strengths, weaknesses, and trade-offs. This is the core of effective model comparisons.

📝 Crafting Your Battle Plan: A Step-by-Step Guide to Benchmarking Deep Learning Frameworks

Ready to roll up your sleeves? Here’s our step-by-step guide to running a benchmark that’s both meaningful and reproducible. Think of this as your mission briefing.

1. Define Your Objectives: What Are You Trying to Optimize?

First, ask yourself: what is the goal? Are you…

- Choosing a framework for a new project?

- Optimizing an existing model for faster training?

- Evaluating hardware for a new data center?

- Trying to reduce inference latency for a real-time application?

Your objective will determine which metrics are most important to you.

2. Choose Your Models and Datasets: Real-World Scenarios for Relevant Benchmarks

Select models and datasets that are representative of your actual workload.

- For Computer Vision: Standard choices include ResNet-50, EfficientNet, or Vision Transformers (ViT) on datasets like CIFAR-10 or ImageNet.

- For NLP: BERT-Large or variants of GPT are common benchmarks for tasks on the GLUE or SQuAD datasets.

- Your Own Model: The best benchmark is always your own custom model on your own data.

3. Standardize Your Environment: Eliminate Variables for Reproducible Results

This is crucial for a fair fight. As we covered earlier, ensure that across all tests, you use the exact same:

- Hardware: Same GPU(s), CPU, and server.

- Software: Same OS, NVIDIA driver version, CUDA/cuDNN versions.

- Framework Versions: Pin the exact versions of TensorFlow, PyTorch, etc.
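Pinning versions is easier to verify if every result file records the environment it came from. Here's a minimal sketch using only the standard library. The package list is an example. GPU driver and CUDA versions aren't captured here; you'd get those from nvidia-smi or from framework attributes such as torch.version.cuda.

```python
import json
import platform
import sys
from importlib import metadata


def environment_snapshot(packages=("torch", "tensorflow", "jax", "numpy")):
    """Record interpreter, OS, and package versions to store with benchmark results."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


if __name__ == "__main__":
    print(json.dumps(environment_snapshot(), indent=2))
```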

4. Implement Consistent Code Across Frameworks: Apples-to-Apples Comparison

This is where the real work comes in. You need to implement the model and training loop in each framework as identically as possible. Pay close attention to:

- Model Architecture: Ensure the number of layers, neurons, filter sizes, and activation functions are identical.

- Optimizer: Use the same optimizer (e.g., AdamW) with the same hyperparameters (learning rate, weight decay).

- Loss Function: Use the same loss function (e.g., Cross-Entropy Loss).

- Data Preprocessing & Augmentation: The pipeline must be equivalent.

5. Warm-Up Your Engines: Pre-Run Considerations for Stable Metrics

Don’t measure the first few iterations! GPUs and frameworks often have “warm-up” periods where caches are being populated or initial compilations happen.

- Run the training loop for a few hundred iterations before you start measuring.

- This ensures you’re capturing the steady-state performance, not the noisy start-up phase.
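A warm-up-aware timing loop can be sketched framework-agnostically. The function below discards the first `warmup` calls and then times each remaining call with time.perf_counter. One caveat matters on GPUs: kernels launch asynchronously, so the timer can stop before the work is done. The optional `sync` callable covers this. Passing torch.cuda.synchronize is one option for PyTorch; in JAX you would instead have step_fn call .block_until_ready() on its output. The function name and parameters are our own.

```python
import time


def benchmark_steps(step_fn, warmup=50, measured=200, sync=None):
    """Return per-step wall-clock times (seconds), excluding warm-up iterations.

    sync: optional no-argument callable that blocks until queued device work
    has finished (e.g. torch.cuda.synchronize); None for CPU-only code.
    """
    for _ in range(warmup):
        step_fn()
    if sync is not None:
        sync()  # make sure warm-up work is not billed to the first timed step
    times = []
    for _ in range(measured):
        start = time.perf_counter()
        step_fn()
        if sync is not None:
            sync()
        times.append(time.perf_counter() - start)
    return times
```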

6. Collect Comprehensive Metrics: Don’t Miss a Beat with Performance Profiling

During the benchmark run, collect all the key metrics we discussed earlier:

- Training/Inference Throughput

- Latency

- GPU/CPU Utilization

- Memory Usage

- Power Consumption

Use tools like NVIDIA’s nvidia-smi for GPU stats and framework-specific profilers like TensorBoard Profiler or PyTorch Profiler for detailed insights.

7. Analyze and Visualize Your Results: Unlocking Insights from Performance Data

Numbers in a spreadsheet are hard to interpret. Visualize your results!

- Use bar charts to compare throughput and latency.

- Use line graphs to plot memory usage or loss curves over time.

- Create tables to summarize the key findings clearly.

This is where you’ll spot the trends and draw your conclusions.
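Even a plain-text summary table beats raw logs. Here's a minimal sketch that averages repeated throughput runs per configuration and expresses each one relative to a baseline you choose. The configuration names and numbers in the usage line are placeholders. For charts, you could feed the same means into a plotting library such as matplotlib.

```python
def summary_table(results, baseline):
    """Plain-text table of mean throughput per configuration, relative to baseline.

    results maps a configuration name to a list of samples/sec from repeated runs.
    """
    means = {name: sum(v) / len(v) for name, v in results.items()}
    base = means[baseline]
    lines = [f"{'config':<12}{'mean/s':>10}{'vs ' + baseline:>14}"]
    # Fastest configuration first.
    for name, mean in sorted(means.items(), key=lambda kv: -kv[1]):
        lines.append(f"{name:<12}{mean:>10.1f}{mean / base:>13.2f}x")
    return "\n".join(lines)


print(summary_table({"framework_a": [410.0, 400.0], "framework_b": [520.0, 515.0]},
                    baseline="framework_a"))
```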

8. Iterate and Optimize: The Continuous Improvement Loop for Deep Learning Performance

A benchmark is not a one-and-done affair. Use the insights you’ve gained to make changes and then—you guessed it—benchmark again!

- Did you find a data loading bottleneck? Fix it and re-run.

- Is one framework using too much memory? Try reducing the batch size or enabling mixed precision and measure the impact.

This iterative process is the heart of performance optimization.

⚠️ Common Pitfalls and How to Dodge Them: Benchmarking Blunders to Avoid

Here at ChatBench.org™, we’ve seen it all. We’ve made the mistakes so you don’t have to. Benchmarking is fraught with subtle traps that can invalidate your results. Here are the most common blunders and how to sidestep them like a pro.

Ignoring Data Loading Bottlenecks: The Silent Performance Killer

This is the #1 mistake we see. You have a top-of-the-line NVIDIA H100 GPU, but your training is inexplicably slow. You check your GPU utilization and it’s hovering at 40%. What gives?

- The Pitfall: Your data loading and preprocessing pipeline, running on the CPU, can’t keep up with the GPU. The GPU finishes a batch and then sits idle, waiting for the next one.

- How to Dodge It:

- ✅ Use efficient data loaders: Both TensorFlow’s `tf.data` and PyTorch’s `DataLoader` have powerful features for parallel loading and prefetching. Use them!

- ✅ Profile your CPU: Check if your CPU cores are maxed out. If so, this is a strong sign of a bottleneck.

- ✅ Move preprocessing to the GPU: If possible, perform data augmentation and normalization on the GPU to free up the CPU.
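Before reaching for fixes, confirm that data loading really is the bottleneck. Here's a minimal, framework-agnostic sketch that splits loop time into waiting for the next batch versus running the step. It assumes train_step blocks until its work finishes; for GPU frameworks, that means synchronizing, otherwise compute time is under-counted. The function name is our own.

```python
import time


def measure_input_wait(batches, train_step):
    """Return seconds spent waiting on the iterator vs. in train_step.

    batches: any iterable (e.g. a DataLoader or a tf.data dataset).
    train_step: callable that processes one batch and returns when done.
    """
    wait = compute = 0.0
    it = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            batch = next(it)
        except StopIteration:
            break
        t1 = time.perf_counter()
        train_step(batch)
        t2 = time.perf_counter()
        wait += t1 - t0
        compute += t2 - t1
    total = wait + compute
    return {"wait_s": wait, "compute_s": compute,
            "wait_fraction": wait / total if total else 0.0}
```

If wait_fraction stays high, the usual levers are num_workers and pin_memory on PyTorch's DataLoader, and dataset.prefetch(tf.data.AUTOTUNE) in tf.data.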

Inconsistent Hyperparameters: Ensuring a Fair Fight

You’re comparing two frameworks, and one seems much faster at converging to a good accuracy. Victory, right? Not so fast.

- The Pitfall: You used a different learning rate schedule or weight decay value in one framework versus the other. These hyperparameters have a massive impact on convergence speed.

- How to Dodge It:

- ✅ Create a single source of truth: Store all your hyperparameters in a single configuration file (e.g., a YAML or JSON file) that both training scripts read from.

- ✅ Double-check optimizer defaults: Optimizers in different frameworks might have slightly different default values for parameters like epsilon. Explicitly set every parameter to ensure consistency.
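Here's what that single source of truth might look like: one JSON file with every optimizer field spelled out, plus a loader that refuses to run if anything is missing. The key names are hypothetical. The defaults difference noted in the comment is exactly the kind of silent mismatch this guards against.

```python
import json

# Keras' Adam defaults to epsilon=1e-7 while PyTorch's Adam defaults to
# eps=1e-8, so every optimizer field is required rather than defaulted.
REQUIRED_KEYS = {"learning_rate", "weight_decay", "beta1", "beta2", "epsilon",
                 "batch_size", "epochs", "seed"}


def load_hparams(text):
    """Parse the shared JSON config and fail loudly if any field is missing."""
    cfg = json.loads(text)
    missing = REQUIRED_KEYS - cfg.keys()
    if missing:
        raise ValueError(f"config is missing: {sorted(missing)}")
    return cfg
```

Each framework's script then maps these fields onto its own argument names, for example eps in torch.optim.AdamW and epsilon in keras.optimizers.AdamW.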

Not Accounting for Framework Overheads: Beyond Raw Computation

Sometimes, the raw number-crunching speed is similar, but one framework feels sluggish.

- The Pitfall: Frameworks have overheads related to graph creation, kernel launching, and Python interpreter interactions. This can be especially noticeable with small models or small batch sizes.

- How to Dodge It:

- ✅ Use framework-specific profilers: They can break down the time spent in different parts of the code, revealing where the overhead lies.

- ✅ Test with realistic batch sizes: Benchmarking with a batch size of 1 might exaggerate Python overhead. Use batch sizes that reflect your production use case.
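To see why tiny batches exaggerate overhead, model a training step as a fixed per-step cost (Python dispatch, kernel launches) plus a per-sample compute cost. The sketch below is that simplified model, not a measurement. The 2 ms overhead and 0.05 ms per-sample figures are made-up parameters; you would replace them with values fitted from your own profiler runs.

```python
def modeled_throughput(batch_size, fixed_overhead_ms, per_sample_ms):
    """Samples/sec when step time = fixed overhead + batch_size * per-sample cost."""
    step_ms = fixed_overhead_ms + batch_size * per_sample_ms
    return batch_size * 1000.0 / step_ms


# Assumed parameters, for illustration only.
for bs in (1, 8, 64, 512):
    print(bs, round(modeled_throughput(bs, fixed_overhead_ms=2.0, per_sample_ms=0.05), 1))
```

Under these assumed numbers, batch-size-1 throughput comes out roughly 38x lower than at batch size 512. The gap comes almost entirely from the fixed term, which dominates small steps.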

Benchmarking on Insufficient Hardware: Garbage In, Garbage Out

Picture this: you’re benchmarking a massive language model on a single consumer-grade GPU.

- The Pitfall: The results will not be representative of how the model would perform in a real-world, multi-GPU training environment. You might hit VRAM limits that force you to use tiny batch sizes, skewing the results.

- How to Dodge It:

- ✅ Match the hardware to the task: Use hardware that is appropriate for the scale of the models you are testing.

- ✅ Be transparent about your setup: If you must use limited hardware, clearly state the limitations in your results and avoid making broad generalizations.

Misinterpreting Results: Correlation vs. Causation in Performance Analysis

You update your NVIDIA drivers and see a 5% speedup. You conclude the new driver is 5% faster.

- The Pitfall: The real cause might be that the new driver fixed a bug that was causing thermal throttling on your specific GPU, or maybe a background process on your machine stopped running.

- How to Dodge It:

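- ✅ Run multiple trials: Always run each benchmark multiple times (e.g., 3-5 runs), exclude warm-up runs that include one-off costs such as JIT compilation, and report both the average and the spread (e.g., the standard deviation). A 5% difference means little if run-to-run noise is also around 5%.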

- ✅ Isolate variables: Change only one thing at a time. If you want to test a new driver, keep the framework version, code, and data identical.
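A tiny harness that covers the repeated-trials advice might look like the sketch below. It discards warm-up calls, times several trials, and reports mean and standard deviation. The workload function is a hypothetical CPU stand-in. PyTorch and JAX dispatch GPU work asynchronously, so when timing a GPU step the timed function should block until results are ready, for example by calling torch.cuda.synchronize() in PyTorch or .block_until_ready() on a JAX result.

```python
import statistics
import time
from typing import Callable, List, Tuple


def benchmark(fn: Callable[[], object], *, warmup: int = 2, trials: int = 5) -> Tuple[float, float, List[float]]:
    """Time `fn` over several trials after discarding warm-up runs.

    Warm-up calls absorb one-off costs such as JIT compilation, cache filling,
    and memory-pool growth, so they are not mixed into the reported numbers.
    Returns (mean_seconds, stdev_seconds, per_trial_seconds).
    """
    if trials < 2:
        raise ValueError("need at least two trials to estimate run-to-run spread")
    for _ in range(warmup):
        fn()
    times = []
    for _ in range(trials):
        start = time.perf_counter()
        fn()  # for asynchronous GPU work, fn must block until results are ready
        times.append(time.perf_counter() - start)
    return statistics.mean(times), statistics.stdev(times), times


def workload() -> int:
    # Hypothetical stand-in; replace with one training or inference step.
    return sum(i * i for i in range(200_000))


mean_s, stdev_s, _ = benchmark(workload)
print(f"{mean_s * 1e3:.2f} ms ± {stdev_s * 1e3:.2f} ms over 5 trials")
```

Reporting the spread alongside the mean lets a reader judge whether a 5% "speedup" is larger than the noise.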

Avoiding these pitfalls is key to producing benchmarks that are not just numbers, but trustworthy insights.

✨ Advanced Optimization Techniques: Squeezing Every Ounce of Deep Learning Performance

Once you’ve benchmarked and found your baseline, the real fun begins: optimization. Here are some of the advanced techniques we use to push our models to the limit.

Mixed Precision Training (FP16/BF16): Faster Training, Less Memory

This is one of the most impactful optimizations you can make.

- What it is: Traditional training uses 32-bit floating-point numbers (FP32). Mixed precision training uses a combination of 16-bit (FP16 or BFloat16) and 32-bit precision. Most calculations are done in the faster, less memory-intensive 16-bit format, while critical parts like weight updates are kept in 32-bit to maintain accuracy.

- Benefits:

- Speed: Modern NVIDIA GPUs have specialized Tensor Cores that provide a massive throughput boost for 16-bit matrix operations.

- Memory: A 16-bit value takes half the memory of a 32-bit one, so activations (and, depending on the setup, gradients and weight copies) shrink substantially, allowing you to use larger models or bigger batch sizes.

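- How to use it: Both PyTorch (with torch.amp, which supersedes the older torch.cuda.amp namespace) and TensorFlow (with the tf.keras.mixed_precision API) have built-in support for Automatic Mixed Precision (AMP), making it easy to enable with just a few lines of code. With FP16 (BF16 usually doesn't need it), pair AMP with loss scaling, such as PyTorch's GradScaler, so that small gradients don't underflow to zero.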
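FP16 needs loss scaling because of its narrow exponent range: very small gradients round to zero. The sketch below simulates this with the standard library only. struct's "e" format is IEEE 754 half precision. It then adds a simplified dynamic loss scaler in the spirit of PyTorch's GradScaler, whose default starting scale is 2**16. This illustrates the idea only and is not how GradScaler is implemented. For example, real scalers also grow the scale again after a run of overflow-free steps.

```python
import math
import struct
from typing import List, Optional, Tuple


def to_fp16(x: float) -> float:
    """Round x to the nearest FP16 value; out-of-range values become +/-inf."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:  # struct refuses values beyond FP16's finite range
        return math.copysign(math.inf, x)


def unscale_or_skip(grads: List[float], scale: float) -> Tuple[Optional[List[float]], float]:
    """One step of a simplified dynamic loss scaler.

    Scaling the loss by `scale` scales every gradient by the same factor
    (chain rule), which we mimic by multiplying the gradients directly.
    If any scaled gradient overflows FP16, skip the update and halve the
    scale; otherwise return the unscaled gradients and keep the scale.
    """
    scaled = [to_fp16(g * scale) for g in grads]
    if any(math.isinf(g) or math.isnan(g) for g in scaled):
        return None, scale / 2
    return [g / scale for g in scaled], scale


tiny_grad = 1e-8  # hypothetical gradient, below FP16's smallest subnormal
print(to_fp16(tiny_grad))                        # underflows: the update would be lost
print(unscale_or_skip([tiny_grad], 2.0 ** 16))   # survives when scaled first
print(unscale_or_skip([1.0], 2.0 ** 16))         # overflow: skip the step, halve the scale
```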

Distributed Training Strategies: Scaling Up with Data and Model Parallelism

When a single GPU isn’t enough, you need to go distributed. The two main strategies are:

- Data Parallelism: This is the most common approach. You replicate your model on multiple GPUs, and each GPU processes a different slice of the data batch. Gradients are then synchronized and averaged across all GPUs to update the weights. It’s relatively easy to implement and works well when your model fits on a single GPU.

- Model Parallelism: When your model is too large to fit into a single GPU’s memory, you must use model parallelism. This involves splitting the model itself across multiple GPUs, with each GPU responsible for a different part of the network (e.g., different layers). This is more complex to implement but is necessary for training massive models like GPT-3.

- Hybrid Approaches: For the largest models, a combination of data and model parallelism is often used. Libraries and features such as DeepSpeed and PyTorch's Fully Sharded Data Parallel (FSDP), which shards parameters, gradients, and optimizer state across GPUs, offer advanced hybrid and sharded strategies.

Graph Optimization and JIT Compilation: Unleashing Compiler Magic

- What it is: Deep learning frameworks can represent your model as a computation graph. A Just-In-Time (JIT) compiler can take this graph, perform optimizations like “operator fusion” (merging multiple operations into a single, more efficient one), and compile it into highly optimized machine code.

- Who does it best:

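- JAX is built around this concept: jax.jit traces your function and compiles it with the XLA compiler.

- TensorFlow uses XLA as well, which can be enabled with a single argument, e.g. tf.function(jit_compile=True).

- PyTorch has torch.compile(), which provides similar JIT compilation benefits (its default backend, TorchInductor, generates fused kernels) and has become a major feature in PyTorch 2.x.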
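Operator fusion is easiest to see in a toy example. In the sketch below, Python lists stand in for GPU memory. The unfused version runs three separate passes and materializes two intermediate arrays. The fused version computes multiply, add, and ReLU per element in one pass. This is a conceptual illustration only. A compiler such as XLA or TorchInductor would emit a single GPU kernel for a chain like this, which mainly saves memory traffic, the usual bottleneck for elementwise operations.

```python
from typing import List


def unfused(a: List[float], b: List[float], c: List[float]) -> List[float]:
    # Three separate "kernels": each pass reads its inputs and writes a full
    # intermediate result back to memory before the next one starts.
    t1 = [x * y for x, y in zip(a, b)]
    t2 = [x + z for x, z in zip(t1, c)]
    return [max(x, 0.0) for x in t2]


def fused(a: List[float], b: List[float], c: List[float]) -> List[float]:
    # One "kernel": multiply, add, and ReLU per element, with no intermediates.
    return [max(x * y + z, 0.0) for x, y, z in zip(a, b, c)]


a, b, c = [1.0, -2.0, 3.0], [4.0, 5.0, -6.0], [0.5, 0.5, 0.5]
print(unfused(a, b, c), fused(a, b, c))
```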

Efficient Data Pipelines: Mastering tf.data, PyTorch DataLoader, and More

As mentioned in the pitfalls, your data pipeline is critical.

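- Prefetching: Have your data loader prepare the next batch of data on the CPU while the GPU is busy processing the current one (e.g., Dataset.prefetch(tf.data.AUTOTUNE) in tf.data, or the prefetch_factor option of a PyTorch DataLoader).

- Parallel Loading: Use multiple workers to load and preprocess data in parallel (worker processes via num_workers in a PyTorch DataLoader, or num_parallel_calls in tf.data's Dataset.map).

- Caching: If your dataset is small enough, cache it in memory after the first epoch to eliminate disk I/O for subsequent epochs (tf.data provides Dataset.cache() for this).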
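The prefetching pattern can be sketched in plain Python with a background thread and a bounded queue. The loader keeps up to `depth` batches ready while the consumer, standing in for the training step, works on the current one. The load_batches generator is a hypothetical placeholder for real I/O and decoding. Threads overlap I/O well, but CPU-heavy preprocessing in Python is limited by the GIL, which is one reason PyTorch's DataLoader uses worker processes instead.

```python
import queue
import threading
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def prefetch(source: Iterable[T], depth: int = 2) -> Iterator[T]:
    """Yield items from `source` while a background thread loads up to `depth` ahead."""
    buf: queue.Queue = queue.Queue(maxsize=depth)  # bounded: caps memory use

    def producer() -> None:
        try:
            for item in source:
                buf.put((False, item))  # blocks while the queue is full
        except Exception as exc:  # hand loader errors to the consumer
            buf.put((True, exc))
            return
        buf.put((True, None))  # end-of-stream marker

    # Daemon thread: if the consumer stops early, it won't block interpreter exit.
    threading.Thread(target=producer, daemon=True).start()
    while True:
        finished, payload = buf.get()
        if finished:
            if payload is not None:
                raise payload
            return
        yield payload


def load_batches(n: int) -> Iterator[List[int]]:
    # Hypothetical loader; imagine reading and decoding files here.
    for i in range(n):
        yield [i] * 4


for batch in prefetch(load_batches(3)):
    print(batch)  # the "training step" consumes the prefetched batch
```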

Hardware-Specific Optimizations: Leveraging Tensor Cores, XLA, and Custom Kernels

- Tensor Cores: Ensure you’re using mixed precision to take full advantage of these specialized cores on NVIDIA GPUs.

- XLA: If using TensorFlow or JAX, experiment with enabling XLA to see if it provides a speedup for your specific model.

- NVIDIA TensorRT: For inference, TensorRT is a powerful optimizer that takes a trained model and further tunes it for a specific NVIDIA GPU, often resulting in significant latency reductions.

By combining these advanced techniques, you can transform a standard training script into a highly optimized, performance-tuned machine.

🗣️ Real-World Anecdotes: Our Benchmarking Adventures at ChatBench.org™

Let me tell you a quick story. A while back, our team was working on a new image segmentation model for a client in the medical imaging space. We had a solid prototype built in PyTorch, and performance was… okay. Not great, but okay. The training was taking about 36 hours on a beefy NVIDIA A100 GPU.

The deadline was looming, and we needed to iterate faster. So, we decided to do a full-on benchmarking sprint. We set up identical models in TensorFlow and even a version in JAX with Flax. We standardized everything: the data pipeline, the optimizer, the batch size—the works.

The initial results were surprising. TensorFlow was about 10% faster than our PyTorch implementation. Not bad. But JAX? It was a monster. After the initial JIT compilation, it was nearly 40% faster per epoch! We were ecstatic.

But here’s the twist. When we looked at the time to convergence—how long it took to reach our target validation accuracy—the story changed. PyTorch, despite being slower per epoch, actually converged in fewer epochs. The final training time was almost identical to the JAX implementation.

What was going on? We dug into the profilers. It turned out that a subtle difference in the default weight initialization between the frameworks was giving the PyTorch model a slightly better starting point, leading to faster learning. After standardizing the initialization method, JAX regained its crown as the clear speed winner.

This experience taught us a valuable lesson that we preach in all our Developer Guides: benchmarking is a detective story. The headline numbers (like throughput) are just the first clue. You have to dig deeper into the metrics, question everything, and hunt for those subtle variables that can make all the difference.
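Default initializers are a common source of this kind of hidden difference. One framework-neutral way to standardize initialization is to generate the initial weights once with a fixed seed, save them, and load the identical numbers into every implementation. The sketch below shows this approach, assuming you control weight loading in each framework. Glorot (Xavier) uniform serves here as one example of an explicit, named initializer. The layer name dense_1/kernel and the JSON snapshot format are hypothetical. Real models would more likely save NumPy arrays.

```python
import json
import math
import random
from typing import List


def glorot_uniform(fan_in: int, fan_out: int, seed: int) -> List[List[float]]:
    """Glorot/Xavier uniform: U(-limit, limit), limit = sqrt(6 / (fan_in + fan_out)).

    Returns a fan_in x fan_out matrix (the kernel layout used by Keras and Flax).
    PyTorch's nn.Linear stores its weight as (out_features, in_features),
    so transpose before copying it into a PyTorch model.
    """
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    rng = random.Random(seed)  # private seeded generator for reproducibility
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)] for _ in range(fan_in)]


def transpose(m: List[List[float]]) -> List[List[float]]:
    return [list(row) for row in zip(*m)]


# Generate once and save (e.g. to a hypothetical init_weights.json) so every
# framework's script loads exactly the same starting point.
kernel = glorot_uniform(fan_in=4, fan_out=3, seed=0)
snapshot = json.dumps({"dense_1/kernel": kernel})
restored = json.loads(snapshot)["dense_1/kernel"]
torch_layout = transpose(restored)  # (out_features, in_features) for PyTorch
print(len(torch_layout), len(torch_layout[0]))
```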

⚙️ The MLOps Perspective: Integrating Benchmarking into Your Workflow and CI/CD

In the world of MLOps (Machine Learning Operations), performance isn’t a one-time check; it’s a continuous promise. Integrating benchmarking directly into your Continuous Integration/Continuous Deployment (CI/CD) pipeline is essential for maintaining model quality and efficiency in production.

Think about it: “Writing unit tests to answer the question ‘Does my software work?’ is standard, but it is not always common to answer performance-related questions, such as ‘Is my software fast?’” This is the gap that automated benchmarking fills.

In practice, that looks like this:

- Performance as a Unit Test: Every time a developer commits new code, a CI pipeline should trigger. Alongside regular code tests, this pipeline can run a small-scale benchmark on a standard model and dataset. This acts as a “performance smoke test.”

- Regression Detection: Did the latest code change cause a 10% drop in training throughput? An automated benchmark with a sensible threshold can flag it right away, keeping the performance regression from ever reaching production.

- Nightly Benchmarks: For more extensive tests, you can set up a “nightly build” that runs a full benchmark suite on a variety of models and hardware configurations. The results can be logged to a dashboard (using tools like TensorBoard or custom solutions) for the team to review each morning.

- Tools for Automation: Systems like MLBench are designed specifically for this. They are “low-maintenance, turnkey” solutions that can automatically gather metrics like runtime, power consumption, and memory usage and store them in a database for easy comparison over time.
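The heart of a "performance as a unit test" step is a comparison against a stored baseline. The sketch below covers a higher-is-better metric such as samples per second. The baseline and current numbers are hypothetical, as is the 5% tolerance. Shared CI runners are noisy, so set the tolerance above the run-to-run spread you measure with repeated trials. For lower-is-better metrics such as latency, flip the sign of the change.

```python
from typing import Tuple


def check_regression(baseline: float, current: float, tolerance: float = 0.05) -> Tuple[bool, float]:
    """Compare a higher-is-better metric (e.g. samples/sec) against a baseline.

    Returns (passed, relative_change). The check fails when the metric drops
    by more than `tolerance` (a fraction, so 0.05 means 5%).
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    change = (current - baseline) / baseline
    return change >= -tolerance, change


# Hypothetical numbers: the baseline would come from the main branch and the
# current value from the commit under test. In CI, a failed check fails the job.
passed, change = check_regression(baseline=1000.0, current=880.0)
print(f"throughput change {change:+.1%}: {'OK' if passed else 'REGRESSION'}")
```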

By making performance benchmarking an automated, integral part of your development workflow, you shift from being reactive (fixing performance problems after they appear) to being proactive (preventing them from happening in the first place). This is a core principle of robust and scalable AI Business Applications.

🔮 Future Trends in Deep Learning Performance: What’s Next on the Horizon?

The race for performance is relentless. What’s state-of-the-art today will be standard tomorrow. Here’s a glimpse of the future our team at ChatBench.org™ is keeping a close eye on.

Hardware Innovations: Next-Gen GPUs, AI Accelerators, and Neuromorphic Computing

The hardware landscape is exploding with innovation.

- Next-Gen GPUs: Companies like NVIDIA, AMD, and Intel are in a constant arms race, promising more computational power, higher memory bandwidth, and greater energy efficiency with each generation.

- Specialized AI Accelerators: We’re seeing a rise in ASICs and FPGAs designed specifically for AI workloads. Companies like Cerebras, Graphcore, and even tech giants like Google (with TPUs) and Meta are building custom chips that can outperform general-purpose GPUs on specific tasks. The future is specialized.

- Neuromorphic Computing: This is a longer-term bet. These are chips designed to mimic the structure of the human brain. They promise incredible power efficiency for certain types of tasks but are still largely in the research phase.

Framework Evolution and Interoperability: Towards a Unified Ecosystem

The lines between frameworks are blurring.

- Interoperability: Standards like ONNX are becoming increasingly important, allowing for a “train anywhere, deploy anywhere” philosophy. The rise of Keras 3 as a multi-backend API is another huge step in this direction.

- Meta-Frameworks: What if you could have a framework for frameworks? That’s the idea behind projects like Deep500, the subject of our featured video. As described in that presentation, Deep500 aims to be a “deep learning meta-framework” that provides standardized interfaces for operators, networks, and training procedures. This modular approach allows researchers to mix and match components from different ecosystems and benchmark them fairly, fostering reproducibility and innovation.

Automated Benchmarking Tools: The Rise of Smart Performance Analysis

Benchmarking today can still be a manual and time-consuming process. The future is automation.

- Smarter Profilers: Expect to see tools that not only report metrics but also provide actionable insights. Imagine a profiler that says, “Your GPU utilization is low. The bottleneck is likely in your data loading pipeline. Try increasing the number of data loader workers.”

- AI for Benchmarking: We may soon use AI to benchmark AI. Imagine systems that can automatically search for optimal hyperparameters, model architectures, and even hardware configurations for a given task and budget.

The future of deep learning performance is not just about raw speed, but about efficiency, flexibility, and intelligence. It’s a future we’re excited to benchmark.

✅ Conclusion: Your Path to Peak Deep Learning Performance

After our deep dive into the world of benchmarking deep learning frameworks, we hope you’re now equipped with the knowledge and tools to make data-driven decisions that turbocharge your AI projects. Whether you’re a researcher chasing the bleeding edge or an enterprise engineer deploying robust models at scale, benchmarking is your secret weapon.

Here’s the bottom line:

- TensorFlow shines in production environments with its mature ecosystem and scalability.

- PyTorch rules the research domain with its intuitive, dynamic graph and rapid prototyping capabilities.

- JAX is the speed demon for advanced users seeking maximum performance and flexibility through JIT compilation and functional programming.

- Other frameworks and tools like Keras and ONNX add valuable interoperability and ease of use.

Remember our story about the medical imaging model? It perfectly illustrates that raw speed isn’t everything—convergence speed, initialization, and subtle implementation details matter just as much. Benchmarking is a detective story that uncovers these hidden truths.

By carefully setting up your benchmarking environment, selecting relevant metrics, and avoiding common pitfalls, you’ll unlock insights that save time, money, and headaches. And by integrating benchmarking into your MLOps pipelines, you ensure your models stay fast and efficient as your codebase evolves.

The future promises even more exciting innovations in hardware, frameworks, and automated benchmarking tools. Staying ahead means embracing benchmarking as a continuous, integral part of your AI workflow.

So, what are you waiting for? Fire up your GPUs, sharpen your profiling tools, and let the benchmarking games begin! 🚀

🔗 Recommended Links: Dive Deeper!


Explore Deep Learning Frameworks:

- TensorFlow: TensorFlow Official Website

- PyTorch: PyTorch Official Website

- JAX: JAX GitHub Repository

- Keras: Keras Official Website

- ONNX Runtime: ONNX Runtime Official Website

Books for Deep Learning and Performance Optimization:

- Deep Learning by Ian Goodfellow, Yoshua Bengio, and Aaron Courville

- Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow by Aurélien Géron

- Efficient Processing of Deep Neural Networks by Vivienne Sze et al.

❓ FAQ: Your Burning Questions Answered

What are the top deep learning frameworks for high-performance AI applications?

The top contenders are TensorFlow, PyTorch, and JAX. TensorFlow excels in production environments with its scalable architecture and rich ecosystem. PyTorch is favored in research for its dynamic computation graphs and ease of debugging. JAX offers cutting-edge speed with JIT compilation and functional programming paradigms, ideal for advanced users focused on performance. Other frameworks like Keras provide user-friendly APIs, while ONNX facilitates interoperability across frameworks.

How does benchmarking deep learning frameworks improve AI model efficiency?

Benchmarking reveals the real-world performance characteristics of frameworks on your specific models and hardware. It helps identify bottlenecks, optimize resource utilization, and choose the best framework-hardware combination for your use case. By measuring metrics like training throughput, inference latency, memory usage, and power consumption, benchmarking guides you to faster training times, lower costs, and better scalability. It also supports regression testing to maintain performance over time.

Which metrics are most important when benchmarking deep learning frameworks?

Key metrics include:

- Training Throughput: Samples processed per second.

- Inference Latency and Throughput: Speed and responsiveness during prediction.

- Memory Footprint: GPU and CPU RAM usage.

- GPU Utilization and Power Consumption: Efficiency of hardware usage.

- Model Convergence Speed: How quickly the model reaches target accuracy.

- Scalability: Performance gains from multi-GPU or distributed setups.

A holistic view combining these metrics provides the most actionable insights.
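Throughput and convergence speed can point in opposite directions, so it helps to compute both from the same run log. The sketch below assumes a hypothetical per-epoch log format (the EpochRecord class) and reports average throughput plus time-to-accuracy, the wall-clock time until validation accuracy first reaches a target. Time-to-accuracy is also the headline metric of MLPerf Training. The two runs use made-up numbers chosen to show the effect.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EpochRecord:
    seconds: float       # wall-clock time for the epoch
    samples: int         # training samples processed in the epoch
    val_accuracy: float  # validation accuracy measured after the epoch


def throughput(records: List[EpochRecord]) -> float:
    """Average training throughput in samples per second."""
    return sum(r.samples for r in records) / sum(r.seconds for r in records)


def time_to_accuracy(records: List[EpochRecord], target: float) -> Optional[float]:
    """Wall-clock seconds until validation accuracy first reaches `target`."""
    elapsed = 0.0
    for r in records:
        elapsed += r.seconds
        if r.val_accuracy >= target:
            return elapsed
    return None  # target never reached


# Hypothetical runs: A has higher throughput, but B needs fewer epochs.
run_a = [EpochRecord(60.0, 50_000, acc) for acc in (0.80, 0.85, 0.88, 0.91)]
run_b = [EpochRecord(100.0, 50_000, acc) for acc in (0.86, 0.91)]
for name, run in (("A", run_a), ("B", run_b)):
    print(name, round(throughput(run)), "samples/s,",
          time_to_accuracy(run, target=0.90), "s to 90% accuracy")
```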

How can benchmarking guide the selection of AI tools for competitive advantage?

Benchmarking empowers you to make data-driven decisions rather than relying on hype or anecdotal evidence. By understanding which frameworks and hardware deliver the best performance for your specific workloads, you can reduce training times, accelerate model deployment, and cut operational costs. This agility translates into faster innovation cycles and better products, giving you a clear edge in the competitive AI landscape.

What are common pitfalls to avoid when benchmarking deep learning frameworks?

Beware of:

- Data loading bottlenecks starving your GPU.

- Inconsistent hyperparameters across frameworks.

- Ignoring framework overheads that affect small batch performance.

- Benchmarking on inadequate hardware that skews results.

- Misinterpreting results without multiple runs and controlled variables.

Avoiding these ensures your benchmarks are reliable and actionable.

How can MLOps practices integrate benchmarking for continuous performance monitoring?

Incorporate benchmarking into your CI/CD pipelines as automated performance tests. Run small-scale benchmarks on every code commit to detect regressions early. Use nightly builds for comprehensive performance suites. Tools like MLBench automate metric collection and reporting. This proactive approach maintains model efficiency and reliability throughout development and deployment cycles.

📚 Reference Links: Our Sources and Further Reading

- GTDLBench GitHub Repository: https://github.com/git-disl/GTDLBench

- Microway Deep Learning Benchmarks: https://www.microway.com/hpc-tech-tips/deep-learning-benchmarks-nvidia-tesla-p100-16gb-pcie-tesla-k80-tesla-m40-gpus/

- Collabora MLBench: https://www.collabora.com/news-and-blog/news-and-events/benchmarking-machine-learning-frameworks.html

- TensorFlow Official Site: https://www.tensorflow.org/

- PyTorch Official Site: https://pytorch.org/

- JAX GitHub Repository: https://github.com/google/jax

- NVIDIA CUDA Toolkit: https://developer.nvidia.com/cuda-toolkit

- NVIDIA cuDNN Library: https://developer.nvidia.com/cudnn

- ONNX Official Website: https://onnx.ai/

- MLPerf Benchmark Suite: https://mlcommons.org/en/benchmarks/

Ready to benchmark your way to AI excellence? Let ChatBench.org™ be your guide! 🚀