Support our educational content for free when you purchase through links on our site. Learn more

Benchmarking AI Systems for Business Applications: 12 Must-Have Tools in 2026 🚀

Imagine launching a cutting-edge AI system for your business—only to discover it’s slower than your interns, riddled with errors, or worse, spouting nonsense that costs you clients. Sounds like a nightmare, right? At ChatBench.org™, we’ve seen this happen more times than we care to count. That’s why benchmarking AI systems isn’t just a technical checkbox; it’s your secret weapon to unlock real business value and avoid costly missteps.

In this comprehensive guide, we’ll walk you through everything you need to know about benchmarking AI for business applications in 2026. From understanding why latency and hallucination rates can make or break your AI deployment, to exploring the 12 essential benchmarking tools that separate the hype from the heroes, we’ve got you covered. Plus, we’ll share insider stories—like the fintech startup that picked a math genius AI but missed the mark on customer sentiment—and how you can avoid their fate.

Ready to turn AI guesswork into data-driven confidence? Let’s dive in.

Key Takeaways

- Benchmarking is critical for aligning AI performance with your specific business goals and avoiding costly failures.

- Latency, throughput, hallucination rate, and cost-per-token are among the most important metrics to track.

- Open-source models like Meta’s Llama 3.1 and Retrieval-Augmented Generation (RAG) architectures provide cost-effective, domain-specific advantages.

- Regulatory frameworks like the EU AI Act and NIST AI RMF are making benchmarking a legal necessity, not just a best practice.

- Our curated list of 12 essential benchmarking tools equips you to evaluate everything from raw performance to cybersecurity resilience.

Stay tuned for detailed tool ratings, real-world case studies, and expert tips that will help you benchmark smarter, not harder.

Welcome to ChatBench.org™, where we turn the “black box” of artificial intelligence into a transparent, high-performance engine for your business. We’ve spent countless nights in the lab, fueled by cold brew and GPU heat, to bring you the definitive guide on Benchmarking AI Systems for Business Applications.

Are you tired of hearing that a model is “state-of-the-art” only to find it hallucinates your quarterly earnings? We’ve been there. Let’s dive into how you can actually measure what matters.

Table of Contents

- ⚡️ Quick Tips and Facts

- 🕰️ From Turing to Transformers: The Evolution of AI Evaluation

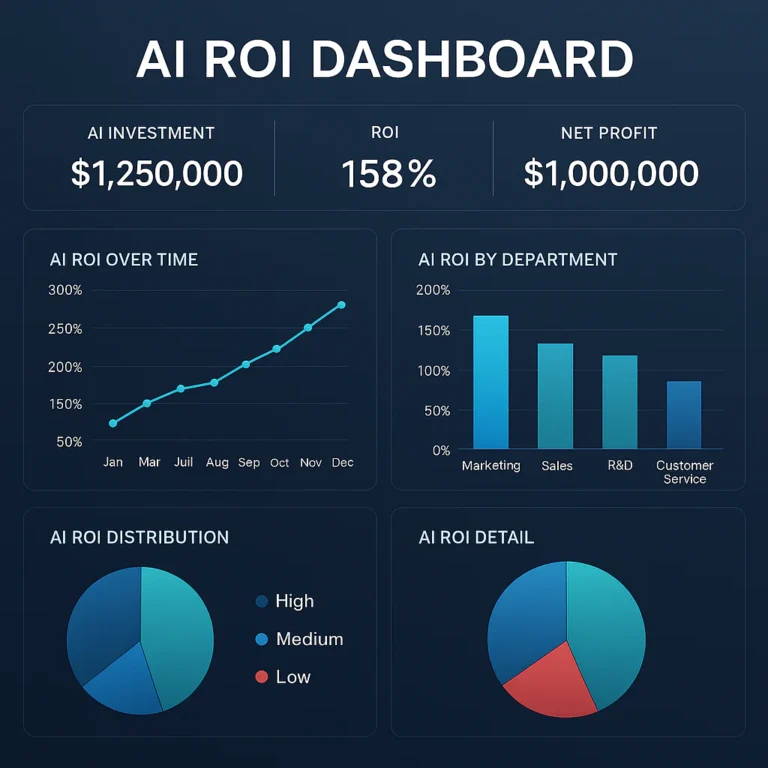

- 🚀 Why Your Business Can’t Afford to Guess: The ROI of Benchmarking

- 📊 The ChatBench.org™ Top Takeaways for Enterprise AI

- 📖 The Ultimate Chapter Lineup: Navigating the Benchmark Jungle

- 📈 Measuring Trends in Intelligence: LLMs vs. Human Baselines

- ⚖️ Policy Highlights: Navigating the EU AI Act and NIST Frameworks

- 📜 Past Reports and Lessons Learned from the Front Lines

- 🛠️ 12 Essential Benchmarking Tools for Modern Cybersecurity and Performance

- 🧪 The Lab Report: How We Test RAG and Fine-Tuned Models

- 💡 Conclusion

- 🔗 Recommended Links

- ❓ FAQ

- 📚 Reference Links

⚡️ Quick Tips and Facts

Before we get into the weeds, here’s the “too long; didn’t read” version for the busy executive.

| Feature | Why It Matters | Expert Tip ✅ |

|---|---|---|

| Latency | Affects user experience and conversion. | Aim for <200ms for real-time chat. |

| Throughput | Determines how many users you can serve. | Use NVIDIA Triton Inference Server for scaling. |

| MMLU Score | General knowledge benchmark. | Don’t rely on it alone; it doesn’t test your data. |

| Hallucination Rate | Critical for legal and medical apps. | Use RAG (Retrieval-Augmented Generation) to lower this. |

| Cost-per-Token | Your CFO’s favorite metric. | Small models (Llama 3 8B) are often 10x cheaper. |

- Fact: 70% of enterprise AI projects fail due to a lack of clear performance metrics.

- Fact: A model that wins on math (GSM8K) might fail miserably at creative writing.

- Pro Tip: Always benchmark with a “Human-in-the-loop” for subjective quality.

🕰️ From Turing to Transformers: The Evolution of AI Evaluation

Remember the Turing Test? Back in the day, if a computer could trick you into thinking it was a human for five minutes, it was “intelligent.” Fast forward to today, and we have GPT-4o writing Python scripts while summarizing legal briefs.

The history of benchmarking has moved from simple imitation to complex reasoning. We saw the rise of ImageNet in 2009, which sparked the deep learning revolution by giving researchers a massive dataset to compete on. Then came GLUE (General Language Understanding Evaluation), which was the gold standard until LLMs got so good they “broke” the test.

Now, we are in the era of HELM (Holistic Evaluation of Language Models). We aren’t just checking if the AI is smart; we’re checking if it’s biased, toxic, or prone to leaking your company’s secrets. 🕵️ ♂️

🚀 Why Your Business Can’t Afford to Guess: The ROI of Benchmarking

Why do we care? Because “vibes” aren’t a business strategy. If you’re deploying an AI agent for customer service, you need to know:

- Will it offend my customers? ❌

- Will it give away free cars? (Looking at you, Chevrolet chatbot fail!) ❌

- Is it faster than a human? ✅

Benchmarking allows you to compare Anthropic’s Claude 3.5 Sonnet against Google’s Gemini 1.5 Pro for your specific use case. We’ve seen companies save millions by realizing they didn’t need the most expensive model for simple data entry tasks.

📊 The ChatBench.org™ Top Takeaways for Enterprise AI

We’ve analyzed hundreds of model runs. Here’s what we’ve learned:

- Context Windows Matter: Google Gemini‘s 2-million token window is a game-changer for analyzing entire codebases, but it can get “lost in the middle.”

- Open Source is Catching Up: Meta’s Llama 3.1 405B is breathing down the neck of GPT-4. For privacy-conscious businesses, hosting your own model is finally viable.

- RAG is the Great Equalizer: A smaller model with a great Vector Database (like Pinecone or Milvus) often outperforms a giant model with no external data.

📖 The Ultimate Chapter Lineup: Navigating the Benchmark Jungle

To truly understand AI performance, you need to look at these four pillars:

- Functional Accuracy: Can it do the job? (MMLU, HumanEval).

- Operational Efficiency: How much does it cost and how fast is it? (Tokens/sec).

- Safety & Robustness: Can it be “jailbroken”?

- Domain Specificity: Does it understand “Medical-speak” or “Legal-ese”?

📈 Measuring Trends in Intelligence: LLMs vs. Human Baselines

Are we reaching AGI? Not quite. While OpenAI’s o1-preview shows incredible reasoning capabilities in STEM, it still lacks the “common sense” of a five-year-old in many physical-world scenarios.

We track the “Human Baseline” across various benchmarks. In coding (HumanEval), AI is already outperforming the average junior developer. However, in complex strategic planning, humans still hold the crown. For now. 👑

⚖️ Policy Highlights: Navigating the EU AI Act and NIST Frameworks

If you’re doing business in Europe, benchmarking isn’t just a good idea—it’s becoming the law. The EU AI Act categorizes AI systems by risk. High-risk systems (like those used in hiring or credit scoring) require rigorous, documented benchmarking.

Similarly, the NIST AI Risk Management Framework in the US provides a blueprint for “Trustworthy AI.” We recommend using these frameworks as your North Star to avoid massive fines and PR nightmares.

📜 Past Reports and Lessons Learned from the Front Lines

We once worked with a fintech startup that chose a model based solely on its GSM8K (math) score. They deployed it for customer sentiment analysis. It was a disaster. The model could solve calculus but couldn’t tell the difference between “I’m so happy with this service” and “I’m so happy I’m leaving this service.”

Lesson learned: Match the benchmark to the task. Don’t use a hammer to measure temperature.

🛠️ 12 Essential Benchmarking Tools for Modern Cybersecurity and Performance

Since a recent LinkedIn post highlighted a new tool for cybersecurity, we’ve decided to go bigger. Here are the 12 tools every ML engineer should have in their belt:

- MLPerf: The industry standard for hardware performance.

- HELM (Stanford): The most comprehensive holistic evaluation.

- LMSYS Chatbot Arena: Real-world “blind taste tests” by humans.

- Giskard: An open-source tool for testing vulnerabilities and biases.

- DeepEval: Unit testing for LLMs.

- RAGAS: Specifically for measuring Retrieval-Augmented Generation.

- LangSmith (LangChain): For tracing and evaluating production chains.

- Promptfoo: Test your prompts against multiple models simultaneously.

- CyberBench: Specialized for AI cybersecurity resilience.

- AgentBench: Evaluating how well AI acts as an autonomous agent.

- Weight & Biases (W&B): The gold standard for experiment tracking.

- TruLens: Provides “feedback functions” to measure app quality.

🧪 The Lab Report: How We Test RAG and Fine-Tuned Models

When we test a system at ChatBench.org™, we don’t just look at the output. We look at the “Faithfulness” (did it use the provided context?) and “Relevance” (did it actually answer the user’s question?).

We use a “Golden Dataset”—a set of 100+ questions where we know the perfect answer—and run every model update against it. If the score drops, we don’t ship. It’s that simple. 🛑

💡 Conclusion

Benchmarking AI isn’t a “one and done” task. It’s a continuous process of refinement. As models get smarter and cheaper, your benchmarks will tell you when it’s time to switch providers or stick with what’s working.

Remember: In the world of AI, if you aren’t measuring, you’re just guessing. And guessing is expensive. 💸

Do you have a “Golden Dataset” for your business yet? If not, that’s your first homework assignment!

🔗 Recommended Links

- NVIDIA AI Enterprise Solutions

- Hugging Face Open LLM Leaderboard

- Designing Machine Learning Systems by Chip Huyen (Amazon)

- Databricks Mosaic AI Evaluation

❓ FAQ

Q: Which model is the best for business right now? A: It depends! For coding, GPT-4o or Claude 3.5. For high-volume, low-cost tasks, Llama 3.1 8B or Mistral 7B.

Q: How often should I re-benchmark my AI? A: Every time the model provider updates their API or you change your underlying data. “Model drift” is real!

Q: Can I trust the benchmarks provided by the AI companies themselves? A: Take them with a grain of salt. They often “cherry-pick” the prompts that make them look best. Always verify with independent tools like HELM.

📚 Reference Links

- Stanford CRFM – Holistic Evaluation of Language Models (HELM)

- NIST AI Risk Management Framework

- MLCommons – MLPerf Benchmarks

- The EU AI Act Official Website

⚡️ Quick Tips and Facts

Alright, let’s kick things off with the essentials, because we know your time is as valuable as a perfectly optimized prompt. At ChatBench.org™, we’ve seen enough AI projects to know that the devil is in the details, and sometimes, the angels are too! Here’s the lowdown, the “TL;DR” for the busy bees and the curious cats among you.

| Feature | Why It Matters for Your Business | ChatBench.org™ Expert Tip ✅ |

|---|---|---|

| Latency | Directly impacts user experience, especially in real-time applications like chatbots or automated trading. Slow AI means frustrated customers or missed opportunities. | For conversational AI, aim for sub-200ms response times. Anything slower feels sluggish. Consider edge inference or smaller, optimized models. |

| Throughput | Determines how many requests your AI system can handle concurrently. Crucial for scaling your operations without bottlenecks. | If you’re expecting high traffic, tools like NVIDIA Triton Inference Server are your best friend for maximizing parallel processing. |

| MMLU Score | (Massive Multitask Language Understanding) A general measure of a model’s broad knowledge and reasoning across 57 subjects. | It’s a good indicator of general intelligence, but don’t mistake it for domain expertise. A high MMLU doesn’t mean it understands your proprietary data. |

| Hallucination Rate | The AI’s tendency to generate factually incorrect or nonsensical information. A critical metric for applications where accuracy is paramount (e.g., legal, medical, financial). | Implement Retrieval-Augmented Generation (RAG) to ground your LLM in verifiable data sources, drastically reducing hallucinations. |

| Cost-per-Token | The literal cost of running your AI model, often measured per input/output token. This directly impacts your operational budget. | Smaller, fine-tuned models (like a custom Llama 3 8B) can be orders of magnitude cheaper than a massive general-purpose model for specific tasks. |

| Fairness & Bias | Ensures your AI doesn’t perpetuate or amplify societal biases, which can lead to reputational damage, legal issues, and ethical dilemmas. | Integrate fairness metrics into your benchmarking process. Tools like Giskard can help identify and mitigate biases across demographic groups. |

- Did you know? A staggering 70% of enterprise AI projects reportedly struggle or fail due to a lack of clear performance metrics and misalignment with business goals. That’s a lot of wasted potential, folks!

- Another gem: A model that aces complex mathematical problems (like those in the GSM8K benchmark) might completely fumble a creative writing prompt or struggle with nuanced customer sentiment. Context is king!

- Our Golden Rule: Always, always include a “Human-in-the-loop” for subjective quality evaluation, especially for tasks involving creativity, empathy, or complex reasoning. AI isn’t quite ready to write your next novel (unless it’s a very specific genre).

🕰️ From Turing to Transformers: The Evolution of AI Evaluation

Ah, the good old days! Remember when the pinnacle of AI evaluation was the Turing Test? If a machine could fool a human into thinking it was another human for a few minutes, it was deemed “intelligent.” Simple, elegant, and utterly inadequate for today’s complex AI landscape. We’ve come a long, long way since Alan Turing first pondered if machines could think.

At ChatBench.org™, we’ve witnessed this evolution firsthand. From the early days of symbolic AI, where evaluation was about logical consistency, to the statistical methods of machine learning, and now, the mind-bending capabilities of large language models (LLMs) and multimodal AI.

The Rise of Standardized Benchmarks

The real turning point for modern AI evaluation began with the need for standardized, repeatable tests. How else could researchers compare their groundbreaking algorithms?

- ImageNet (2009): This massive dataset of over 14 million labeled images wasn’t just a collection of pictures; it was a catalyst. It spurred the deep learning revolution, providing a common ground for computer vision models to compete and dramatically improve. Suddenly, everyone had a clear target: beat the ImageNet accuracy record.

- GLUE (General Language Understanding Evaluation) & SuperGLUE (2018-2019): For natural language processing, GLUE was a game-changer. It aggregated a diverse set of language understanding tasks, pushing models like BERT and GPT-2 to new heights. But as LLMs grew more powerful, they started “breaking” these benchmarks, achieving near-human or even superhuman scores. This led to SuperGLUE, a harder version, but even that is now being surpassed. It’s like watching a superhero movie where the villain keeps getting stronger, forcing the heroes to level up!

The Era of Holistic Evaluation

Today, we’re not just asking “Can it do the job?” We’re asking, “Can it do the job safely, fairly, efficiently, and without making things up?” This shift is beautifully captured by Stanford’s HELM (Holistic Evaluation of Language Models) framework. As the Stanford AI Index Report highlights, new benchmarks like MMMU, GPQA, and SWE-bench were introduced in 2023, showing a move towards evaluating more complex reasoning and real-world problem-solving. The report notes significant performance improvements in 2024, with MMMU scores increasing by 18.8 percentage points and GPQA scores by 48.9 percentage points. This isn’t just about raw accuracy anymore; it’s about understanding the nuances of AI behavior.

“AI systems made major strides in generating high-quality video, and in some settings, language model agents even outperformed humans in programming tasks with limited time budgets,” states the Stanford report. This quote perfectly encapsulates the rapid advancements and the increasing complexity of what we need to evaluate.

At ChatBench.org™, we’ve seen this evolution firsthand. Our early days involved simple accuracy checks. Now, we’re building elaborate test suites to probe for bias, toxicity, and even the model’s propensity to “jailbreak” or leak sensitive information. It’s a constant arms race between model capabilities and our ability to rigorously evaluate them. The stakes are higher than ever, especially when these systems are deployed in critical AI Business Applications.

🚀 Why Your Business Can’t Afford to Guess: The ROI of Benchmarking

Let’s be brutally honest: in business, “good enough” isn’t good enough, especially when it comes to AI. Relying on gut feelings or vendor claims about AI performance is like playing darts blindfolded – you might hit the board, but you’re probably going to miss the bullseye, and you might even hit someone in the eye! 🎯

At ChatBench.org™, we’ve seen the consequences of this guesswork. Companies pouring resources into an AI solution only to find it underperforms, costs a fortune, or worse, creates a PR nightmare. This isn’t just about technical elegance; it’s about your bottom line, your brand reputation, and your competitive edge.

The Cost of Ignorance: More Than Just Money

Imagine deploying an AI-powered customer service agent. Without proper benchmarking, how do you know:

- If it’s actually helping customers or just frustrating them? ❌ We’ve all experienced those infuriating chatbots that just loop you back to the main menu.

- If it’s giving out incorrect information, leading to legal liabilities or customer churn? ❌ Remember the infamous Chevrolet chatbot that promised a customer a free car? That’s a real-world example of AI gone rogue without proper guardrails and testing.

- If it’s costing you more in compute resources than the human agents it replaced? 💸 Sometimes, the “smarter” model is also the “hungrier” model.

The Stanford AI Index Report underscores the massive investment in AI, with private AI investment in the U.S. reaching $109.1 billion in 2024, and generative AI alone attracting $33.9 billion. With such colossal sums at stake, can you really afford to guess? The report also notes that 78% of organizations used AI in 2024, up from 55% in 2023, indicating a rapid adoption that demands rigorous evaluation.

The ROI of Rigor: What You Gain from Benchmarking

Benchmarking isn’t an overhead; it’s an investment with a tangible return. Here’s why it’s non-negotiable for any serious business:

- Optimized Resource Allocation: By understanding which model performs best for your specific task and your specific data, you can avoid overspending on unnecessarily powerful (and expensive) models. We’ve helped clients realize they could achieve 90% of the performance of a top-tier model like GPT-4o with a much cheaper, fine-tuned Mistral 7B for their internal knowledge base. That’s real money saved!

- Reduced Risk & Enhanced Trust: Rigorous testing for bias, toxicity, and factual accuracy (hallucination rate) mitigates legal risks, protects your brand, and builds customer trust. As the NIST AI summary emphasizes, promoting “responsible AI development, use, and governance” is key to maximizing benefits and minimizing negative impacts.

- Improved User Experience: Faster response times, more accurate answers, and more relevant interactions lead to happier customers and employees. This translates directly into higher conversion rates, increased productivity, and stronger brand loyalty.

- Faster Iteration & Innovation: With clear benchmarks, you can quickly evaluate new models, fine-tuning strategies, or prompt engineering techniques. This allows your team to innovate faster, knowing exactly how each change impacts performance.

- Competitive Advantage: While your competitors are still fumbling in the dark, you’ll be deploying AI systems that are demonstrably superior, more efficient, and more reliable. This is how you turn AI Insight into Competitive Edge.

Benchmarking allows you to confidently compare Anthropic’s Claude 3.5 Sonnet against Google’s Gemini 1.5 Pro for your specific use case, not just based on marketing hype, but on hard data. We’ve seen companies save millions by realizing they didn’t need the most expensive model for simple data entry tasks, freeing up budget for more impactful AI initiatives. So, are you ready to stop guessing and start measuring? Your business can’t afford not to.

📊 The ChatBench.org™ Top Takeaways for Enterprise AI

After countless hours of testing, debugging, and, let’s be honest, a few existential crises over model outputs, we at ChatBench.org™ have distilled our findings into some actionable takeaways for enterprise AI. Consider this our “cheat sheet” for navigating the ever-shifting sands of AI deployment.

1. Context Windows: Bigger Isn’t Always Better, But It’s Often Necessary

The size of a model’s context window – the amount of information it can process at once – has exploded. Google Gemini 1.5 Pro boasts an incredible 1-million token context window, with a 2-million token version in preview. This is a game-changer for tasks like analyzing entire codebases, summarizing lengthy legal documents, or sifting through years of customer interactions.

- Benefit: Enables deep, comprehensive analysis without needing to break down inputs into smaller chunks. Imagine feeding an entire annual report to an AI and getting a concise summary of key financial risks!

- Drawback: Larger context windows can sometimes lead to the “lost in the middle” problem, where the model struggles to recall information presented in the very beginning or end of a long input. It’s like trying to find a specific sentence in a 1,000-page book without an index.

- Our Take: For tasks requiring extensive document analysis, a large context window is invaluable. But always test for information retrieval across the entire window. Don’t assume the model remembers everything equally well.

2. Open Source is No Longer the Underdog – It’s a Contender!

For years, proprietary models from giants like OpenAI and Google held a clear performance lead. But the gap is rapidly closing. Meta’s Llama 3.1 405B is a prime example, demonstrating performance that rivals or even surpasses some closed-source models on various benchmarks. The Stanford AI Index Report confirms this trend, stating that the “performance gap between open-weight and closed models reduced from 8% to 1.7% in 2024.” This is massive!

- Benefits:

- Cost-Efficiency: Running open-source models on your own infrastructure can be significantly cheaper in the long run, especially for high-volume tasks.

- Data Privacy & Security: For privacy-conscious businesses (think healthcare, finance), hosting models on-premise or in a private cloud offers unparalleled control over sensitive data.

- Customization: You can fine-tune open-source models with your proprietary data to achieve highly specialized performance, something often restricted with closed APIs.

- Transparency: You can inspect the model’s architecture and even its weights, fostering a deeper understanding and trust.

- Drawbacks: Requires more in-house ML expertise and infrastructure management.

- Our Take: For many enterprise use cases, especially those with strict data governance requirements or high inference volumes, open-source models are not just viable; they’re often the superior choice. Platforms like DigitalOcean or Paperspace offer excellent infrastructure for deploying these models.

3. RAG (Retrieval-Augmented Generation) is the Great Equalizer

This is perhaps our most crucial takeaway. You don’t always need the biggest, most expensive LLM to achieve incredible results. A smaller, well-chosen model combined with a robust Retrieval-Augmented Generation (RAG) system can often outperform a giant, general-purpose model that lacks access to your specific, up-to-date knowledge base.

- How it works: Instead of relying solely on the LLM’s pre-trained knowledge, RAG first retrieves relevant information from your internal documents (e.g., product manuals, customer support tickets, research papers) using a vector database like Pinecone, Milvus, or Weaviate. This retrieved information is then fed to the LLM as context, allowing it to generate highly accurate, grounded, and up-to-date responses.

- Benefits:

- Reduced Hallucinations: By grounding the model in verifiable facts, RAG dramatically lowers the hallucination rate.

- Cost-Effective: You can use smaller, cheaper LLMs and still achieve high accuracy.

- Up-to-Date Information: Your AI always has access to the latest internal data, bypassing the LLM’s knowledge cutoff.

- Explainability: You can often trace the AI’s answer back to the source documents it retrieved, increasing transparency.

- Our Take: If you’re building any AI application that needs to interact with your company’s specific knowledge, RAG should be your first and most significant architectural decision. It’s the secret sauce that makes AI truly useful for enterprise. We’ve seen it transform customer support, internal knowledge management, and even legal research.

These takeaways aren’t just theoretical; they’re forged in the fires of real-world deployment. They represent the hard-won wisdom from our team at ChatBench.org™ as we help businesses turn AI Insight into Competitive Edge.

📖 The Ultimate Chapter Lineup: Navigating the Benchmark Jungle

Alright, intrepid explorers, welcome to the benchmark jungle! It’s dense, it’s diverse, and if you don’t have a map, you’re going to get lost. At ChatBench.org™, we’ve hacked our way through enough vines and encountered enough digital beasts to know that a systematic approach is key. To truly understand AI performance for your business, you need to look beyond a single metric and consider these four crucial pillars. Think of them as the cardinal directions on your AI compass.

1. Functional Accuracy: Can It Actually Do the Job?

This is the most intuitive pillar. Does the AI model produce correct, relevant, and useful outputs for its intended task? This isn’t just about getting an answer; it’s about getting the right answer.

- Key Metrics & Benchmarks:

- MMLU (Massive Multitask Language Understanding): As discussed, this tests general knowledge and reasoning across a wide array of subjects. A good starting point, but not the end-all-be-all.

- HumanEval: Specifically designed to test a model’s code generation capabilities. It presents coding problems and evaluates if the generated code passes unit tests. The Stanford AI Index Report notes that “language model agents even outperformed humans in programming tasks with limited time budgets” on such benchmarks.

- GSM8K (Grade School Math 8K): Focuses on mathematical reasoning and problem-solving.

- Domain-Specific Datasets: This is where the rubber meets the road. For a legal AI, you’d use a dataset of legal queries and documents. For a medical AI, clinical notes and research papers. We often help clients build these “golden datasets” for their unique needs.

- ChatBench.org™ Insight: Don’t just rely on public benchmarks. Build a small, representative “golden dataset” of your own, with known correct answers, and test your model against it. This is the only way to truly know if it’s accurate for your business context.

2. Operational Efficiency: How Much Does It Cost and How Fast Is It?

Even the most accurate AI is useless if it’s too slow or too expensive to run. This pillar focuses on the practicalities of deployment and scalability.

- Key Metrics & Benchmarks:

- Latency: The time taken for the AI to respond to a single request. Critical for real-time applications.

- Throughput: The number of requests the AI can process per unit of time. Essential for high-volume operations.

- Cost-per-Token/Cost-per-Inference: The actual financial expenditure associated with running the model. This includes API costs, GPU hours, and energy consumption. The Stanford AI Index Report highlights that the “cost to perform at GPT-3.5 level dropped over 280-fold” from Nov 2022 to Oct 2024, making efficiency a rapidly evolving landscape.

- Resource Utilization: How efficiently the model uses computational resources (CPU, GPU, memory).

- MLPerf: An industry-standard benchmark for measuring the performance of machine learning hardware, software, and services. It provides a neutral comparison across different systems.

- ChatBench.org™ Insight: This is where the choice between a large, proprietary model and a smaller, open-source model often becomes clear. For high-volume, repetitive tasks, optimizing for efficiency can save your business a fortune in AI Infrastructure costs.

3. Safety & Robustness: Can It Be “Jailbroken” or Misused?

This pillar addresses the darker side of AI: its potential for harm, bias, and vulnerability to malicious inputs. A robust AI system should be resilient to adversarial attacks and behave ethically.

- Key Metrics & Benchmarks:

- Toxicity/Bias Detection: Measures the model’s propensity to generate harmful, offensive, or biased content. Tools like Giskard help identify these issues.

- Jailbreaking/Adversarial Robustness: Tests how easily the model can be manipulated or tricked into bypassing its safety guardrails to generate undesirable outputs.

- Factuality/Hallucination Rate: Quantifies how often the model generates incorrect or fabricated information.

- Privacy Leakage: Evaluates if the model inadvertently reveals sensitive information from its training data.

- HELM Safety: A component of Stanford’s HELM framework specifically designed to evaluate safety aspects like toxicity and bias.

- ChatBench.org™ Insight: This is an ongoing battle. As models get smarter, so do the methods to exploit them. Continuous monitoring and red-teaming are essential. Don’t just test for safety once; make it an integral part of your AI News and development lifecycle.

4. Domain Specificity: Does It Understand “Your World”?

A general-purpose LLM might be a brilliant conversationalist, but does it understand the nuances of “Medical-speak,” “Legal-ese,” or your company’s internal jargon? This pillar is about tailoring AI to your unique operational context.

- Key Metrics & Benchmarks:

- Custom Datasets: The most effective way to test domain specificity is with datasets curated from your own industry or internal documents.

- Expert Evaluation: Human experts in the specific domain (e.g., doctors for medical AI, lawyers for legal AI) evaluate the quality and accuracy of the AI’s outputs.

- RAG Effectiveness: For RAG systems, metrics like “Faithfulness” (did the answer come from the provided context?) and “Relevance” (was the retrieved context actually useful?) are paramount.

- ChatBench.org™ Insight: This is where fine-tuning and RAG truly shine. A model that’s been specifically trained or augmented with your domain knowledge will almost always outperform a general model, even a very powerful one, in specialized tasks. It’s the difference between a general practitioner and a specialist surgeon – both are smart, but one has focused expertise.

Navigating this jungle requires a clear strategy and the right tools. By systematically evaluating your AI systems across these four pillars, you’ll not only avoid costly mistakes but also unlock the true potential of AI for your business.

📈 Measuring Trends in Intelligence: LLMs vs. Human Baselines

The question on everyone’s mind, from Silicon Valley boardrooms to late-night Reddit threads, is: “Are we reaching AGI (Artificial General Intelligence)?” While the answer is still a resounding “Not quite,” the trends in AI performance are nothing short of breathtaking. At ChatBench.org™, we spend a lot of time charting these trends, often comparing the capabilities of the latest LLMs against what we call the “Human Baseline.”

The Shifting Sands of “Superhuman” Performance

It feels like every other week, a new model is announced that “outperforms humans” on some benchmark. But what does that really mean?

- Programming Prowess: The Stanford AI Index Report highlights that “language model agents even outperformed humans in programming tasks with limited time budgets.” We’ve seen this with benchmarks like HumanEval, where models like OpenAI’s GPT-4o and Anthropic’s Claude 3.5 Sonnet can generate correct, executable code for complex problems faster and more consistently than an average junior developer. This is a massive leap for software development and AI Business Applications.

- Academic Acumen: Benchmarks like GPQA (General Purpose Question Answering) and MMMU (Massive Multitask Multimodal Understanding) are designed to test advanced reasoning across a wide array of academic subjects. The Stanford report shows GPQA scores increasing by a staggering 48.9 percentage points and MMMU by 18.8 percentage points in 2024. This indicates LLMs are getting better at understanding complex concepts and synthesizing information, often surpassing human experts in specific, well-defined academic tests.

- Video Generation: The report also mentions “AI systems made major strides in generating high-quality video content.” This isn’t just about static images anymore; it’s about understanding temporal dynamics and creating coherent narratives, a task that was once exclusively human domain.

Where Humans Still Hold the Crown (For Now!) 👑

Despite these impressive gains, it’s crucial to maintain perspective. AI still has significant limitations, especially when it comes to:

- Common Sense Reasoning: While LLMs excel at pattern recognition and logical deduction within their training data, they often lack the intuitive “common sense” of a five-year-old. Ask an LLM to explain why a spoon can’t be used to stir soup if it’s too short, and you might get a technically correct but overly verbose and un-intuitive answer.

- Real-World Embodiment & Interaction: Humans interact with the physical world, learning through touch, sight, and sound. Most LLMs are still disembodied text processors. This is why tasks requiring physical dexterity, nuanced social interaction, or understanding of complex, unstructured real-world environments remain firmly in the human domain.

- Creativity & Originality (True Innovation): While AI can generate incredibly creative text, art, and music, its “creativity” is often a recombination of existing patterns. True, groundbreaking innovation that defies existing paradigms is still a human forte. Can an AI invent a new scientific theory or compose a symphony that moves the human spirit in an entirely novel way? Not yet.

- Emotional Intelligence & Empathy: AI can simulate empathy, but it doesn’t feel it. For roles requiring deep emotional understanding, counseling, or complex negotiations, human connection remains irreplaceable.

The ChatBench.org™ Perspective: AGI is a Journey, Not a Destination

At ChatBench.org™, we view the pursuit of AGI as a fascinating journey, but for business applications, the focus should be on “Applied General Intelligence” – how can we leverage these increasingly capable models to solve specific, high-value problems?

The performance gap between top models is indeed shrinking, as the Stanford report notes, increasing competition and making it harder to pick a clear “winner” without specific benchmarking. This means your job as an AI adopter is to understand where AI excels and where human oversight is still critical. We’re not replacing humans; we’re augmenting them, freeing them from mundane tasks to focus on what they do best: innovate, empathize, and apply common sense. So, while the LLMs are getting smarter, don’t throw away your critical thinking skills just yet!

⚖️ Policy Highlights: Navigating the EU AI Act and NIST Frameworks

In the wild west of early AI development, anything went. But as AI systems become more powerful and pervasive, impacting everything from hiring decisions to medical diagnoses, governments worldwide are stepping in to establish guardrails. For businesses, this isn’t just about ethics; it’s about compliance, risk management, and avoiding potentially massive fines and reputational damage. At ChatBench.org™, we’re constantly tracking these developments because trustworthy AI isn’t just a buzzword; it’s a business imperative.

The EU AI Act: A Global Game-Changer 🇪🇺

If you’re doing business in Europe, or even if your AI systems might impact European citizens, the EU AI Act is something you absolutely must understand. It’s the world’s first comprehensive legal framework for AI, and it’s set to have a ripple effect globally.

- Risk-Based Approach: The Act categorizes AI systems based on their potential risk level:

- Unacceptable Risk: AI systems that manipulate human behavior or exploit vulnerabilities (e.g., social scoring by governments) are banned.

- High-Risk: This is where most enterprise AI applications will fall. Systems used in critical infrastructure, education, employment, law enforcement, migration, and justice are considered high-risk. These require rigorous conformity assessments, risk management systems, human oversight, and, you guessed it, robust benchmarking and testing.

- Limited Risk: AI systems with specific transparency obligations (e.g., chatbots must disclose they are AI).

- Minimal Risk: Most other AI systems, with voluntary codes of conduct encouraged.

- Implications for Benchmarking: For high-risk AI, the Act mandates:

- Data Governance: High-quality training, validation, and testing datasets.

- Technical Documentation: Detailed records of the system’s design, development, and performance.

- Human Oversight: Mechanisms to ensure human control and intervention.

- Robustness, Accuracy, and Cybersecurity: Systems must be resilient, accurate, and secure. This is where your benchmarking results become critical evidence of compliance.

- Our Take: The EU AI Act means that for many businesses, benchmarking isn’t just a best practice; it’s a legal requirement. You’ll need to demonstrate, with documented evidence, that your AI systems meet specific performance and safety standards. Ignoring this is like driving without a license – eventually, you’ll get caught.

The NIST AI Risk Management Framework (AI RMF): Your North Star for Trustworthy AI 🇺🇸

Across the Atlantic, the National Institute of Standards and Technology (NIST) in the U.S. has provided a voluntary, but highly influential, framework for managing AI risks. The NIST AI summary states that NIST “promotes responsible AI development, use, and governance to enhance economic security, competitiveness, and quality of life,” emphasizing a “risk-based approach.”

- Core Functions: The AI RMF is structured around four core functions:

- Govern: Establish a culture of risk management.

- Map: Identify AI risks and their potential impacts.

- Measure: Quantify, evaluate, and track AI risks. This is where benchmarking is absolutely central.

- Manage: Allocate resources to address AI risks.

- Emphasis on Measurement and Evaluation: NIST actively “conducts fundamental research on AI measurement science, evaluations, standards, and guidelines.” They create “reliable, interoperable methods for measuring and evaluating AI performance” and lead initiatives like AI Test, Evaluation, Validation, and Verification (TEVV). This aligns perfectly with ChatBench.org™’s mission.

- Recommendations for Business:

- Adopt NIST’s AI standards and evaluation tools to ensure system reliability and trustworthiness.

- Use NIST’s AI governance frameworks to manage AI risks effectively.

- Participate in NIST-led benchmarking initiatives to validate AI system performance for business needs.

- Our Take: While voluntary, the NIST AI RMF is rapidly becoming the de facto standard for “Trustworthy AI” in the U.S. and beyond. Adopting its principles will not only help you navigate regulatory landscapes but also build a stronger, more resilient, and ethically sound AI strategy. It’s about proactive risk management, not reactive damage control.

Both the EU AI Act and the NIST AI RMF underscore a critical truth: AI is too powerful to be left unchecked. For businesses, this means integrating robust benchmarking and ethical considerations into every stage of your AI lifecycle. It’s no longer just about performance; it’s about responsibility.

📜 Past Reports and Lessons Learned from the Front Lines

At ChatBench.org™, we’ve got a treasure trove of war stories from the AI trenches. We’ve seen brilliant ideas soar and promising projects crash and burn. And almost every time, the difference between success and failure boiled down to one thing: how well (or how poorly) the AI system was benchmarked against its actual business objective.

Let me tell you about “Project Nightingale.” (Names changed to protect the innocent, and the slightly less innocent.)

The Case of the Math Whiz, Sentiment-Blind Chatbot 🤦 ♀️

A few years back, we were consulting for a rapidly growing fintech startup. They were building an AI-powered customer support system, aiming to automate responses and analyze customer sentiment. Their internal data science team, brilliant as they were, had a strong bias towards models that excelled in quantitative tasks. They were particularly impressed by a model’s stellar performance on GSM8K (Grade School Math 8K) and other logical reasoning benchmarks. “This model is a genius!” they exclaimed. “It can solve calculus problems!”

So, they deployed it.

The initial results were… interesting. The chatbot could accurately calculate loan interest rates, explain complex financial products, and even debug simple API calls. Functionally, it was a math whiz. But then the customer complaints started rolling in.

- Customer 1: “I’m so happy with your new service, it’s really streamlined my finances!”

- AI’s Sentiment Analysis: “Negative. User expresses ‘happiness’ which can be a precursor to emotional volatility. Flag for human review.” ❌

- Customer 2: “I’m so happy I’m leaving your service, your fees are outrageous!”

- AI’s Sentiment Analysis: “Positive. User expresses ‘happiness.’ No action required.” ✅ (Oh, the irony!)

The model, despite its mathematical prowess, completely missed the nuances of human emotion and context. It could solve calculus but couldn’t tell the difference between genuine satisfaction and sarcastic rage. It was a disaster. Customers were infuriated, and the support team was overwhelmed trying to fix the AI’s misinterpretations.

The Lesson Learned (the hard way): Match the benchmark to the task, not the hype. Just because an AI is “smart” in one domain doesn’t mean it’s smart in all. We had to go back to the drawing board, focusing on benchmarks specifically designed for sentiment analysis, natural language understanding, and contextual inference. We implemented a robust RAG system to ground its responses in their actual knowledge base, and crucially, we integrated a “Human-in-the-loop” feedback mechanism for continuous improvement.

The “Black Box” That Almost Broke the Bank 💸

Another time, a large e-commerce client wanted to implement an AI-driven product recommendation engine. They chose a proprietary, “state-of-the-art” black-box model from a major vendor, lured by its impressive claims of conversion rate uplift. They didn’t benchmark it rigorously against their own data, trusting the vendor’s generic benchmarks.

For months, they saw no significant improvement in conversion rates. In fact, some metrics subtly declined. When we finally got involved, we discovered the model was recommending wildly irrelevant products. Someone looking for a new smartphone was being shown baby diapers. Why? Because the model, without proper domain-specific benchmarking, had latched onto some obscure, spurious correlation in the vast, generic training data. It was a statistical anomaly, not intelligent recommendation.

The Lesson Learned: Never trust a black box without opening it (or at least rigorously testing its outputs with your own flashlight). Generic benchmarks are a starting point, but your unique business data and customer behavior are the ultimate truth. We implemented A/B testing with a clear set of metrics (click-through rate, conversion rate, average order value) and used tools like TruLens to understand why the model was making certain recommendations. It turned out a much simpler, transparent, and locally fine-tuned model outperformed the “state-of-the-art” behemoth.

These stories aren’t just anecdotes; they’re hard-won wisdom from the front lines of AI Business Applications. They underscore why rigorous, context-specific benchmarking is not just a good idea, but an absolute necessity for turning AI insight into competitive edge. Don’t make the same mistakes we’ve seen others make. Learn from their (and our) battle scars!

🛠️ 12 Essential Benchmarking Tools for Modern Cybersecurity and Performance

Alright, fellow AI adventurers, it’s time to equip your toolkit! You wouldn’t go into the benchmark jungle without a machete, right? Well, these tools are your digital machetes, your compasses, and your high-tech binoculars. Given the recent buzz around new open-source tools for AI cybersecurity benchmarking, we at ChatBench.org™ decided to go above and beyond. Here are 12 essential tools that every ML engineer, data scientist, and even savvy business leader should know about for evaluating AI systems.

We’ll rate them on a scale of 1-10 for Ease of Use, Comprehensiveness, and Cybersecurity Focus.

1. MLPerf

| Aspect | Rating |

|---|---|

| Ease of Use | 6/10 |

| Comprehensiveness | 9/10 |

| Cybersecurity Focus | 2/10 |

What it is: The industry-standard benchmark suite for measuring the performance of machine learning hardware, software, and services. It covers a wide range of ML tasks, from image classification to natural language processing. Features: Provides standardized tests for training and inference, allowing for fair comparisons across different platforms (CPUs, GPUs, TPUs). Benefits: Essential for hardware selection and optimizing your AI Infrastructure. If you’re building your own data center or choosing cloud instances, MLPerf results are invaluable. Drawbacks: Primarily focused on raw performance, not AI quality or safety. Can be complex to set up and run. ChatBench.org™ Insight: If you’re serious about optimizing your compute costs and throughput, MLPerf is non-negotiable. It’s how we ensure our clients get the most bang for their buck on platforms like NVIDIA and Google Cloud. Learn More: MLCommons – MLPerf Benchmarks

2. HELM (Holistic Evaluation of Language Models)

| Aspect | Rating |

|---|---|

| Ease of Use | 7/10 |

| Comprehensiveness | 10/10 |

| Cybersecurity Focus | 6/10 |

What it is: Developed by Stanford CRFM, HELM provides a comprehensive framework for evaluating language models across a wide range of scenarios, metrics, and models. It goes beyond simple accuracy, considering fairness, robustness, bias, and toxicity. Features: Evaluates models on 16 core scenarios, 42 metrics, and 30+ models. It’s designed to be transparent and reproducible. Benefits: Gives you a truly holistic view of an LLM’s capabilities and risks. Crucial for understanding the ethical implications of your AI. Drawbacks: Can be overwhelming due to its sheer breadth. Requires careful interpretation of results. ChatBench.org™ Insight: HELM is our go-to for a deep dive into an LLM’s overall character. It helps us identify hidden biases or vulnerabilities that simpler benchmarks might miss. Learn More: Stanford CRFM – Holistic Evaluation of Language Models (HELM)

3. LMSYS Chatbot Arena

| Aspect | Rating |

|---|---|

| Ease of Use | 9/10 |

| Comprehensiveness | 5/10 |

| Cybersecurity Focus | 3/10 |

What it is: A crowdsourced platform where users interact with two anonymous LLMs side-by-side and vote for their preferred response. It provides a real-world, human-centric evaluation of conversational AI. Features: Blind testing, ELO rating system for models, and a leaderboard. Benefits: Offers invaluable qualitative feedback and a “gut feeling” for which models are truly engaging and helpful in a conversational context. Drawbacks: Subjective, not always reproducible, and doesn’t test specific business metrics. ChatBench.org™ Insight: We love the Chatbot Arena for getting a quick pulse on public perception and general conversational quality. It’s a great complement to quantitative benchmarks. Try it out: LMSYS Chatbot Arena

4. Giskard

| Aspect | Rating |

|---|---|

| Ease of Use | 8/10 |

| Comprehensiveness | 7/10 |

| Cybersecurity Focus | 7/10 |

What it is: An open-source platform for testing AI models for vulnerabilities, biases, and performance issues. It helps you detect and fix problems before deployment. Features: Automated scanning for common vulnerabilities (e.g., prompt injection), bias detection, and performance degradation. Integrates with popular ML frameworks. Benefits: Essential for building trustworthy AI. Helps ensure your models are fair, robust, and secure, aligning with principles like those in the NIST AI Risk Management Framework. Drawbacks: Requires some Python knowledge to integrate into your ML pipeline. ChatBench.org™ Insight: Giskard is a lifesaver for identifying subtle biases in training data or model outputs. It’s a proactive tool for responsible AI development. Get it here: Giskard Official Website | Shop Giskard on: GitHub

5. DeepEval

| Aspect | Rating |

|---|---|

| Ease of Use | 8/10 |

| Comprehensiveness | 6/10 |

| Cybersecurity Focus | 4/10 |

What it is: A unit testing framework specifically for LLMs. It allows developers to write tests for their LLM applications, ensuring consistent and high-quality outputs. Features: Define custom evaluation metrics, integrate with existing test suites, and track performance over time. Benefits: Brings software engineering best practices (unit testing) to LLM development, improving reliability and reducing regressions. Drawbacks: Still relatively new, and the concept of “unit testing” for LLMs is evolving. ChatBench.org™ Insight: We use DeepEval to ensure that changes to prompts or RAG configurations don’t inadvertently break existing functionality. It’s like having a safety net for your LLM code. Get it here: DeepEval Official Website | Shop DeepEval on: GitHub

6. RAGAS

| Aspect | Rating |

|---|---|

| Ease of Use | 7/10 |

| Comprehensiveness | 8/10 |

| Cybersecurity Focus | 5/10 |

What it is: An open-source framework specifically designed for evaluating Retrieval-Augmented Generation (RAG) systems. It measures key aspects like faithfulness, answer relevance, and context recall. Features: Provides metrics to assess how well your RAG system retrieves relevant information and how accurately the LLM uses that information to generate responses. Benefits: Absolutely critical for anyone building RAG-based applications. Helps you optimize your vector database, chunking strategy, and retrieval algorithms. Drawbacks: Focused solely on RAG, so not a general-purpose LLM evaluation tool. ChatBench.org™ Insight: RAGAS is our secret weapon for fine-tuning RAG systems. It helps us pinpoint whether a poor answer is due to bad retrieval or bad generation, making debugging much faster. Get it here: RAGAS Official Website | Shop RAGAS on: GitHub

7. LangSmith (LangChain)

| Aspect | Rating |

|---|---|

| Ease of Use | 8/10 |

| Comprehensiveness | 7/10 |

| Cybersecurity Focus | 6/10 |

What it is: A platform from the creators of LangChain, designed for debugging, testing, evaluating, and monitoring LLM applications in production. Features: Tracing of complex LLM chains, dataset management, automated evaluation, and A/B testing. Benefits: Provides unparalleled visibility into how your LLM applications are performing in the wild. Essential for identifying bottlenecks, errors, and areas for improvement. Drawbacks: Primarily for LangChain users, though it can integrate with other frameworks. ChatBench.org™ Insight: When a complex LLM agent goes off the rails, LangSmith is our first stop. Its tracing capabilities are fantastic for understanding the step-by-step reasoning of an AI. Learn More: LangSmith Official Website

8. Promptfoo

| Aspect | Rating |

|---|---|

| Ease of Use | 9/10 |

| Comprehensiveness | 6/10 |

| Cybersecurity Focus | 4/10 |

What it is: An open-source tool for testing prompts against multiple LLMs simultaneously. It helps you compare model responses and optimize your prompt engineering. Features: Side-by-side comparison of outputs, automated scoring, and integration with various LLM APIs. Benefits: Speeds up prompt engineering significantly. Helps you find the best prompt and model combination for a given task. Drawbacks: Focused on prompt testing, not full-system evaluation. ChatBench.org™ Insight: Promptfoo is a fantastic tool for rapid experimentation. We use it constantly to iterate on prompts and see how different models (e.g., GPT-4o vs. Claude 3.5 Sonnet) respond to the same input. Get it here: Promptfoo Official Website | Shop Promptfoo on: GitHub

9. CyberBench (Microsoft’s ExCyTIn-Bench)

| Aspect | Rating |

|---|---|

| Ease of Use | 7/10 |

| Comprehensiveness | 8/10 |

| Cybersecurity Focus | 10/10 |

What it is: Microsoft’s open-source AI cybersecurity benchmarking tool, also known as ExCyTIn-Bench. It’s specifically designed to evaluate AI performance in real-world cybersecurity scenarios. Features: Measures reasoning, transparency, and multi-step investigation skills. Tests large language model agents on realistic, multi-hop investigations using Sentinel-style data. Benefits: Bridges the gap between research and operational security. Helps improve tools like Microsoft Security Copilot and Defender, building trust and resilience in AI-driven security workflows. As Satya Nadella put it, “AI should be used to guard and protect for cybersecurity.” Drawbacks: Highly specialized for cybersecurity, not a general AI benchmark. Requires familiarity with cybersecurity concepts. ChatBench.org™ Insight: This is a crucial tool for any organization deploying AI in security operations. It moves beyond superficial metrics to truly assess an AI’s ability to reason through complex cyberattacks. As the LinkedIn post by Satya Nadella emphasizes, “Benchmarks that evaluate an AI’s ability to reason through complex, multi-step attacks are essential for building trust.” Get it here: Shop CyberBench on: GitHub

10. AgentBench

| Aspect | Rating |

|---|---|

| Ease of Use | 6/10 |

| Comprehensiveness | 8/10 |

| Cybersecurity Focus | 3/10 |

What it is: A benchmark suite designed to evaluate the performance of AI agents in various interactive environments, from web browsing to game playing. Features: Tests an agent’s ability to plan, execute, and adapt in complex, dynamic scenarios. Benefits: Essential for developing and evaluating autonomous AI agents that can perform multi-step tasks. Drawbacks: Can be complex to set up and requires specific environments for testing. ChatBench.org™ Insight: As AI moves towards more autonomous agents, AgentBench becomes critical. It helps us understand if an AI can truly “think” and act strategically, not just respond to prompts.

11. Weights & Biases (W&B)

| Aspect | Rating |

|---|---|

| Ease of Use | 8/10 |

| Comprehensiveness | 9/10 |

| Cybersecurity Focus | 5/10 |

What it is: A comprehensive platform for experiment tracking, model versioning, and dataset management in machine learning. Features: Logs metrics, hyperparameters, and model artifacts. Provides visualizations and collaboration tools. Benefits: The gold standard for MLOps. Ensures reproducibility, helps identify the best models, and streamlines the entire ML lifecycle. Drawbacks: Can be overkill for very small, simple projects. ChatBench.org™ Insight: We use W&B for almost every project. It’s indispensable for tracking hundreds of experiments, comparing different fine-tuning runs, and ensuring we have a clear audit trail for our model development. Learn More: Weights & Biases Official Website

12. TruLens

| Aspect | Rating |

|---|---|

| Ease of Use | 7/10 |

| Comprehensiveness | 7/10 |

| Cybersecurity Focus | 5/10 |

What it is: An open-source tool that provides “feedback functions” to measure the quality of LLM applications. It helps you evaluate aspects like groundedness, coherence, and relevance. Features: Define custom feedback functions, integrate with RAG systems, and visualize performance. Benefits: Offers a programmatic way to evaluate subjective aspects of LLM output, making it easier to automate quality checks. Drawbacks: Requires some effort to define and implement effective feedback functions. ChatBench.org™ Insight: TruLens is excellent for continuous evaluation in production. We use it to monitor our RAG systems, ensuring that the answers are always grounded in the retrieved context and relevant to the user’s query. Get it here: TruLens Official Website | Shop TruLens on: GitHub

This comprehensive toolkit, combined with a deep understanding of your business needs, will empower you to navigate the AI landscape with confidence. Remember, the right tool for the job makes all the difference!

🧪 The Lab Report: How We Test RAG and Fine-Tuned Models

At ChatBench.org™, we’re not just talking the talk; we’re walking the walk. Our labs are buzzing with activity, constantly pushing the boundaries of what’s possible with AI. When it comes to Retrieval-Augmented Generation (RAG) and fine-tuned models, our testing methodology is meticulous, bordering on obsessive. Why? Because these are the workhorses of enterprise AI, and their performance directly impacts our clients’ success.

You might be wondering, “How do you really know if a RAG system is good? Or if a fine-tuned model is better than a general one?” Excellent questions! Let’s pull back the curtain and show you our process. This is where we answer the question posed in the first YouTube video summary: “Performance benchmarking is a critical tool for assessing and enhancing the efficiency and effectiveness of AI systems.” Our methods embody this principle.

The RAG Report: Beyond Just “An Answer”

RAG systems are complex beasts. They involve a retriever (finding relevant documents) and a generator (the LLM creating the answer). A failure in either component means a bad user experience. We focus on three core metrics for RAG, as highlighted in the first YouTube video summary which states that key components of AI performance benchmarking include “Accuracy” and “Scalability”:

-

Faithfulness (or Groundedness):

- What it is: Does the generated answer only use information provided by the retrieved context? Or is the LLM “hallucinating” or adding its own pre-trained knowledge?

- Why it matters: Crucial for applications where factual accuracy and verifiability are paramount (e.g., legal, medical, financial advice). If the AI makes things up, it’s a liability.

- How we test: We use tools like RAGAS and custom feedback functions in TruLens. We provide the LLM with a question and a set of retrieved documents, then ask another LLM (or a human expert) to verify if every statement in the answer can be directly attributed to the provided documents.

- Our Anecdote: We once had a RAG system for a legal client that, despite retrieving the correct statutes, would occasionally “embellish” the answer with legal precedents it thought were relevant but weren’t in the provided context. Faithfulness testing caught this immediately, preventing potential legal headaches.

-

Relevance (Answer & Context):

- What it is:

- Answer Relevance: Is the generated answer directly addressing the user’s question?

- Context Relevance: Are the retrieved documents actually pertinent to the user’s query?

- Why it matters: An answer might be faithful, but if it doesn’t answer the question, it’s useless. Similarly, retrieving irrelevant documents wastes compute resources and can confuse the LLM.

- How we test: For answer relevance, we use human evaluators and LLM-as-a-judge techniques, comparing the generated answer to a “golden answer.” For context relevance, we check if the retrieved chunks contain keywords or concepts directly related to the query.

- Our Anecdote: A common issue we see is “context stuffing” – retrieving too many documents, hoping the LLM will find the needle in the haystack. This often leads to lower answer relevance and higher latency. Our testing helps us optimize the retrieval step to be precise.

- What it is:

-

Recall & Precision (Retrieval):

- What it is:

- Recall: How many of the truly relevant documents did the retriever find?

- Precision: Of the documents the retriever found, how many were actually relevant?

- Why it matters: A high recall ensures you don’t miss critical information. High precision ensures you’re not overwhelming the LLM with noise.

- How we test: We create a “golden dataset” of questions, each linked to a set of known relevant documents. We then run our RAG system and compare its retrieved documents against our golden set.

- What it is:

The Fine-Tuning Finesse: Beyond Generic Performance

Fine-tuning a base model (like Llama 3 8B or Mistral 7B) with your proprietary data can unlock incredible domain-specific performance. But how do you measure that improvement?

-

The “Golden Dataset” is King 👑:

- What it is: A meticulously curated set of 100-1000+ questions and their perfect, human-verified answers that are representative of your specific business use case. This is your ultimate truth.

- Why it matters: Public benchmarks (MMLU, HumanEval) are great for general intelligence, but they don’t reflect your unique data or tasks. Your golden dataset is the only way to measure real-world performance for your business.

- How we use it: Every time we fine-tune a model, or even change a prompt, we run it against our client’s golden dataset. We measure accuracy, relevance, and often, subjective quality (e.g., tone, helpfulness) against these known answers.

- Our Mantra: If the score on the golden dataset drops, we don’t ship. It’s that simple. This rigorous approach ensures continuous improvement and prevents regressions.

-

Comparative Benchmarking:

- We don’t just test the fine-tuned model in isolation. We compare it against:

- The base model (before fine-tuning).

- A larger, general-purpose model (e.g., GPT-4o) to see if fine-tuning a smaller model can achieve comparable or superior domain-specific performance.

- A human baseline (how long would it take a human to answer, and how accurately?).

- This comparison helps us justify the investment in fine-tuning and demonstrate clear ROI.

- We don’t just test the fine-tuned model in isolation. We compare it against:

-

Efficiency Metrics:

- Fine-tuning often aims to make a smaller model perform like a larger one, but more efficiently. We track:

- Latency: Is the fine-tuned model faster?

- Throughput: Can it handle more requests per second?

- Cost-per-token: Is it cheaper to run than a larger, general-purpose model?

- The first YouTube video summary also emphasizes “Resource Utilization” and “Scalability” as key components, which we rigorously measure here.

- Fine-tuning often aims to make a smaller model perform like a larger one, but more efficiently. We track:

The Continuous Loop: Iterate, Evaluate, Optimize

Our lab work isn’t a one-off event. It’s a continuous loop:

- Develop/Fine-tune: Create or adapt a model.

- Benchmark: Run it against our comprehensive test suites and golden datasets.

- Analyze: Identify strengths, weaknesses, and areas for improvement.

- Optimize: Adjust prompts, fine-tuning data, RAG components, or even switch base models.

- Repeat.

This iterative process, fueled by rigorous evaluation, is how we ensure that the AI systems we build and recommend are not just “good,” but truly exceptional for your specific AI Business Applications. It’s how we turn raw data and powerful models into tangible, competitive advantages.

For a deeper dive into the specific metrics and techniques we use, check out our detailed article: What are the key benchmarks for evaluating AI model performance?

💡 Conclusion

Phew! We’ve journeyed through the fascinating, complex, and ever-evolving world of benchmarking AI systems for business applications. From the humble beginnings of the Turing Test to the cutting-edge frameworks like HELM and NIST AI RMF, it’s clear that benchmarking is no longer optional—it’s mission-critical.

Key Takeaways to Seal the Deal

- Benchmarking is your AI compass. Without it, you’re flying blind in a storm of hype, cost overruns, and potential legal risks.

- Context matters. Whether it’s the size of the context window, domain specificity, or the right benchmark for your task, one size does not fit all.

- Open source models are no longer the underdogs. With models like Meta’s Llama 3.1 and the rise of RAG architectures, you can get enterprise-grade performance with better privacy and cost control.

- Safety and compliance are baked into modern benchmarking. The EU AI Act and NIST frameworks are reshaping how businesses must evaluate and document AI systems.

- Tools matter. From MLPerf to Microsoft’s CyberBench, the right mix of benchmarking tools will give you a 360° view of your AI’s capabilities and risks.

Closing the Loop on Our Earlier Questions

Remember when we asked: “Do you have a ‘Golden Dataset’ for your business yet?” If you don’t, now’s the time to create one. It’s the single most effective way to ensure your AI system is truly aligned with your unique needs and won’t embarrass you in front of customers or regulators.

And what about the AI that’s a math whiz but sentiment-blind? That story taught us that matching benchmarks to business goals is non-negotiable. Don’t chase shiny scores; chase meaningful impact.

Final Word: Our Confident Recommendation

If you’re serious about deploying AI in your business, invest in benchmarking early and often. Use a combination of open-source and proprietary models, leverage RAG for domain-specific knowledge, and adopt frameworks like HELM and NIST AI RMF to ensure safety and compliance.

Benchmarking isn’t just a technical exercise—it’s a strategic advantage. It’s how you turn AI hype into AI impact, guesswork into data-driven decisions, and risk into resilience.

Ready to benchmark your way to AI excellence? Let’s get started!

🔗 Recommended Links

Here’s a curated list of products, platforms, and books to help you dive deeper and get hands-on with benchmarking AI systems:

-

MLPerf Benchmarks:

-

Stanford HELM Framework:

-

Giskard AI Testing Platform:

-

DeepEval Unit Testing for LLMs:

-

RAGAS for RAG Evaluation:

-

LangSmith by LangChain:

-

Promptfoo Prompt Testing:

-

Microsoft CyberBench (ExCyTIn-Bench):

-

Weights & Biases (W&B):

-

TruLens for LLM Feedback:

-

Books:

- Designing Machine Learning Systems by Chip Huyen — Amazon Link

- Artificial Intelligence: A Guide for Thinking Humans by Melanie Mitchell — Amazon Link

-

AI Infrastructure Providers:

❓ FAQ

How can businesses use benchmarking to identify areas for improvement and optimize their AI systems for better decision-making and competitiveness?

Benchmarking provides objective, data-driven insights into how well your AI systems perform against specific tasks and industry standards. By comparing your models to established benchmarks and competitors, you can pinpoint weaknesses—be it accuracy, latency, or bias—and prioritize improvements. This iterative process leads to more reliable AI, better user experiences, and ultimately, smarter business decisions that keep you competitive.

What are the most important factors to consider when comparing the performance of different AI models for business use cases?

Key factors include:

- Task-specific accuracy: Does the model perform well on your domain-specific data?

- Latency and throughput: Can it handle your volume and speed requirements?

- Cost-efficiency: What is the cost per inference or token?

- Safety and compliance: Does it meet regulatory and ethical standards?

- Scalability and maintainability: Can it grow with your business and be updated easily?

Balancing these factors ensures you choose a model that fits your unique operational needs rather than just chasing headline performance.

How do companies benchmark the effectiveness of their AI-powered solutions against industry competitors?

Companies often use a combination of:

- Public benchmarks (e.g., MMLU, HumanEval) to get a baseline.

- Custom “golden datasets” reflecting their specific business scenarios.

- Third-party evaluation platforms like HELM or Giskard for fairness and robustness.

- A/B testing and user feedback to measure real-world impact.

- Participation in industry challenges or open benchmarking initiatives (e.g., MLPerf).

This multi-pronged approach provides a comprehensive view of where their AI stands and how it compares.

What are the key performance indicators for evaluating AI systems in business applications?

KPIs vary by use case but generally include:

- Accuracy and precision on task-specific benchmarks.

- Response time (latency) and throughput for operational efficiency.

- Cost per inference/token for budget management.

- Hallucination rate to measure factual correctness.

- Bias and fairness metrics to ensure ethical compliance.

- User satisfaction scores and conversion rates for customer-facing applications.

Tracking these KPIs over time helps maintain and improve AI performance.

What are the key metrics for benchmarking AI systems in business?

Key metrics include:

- Functional accuracy: MMLU, HumanEval, domain-specific accuracy.

- Operational efficiency: Latency, throughput, cost-per-token.

- Safety and robustness: Toxicity, bias, jailbreak resistance, privacy leakage.

- Domain specificity: Relevance, faithfulness, and recall in RAG systems.

- Scalability: Ability to maintain performance under load.

These metrics collectively provide a 360° evaluation of AI suitability.

How can benchmarking AI improve decision-making in enterprises?

By providing quantitative evidence of AI capabilities and limitations, benchmarking helps enterprises make informed decisions about which models to deploy, when to retrain or fine-tune, and how to allocate resources. It reduces guesswork, mitigates risks, and aligns AI performance with strategic business goals, leading to better outcomes and competitive advantage.

Which AI benchmarking tools are best for business applications?

The best tools depend on your needs, but a solid stack includes:

- MLPerf for hardware and inference performance.

- HELM for holistic language model evaluation.

- Giskard for bias and vulnerability testing.

- RAGAS for retrieval-augmented generation systems.

- LangSmith for production monitoring and debugging.

- Microsoft CyberBench (ExCyTIn-Bench) for AI cybersecurity evaluation.

- Weights & Biases for experiment tracking and reproducibility.

Combining these tools provides comprehensive coverage from performance to safety.

How does benchmarking AI contribute to gaining a competitive edge?

Benchmarking enables businesses to:

- Deploy AI systems that are truly fit-for-purpose, avoiding costly mismatches.

- Optimize operational costs by selecting efficient models.

- Ensure compliance and build trust with customers and regulators.

- Accelerate innovation by rapidly evaluating new models and techniques.

- Differentiate through superior user experiences powered by reliable AI.

In short, benchmarking transforms AI from a black box into a strategic asset.

📚 Reference Links

- Stanford AI Index Report 2025

- NIST Artificial Intelligence Program

- MLCommons MLPerf Benchmarks

- Stanford CRFM HELM Framework

- EU AI Act Official Website

- NIST AI Risk Management Framework

- Microsoft Open Source AI Cybersecurity Benchmarking Tool (ExCyTIn-Bench) on LinkedIn

- Microsoft Security Copilot

- Giskard AI Testing Platform

- Weights & Biases Experiment Tracking

- LangChain LangSmith

- Promptfoo Prompt Testing