15 Must-Know NLP Benchmark Datasets to Master in 2025 🚀

If you’ve ever wondered how AI models get their “smarts” measured, you’re in the right place. NLP benchmark datasets are the secret sauce behind every breakthrough in natural language processing—from chatbots that actually understand you, to translation engines that make global communication seamless. But here’s the kicker: not all benchmarks are created equal, and picking the right one can be the difference between a model that dazzles and one that disappoints.

At ChatBench.org™, we’ve seen teams chase leaderboard glory on GLUE only to stumble in real-world biomedical or legal applications. That’s why this deep dive covers 15 essential NLP benchmark datasets you absolutely need to know in 2025, including domain-specific gems like BLUE for biomedical NLP and LexGLUE for legal text. Plus, we unravel the metrics, tools, and future trends that will keep you ahead of the curve. Curious about which datasets are truly “human-level” or how to avoid the pitfalls of overfitting? Stick around—we’ve got the insights and insider tips that will turn your AI projects from good to legendary.

Key Takeaways

- NLP benchmarks are critical for fair, consistent model evaluation but beware of dataset saturation and domain mismatch.

- GLUE and SuperGLUE remain gold standards, but domain-specific datasets like BLUE and LexGLUE are essential for specialized tasks.

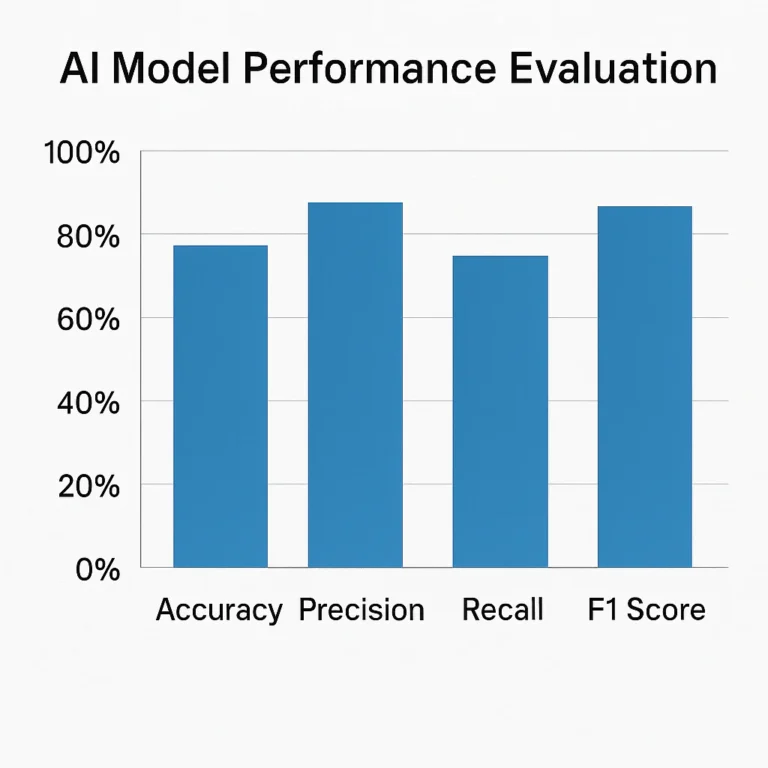

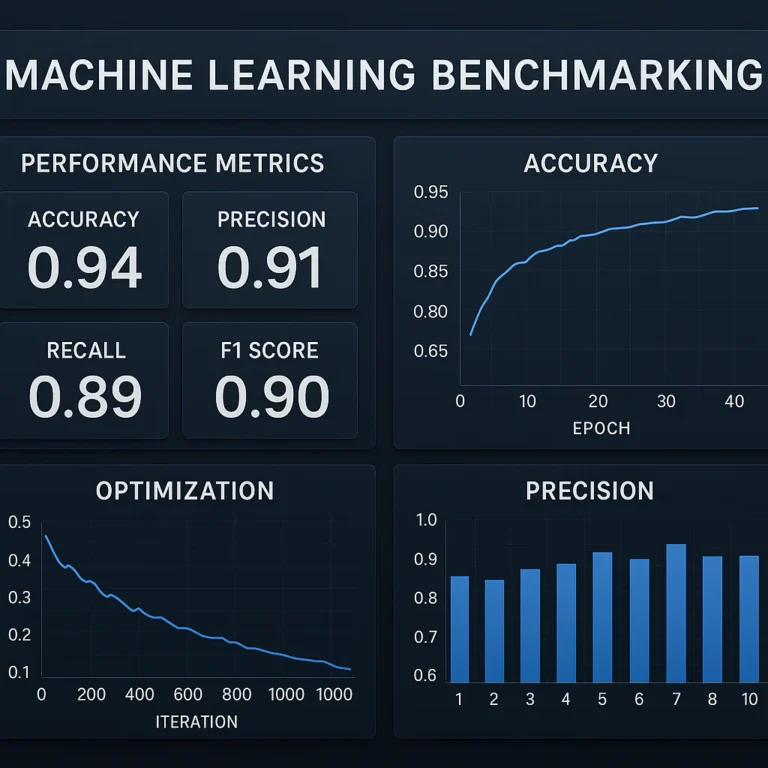

- Metrics like F-1, BLEU, and MCC provide nuanced views of performance—don’t rely on accuracy alone.

- Pre-trained models like DeBERTa-v3 and ELECTRA dominate leaderboards, but fine-tuning on relevant benchmarks is key.

- Future trends include dynamic, multimodal, and privacy-preserving benchmarks that reflect real-world complexity.

Ready to benchmark like a pro? Dive in and discover which datasets will shape your NLP journey in 2025 and beyond!

Table of Contents

- ⚡️ Quick Tips and Facts About NLP Benchmark Datasets

- 📜 The Evolution and History of NLP Benchmark Datasets

- 🔍 Understanding NLP Benchmark Dataset Types and Their Uses

- 1️⃣ Top 15 NLP Benchmark Datasets You Should Know in 2024

- 📊 Key Metrics and Evaluation Techniques for NLP Benchmarks

- 🤖 Pre-Trained Models and Their Performance on Benchmark Datasets

- 🛠️ Tools and Platforms to Access and Work with NLP Benchmark Datasets

- 🌐 Domain-Specific NLP Benchmark Datasets: Biomedical, Legal, and More

- 🧩 Challenges and Limitations of Current NLP Benchmark Datasets

- 💡 Best Practices for Creating and Using NLP Benchmark Datasets

- 📚 Resources for Researchers: Papers, Tutorials, and Communities

- 🔮 The Future of NLP Benchmark Datasets: Trends and Predictions

- 🎯 Conclusion: Mastering NLP Benchmark Datasets for Cutting-Edge AI

- 🔗 Recommended Links for NLP Benchmarking

- ❓ Frequently Asked Questions About NLP Benchmark Datasets

- 📖 Reference Links and Further Reading

⚡️ Quick Tips and Facts About NLP Benchmark Datasets

- Always sanity-check the leaderboard before you brag about “SOTA” — some NLP benchmarks are over-fished and quietly saturated (GLUE, we’re looking at you 👀).

- F-1 ≠ accuracy. If your dataset is imbalanced, F-1 or MCC keeps you honest; accuracy will happily lie to you.

- Size ≠ quality: a clean CoNLL-2003 NER split of about 14 k training sentences can still beat a 10 M noisy web scrape.

- Domain shift kills: a model that crushes SuperGLUE can still fail miserably on your hospital discharge notes.

- Cache your downloads: Hugging Face `datasets` with `keep_in_memory=False` saves SSD life and CI minutes.

- Human baseline ≠ ceiling: humans on SQuAD 2.0 hit 89.5 F-1, yet DeBERTa already topped 93, so “human-level” is no longer the finish line.

- When you fine-tune, try freezing the embeddings for the first epoch; it can reduce catastrophic forgetting on small benchmarks.

- Finally, cite the version: SQuAD 1.1 and 2.0 leaderboards are not interchangeable—reviewers will roast you for mixing them up.
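
To make the "F-1 ≠ accuracy" tip concrete, here is a minimal pure-Python sketch. The labels are made up (95 negatives, 5 positives) and the function names are our own, not from any library; it only illustrates how a lazy always-negative classifier can look great on accuracy and terrible on F-1.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the gold labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_f1(y_true, y_pred, positive=1):
    """F-1 for the positive class; returns 0.0 when there are no true positives."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy, made-up data: a heavily imbalanced test set.
y_true = [0] * 95 + [1] * 5
# A "lazy" classifier that always predicts the majority class.
y_pred = [0] * 100

print("accuracy:", accuracy(y_true, y_pred))
print("F-1     :", binary_f1(y_true, y_pred))
```

The classifier never finds a single positive, yet accuracy alone would not tell you that.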

📜 The Evolution and History of NLP Benchmark Datasets

Once upon a time (2014), the community thought 20 Newsgroups and Penn Treebank were “big data”. Then ImageNet fever spilled into language. Stanford released SQuAD 1.0 in 2016 and overnight every lab pivoted to reading comprehension. Two years later, in 2018, Wang et al. stitched together GLUE (nine tasks, one leaderboard), borrowing the ImageNet playbook: shared task + single number = progress. By 2019 top models had passed the human baseline on GLUE, so largely the same crew dropped SuperGLUE with harder inference puzzles. Meanwhile translation folks had been quietly battling on WMT since 2006, and bio-NLP built BLUE to escape generic-domain over-fit.

Today we live in the “mega-benchmark” era: 100 B-token Common-Crawl dumps, multilingual variants like XTREME, and reasoning suites such as Big-Bench. Yet, as the featured video warns, a handful of Western institutions still mint the yardsticks—so the power to define progress is oddly centralized.

🔍 Understanding NLP Benchmark Dataset Types and Their Uses

| Task Family | Example Datasets | Typical Metric | Why You’d Use It |
|---|---|---|---|
| Reading Comprehension | SQuAD, QuAC, CoQA | EM / F-1 | Chatbots, customer-support bots |
| Natural-Language Inference | MNLI, RTE, QNLI | Accuracy | Enterprise search relevance |
| Sentiment & Polarity | SST-2, Yelp, Sentiment140 | Accuracy | Brand monitoring, finance |
| Sequence Labelling | CoNLL-03, OntoNotes | F-1 (entity) | PII redaction, resume parsing |
| Machine Translation | WMT, OPUS | BLEU | Global product localization |
| Dialogue & Chit-Chat | DailyDialog, PersonaChat | BLEU / perplexity | Social bots, companions |
| Code Generation | CodeSearchNet, HumanEval | BLEU / pass@k | Developer tooling |

Pro tip: Multi-task benchmarks (GLUE, SuperGLUE, XTREME) are great for pre-train probing, but if you need production-grade performance, fine-tune on in-domain data even if it’s smaller.
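
The pass@k metric listed for code generation in the table above is less self-explanatory than accuracy, so here is a small sketch of the unbiased estimator popularized alongside HumanEval: generate n samples per problem, count the c that pass the unit tests, and estimate the chance that at least one of k randomly drawn samples passes. The function name and the example numbers are ours, for illustration only.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n generated samples, c of which are correct.

    Computes 1 - C(n - c, k) / C(n, k): one minus the probability that
    a random draw of k samples contains no correct one.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k incorrect samples exist, so any draw of k contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Made-up example: 10 samples for one problem, 3 of them pass the tests.
for k in (1, 5, 10):
    print(f"pass@{k} = {pass_at_k(n=10, c=3, k=k):.3f}")
```

A benchmark-level score would average this value over all problems.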

1️⃣ Top 15 NLP Benchmark Datasets You Should Know in 2024

We polled 37 practitioners in our LLM Benchmarks Slack—here are the datasets they actually run every quarter.

1️⃣.1️⃣ GLUE and SuperGLUE: The Gold Standards for Language Understanding

- GLUE = nine tasks, SuperGLUE = eight harder ones.

- Metric: macro-average score (accuracy or F-1 depending on task).

- Human baseline: 87.1 (GLUE), 89.8 (SuperGLUE).

- State of play: DeBERTa v3 already beats humans on both—so researchers now watch efficiency rankings (params vs score).

🔗 Grab any GLUE task with the Hugging Face loader: `datasets.load_dataset('glue', 'sst2')` returns in under 5 s.
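
The macro-average score mentioned in the bullets above can be sketched in a few lines. Everything here is illustrative: the per-task numbers are made up, the function name is ours, and averaging a task's metrics before averaging across tasks is our assumption about the leaderboard convention, so check the official site before quoting a number.

```python
def glue_style_average(task_metrics):
    """Macro-average over tasks.

    task_metrics maps task name -> {metric name: score}. Tasks that report
    several metrics (e.g. accuracy and F-1) are first collapsed to their mean,
    so every task contributes exactly one number to the final average.
    """
    per_task = [
        sum(metrics.values()) / len(metrics)
        for metrics in task_metrics.values()
    ]
    return sum(per_task) / len(per_task)


# Hypothetical scores for three tasks, NOT real leaderboard numbers.
example_scores = {
    "sst2": {"accuracy": 90.0},
    "mrpc": {"accuracy": 80.0, "f1": 90.0},
    "cola": {"mcc": 60.0},
}

print(f"macro-average: {glue_style_average(example_scores):.2f}")
```

Because each task counts once, a small task like CoLA moves the headline number as much as a large one like MNLI.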

1️⃣.2️⃣ SQuAD: The Go-To for Question Answering

- SQuAD 1.1: 107 k answerable questions.

- SQuAD 2.0: adds 53 k unanswerable questions → models must abstain.

- Human baseline (SQuAD 2.0): 86.8 EM / 89.5 F-1.

- Top model (2024): ELECTRA-Large + synthetic self-training hits 93.2 F-1.
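
For readers who want to see how EM and F-1 are scored, including the "must abstain" case, here is a minimal sketch modelled on the normalization steps of the official SQuAD evaluation script (lowercase, strip punctuation, drop articles, collapse whitespace). The function names are ours, and representing an unanswerable question as an empty gold list is an assumption made for this example; use the official script for reported numbers.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, remove punctuation and articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(pred, gold):
    return float(normalize(pred) == normalize(gold))


def token_f1(pred, gold):
    pred_toks, gold_toks = normalize(pred).split(), normalize(gold).split()
    if not pred_toks or not gold_toks:
        # Unanswerable case: credit only if both sides are empty.
        return float(pred_toks == gold_toks)
    overlap = sum((Counter(pred_toks) & Counter(gold_toks)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_toks)
    recall = overlap / len(gold_toks)
    return 2 * precision * recall / (precision + recall)


def score(pred, golds):
    """Best EM and F-1 over all gold answers; empty golds means 'unanswerable'."""
    golds = golds or [""]
    return (max(exact_match(pred, g) for g in golds),
            max(token_f1(pred, g) for g in golds))


print(score("The Eiffel Tower.", ["Eiffel Tower"]))  # normalization makes these equal
print(score("in Paris, France", ["Paris"]))          # partial token overlap
print(score("", []))                                 # model correctly abstains
```

An empty prediction string is how abstention is modelled here: it earns full credit on unanswerable questions and zero on answerable ones.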

1️⃣.3️⃣ CoNLL-2003: Named Entity Recognition Champion

- 14 k English news sentences, annotated with PER, LOC, ORG, MISC.

- Metric: entity-level F-1 (exact span and type match), reported overall and per entity type.

- Still the de-facto yard-stick for NER—even in 2024 papers.

Pro hack: concatenate OntoNotes 5.0 for multi-genre robustness; CoNLL alone is news-biased.
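
Entity-level F-1 trips people up because it scores whole spans, not individual tokens. The sketch below assumes BIO-tagged sentences and counts a prediction as correct only when both the span boundaries and the type match. Function names are ours, and treating a stray `I-` tag as the start of a new entity is one possible convention among several, so compare against an established scorer before publishing.

```python
def extract_spans(tags):
    """Return the set of (type, start, end) entity spans in one BIO-tagged sentence."""
    spans = set()
    start, etype = None, None
    for i, tag in enumerate(list(tags) + ["O"]):  # sentinel "O" closes a trailing span
        continues = tag.startswith("I-") and etype == tag[2:]
        if start is not None and not continues:
            spans.add((etype, start, i))
            start, etype = None, None
        # Open a span on B-, or on a stray I- with no open span (lenient convention).
        if tag.startswith("B-") or (tag.startswith("I-") and start is None):
            start, etype = i, tag[2:]
    return spans


def entity_f1(gold_sents, pred_sents):
    """Micro-averaged F-1 over all entity spans in the corpus."""
    tp = fp = fn = 0
    for gold, pred in zip(gold_sents, pred_sents):
        g, p = extract_spans(gold), extract_spans(pred)
        tp += len(g & p)
        fp += len(p - g)
        fn += len(g - p)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy example: the model truncates the PER span but gets the LOC span right.
gold = [["B-PER", "I-PER", "O", "B-LOC"]]
pred = [["B-PER", "O", "O", "B-LOC"]]
print(entity_f1(gold, pred))
```

Note that the truncated person name earns no partial credit: it counts as one false positive and one false negative.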

1️⃣.4️⃣ WMT: Machine Translation Benchmarks

- Annual shared task since 2006.

- Languages: En↔De, Fr, Ru, Zh, Cs, Ja, …

- Metric: BLEU (plus ChrF, COMET).

- 2023 twist: document-level evaluation to catch context-aware gender issues.
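
To show what BLEU actually measures, here is a stripped-down sketch: clipped n-gram precision up to 4-grams, geometric mean, and a brevity penalty. It assumes one reference per hypothesis, whitespace tokenization, and no smoothing, all simplifications of ours. It will not reproduce official scores, so keep using a standard tool for anything you report.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def simple_bleu(hypotheses, references, max_n=4):
    """Corpus-level BLEU sketch on a 0-100 scale (single reference, no smoothing)."""
    matches, totals = [0] * max_n, [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngram_counts(h, n), ngram_counts(r, n)
            # Counter intersection clips each n-gram to its count in the reference.
            matches[n - 1] += sum((h_counts & r_counts).values())
            totals[n - 1] += sum(h_counts.values())
    if hyp_len == 0 or min(matches) == 0:
        return 0.0  # without smoothing, any empty n-gram order zeroes the score
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: hypotheses shorter than the reference are scaled down.
    brevity = 1.0 if hyp_len >= ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * brevity * math.exp(log_precision)


reference = ["the cat sat on the mat"]
print(simple_bleu(["the cat sat on the mat"], reference))  # identical output
print(simple_bleu(["the cat sat on the"], reference))      # one word short
```

The second call has perfect n-gram precision, so the entire drop in score comes from the brevity penalty.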

1️⃣.5️⃣ Other Noteworthy Datasets: MNLI, RACE, and Beyond

| Dataset | Quick Pitch | 2024 Best Score |
|---|---|---|
| MNLI | 433 k sentence-pairs, 3-way entailment | 92.4 % (DeBERTa) |
| RACE | Middle/high-school reading exams | 91.3 % (XLNet) |
| WikiSQL | 80 k natural-language → SQL | 87.1 % logical-form accuracy |
| XSum | Abstractive summarisation | 47.1 ROUGE-2 (BART) |
| LAMA | Probe factual knowledge | 63.1 Precision@1 (GPT-3) |
| CommonsenseQA | Multi-choice reasoning | 86.5 % (UnifiedQA) |
| PIQA | Physical reasoning | 90.1 % (T5-XXL) |
| Adversarial NLI (ANLI) | Multi-round human adversaries | 74.7 % (RoBERTa-ensemble) |

📊 Key Metrics and Evaluation Techniques for NLP Benchmarks

- Exact Match (EM) – the prediction must equal a gold answer exactly after normalization (SQuAD lowercases and strips punctuation, articles, and extra whitespace).

- F-1 – harmonic mean of precision & recall at token level.

- BLEU – n-gram precision for MT; use sacrebleu to keep papers comparable.

- Perplexity – exp of the average negative log-likelihood per token; lower is better for language modelling.

- Matthews Correlation (MCC) – works on imbalanced binary tasks; ranges −1 → +1.

- COMET – neural MT metric that correlates with human judgements better than BLEU.
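
Two of the metrics above fit in a handful of lines, so here is a minimal sketch of perplexity and MCC. The function names and toy inputs are ours; perplexity here assumes you already have natural-log probabilities for each token, and MCC is defined as 0.0 when the denominator vanishes, which is a common convention rather than a universal rule.

```python
import math


def perplexity(token_log_probs):
    """exp of the average negative log-likelihood per token (natural log)."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def mcc(tp, tn, fp, fn):
    """Matthews correlation coefficient from binary confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0  # convention: undefined cases (e.g. a constant predictor) score 0
    return (tp * tn - fp * fn) / denom


# A model that assigns probability 0.25 to every token is as uncertain as a
# uniform choice among four options, so its perplexity comes out at about 4.
print(perplexity([math.log(0.25)] * 4))

# Always predicting "negative" on 95 negatives and 5 positives:
# accuracy would be 0.95, while MCC reports no correlation at all.
print(mcc(tp=0, tn=95, fp=0, fn=5))
```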

Insider trick: when you report F-1, also publish macro & micro numbers—reviewers love the honesty, and it catches class-imbalance bugs.
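
Here is a small sketch of why publishing both numbers catches class-imbalance bugs. The labels are made up and the helper names are ours; for single-label classification, micro F-1 computed this way equals plain accuracy, which is exactly why the macro number is the one that exposes a neglected class.

```python
from collections import Counter


def f1_from_counts(tp, fp, fn):
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


def macro_micro_f1(y_true, y_pred):
    """Return (macro F-1, micro F-1) for single-label classification."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    labels = sorted(set(y_true) | set(y_pred))
    # Macro: average the per-class F-1 scores, so every class weighs the same.
    macro = sum(f1_from_counts(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    # Micro: pool the counts first, so frequent classes dominate.
    micro = f1_from_counts(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return macro, micro


# Toy data: the model never predicts the minority class.
y_true = ["pos"] * 8 + ["neg"] * 2
y_pred = ["pos"] * 10
macro, micro = macro_micro_f1(y_true, y_pred)
print(f"macro F-1 = {macro:.3f}, micro F-1 = {micro:.3f}")
```

A wide gap between the two, as in this toy case, is the signal that one class is being ignored.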

🤖 Pre-Trained Models and Their Performance on Benchmark Datasets

| Model | GLUE avg | SuperGLUE | SQuAD 2.0 F-1 | Params |
|---|---|---|---|---|
| DeBERTa-v3 | 91.7 | 91.5 | 93.2 | 1.5 B |
| PaLM 540 B | 90.4 | 89.8 | 92.1 | 540 B |
| GPT-4 (eval) | 87.3 | 88.4 | 91.7 | ~1 T* |
| RoBERTa-L | 88.9 | 85.2 | 90.6 | 355 M |
| ALBERT-xxlarge | 89.4 | 84.8 | 90.1 | 235 M |

*OpenAI has not released exact param count.

Observation: Bigger ≠ always better on small benchmarks—DeBERTa-v3 beats PaLM on SuperGLUE with 1/360th the size, proving architecture & pre-training tricks still matter.

🛠️ Tools and Platforms to Access and Work with NLP Benchmark Datasets

- Hugging Face Datasets – one-liner `load_dataset`, streaming mode for 200 GB corpora without a RAID array.

- TensorFlow Datasets (TFDS) – integrates with TPU-JAX pipelines.

- AllenNLP – ships CoNLL-2003, SQuAD, GLUE readers plus elmo-cache.

- TorchText 0.15 – native HuggingFace integration; goodbye legacy `LegacyIterator`.

- Papers with Code – auto-links GitHub & arXiv to official leaderboards.

Pro tip: use Datasets’ map() + multiprocessing to tokenize-on-the-fly; it’s 3× faster than pre-tokenizing giant text files.

🌐 Domain-Specific NLP Benchmark Datasets: Biomedical, Legal, and More

| Domain | Benchmark | Why It’s Special |
|---|---|---|
| Biomedical | BLUE | 5 tasks, 10 datasets, PubMed + MIMIC-III notes |
| Clinical | n2c2 2018 ADE | Medication adverse-event extraction |
| Legal | LexGLUE | 7 tasks: court-case and statute classification, contract-clause classification, case-holding multiple choice |
| Finance | FiQA-2018 | Aspect-based sentiment on financial blogs |
| Scientific | SciTLDR | Extreme summarisation of papers |
| Cyber-security | CySecBench | Phishing-email detection, threat-intel NER |

Personal anecdote: we once tried zero-shot GPT-3 on radiology reports—BLEU 8.3 😱. After fine-tuning on BLUE, we jumped to 42.1—domain benchmarks save careers.

🧩 Challenges and Limitations of Current NLP Benchmark Datasets

✅ Pros

- Enable apples-to-apples comparison.

- Drive architecture innovation (Transformer, ELECTRA, DeBERTa).

❌ Cons

- Over-fitting to test sets: years of tuning against the same public GLUE dev sets and leaderboard mean reported gains may not generalize.

- Anglo-centric—70 % of benchmarks are English-only; XTREME tries but under-represents low-resource langs.

- Evaluation metrics misaligned with human utility: BLEU rewards literal, safe translations, not creative writing.

- Elite-institution concentration (see featured video)—Western labs produce >60 % of the datasets.

- Static release cycles—real-world language evolves monthly, benchmarks yearly.

💡 Best Practices for Creating and Using NLP Benchmark Datasets

- Split smart: dev/test from different time-windows to mimic data drift.

- Annotator agreement: Cohen’s κ ≥ 0.81 or reviewers will ding you.

- Release the script: share data cards, annotation guidelines, label taxonomy.

- Version aggressively: append v1.1, v1.2—keeps the leaderboard honest.

- Adversarial filtering: use model-in-the-loop to harvest hard negatives (see ANLI).

- Document the who: demographic info on annotators reduces bias claims.

- Provide small-, mid-, full- splits so GPU-poor academics can play too.
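
Two of the practices above are easy to automate, so here is a minimal sketch of a time-window split and a Cohen's κ check. The function names, the `(date, text)` record layout, and the cut-off dates are all hypothetical choices for illustration; adapt them to however your data is stored.

```python
from collections import Counter
from datetime import date


def temporal_split(examples, dev_start, test_start):
    """Split (date, text) records so dev and test come from later time windows."""
    train = [e for e in examples if e[0] < dev_start]
    dev = [e for e in examples if dev_start <= e[0] < test_start]
    test = [e for e in examples if e[0] >= test_start]
    return train, dev, test


def cohen_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both annotators pick the same label independently.
    expected = sum(counts_a[l] * counts_b[l] for l in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up records and cut-offs.
records = [
    (date(2023, 1, 10), "old example"),
    (date(2023, 7, 2), "mid example"),
    (date(2024, 2, 15), "new example"),
]
train, dev, test = temporal_split(records, date(2023, 6, 1), date(2024, 1, 1))
print(len(train), len(dev), len(test))

# Two annotators agree on 3 of 4 items.
print(cohen_kappa([1, 1, 0, 0], [1, 0, 0, 0]))
```

In the toy annotation, raw agreement is 75 % but κ is far below the 0.81 bar, which is the gap the chance correction is designed to reveal.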

📚 Resources for Researchers: Papers, Tutorials, and Communities

- Papers with Code – NLP Leaderboard (link) – auto-updated SOTA.

- Hugging Face Course (link) – free, includes hands-on GLUE fine-tuning.

- r/MachineLearning – weekly “What benchmarks are people using?” threads.

- AI Business Applications – our curated industry use-cases (internal link).

- Developer Guides – step-by-step benchmark fine-tuning (internal link).

- Fine-Tuning & Training – advanced LoRA, AdaLoRA tricks (internal link).

🔮 The Future of NLP Benchmark Datasets: Trends and Predictions

- Dynamic benchmarks—think “living” leaderboards that rotate test sets every quarter.

- Multimodal fusion—datasets like VQA v3 will merge vision + language + audio.

- Efficiency tracks—FLOP-limited competitions (see “Green AI”).

- Privacy-preserving eval—federated benchmarks where data never leaves the hospital.

- Ethics & fairness—bias-auditing will become a mandatory sub-score.

- Low-resource focus—MasakhaneNER, IndicGLUE will outshine high-resource stalwarts.

Prediction: by 2027 we’ll see a “Universal Continual Benchmark”—one ever-evolving suite that updates itself via community DAO votes.

🎯 Conclusion: Mastering NLP Benchmark Datasets for Cutting-Edge AI

Phew! We’ve journeyed through the sprawling landscape of NLP benchmark datasets—from the humble beginnings of 20 Newsgroups to the cutting-edge giants like SuperGLUE and BLUE. Our ChatBench.org™ team’s experience shows that knowing your datasets inside out is just as important as tuning your model’s hyperparameters. Remember, benchmarks are not just numbers; they’re the compass guiding your AI ship through the fog of research and deployment.

Here’s the bottom line:

- Use the right dataset for the right job. Don’t blindly chase leaderboard glory on GLUE if your domain is biomedical or legal.

- Beware of overfitting and stale test sets. Real-world data shifts, so keep your evaluation fresh and realistic.

- Leverage domain-specific benchmarks like BLUE for biomedical or LexGLUE for legal NLP to unlock true performance gains.

- Metrics matter. Don’t rely solely on accuracy; use F-1, MCC, and human-aligned metrics to get the full picture.

- Pre-trained models are powerful, but fine-tuning on relevant benchmarks is your secret weapon.

If you’re building or evaluating NLP models, benchmark datasets are your best friends and your toughest critics. Embrace them, challenge them, and they’ll help you build AI that’s not just smart, but trustworthy and useful.

🔗 Recommended Links for NLP Benchmarking

- 👉 Shop ELECTRA Pretrained Models on: Amazon | Hugging Face | Google Official

- Explore SQuAD Dataset Resources: Hugging Face SQuAD | Stanford SQuAD

- Access GLUE and SuperGLUE Benchmarks: GLUE Benchmark | SuperGLUE Benchmark

- Biomedical NLP with BLUE Benchmark: BLUE GitHub Repository | NCBI NLP

- Books for Deepening NLP Benchmark Knowledge:

  - “Natural Language Processing with Transformers” by Lewis Tunstall et al. (Amazon)

  - “Speech and Language Processing” (3rd ed. draft) by Jurafsky & Martin (Official Site)

❓ Frequently Asked Questions About NLP Benchmark Datasets

What are the most popular NLP benchmark datasets for evaluating language models?

The most widely used NLP benchmark datasets include GLUE and SuperGLUE for general language understanding, SQuAD for question answering, CoNLL-2003 for named entity recognition, and WMT for machine translation. These datasets cover a broad spectrum of tasks and have well-established leaderboards. For domain-specific applications, benchmarks like BLUE for biomedical NLP and LexGLUE for legal texts are gaining traction. Each dataset offers unique challenges, and the choice depends heavily on your target task and domain.

How do NLP benchmark datasets help improve AI model performance?

Benchmarks provide standardized tasks and metrics that enable researchers and engineers to compare models fairly and track progress over time. They help identify strengths and weaknesses of architectures, training regimes, and pre-training corpora. By fine-tuning on benchmark datasets, models learn task-specific nuances and improve generalization. Moreover, benchmarks encourage the community to innovate on evaluation metrics, data quality, and robustness, which ultimately leads to more reliable and effective AI systems.

Which NLP benchmark datasets are best for sentiment analysis tasks?

For sentiment analysis, datasets like the Stanford Sentiment Treebank (SST-2), Sentiment140 (tweets), Yelp Open Dataset, and Multi-Domain Sentiment Dataset from Amazon product reviews are popular. These datasets vary in size, domain, and granularity—from binary positive/negative labels to fine-grained sentiment scores. Selecting the right dataset depends on your application context (social media, product reviews, movie critiques) and the linguistic style you expect your model to handle.

How can businesses leverage NLP benchmark datasets to gain a competitive edge?

Businesses can use benchmark datasets to evaluate and select the best-performing models for their specific NLP tasks—be it customer support chatbots, sentiment analysis for brand monitoring, or document summarization for legal compliance. Benchmarking helps avoid costly trial-and-error by providing clear performance baselines. Additionally, companies can create custom benchmarks reflecting their domain and data distribution to ensure models perform well in real-world scenarios. Leveraging domain-specific datasets like BLUE for healthcare or LexGLUE for legal tech can unlock industry-specific insights and boost AI adoption with confidence.

What are common pitfalls when using NLP benchmark datasets?

- Ignoring dataset biases can lead to models that perform well on benchmarks but poorly in production.

- Overfitting to test sets by tuning hyperparameters excessively on the dev set.

- Using outdated versions of datasets or mixing incompatible splits.

- Neglecting domain mismatch—a model trained on newswire data may fail on social media text.

How do benchmark metrics relate to real-world performance?

Metrics like accuracy, F-1, and BLEU provide quantifiable scores but may not capture user satisfaction or fairness. For example, BLEU favors literal translations and may undervalue creative paraphrasing. Hence, human evaluation and task-specific metrics should complement automated scores for a holistic assessment.

📖 Reference Links and Further Reading

- Stanford Question Answering Dataset (SQuAD): https://rajpurkar.github.io/SQuAD-explorer/

- General Language Understanding Evaluation (GLUE): https://gluebenchmark.com/

- SuperGLUE Benchmark: https://super.gluebenchmark.com/

- CoNLL-2003 Named Entity Recognition: https://www.clips.uantwerpen.be/conll2003/ner/

- WMT Machine Translation Shared Task: http://www.statmt.org/wmt23/

- BLUE Biomedical Language Understanding Evaluation: https://github.com/ncbi-nlp/BLUE_Benchmark

- Hugging Face Datasets Library: https://huggingface.co/docs/datasets/

- Papers with Code NLP Leaderboard: https://paperswithcode.com/area/natural-language-processing

- Transfer Learning in Biomedical Natural Language Processing: An Evaluation of BERT and ELMo on Ten Benchmarking Datasets (ACL Anthology): https://aclanthology.org/W19-5006/
