
12 Essential Key Performance Indicators for AI Success in 2026 🚀

Artificial Intelligence is no longer just a futuristic concept—it’s the engine driving innovation and competitive advantage across industries. But how do you really know if your AI initiatives are working? Spoiler alert: it’s all about the right Key Performance Indicators (KPIs). In this comprehensive guide, we unveil 12 essential KPIs that separate AI winners from the also-rans. From technical metrics like accuracy and model drift to business-focused indicators such as ROI and customer satisfaction, we cover every angle you need to master AI performance measurement in 2026 and beyond.

Did you know that, according to MIT Sloan research, executives who use AI to prioritize KPIs are 4.3 times more likely to see improved cross-functional alignment? Later in this article, we’ll share real-world case studies and expert tips from ChatBench.org™ and Acacia Advisors to help you quantify AI’s ROI like a pro and avoid the common pitfalls that trip up many AI projects. Ready to turn your AI insights into unstoppable success? Let’s dive in!

Key Takeaways

- AI KPIs are your essential dashboard—they measure not just technical accuracy but also business impact, user engagement, and ethical compliance.

- 12 KPIs to track include accuracy, recall, latency, model drift, ROI, and customer satisfaction—each providing unique insights into AI health and value.

- Quantifying AI ROI requires linking cost savings and revenue gains directly to AI initiatives, backed by clear, measurable business objectives.

- Continuous monitoring and MLOps best practices are critical to detect model drift, maintain performance, and ensure ethical AI deployment.

- Partnering with experts like Acacia Advisors can accelerate your AI measurement maturity and help tailor KPIs to your unique business context.

Ready to master these KPIs and unlock your AI’s full potential? Keep reading to discover actionable strategies, tools, and case studies that will transform how you measure AI success in 2026!

Table of Contents

- ⚡️ Quick Tips and Facts About AI KPIs

- 🤖 The Evolution of AI Performance Measurement: A Historical Perspective

- 🔍 Understanding Key Performance Indicators (KPIs) for Artificial Intelligence

- 📊 12 Essential KPIs to Track for AI Project Success

- 1. Accuracy and Precision Metrics

- 2. Recall and F1 Score

- 3. Model Latency and Response Time

- 4. Throughput and Scalability

- 5. Data Quality and Completeness

- 6. User Engagement and Adoption Rates

- 7. Business Impact and ROI

- 8. Model Drift and Stability

- 9. Explainability and Transparency Scores

- 10. Cost Efficiency and Resource Utilization

- 11. Compliance and Ethical Considerations

- 12. Customer Satisfaction and Feedback

- 💡 How to Quantify AI’s Return on Investment (ROI) Like a Pro

- ⚠️ Common Challenges and Pitfalls in Measuring AI Performance

- 🔧 Tools and Platforms to Monitor AI KPIs Effectively

- 📈 Case Studies: Real-World Success Stories in AI KPI Measurement

- 🚀 Best Practices for Continuous AI Performance Improvement

- 🤝 Partnering with Experts: How Acacia Advisors Helps You Master AI Metrics

- 🌟 Kickstart Your AI Transformation Journey Today

- 🔗 Quick Links to Essential AI KPI Resources

- 🛠️ AI Solutions Tailored for KPI Optimization

- 👥 About ChatBench.org™: Your AI Performance Gurus

- 📞 Contact Us: Let’s Solve Your AI KPI Challenges Together

- 🏁 Conclusion: Mastering AI KPIs for Unstoppable Success

- 🔍 Recommended Links for Deep Dives on AI KPIs

- ❓ Frequently Asked Questions About AI Performance Indicators

- 📚 Reference Links and Further Reading

⚡️ Quick Tips and Facts About AI KPIs

Welcome, fellow AI enthusiasts and business leaders! At ChatBench.org™, we’ve spent countless hours in the trenches, wrestling with data, deploying models, and, most importantly, measuring what truly matters. When it comes to Artificial Intelligence, simply deploying a model isn’t enough. You need to know if it’s actually working, delivering value, and not just consuming resources. That’s where Key Performance Indicators (KPIs) for AI come into play. Think of them as your AI’s report card, but way more dynamic and insightful!

Here are some rapid-fire facts and tips we’ve gathered from our journey in turning AI insight into competitive edge:

- AI-enriched KPIs (Smart KPIs) are a game-changer. According to MIT Sloan, executives using AI to prioritize KPIs are 4.3 times more likely to see improved cross-functional alignment. This isn’t just about measuring AI; it’s about AI helping you measure everything better!

- Not all data is meaningful data. As Acacia Advisors rightly points out, the key is tracking meaningful data aligned with project objectives and business goals, not just all available data. Don’t drown in data; surf on insights!

- Accuracy isn’t the only metric. While model accuracy is vital, it’s just one piece of the puzzle. We also need to consider latency, throughput, user engagement, and crucially, business ROI.

- AI can improve KPI accuracy by up to 30-50%. The Sloan Review highlights that AI-driven analytics can significantly enhance the precision of your KPI measurements, making your insights sharper and more reliable.

- Real-time monitoring is key. Traditional KPIs often lag. AI enables dynamic, real-time performance tracking, potentially reducing decision-making time by approximately 40%. Imagine the agility!

- Model drift is a silent killer. Your AI model might perform brilliantly today, but data distributions change. Continuously monitoring for model drift is essential to maintain performance over time.

- Ethical AI isn’t just a buzzword; it’s a KPI. Fairness, transparency, and accountability are becoming non-negotiable metrics for responsible AI deployment.

- Start with the “Why.” Before you even think about how to measure, ask why you’re deploying AI. What business problem are you solving? Your KPIs should directly reflect that.

🤖 The Evolution of AI Performance Measurement: A Historical Perspective

Remember the early days of AI? It felt like a wild west, didn’t it? Back then, “performance” often meant “does it work at all?” or “can it beat a human at chess?” (Deep Blue, anyone? You can read more about its historic match against Garry Kasparov on IBM’s website: https://www.ibm.com/history). Our focus was primarily on technical benchmarks – accuracy, error rates, computational speed. We were thrilled if a machine learning model could classify images with decent precision or predict a simple outcome.

As AI matured, moving from research labs into real-world business applications, the conversation shifted dramatically. It wasn’t enough for a model to be technically sound; it had to deliver tangible business value. This meant expanding our definition of “performance.” Suddenly, we weren’t just asking “Is it accurate?” but “Is it making us money? Is it saving us time? Is it delighting our customers?”

This evolution mirrors the broader journey of technology adoption in business. Initially, the focus is on functionality; then it moves to efficiency, and finally to strategic impact and competitive advantage. For AI, this meant a pivot from purely academic metrics to a blend of technical, operational, and financial KPIs. We started seeing the need to link AI’s output directly to the bottom line, to customer satisfaction scores, and to the organization’s overall strategic goals. This shift is crucial for understanding the key benchmarks for evaluating AI model performance, a topic we cover in depth on our site: https://www.chatbench.org/what-are-the-key-benchmarks-for-evaluating-ai-model-performance/.

Today, with the rise of complex AI systems, large language models, and ethical considerations, the measurement landscape is even more intricate. We’re not just looking at accuracy; we’re scrutinizing fairness, explainability, and the environmental impact of training massive models. It’s a testament to how far we’ve come, and a clear indicator that AI performance measurement is a constantly evolving field.

🔍 Understanding Key Performance Indicators (KPIs) for Artificial Intelligence

So, what exactly are KPIs for AI, and why should you care? Simply put, Key Performance Indicators (KPIs) are quantifiable measures that help you assess how effectively your AI initiatives are achieving their objectives. They’re not just arbitrary numbers; they’re the pulse of your AI project, telling you if it’s healthy, thriving, or perhaps in need of some serious intervention.

Think of it this way: you wouldn’t drive a car without a speedometer, fuel gauge, or warning lights, right? Your AI projects are complex machines, and KPIs are their dashboard. Without them, you’re driving blind, hoping for the best, and probably heading for a ditch.

As our friends at Acacia Advisors emphasize, “Meticulous measurement of AI’s impact through well-defined KPIs and metrics is indispensable for maximizing technology investments.” We couldn’t agree more! KPIs provide:

- Clarity: They define what “success” looks like for your AI.

- Accountability: They set benchmarks and allow you to track progress against them.

- Guidance: They highlight areas for improvement and inform strategic decisions.

- ROI Justification: They help you prove the value of your AI investments to stakeholders.

But here’s the kicker: not all metrics are KPIs. A metric is just a measurement (e.g., “model accuracy is 92%”). A KPI is a metric tied to a strategic objective (e.g., “improve customer service response time by 20% using our new AI chatbot, achieving 92% accuracy in query resolution”). See the difference? It’s about purpose and impact.
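To make the metric-versus-KPI distinction concrete, here is a minimal Python sketch. The `KPI` class, its field names, and the baseline and current values are hypothetical, loosely modeled on the chatbot example above; they don’t come from any particular KPI tool. The point is that a KPI bundles a raw measurement with the objective and target that give it meaning.

```python
from dataclasses import dataclass


@dataclass
class KPI:
    """A raw metric plus the objective and target that make it a KPI."""
    objective: str            # business goal the metric serves
    metric_name: str          # what is measured
    baseline: float           # value before the AI initiative
    target_change_pct: float  # required relative change, e.g. -20.0 for "cut by 20%"
    current: float            # latest measured value

    def change_pct(self) -> float:
        # Relative change from the baseline, in percent
        return (self.current - self.baseline) / self.baseline * 100

    def is_met(self) -> bool:
        # Negative targets mean "reduce by at least"; positive mean "increase by at least"
        if self.target_change_pct < 0:
            return self.change_pct() <= self.target_change_pct
        return self.change_pct() >= self.target_change_pct


# Hypothetical numbers for a "cut response time by 20%" objective
response_time = KPI(
    objective="Faster customer service with the AI chatbot",
    metric_name="average response time (minutes)",
    baseline=10.0,
    target_change_pct=-20.0,
    current=7.5,
)
print(f"{response_time.change_pct():.1f}% change, target met: {response_time.is_met()}")
# -25.0% change, target met: True
```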

The Dual Nature of AI KPIs: Measuring AI and Measuring with AI

This is where things get really interesting, and where the insights from MIT Sloan become particularly relevant. We often talk about KPIs for AI – how well is our AI model performing? But there’s a powerful secondary layer: using AI to build better KPIs for your entire organization.

- KPIs for AI: These are the metrics we use to evaluate the AI system itself – its accuracy, speed, efficiency, and direct impact on a specific task.

- KPIs with AI (Smart KPIs): This is where AI becomes a tool to enhance your overall business intelligence. As the MIT Sloan article “Build Better KPIs with Artificial Intelligence” explains, AI-enriched KPIs can provide predictive insights and situational awareness. Instead of just looking at past sales, AI can help you predict future sales based on complex patterns, making your KPIs proactive rather than reactive.

This distinction is vital. We, at ChatBench.org™, believe that truly leveraging AI means not just deploying it, but also allowing it to transform how you measure and manage your entire business. It’s about turning static metrics into dynamic, intelligent tools that drive strategic actions.

📊 12 Essential KPIs to Track for AI Project Success

Alright, let’s get down to brass tacks. You’ve got an AI project, you’re excited, but how do you actually know if it’s a success? We’ve seen countless projects falter because they didn’t define their success metrics upfront. Don’t let that be you! Based on our extensive experience and insights from industry leaders, here are 12 essential KPIs that every AI project manager and machine learning engineer should be tracking. This list goes beyond the basic “accuracy” and dives into the real-world impact and sustainability of your AI.

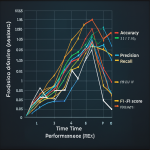

1. Accuracy and Precision Metrics

This is often the first thing people think of, and for good reason! Accuracy measures the proportion of correct predictions out of the total predictions made. Precision (for classification tasks) measures the proportion of true positive predictions among all positive predictions.

- Why it matters: It tells you how often your model is right. For a fraud detection system, high precision means fewer legitimate transactions are flagged incorrectly, saving customer frustration.

- Our take: While crucial, don’t get tunnel-visioned here. A model can be highly accurate but useless if it’s too slow or biased.

- Example: A sentiment analysis model correctly identifies 90% of positive and negative customer reviews.

- ✅ Best Practice: Always consider the context. In medical diagnosis, for example, you might prioritize precision (avoiding false positives) over recall, or vice versa, depending on the condition and the cost of each type of error.

- ❌ Common Pitfall: Over-optimizing for accuracy on imbalanced datasets, leading to poor performance on the minority class.
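The imbalanced-data pitfall is easy to demonstrate. The minimal sketch below computes accuracy and precision from a confusion matrix in plain Python; the fraud labels and the "never flags anything" model are made up for illustration. In practice you would likely use a library such as scikit-learn, but the arithmetic is the same.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, TN, FN) for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    return tp, fp, tn, fn


def accuracy(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tp + fp + tn + fn)


def precision(y_true, y_pred):
    tp, fp, _, _ = confusion_counts(y_true, y_pred)
    # Undefined when nothing is predicted positive; this sketch reports 0.0
    return tp / (tp + fp) if (tp + fp) else 0.0


# Imbalanced toy data: 1 fraudulent transaction (label 1) among 100
y_true = [1] + [0] * 99
never_flags = [0] * 100  # a "model" that always predicts "legitimate"

print(accuracy(y_true, never_flags))   # 0.99
print(precision(y_true, never_flags))  # 0.0
```

A 99% accurate model that never catches a single fraud case is exactly why accuracy should not be read on its own.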

2. Recall and F1 Score

Often paired with precision, Recall (also known as sensitivity) measures the proportion of true positive predictions among all actual positive instances. The F1 Score is the harmonic mean of precision and recall, providing a single metric that balances both.

- Why it matters: For tasks like identifying rare diseases or detecting critical system failures, you want high recall – you don’t want to miss any actual positives. The F1 score gives you a balanced view when both precision and recall are important.

- Our take: These are indispensable for classification problems, especially when false negatives or false positives have different costs.

- Example: A spam filter with high recall ensures almost no spam emails reach your inbox, even if it occasionally flags a legitimate email (lower precision).

- ✅ Best Practice: Understand the business cost of false positives vs. false negatives to decide which metric to prioritize.

- Reference: For a deeper dive into these metrics, check out this article on machine learning evaluation metrics: https://towardsdatascience.com/understanding-precision-recall-and-f1-score-in-machine-learning-b03a45c091b
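Here is a small sketch of recall and F1 computed from raw counts, using hypothetical spam-filter results. Note that F1 simplifies to 2·TP / (2·TP + FP + FN), which is handy for sanity-checking dashboards.

```python
def precision_from_counts(tp: int, fp: int) -> float:
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall_from_counts(tp: int, fn: int) -> float:
    # Share of actual positives the model caught (sensitivity)
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1(p: float, r: float) -> float:
    # Harmonic mean: drops sharply if either precision or recall is low
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Hypothetical spam-filter results on a labeled test set
tp, fp, fn = 95, 15, 5  # spam caught, legitimate mail flagged, spam missed
p, r = precision_from_counts(tp, fp), recall_from_counts(tp, fn)
print(f"precision={p:.3f} recall={r:.3f} f1={f1(p, r):.3f}")
# precision=0.864 recall=0.950 f1=0.905
```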

3. Model Latency and Response Time

This KPI measures the time it takes for your AI model to process an input and generate an output.

- Why it matters: In real-time applications (think self-driving cars, live chatbots, or high-frequency trading), milliseconds matter. A highly accurate model is useless if it takes too long to respond.

- Our take: This is a critical operational efficiency metric. We’ve seen brilliant models shelved because their latency made them impractical for production.

- Example: A customer service chatbot responds to user queries within 500ms, ensuring a smooth user experience.

- ✅ Best Practice: Set clear latency targets based on user experience requirements and system integration needs.

- ❌ Common Pitfall: Focusing solely on offline accuracy benchmarks without considering real-time inference speed.
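A simple way to check a latency target is to time individual requests and look at tail percentiles (p95, p99) rather than the average, because occasional slow responses are what users notice. The sketch below assumes a hypothetical `fake_model` in place of a real inference call and uses the nearest-rank percentile definition; the 500 ms target comes from the chatbot example above.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def measure_latency_ms(predict, inputs):
    """Call `predict` once per input and record wall-clock latency in ms."""
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        predict(x)
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies


def fake_model(x):
    # Hypothetical stand-in for a real inference call
    return sum(i * i for i in range(1_000 + x))


latencies = measure_latency_ms(fake_model, range(200))
p50, p95, p99 = (percentile(latencies, q) for q in (50, 95, 99))
TARGET_MS = 500  # latency target from the chatbot example
print(f"p50={p50:.2f} ms  p95={p95:.2f} ms  p99={p99:.2f} ms  p95 on target: {p95 <= TARGET_MS}")
```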

4. Throughput and Scalability

Throughput measures the number of requests or transactions your AI system can handle per unit of time. Scalability refers to its ability to handle increasing workloads by adding resources.

- Why it matters: As your business grows, your AI needs to keep up. A system that can handle 100 requests per second won’t cut it if you suddenly need to process 10,000. This is a core AI Infrastructure concern. Learn more about it here: https://www.chatbench.org/category/ai-infrastructure/

- Our take: This is where cloud providers like AWS, Google Cloud, and Microsoft Azure shine. Their managed AI services are built for scale.

- Example: An AI-powered recommendation engine processes 5,000 user requests per second during peak shopping hours.

- ✅ Best Practice: Design your AI architecture with scalability in mind from day one, leveraging containerization (Docker), container orchestration (Kubernetes), and serverless functions.

- 👉 Shop Cloud AI Services:

- AWS SageMaker: Amazon.com AWS SageMaker

- Google Cloud AI Platform: Google Cloud AI Platform

- Azure Machine Learning: Microsoft Azure Machine Learning
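To turn throughput into a capacity plan, you can measure per-replica throughput in a load test and estimate how many replicas a target load needs. This is a back-of-the-envelope sketch: it assumes load is balanced evenly and that capacity scales linearly with replica count, which real systems only approximate. The 70% headroom and the load-test numbers are hypothetical.

```python
import math


def measured_throughput(requests_completed: int, seconds: float) -> float:
    """Requests per second observed in a load test."""
    return requests_completed / seconds


def replicas_needed(target_rps: float, per_replica_rps: float, headroom: float = 0.7) -> int:
    """Replicas needed so each runs at no more than `headroom` of its measured capacity.
    Assumes even load balancing and linear scaling, which real systems only approximate."""
    return math.ceil(target_rps / (per_replica_rps * headroom))


# Hypothetical load test: one replica completed 6,000 requests in 60 seconds
per_replica = measured_throughput(6_000, 60.0)  # 100.0 req/s
print(replicas_needed(100, per_replica))     # 2
print(replicas_needed(10_000, per_replica))  # 143
```

Even a crude estimate like this shows why the jump from 100 to 10,000 requests per second is an architecture question, not a tuning tweak.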

5. Data Quality and Completeness

This KPI assesses the accuracy, consistency, and comprehensiveness of the data used to train and operate your AI models.

- Why it matters: Garbage in, garbage out! Poor data quality is the silent killer of AI projects. It leads to biased models, inaccurate predictions, and wasted resources.

- Our take: This is often overlooked but is foundational. We’ve spent countless hours cleaning and validating data, and it always pays off.

- Example: A customer segmentation model relies on complete and accurate demographic and purchase history data for each customer.

- ✅ Best Practice: Implement robust data governance strategies, data validation pipelines, and regular data audits.

- ❌ Common Pitfall: Assuming your data is “good enough” without rigorous testing and profiling.
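Completeness and validity are straightforward to quantify once you decide what "missing" and "invalid" mean. The sketch below uses a hypothetical customer schema and illustrative rules (age between 0 and 120, non-negative spend); a production pipeline would typically run checks like these automatically on every data load.

```python
REQUIRED_FIELDS = ("customer_id", "age", "country", "total_spend")  # hypothetical schema


def completeness(records, fields=REQUIRED_FIELDS):
    """Share of records with a non-missing value per field (None or '' count as missing)."""
    n = len(records)
    return {f: sum(r.get(f) not in (None, "") for r in records) / n for f in fields}


def validity_issues(records):
    """Rule-based checks; these rules are illustrative assumptions."""
    issues = []
    for i, r in enumerate(records):
        age = r.get("age")
        if age is not None and not (0 < age < 120):
            issues.append((i, "age out of range"))
        spend = r.get("total_spend")
        if spend is not None and spend < 0:
            issues.append((i, "negative spend"))
    return issues


customers = [
    {"customer_id": "c1", "age": 34, "country": "DE", "total_spend": 420.0},
    {"customer_id": "c2", "age": None, "country": "FR", "total_spend": 85.5},
    {"customer_id": "c3", "age": 210, "country": "", "total_spend": -10.0},
    {"customer_id": "c4", "age": 51, "country": "US", "total_spend": 130.0},
]
print(completeness(customers))
# {'customer_id': 1.0, 'age': 0.75, 'country': 0.75, 'total_spend': 1.0}
print(validity_issues(customers))
# [(2, 'age out of range'), (2, 'negative spend')]
```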

6. User Engagement and Adoption Rates

These KPIs measure how frequently and intensely users interact with your AI-powered product or feature, and how many users actually start using it.

- Why it matters: As the product-metrics YouTube video featured with this article advises, “Measure user engagement through metrics such as time spent using the product.” A technically perfect AI is useless if no one uses it. High engagement and adoption indicate that your AI is solving a real problem and providing value to its end users.

- Our take: This is where the rubber meets the road. We’ve seen incredible AI models fail because they weren’t designed with the user in mind.

- Example: A new AI-powered search feature sees a 30% increase in daily active users and an average session duration of 5 minutes.

- ✅ Best Practice: Integrate user feedback loops (surveys, A/B testing) directly into your AI development cycle.

- ❌ Common Pitfall: Building AI in a vacuum without considering the user experience or conducting user research.
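Adoption and engagement boil down to a few ratios. This sketch uses hypothetical figures for an AI-powered search feature: adoption as the share of eligible users who have tried it, stickiness as the common DAU/MAU ratio, and the period-over-period change in daily active users.

```python
def adoption_rate(feature_users: int, eligible_users: int) -> float:
    """Share of eligible users who have used the AI feature at least once."""
    return feature_users / eligible_users


def stickiness(dau: float, mau: float) -> float:
    """DAU/MAU: roughly how many monthly users come back on a typical day."""
    return dau / mau


def pct_change(before: float, after: float) -> float:
    return (after - before) / before * 100


# Hypothetical figures for an AI-powered search feature
print(f"adoption:   {adoption_rate(12_000, 40_000):.0%}")  # 30%
print(f"stickiness: {stickiness(3_900, 15_000):.0%}")      # 26%
print(f"DAU change: {pct_change(3_000, 3_900):+.0f}%")     # +30%
```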

7. Business Impact and ROI

This is the ultimate KPI! It measures the direct financial and strategic benefits your AI initiative brings to the business. This includes cost savings, revenue generation, increased efficiency, and competitive advantage.

- Why it matters: As Acacia Advisors notes, measurement should “determine if AI meets goals like efficiency, sales, or customer satisfaction.” This is how you justify your investment and secure future funding. Without a clear ROI, your AI project is just an expensive experiment.

- Our take: This is where our expertise in “Turning AI Insight into Competitive Edge” truly comes into play. We help clients connect the dots between model performance and financial outcomes.

- Example: An AI-driven inventory management system reduces holding costs by 15% and increases sales by 5% due to optimized stock levels.

- ✅ Best Practice: Define clear business objectives and quantifiable financial targets before starting your AI project.

- Reference: We’ll dive deeper into quantifying ROI in the next section!

8. Model Drift and Stability

Model drift is the gradual degradation of a model’s performance in production, typically because the input data distribution shifts (data drift) or the relationship between the inputs and the target variable changes (concept drift). Stability measures how consistently your model performs over time in a production environment.

- Why it matters: The world isn’t static! Customer behavior changes, market conditions shift, and new data patterns emerge. A model trained on old data will eventually become obsolete.

- Our take: This is a critical MLOps challenge. We’ve seen models go from hero to zero in months if not properly monitored. Continuous monitoring and retraining are non-negotiable.

- Example: A fraud detection model’s accuracy drops from 95% to 80% over six months due to new fraud patterns emerging that it wasn’t trained on.

- ✅ Best Practice: Implement automated monitoring (with tools like Datadog, Prometheus, or specialized MLOps platforms) to detect performance degradation and trigger retraining alerts.

- 👉 Shop MLOps Monitoring Tools:

- Datadog: Datadog Official Website

- Prometheus: Prometheus Official Website

- Weights & Biases: Weights & Biases Official Website
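Performance metrics only reveal drift once ground-truth labels arrive, which can take weeks. A common early-warning signal is to compare the live input distribution with the training distribution. The sketch below uses the Population Stability Index (PSI), a metric popular in credit-risk monitoring. This is our choice of technique, not something the tools listed above prescribe. The bin edges, the sample transaction amounts, and the 0.1/0.25 cut-offs (a widely used rule of thumb) are all assumptions to adapt to your own data.

```python
import math


def bin_shares(values, edges):
    """Share of values per bin; values outside the edges fall into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    # Floor empty bins at a tiny share so the log below stays defined
    return [max(c / len(values), 1e-6) for c in counts]


def psi(baseline, live, edges):
    """Population Stability Index: sum over bins of (live - base) * ln(live / base)."""
    base, cur = bin_shares(baseline, edges), bin_shares(live, edges)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def drift_status(score, warn=0.1, alert=0.25):
    # Rule-of-thumb cut-offs; tune them for your own data
    if score < warn:
        return "stable"
    return "investigate" if score < alert else "retrain"


edges = [0, 50, 100, 200, 500, 10_000]  # hypothetical transaction-amount bins
training = [20, 40, 60, 80, 120, 150, 180, 250, 300, 700]
this_month = [250, 300, 350, 400, 450, 600, 700, 800, 900, 1_200]

print(drift_status(psi(training, training, edges)))    # stable
print(drift_status(psi(training, this_month, edges)))  # retrain
```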

9. Explainability and Transparency Scores

These KPIs measure how understandable and interpretable your AI model’s decisions are to humans.

- Why it matters: In regulated industries (finance, healthcare) or for critical applications, “black box” models are a no-go. Users and stakeholders need to understand why an AI made a particular decision to build trust and ensure fairness. This is a key aspect of ethical AI.

- Our take: This is becoming increasingly important, especially with GDPR and other regulations. Tools like SHAP and LIME are invaluable here.

- Example: A loan approval AI provides a clear explanation for why an application was denied, detailing the contributing factors.

- ✅ Best Practice: Incorporate Explainable AI (XAI) techniques into your model development and deployment, especially for high-stakes decisions.

- Reference: Learn more about XAI techniques from IBM: https://www.ibm.com/watson/explainable-ai
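To see what a loan-denial explanation looks like under the hood, here is a dependency-free sketch for the simplest case: a linear scoring model. For a linear model with independent features, each feature’s SHAP value equals its weight times the feature’s deviation from the average, so the "reason codes" are the most negative contributions. The weights, baseline averages, and applicant below are entirely hypothetical; for nonlinear models you would reach for SHAP or LIME themselves.

```python
# Hypothetical linear credit-scoring model: score = BIAS + sum(weight * feature)
WEIGHTS = {"income_k": 0.04, "debt_to_income": -3.0, "late_payments": -0.8, "years_employed": 0.15}
BIAS = -1.0
# Assumed average feature values in the training population
BASELINE = {"income_k": 55.0, "debt_to_income": 0.30, "late_payments": 1.0, "years_employed": 5.0}


def score(applicant):
    return BIAS + sum(w * applicant[f] for f, w in WEIGHTS.items())


def contributions(applicant):
    """Per-feature contribution relative to the average applicant.
    For a linear model with independent features, these equal SHAP values."""
    return {f: w * (applicant[f] - BASELINE[f]) for f, w in WEIGHTS.items()}


def reason_codes(applicant, top_n=2):
    """Features that pulled the score down the most, i.e. the reasons for a denial."""
    ranked = sorted(contributions(applicant).items(), key=lambda kv: kv[1])
    return [f for f, c in ranked[:top_n] if c < 0]


applicant = {"income_k": 48.0, "debt_to_income": 0.55, "late_payments": 3.0, "years_employed": 2.0}
print(reason_codes(applicant))  # ['late_payments', 'debt_to_income']
```

A useful property to check: the contributions always sum to the applicant’s score minus the average applicant’s score, so the explanation fully accounts for the decision.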

10. Cost Efficiency and Resource Utilization

These KPIs track the computational resources (CPU, GPU, memory) and associated costs required to train, deploy, and run your AI models.

- Why it matters: AI can be expensive! Optimizing resource usage directly impacts your budget and the overall ROI of your project. Are you getting the most bang for your buck?

- Our take: We’ve seen companies overspend dramatically on cloud resources due to inefficient model architectures or lack of monitoring. This is a prime area for optimization.

- Example: An optimized deep learning model reduces its GPU usage by 20% while maintaining performance, leading to significant cloud cost savings.

- ✅ Best Practice: Regularly monitor resource consumption, optimize model size and inference efficiency, and leverage cost-effective cloud instances (e.g., spot instances).

- 👉 Shop Cloud Computing Resources:

- DigitalOcean: DigitalOcean Official Website

- Paperspace: Paperspace Official Website

- RunPod: RunPod Official Website
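Cost efficiency becomes actionable once you express it per unit of work. This sketch turns GPU-hours into monthly cost, cost per 1,000 predictions, and utilization (how much paid GPU time actually did work). The hourly rate, prediction volume, and GPU-hour figures are hypothetical, not quotes from any provider; the 1,000-to-800 GPU-hour drop mirrors the 20% reduction in the example above.

```python
def monthly_gpu_cost(gpu_hours: float, hourly_rate: float) -> float:
    return gpu_hours * hourly_rate


def cost_per_1k(monthly_cost: float, monthly_predictions: int) -> float:
    """Serving cost per 1,000 predictions (GPU time only)."""
    return monthly_cost / monthly_predictions * 1000


def utilization(busy_gpu_hours: float, billed_gpu_hours: float) -> float:
    """Share of paid GPU time that actually did work."""
    return busy_gpu_hours / billed_gpu_hours


RATE = 2.50               # USD per GPU-hour (hypothetical)
PREDICTIONS = 30_000_000  # predictions served per month (hypothetical)

before = monthly_gpu_cost(1_000, RATE)
after = monthly_gpu_cost(800, RATE)  # 20% fewer GPU-hours after optimization
print(f"monthly savings: ${before - after:,.2f}")  # monthly savings: $500.00
print(f"per 1k predictions: ${cost_per_1k(before, PREDICTIONS):.4f} -> ${cost_per_1k(after, PREDICTIONS):.4f}")
print(f"GPU utilization: {utilization(640, 800):.0%}")  # GPU utilization: 80%
```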

11. Compliance and Ethical Considerations

This KPI assesses whether your AI system adheres to relevant regulations (e.g., GDPR, HIPAA), internal policies, and ethical guidelines regarding fairness, privacy, and bias.

- Why it matters: Non-compliance can lead to hefty fines, reputational damage, and loss of customer trust. Ethical AI isn’t just good practice; it’s increasingly a legal and business imperative.

- Our take: This is a complex but non-negotiable area. We often work with legal and compliance teams to ensure AI systems are deployed responsibly.

- Example: An AI-powered hiring tool is regularly audited to ensure it does not exhibit bias against protected groups, complying with anti-discrimination laws.

- ✅ Best Practice: Establish clear ethical AI principles, conduct regular bias audits, and implement data privacy by design.

- Reference: The European Union’s AI Act is a landmark regulation to watch: https://digital-strategy.ec.europa.eu/en/policies/artificial-intelligence-act
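A bias audit usually starts with selection rates per group. One common screening heuristic, from US employment guidance, is the "four-fifths rule": if one group’s selection rate is below 80% of the highest group’s rate, the outcome warrants a closer look. The sketch below applies it to hypothetical hiring-tool results. It is one metric among several and a trigger for investigation, not a legal determination.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (selected, applicants)."""
    return {g: selected / total for g, (selected, total) in outcomes.items()}


def disparate_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    return min(rates.values()) / max(rates.values())


# Hypothetical screening results from an AI hiring tool
audit = {"group_a": (48, 120), "group_b": (27, 90)}
ratio = disparate_impact_ratio(audit)
print(f"ratio={ratio:.2f}, below four-fifths threshold: {ratio < 0.8}")
# ratio=0.75, below four-fifths threshold: True
```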

12. Customer Satisfaction and Feedback

This KPI measures how satisfied your users or customers are with the AI-powered product or service, often through surveys, ratings, and direct feedback.

- Why it matters: Ultimately, AI should serve humans. If your customers aren’t happy, your AI isn’t truly successful, regardless of its technical prowess. As that same product-metrics YouTube video puts it, “Tracking these metrics provides valuable insights to create a product that meets user needs and delivers real value.”

- Our take: This is a lagging indicator, but a crucial one. It tells you if your AI is truly delivering on its promise of an improved user experience.

- Example: A virtual assistant receives an average customer satisfaction score of 4.5 out of 5 stars, with positive comments highlighting its helpfulness.

- ✅ Best Practice: Implement continuous feedback mechanisms (in-app surveys, NPS scores, sentiment analysis on support tickets) to gauge user sentiment and identify pain points.
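The feedback mechanisms above map to a few standard formulas. This sketch computes an average star rating, a CSAT percentage (counting 4 and 5 stars as "satisfied", a common convention), and a Net Promoter Score (percent promoters scoring 9 to 10 minus percent detractors scoring 0 to 6). All survey responses are hypothetical.

```python
def average_rating(ratings):
    """Mean of 1-5 star ratings."""
    return sum(ratings) / len(ratings)


def csat_pct(ratings, satisfied_min=4):
    """Percent of responses at or above `satisfied_min` on a 5-point scale."""
    return 100 * sum(r >= satisfied_min for r in ratings) / len(ratings)


def nps(scores):
    """Net Promoter Score from 0-10 answers: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)


stars = [5, 5, 4, 5, 4, 3, 5, 5, 4, 5]          # hypothetical in-app ratings
likelihood = [10, 9, 9, 8, 7, 10, 6, 9, 3, 10]  # hypothetical "would you recommend?" answers
print(average_rating(stars), csat_pct(stars), nps(likelihood))  # 4.5 90.0 40.0
```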

💡 How to Quantify AI’s Return on Investment (ROI) Like a Pro

Ah, the million-dollar question (or perhaps, the multi-million-dollar question!): How do you actually prove that your AI investment is paying off? This isn’t just about fancy algorithms; it’s about cold, hard business value. We’ve seen too many promising AI projects get defunded because their impact wasn’t clearly articulated in terms of ROI. Don’t let that happen to you!

Quantifying AI ROI can feel like trying to catch smoke, especially with intangible benefits like “improved decision-making” or “enhanced innovation.” But trust us, it’s absolutely doable, and it’s essential for securing stakeholder buy-in and future investments.

The Core Components of AI ROI

At ChatBench.org™, we break down AI ROI into two main categories: Cost Savings and Revenue Enhancements.

1. Cost Savings: Where AI Trims the Fat ✂️

This is often the easiest place to start. AI excels at automating repetitive tasks, optimizing processes, and reducing errors.

- Reduced Labor Costs:

- Automation: Think AI chatbots handling customer inquiries, freeing up human agents for complex issues. Or robotic process automation (RPA) streamlining back-office operations.

- Efficiency Gains: AI optimizing logistics routes, reducing fuel consumption, or scheduling maintenance more effectively, leading to fewer breakdowns and less manual oversight.

- Error Reduction:

- Quality Control: AI vision systems detecting defects on a production line faster and more consistently than humans, reducing scrap and rework.

- Fraud Detection: AI identifying fraudulent transactions, preventing financial losses.

- Optimized Resource Utilization:

- Energy Management: AI controlling HVAC systems in smart buildings to minimize energy waste.

- Inventory Optimization: AI predicting demand more accurately, reducing overstocking and associated storage costs.

Example: A manufacturing company deployed an AI-powered predictive maintenance system. Before AI, they experienced 10 critical machine breakdowns per year, each costing an average of $50,000 in repairs and lost production. With AI, breakdowns dropped to 2 per year.

- Annual Savings from reduced breakdowns: (10 – 2) breakdowns * $50,000/breakdown = $400,000.

2. Revenue Enhancements: Where AI Fuels Growth 🚀

This is where AI helps you make more money, often by improving customer experience, personalizing offerings, or identifying new opportunities.

- Increased Sales and Conversion Rates:

- Personalized Recommendations: AI engines (like those used by Amazon or Netflix) suggesting products or content, leading to higher purchase rates or longer engagement.

- Targeted Marketing: AI segmenting customers and tailoring marketing campaigns, resulting in higher conversion rates.

- Dynamic Pricing: AI optimizing prices in real-time based on demand, competition, and inventory, maximizing revenue.

- Improved Customer Retention:

- Proactive Support: AI identifying customers at risk of churn and triggering personalized interventions.

- Enhanced Customer Experience: AI-powered tools providing faster, more accurate, and more personalized service.

- New Product/Service Development:

- Market Insights: AI analyzing vast datasets to identify unmet customer needs or emerging market trends, informing new product development.

- Accelerated R&D: AI speeding up drug discovery or material science research.

Example: An e-commerce retailer implemented an AI recommendation engine. Before AI, their average order value (AOV) was $75. After AI, AOV increased to $82 due to more relevant cross-sells and upsells. If they process 1 million orders annually:

- Annual Revenue Increase: 1,000,000 orders * ($82 – $75) = $7,000,000.

The ROI Calculation Formula

Once you have your savings and revenue gains, you can calculate a straightforward ROI:

ROI (%) = ( (Total Savings + Total Revenue Gains) – Total AI Investment Costs ) / Total AI Investment Costs × 100%

- Total AI Investment Costs include everything:

- Software licenses (e.g., for platforms like DataRobot or H2O.ai)

- Hardware (GPUs, servers, cloud compute like DigitalOcean or Paperspace)

- Data acquisition and preparation

- Talent (data scientists, ML engineers, MLOps specialists)

- Training and maintenance

- Integration costs

Let’s put it together: Suppose the manufacturing company’s AI system cost $200,000 to develop and deploy (including hardware, software, and personnel).

- Total Savings: $400,000 per year (from reduced breakdowns)

- Total AI Investment Costs: $200,000

- First-year ROI: (($400,000 – $200,000) / $200,000) * 100% = 100%

That’s a fantastic return!
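The arithmetic from this section fits in a few lines of Python, which makes it easy to rerun as estimates change. This is a minimal sketch of the ROI formula above, plus the predictive-maintenance and recommendation-engine examples. Two caveats: comparing one year of savings against a one-time investment gives a first-year figure, and many finance teams plug in incremental gross profit rather than raw revenue for revenue gains.

```python
def roi_pct(total_savings: float, total_revenue_gains: float, total_investment: float) -> float:
    """ROI (%) = ((savings + revenue gains) - investment) / investment * 100."""
    return (total_savings + total_revenue_gains - total_investment) / total_investment * 100


def breakdown_savings(before: int, after: int, cost_each: float) -> float:
    # Fewer breakdowns per year times the average cost of each one
    return (before - after) * cost_each


def aov_revenue_gain(orders: int, aov_before: float, aov_after: float) -> float:
    # Extra revenue from a higher average order value
    return orders * (aov_after - aov_before)


savings = breakdown_savings(10, 2, 50_000)
print(savings)                                # 400000
print(roi_pct(savings, 0, 200_000))           # 100.0
print(aov_revenue_gain(1_000_000, 75, 82))    # 7000000
```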

Beyond the Numbers: Long-term Value and Strategic ROI

While financial ROI is paramount, don’t forget the less tangible, but equally important, long-term benefits. As Acacia Advisors highlights, these include:

- Innovation: AI fosters a culture of data-driven innovation.

- Competitive Advantage: Being an AI leader can differentiate you in the market.

- Scalability: AI solutions can often scale faster than human-powered processes.

- Improved Decision-Making: AI provides insights that lead to smarter, faster business decisions across all levels of the organization. This aligns perfectly with the MIT Sloan perspective on “Smart Descriptive KPIs” and “Smart Predictive KPIs” that synthesize data and forecast future performance.

Our Anecdote: We once worked with a retail client struggling with seasonal demand forecasting. Their traditional methods were constantly off, leading to either stockouts or massive overstock. We implemented an AI forecasting model that, in its first year, reduced forecasting errors by 30%. The direct ROI from reduced waste and increased sales was clear, but the strategic ROI was even bigger: they could now plan promotions with confidence, negotiate better with suppliers, and respond to market shifts with unprecedented agility. That’s the power of AI-driven insights!

⚠️ Common Challenges and Pitfalls in Measuring AI Performance

Measuring AI performance sounds straightforward, right? Pick some metrics, track them, and voilà! If only it were that simple. In our years at ChatBench.org™, we’ve stumbled, learned, and ultimately mastered the art of AI measurement, but not without encountering some gnarly challenges along the way. It’s a minefield out there, but with the right map, you can navigate it.

1. The Data Labyrinth: Quality, Consistency, and Accessibility 📉

This is perhaps the most common and frustrating challenge. As Acacia Advisors rightly points out, data complexity is a huge hurdle.

- Data Quality Issues: Missing values, incorrect entries, outdated information – you name it, we’ve seen it. Training an AI model on dirty data is like trying to build a skyscraper on quicksand. The model might seem to work, but its predictions will be unreliable, biased, or just plain wrong.

- Our Anecdote: We once inherited a project where the “customer churn” label was inconsistently applied across different departments. Some considered a customer churned after 30 days of inactivity, others after 90. The AI model, naturally, was utterly confused, leading to wildly inaccurate predictions. We had to spend weeks standardizing the definition before the model stood a chance.

- Data Inconsistency: Different data sources often use different formats, units, or definitions for the same information. Merging these can be a nightmare.

- Data Accessibility: Data might be siloed in legacy systems, spread across various departments, or simply not available in a format suitable for AI training. Getting access can be a bureaucratic battle.

Solution: Invest heavily in robust data governance. This means clear data definitions, standardized collection processes, automated validation pipelines, and a centralized data strategy. Think of it as building a solid foundation before you even think about the AI structure.
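The churn-label anecdote is a good example of what "clear data definitions" and "automated validation" mean in practice: define the label once, in code, and check every incoming extract against it. The 90-day threshold, dates, and records below are hypothetical.

```python
from datetime import date

CHURN_INACTIVITY_DAYS = 90  # one company-wide definition; the value is an assumption


def is_churned(last_active: date, as_of: date, inactivity_days: int = CHURN_INACTIVITY_DAYS) -> bool:
    """A customer counts as churned after `inactivity_days` or more without activity."""
    return (as_of - last_active).days >= inactivity_days


def label_mismatches(records, as_of):
    """IDs whose stored churn label disagrees with the canonical definition."""
    return [r["id"] for r in records if r["churned"] != is_churned(r["last_active"], as_of)]


as_of = date(2025, 6, 30)
# Hypothetical extract from a department that used a 30-day rule
extract = [
    {"id": "c1", "last_active": date(2025, 6, 20), "churned": False},  # 10 days inactive
    {"id": "c2", "last_active": date(2025, 5, 1), "churned": True},    # 60 days inactive
    {"id": "c3", "last_active": date(2025, 2, 1), "churned": True},    # 149 days inactive
]
print(label_mismatches(extract, as_of))  # ['c2']
```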

2. The Dynamic Environment: AI in a Constantly Shifting World 🌪️

Unlike traditional software, AI models are designed to learn from data, and that data is rarely static.

- Model Drift: We touched on this earlier, but it’s worth reiterating. The real world changes. Customer preferences evolve, new trends emerge, and external factors (like a pandemic or economic shift) can drastically alter data patterns. A model that was 95% accurate last month might be 70% accurate today.

- Quote from Acacia Advisors: They highlight “dynamic environments” including “internal changes (IT updates, policies) and external shifts (market, tech)” as key challenges.

- Concept Drift: This is subtler than a shift in the inputs themselves. It’s when the relationship between your input features and the target variable changes, so inputs that look perfectly familiar now call for a different answer. For example, what constituted “fraud” five years ago might be different from today’s sophisticated schemes.

- Feedback Loops: Sometimes, the AI’s own actions can change the environment it’s operating in, creating complex feedback loops that are hard to measure and manage.

Solution: Implement continuous monitoring and automated retraining pipelines. Your AI isn’t a “set it and forget it” solution. It needs constant vigilance, performance checks, and mechanisms to adapt to new data. MLOps practices are essential here.
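The Population Stability Index (PSI) is one common way to put a number on drift in a monitoring pipeline. It compares how a feature, or the model's scores, is distributed in a baseline window versus live traffic. The sketch below is a minimal version built on two assumptions: equal-width bins taken from the baseline, and a small epsilon to avoid log(0). A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift. Treat those cutoffs as starting points rather than laws.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` relative to baseline `expected`.

    Assumptions: equal-width bins spanning the baseline's range (quantile
    bins are another common choice), and out-of-range live values clamped
    into the edge bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(1000)]   # training-time feature values
live_same = list(baseline)
live_shifted = [v + 3 for v in baseline]    # the same feature after a shift
print(psi(baseline, live_same))             # 0.0
print(psi(baseline, live_shifted) > 0.25)   # True
```

In practice, you would compute this per feature on a schedule and feed the result into your alerting thresholds or retraining triggers.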

3. Defining “Success”: The Elusive Business Value 🎯

This is less about technical hurdles and more about strategic alignment.

- Lack of Clear Objectives: If you don’t know why you’re deploying AI, how can you measure its success? Vague goals like “improve efficiency” aren’t enough. You need quantifiable targets.

- Attribution Challenges: How do you definitively prove that a specific business outcome (e.g., increased sales) was because of the AI, and not other factors like a new marketing campaign or a seasonal trend? This is especially tricky with complex AI systems that integrate into multiple business processes.

- Measuring Intangibles: How do you put a number on “improved customer experience” or “enhanced innovation”? While we discussed ROI, some benefits are harder to directly monetize.

Solution: Start with the “Why.” Before any code is written, define SMART goals (Specific, Measurable, Achievable, Relevant, Time-bound) for your AI project. Use A/B testing or control groups where possible to isolate the AI’s impact. For intangibles, use proxy metrics (e.g., Net Promoter Score for customer experience, number of new patents for innovation).
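When you use A/B testing to isolate the AI's impact, the core arithmetic can be as simple as comparing conversion rates between a control group and an AI-exposed group. This sketch computes relative lift and a two-sided p-value with a pooled two-proportion z-test. It assumes users were randomly assigned and that each user converts at most once. The numbers in the usage lines are made up.

```python
import math


def ab_test(conv_control, n_control, conv_treat, n_treat):
    """Relative lift and two-sided p-value (pooled two-proportion z-test)."""
    p_c = conv_control / n_control
    p_t = conv_treat / n_treat
    pooled = (conv_control + conv_treat) / (n_control + n_treat)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_treat))
    z = (p_t - p_c) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    lift = (p_t - p_c) / p_c
    return lift, z, p_value


# Made-up numbers: 10,000 users per arm, 5.0% vs 6.0% conversion.
lift, z, p = ab_test(500, 10_000, 600, 10_000)
print(f"lift={lift:.1%} z={z:.2f} p={p:.4f}")
```

A small p-value indicates that a difference this large would be unlikely under random assignment if the AI had no effect. That is exactly the attribution evidence a before/after comparison cannot give you.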

4. The “Black Box” Problem: Explainability and Trust 👻

Many powerful AI models, especially deep learning networks, are notoriously difficult to interpret.

- Lack of Transparency: If you can’t understand why an AI made a particular decision, it’s hard to trust it, debug it, or explain it to stakeholders or regulators. This is a significant barrier to adoption in critical domains.

- Bias and Fairness: Opaque models can inadvertently perpetuate or even amplify existing societal biases present in the training data. Detecting and mitigating these biases is crucial but challenging.

Solution: Embrace Explainable AI (XAI) techniques. Tools like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) can help shed light on model decisions. Prioritize transparency, especially in high-stakes applications.
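SHAP builds on Shapley values from game theory. Each feature's contribution is its weighted average marginal effect on the prediction, taken across every possible coalition of the other features. The sketch below computes these values exactly by brute force for a toy model, which only scales to a handful of features. The `shap` library exists precisely to approximate or speed up this computation. Replacing "absent" features with a baseline value is one assumed convention among several.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one prediction by enumerating coalitions.

    Features outside a coalition take their baseline value (an assumed
    convention). Cost grows as 2^n, so this is only for tiny examples.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                # Shapley weight: |S|! (n - |S| - 1)! / n!
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Toy "model" with an interaction term between features 0 and 1.
def model(z):
    return 2 * z[0] + 3 * z[1] + z[0] * z[1]


x, base = [1.0, 2.0, 0.0], [0.0, 0.0, 0.0]
phi = shapley_values(model, x, base)
print(phi)
print(sum(phi), model(x) - model(base))  # attributions add up
```

The interaction term's contribution is split evenly between features 0 and 1, and the unused feature 2 receives zero. The attributions also sum to the prediction minus the baseline prediction. That additivity is what makes Shapley-based explanations easy to present to stakeholders.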

5. Resource Constraints: Time, Talent, and Tools ⏳

Even with the best intentions, practical limitations can derail your measurement efforts.

- Lack of Skilled Talent: Measuring AI performance effectively requires a blend of data science, engineering, and business acumen. Finding individuals with this diverse skillset can be tough.

- Tooling Gaps: While many MLOps platforms exist, integrating them, customizing dashboards, and building robust monitoring systems requires significant effort.

- Time and Budget: Setting up comprehensive measurement frameworks, conducting regular audits, and continuously refining KPIs takes time and resources that are often underestimated.

Solution: Prioritize. Start with a core set of critical KPIs and expand as you gain experience and resources. Leverage managed services from cloud providers (AWS, Google Cloud, Azure) to offload some of the infrastructure burden. And don’t be afraid to partner with experts (like us at ChatBench.org™ or firms like Acacia Advisors) who specialize in AI measurement and strategy.

Navigating these challenges requires a proactive, iterative approach. It’s not about avoiding pitfalls entirely, but about having the strategies and tools in place to identify and overcome them quickly.

🔧 Tools and Platforms to Monitor AI KPIs Effectively

Alright, you’ve defined your KPIs, you understand the challenges, now what? You can’t just stare at a spreadsheet and hope for the best! To truly master AI performance, you need the right arsenal of tools and platforms. From our experience at ChatBench.org™, the MLOps landscape has exploded, offering incredible capabilities for monitoring, managing, and optimizing your AI models in production.

Think of these tools as your AI’s mission control center. They provide the telemetry, the alerts, and the dashboards you need to keep your AI systems flying high.

1. Cloud-Native MLOps Platforms: The Integrated Powerhouses ☁️

For many organizations, especially those already leveraging cloud infrastructure, integrated MLOps platforms from the major cloud providers are a natural fit. They offer end-to-end solutions from data ingestion to model deployment and monitoring.

- Amazon SageMaker:

- Features: SageMaker Model Monitor automatically detects data drift and model quality issues, sending alerts when performance degrades. It integrates seamlessly with other AWS services.

- Benefits: Comprehensive, scalable, and deeply integrated with the AWS ecosystem. Great for teams already invested in AWS.

- Drawbacks: Can be complex to set up and manage for newcomers; cost can scale quickly.

- 👉 Shop AWS SageMaker: Amazon.com AWS SageMaker

- Google Cloud AI Platform / Vertex AI:

- Features: Vertex AI offers unified MLOps capabilities, including model monitoring for drift detection, explainability features (Vertex Explainable AI), and robust data validation.

- Benefits: Strong focus on MLOps, excellent integration with Google Cloud’s data analytics tools (BigQuery, Dataflow), and powerful explainability features.

- Drawbacks: Can have a steeper learning curve for those unfamiliar with Google Cloud.

- 👉 Shop Google Cloud AI Platform: Google Cloud AI Platform

- Microsoft Azure Machine Learning:

- Features: Azure ML provides model monitoring dashboards, data drift detection, and integration with Azure Monitor for comprehensive operational insights.

- Benefits: User-friendly interface, strong integration with Microsoft’s enterprise tools, and good support for hybrid cloud scenarios.

- Drawbacks: Can sometimes feel less “open” than other platforms, potentially locking you into the Azure ecosystem.

- 👉 Shop Azure Machine Learning: Microsoft Azure Machine Learning

2. Specialized MLOps & Model Monitoring Platforms: The Focused Experts 🔬

Beyond the cloud giants, a new breed of specialized platforms has emerged, focusing specifically on the challenges of AI monitoring and MLOps. These often offer deeper insights into model behavior, bias detection, and explainability.

- Datadog:

- Features: While not AI-specific, Datadog is a powerful monitoring and analytics platform that can be configured to track AI model metrics (latency, error rates, resource utilization) and create custom dashboards and alerts.

- Benefits: Excellent for unified observability across your entire tech stack, including AI components.

- Drawbacks: Requires custom integration for AI-specific metrics like model drift or bias.

- 👉 Shop Datadog: Datadog Official Website

- Weights & Biases (W&B):

- Features: Primarily known for experiment tracking, W&B also offers robust model monitoring capabilities, allowing you to track model performance, data distributions, and system health in production.

- Benefits: Fantastic for data scientists and ML engineers, providing deep insights into model training and deployment.

- Drawbacks: More focused on the ML lifecycle than broader IT operations monitoring.

- 👉 Shop Weights & Biases: Weights & Biases Official Website

- Arize AI:

- Features: A dedicated AI observability platform that focuses on model performance monitoring, data quality, drift detection, and explainability in production.

- Benefits: Built from the ground up for AI, offering advanced analytics specific to model health.

- Drawbacks: Can be an additional tool to integrate into your existing stack.

- 👉 Shop Arize AI: Arize AI Official Website

- Fiddler AI:

- Features: Specializes in AI observability, explainability, and responsible AI. Helps monitor model performance, detect drift, and provide explanations for model predictions.

- Benefits: Strong focus on XAI and ethical AI, crucial for regulated industries.

- Drawbacks: Similar to Arize, it’s a specialized tool that needs integration.

- 👉 Shop Fiddler AI: Fiddler AI Official Website

3. Open-Source Tools & Libraries: The DIY Approach 🛠️

For teams with strong engineering capabilities or specific needs, open-source tools offer flexibility and cost-effectiveness.

- Prometheus & Grafana:

- Features: Prometheus is a powerful open-source monitoring system for time-series data, and Grafana is an open-source analytics and interactive visualization web application. Together, they can monitor virtually any metric from your AI models and infrastructure.

- Benefits: Highly customizable, free, and widely adopted in the DevOps community.

- Drawbacks: Requires significant setup and maintenance effort; not AI-specific out-of-the-box.

- 👉 Prometheus: Prometheus Official Website

- 👉 Grafana: Grafana Official Website

- MLflow:

- Features: An open-source platform for managing the end-to-end machine learning lifecycle, including experiment tracking, model packaging, and model registry. While not a dedicated monitoring tool, it helps track model versions and metrics.

- Benefits: Excellent for managing the ML lifecycle, widely used, and integrates with many other tools.

- Drawbacks: Monitoring capabilities are more focused on experiment tracking than real-time production monitoring.

- 👉 MLflow: MLflow Official Website

- Deepchecks / Evidently AI:

- Features: Python libraries specifically designed for data validation, model testing, and monitoring data/model drift. They generate interactive reports and can be integrated into your MLOps pipelines.

- Benefits: Easy to integrate into existing Python workflows, provide deep insights into data and model quality.

- Drawbacks: Require custom integration and scripting for alerts and dashboards.

- 👉 Shop Deepchecks: Deepchecks Official Website

- 👉 Shop Evidently AI: Evidently AI Official Website
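Tools like Deepchecks and Evidently AI package statistical drift tests so you don't have to write them yourself. To show what such a check actually computes, here is a hand-rolled two-sample Kolmogorov-Smirnov test, one of the standard tests for numerical features. It uses the large-sample critical value for a 0.05 significance level rather than an exact p-value. Treat it as an illustration, not as a replacement for a maintained library.

```python
import bisect
import math


def ks_statistic(reference, current):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    d = 0.0
    # The maximum gap always occurs at one of the observed values.
    for v in set(ref) | set(cur):
        f_ref = bisect.bisect_right(ref, v) / len(ref)  # share of ref <= v
        f_cur = bisect.bisect_right(cur, v) / len(cur)  # share of cur <= v
        d = max(d, abs(f_ref - f_cur))
    return d


def ks_drift(reference, current, c_alpha=1.358):
    """Return (D, drifted) using the large-sample critical value.

    c_alpha = 1.358 corresponds to a significance level of about 0.05.
    """
    n, m = len(reference), len(current)
    d = ks_statistic(reference, current)
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    return d, d > critical


reference = [i / 100 for i in range(500)]   # e.g. training-time values
shifted = [v + 1.0 for v in reference]       # production values after a shift
print(ks_drift(reference, list(reference)))  # (0.0, False)
print(ks_drift(reference, shifted))
```

Note that with very large samples, even tiny, harmless shifts become "significant." This is one reason many teams pair a test like this with an effect-size measure such as PSI.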

Our Recommendation: A Hybrid Approach is Often Best ✅

At ChatBench.org™, we often recommend a hybrid approach. Leverage the integrated capabilities of your chosen cloud provider for core infrastructure and basic monitoring. Then, layer on specialized MLOps platforms like Arize AI or Fiddler AI for deep model observability, explainability, and bias detection. For experiment tracking and model versioning, tools like Weights & Biases or MLflow are invaluable.

The key is to choose tools that fit your team’s expertise, your existing infrastructure, and the specific needs of your AI projects. Don’t over-engineer, but don’t under-monitor either! Your AI’s health depends on it.

📈 Case Studies: Real-World Success Stories in AI KPI Measurement

Enough theory! Let’s talk about how companies are actually crushing it by meticulously measuring their AI’s impact. These aren’t just hypothetical scenarios; these are real-world examples that showcase the power of well-defined KPIs in driving AI success.

Case Study 1: Optimizing Customer Service with an AI Chatbot (Retail Sector) 🛍️

A major online retailer was struggling with escalating customer support costs and long wait times, especially during peak seasons. They decided to deploy an AI-powered chatbot to handle common inquiries.

- Initial Problem: High operational costs, low customer satisfaction due to slow response times.

- AI Solution: Implemented a Google Dialogflow-powered chatbot integrated with their CRM.

- Key KPIs Tracked:

- Resolution Rate (Chatbot): Percentage of customer queries fully resolved by the chatbot without human intervention.

- Average Handle Time (AHT): Time taken for the chatbot to resolve a query.

- Customer Satisfaction Score (CSAT): Post-interaction survey rating for chatbot interactions.

- Human Agent Escalation Rate: Percentage of queries the chatbot couldn’t resolve, requiring human handover.

- Cost Per Interaction: Financial cost of a chatbot interaction vs. a human agent interaction.

- Results After 6 Months:

- Resolution Rate: Increased from 60% to 85%.

- AHT: Reduced by 40% (from 3 minutes to 1.8 minutes).

- CSAT: Maintained a high 4.2/5 stars, comparable to human agents for simple queries.

- Human Agent Escalation Rate: Decreased by 50%, freeing up human agents for complex issues.

- Cost Per Interaction: Reduced by 70%.

- Overall Impact: The chatbot significantly reduced operational costs, improved customer experience by providing instant answers, and allowed human agents to focus on higher-value tasks. The clear KPI tracking allowed the retailer to continuously refine the chatbot’s knowledge base and intent recognition.
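Most of the chatbot KPIs listed above are simple ratios over interaction logs. Here is a minimal sketch that assumes a hypothetical log schema (`resolved_by_bot`, `handle_seconds`, `cost`). It also assumes every unresolved query is escalated to a human. Real logs usually contain abandoned sessions as well, in which case resolution and escalation rates no longer sum to one.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    resolved_by_bot: bool    # True if no human handover was needed
    handle_seconds: float    # time from first message to resolution/handover
    cost: float              # fully loaded cost of this interaction (assumed)


def chatbot_kpis(interactions):
    """Aggregate per-interaction logs into the case study's KPIs."""
    n = len(interactions)
    resolved = sum(1 for i in interactions if i.resolved_by_bot)
    return {
        "resolution_rate": resolved / n,
        # Simplification: every unresolved query is escalated to a human.
        "escalation_rate": (n - resolved) / n,
        "avg_handle_seconds": sum(i.handle_seconds for i in interactions) / n,
        "cost_per_interaction": sum(i.cost for i in interactions) / n,
    }


def pct_change(before, after):
    """Percentage change; negative values are reductions."""
    return (after - before) / before * 100


logs = [
    Interaction(True, 90, 0.40),
    Interaction(True, 120, 0.40),
    Interaction(True, 60, 0.40),
    Interaction(False, 210, 4.00),
]
print(chatbot_kpis(logs))
print(pct_change(3.0, 1.8))  # AHT from 3 to 1.8 minutes: about -40
```

The `pct_change` helper reproduces the AHT arithmetic from the results above: going from 3 minutes to 1.8 minutes is a 40% reduction.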

Case Study 2: Predictive Maintenance in Manufacturing (Industrial Sector) ⚙️

A large industrial equipment manufacturer faced frequent, costly unplanned downtime due to machine failures. They explored AI to predict equipment malfunctions before they occurred.

- Initial Problem: High maintenance costs, significant production losses due to unexpected equipment breakdowns.

- AI Solution: Deployed an AWS SageMaker-based machine learning model that analyzed sensor data (temperature, vibration, pressure) from critical machinery to predict potential failures.

- Key KPIs Tracked:

- Accuracy of Failure Prediction: How often the model correctly predicted an impending failure.

- False Positive Rate: How often the model predicted a failure that didn’t occur (leading to unnecessary maintenance).

- False Negative Rate: How often the model missed an impending failure (leading to unplanned downtime).

- Unplanned Downtime Hours: Total hours of production lost due to unexpected failures.

- Maintenance Cost Reduction: Savings from proactive vs. reactive maintenance.

- Mean Time To Repair (MTTR): Time taken to fix a machine once a problem is identified.

- Results After 1 Year:

- Accuracy of Failure Prediction: Achieved 92%.

- False Positive Rate: Kept below 5%.

- False Negative Rate: Reduced to <1%.

- Unplanned Downtime Hours: Reduced by 75%.

- Maintenance Cost Reduction: 15% reduction due to optimized scheduling and fewer emergency repairs.

- MTTR: Improved by 20% due to better preparation.

- Overall Impact: The AI system transformed their maintenance strategy from reactive to proactive, saving millions in lost production and repair costs. The KPIs provided a clear roadmap for model improvement and demonstrated undeniable ROI.
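The maintenance KPIs above are easiest to reason about when every rate is defined against a single confusion matrix. This sketch treats "failure predicted" as the positive class. The counts in the usage lines are invented to illustrate a common pitfall. When real failures are rare, overall accuracy can look excellent while precision (the share of alerts that were real) stays mediocre. That is why it pays to track false positive and false negative rates separately.

```python
def failure_prediction_metrics(tp, fp, tn, fn):
    """Confusion-matrix rates where 'positive' means 'failure predicted'."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": tp / (tp + fp),            # alerts that were real failures
        "recall": tp / (tp + fn),               # real failures that were caught
        "false_positive_rate": fp / (fp + tn),  # healthy cases wrongly flagged
        "false_negative_rate": fn / (fn + tp),  # real failures missed
    }


# Invented counts for 1,000 machine-weeks, of which only 50 ended in failure.
m = failure_prediction_metrics(tp=45, fp=30, tn=920, fn=5)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

Here, accuracy is 96.5% even though 40% of the maintenance alerts are false alarms.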

Case Study 3: Personalized Content Recommendations (Media & Entertainment) 🎬

A streaming service wanted to improve user engagement and retention by offering more relevant content recommendations.

- Initial Problem: Users struggling to find content they liked, leading to lower engagement and higher churn rates.

- AI Solution: Developed a sophisticated recommendation engine using a combination of collaborative filtering and deep learning, deployed on a Kubernetes cluster with monitoring via Prometheus and Grafana.

- Key KPIs Tracked:

- Click-Through Rate (CTR) on Recommendations: Percentage of users who click on recommended content.

- Watch Time of Recommended Content: Total time users spend watching content suggested by the AI.

- User Retention Rate: Percentage of users who continue their subscription over time.

- Content Diversity Score: Ensuring recommendations weren’t too narrow or repetitive.

- A/B Test Lift: Comparing AI recommendations against a control group.

- Results After 9 Months:

- CTR on Recommendations: Increased by 25%.

- Watch Time of Recommended Content: Saw a 15% increase in average daily watch time.

- User Retention Rate: Improved by 3% (which translates to millions of dollars in a large subscriber base).

- Content Diversity Score: Maintained a healthy score, preventing filter bubbles.

- Overall Impact: The AI recommendation engine directly contributed to higher user engagement and retention, proving its value as a core component of the platform’s success. The A/B testing and continuous monitoring of these KPIs were critical to iterating and improving the recommendation algorithms.
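The case study doesn't say how its Content Diversity Score was calculated, so here is one plausible definition, offered purely as an assumption. It takes the Shannon entropy of genres in a user's recommendation list and normalizes it by the maximum possible entropy for the catalog's genre count. A score of 0 means a single-genre list, and 1.0 means items are spread evenly across every genre. Averaging the score across users gives a platform-level number you can track alongside CTR.

```python
import math
from collections import Counter


def diversity_score(recommended_genres, catalog_genre_count):
    """Normalized Shannon entropy of genres in one recommendation list.

    0.0 -> every item shares one genre; 1.0 -> items spread evenly over all
    `catalog_genre_count` genres. This definition is an assumption.
    """
    n = len(recommended_genres)
    if n == 0 or catalog_genre_count <= 1:
        return 0.0
    counts = Counter(recommended_genres)
    entropy = sum(-(c / n) * math.log(c / n) for c in counts.values())
    return entropy / math.log(catalog_genre_count)


GENRES = 5  # hypothetical catalog with five genres
print(diversity_score(["drama"] * 10, GENRES))            # 0.0
print(diversity_score(["drama", "comedy"] * 5, GENRES))   # about 0.43
print(diversity_score(["drama", "comedy", "doc", "sci-fi", "horror"], GENRES))  # about 1.0
```

Normalizing by the catalog's genre count, rather than by the number of genres that happen to appear, keeps an evenly split two-genre list from scoring as "perfectly diverse."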

These case studies underscore a fundamental truth: AI success isn’t just about building cool tech; it’s about delivering measurable business value. By rigorously defining and tracking KPIs, these companies not only proved the worth of their AI investments but also gained the insights needed to continuously optimize and scale their AI initiatives.

🚀 Best Practices for Continuous AI Performance Improvement

So, you’ve deployed your AI, you’re tracking your KPIs, and things are looking good. Mission accomplished, right? ❌ WRONG! In the fast-paced world of AI, “good enough” today is “obsolete” tomorrow. Continuous improvement isn’t just a buzzword; it’s the lifeblood of successful AI initiatives. At ChatBench.org™, we live and breathe this philosophy, constantly refining models and strategies to keep our clients ahead of the curve.

Here are our battle-tested best practices for ensuring your AI models don’t just perform, but thrive and evolve:

1. Establish a Robust MLOps Framework from Day One 🏗️

Don’t treat MLOps as an afterthought. It’s the operational backbone of your AI.

- Automated Pipelines: Implement CI/CD (Continuous Integration/Continuous Deployment) for your machine learning models. This means automated data ingestion, model training, testing, deployment, and monitoring. Tools like MLflow, Kubeflow, or cloud-native MLOps platforms (AWS SageMaker, Google Vertex AI, Azure ML) are your friends here.

- Version Control Everything: Not just code, but data, models, configurations, and even experiments. Git is your starting point, but specialized tools like DVC (Data Version Control) can help with large datasets.

- Infrastructure as Code (IaC): Define your AI infrastructure (compute, storage, networking) using code (e.g., Terraform, CloudFormation). This ensures reproducibility and consistency.
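"Version control everything" ultimately means being able to say exactly which data, configuration, and code produced a given model. DVC identifies data files by content hashes. The minimal sketch below borrows that idea to build a single fingerprint for a training run. The config keys and the `"a1b2c3d"` commit string are hypothetical placeholders.

```python
import hashlib
import json
import os
import tempfile


def file_sha256(path, chunk_size=1 << 20):
    """Content hash of a file, read in chunks so large datasets fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def run_fingerprint(data_path, config, code_version):
    """One short ID tying a model to its exact data, config, and code."""
    payload = json.dumps(
        {"data": file_sha256(data_path), "config": config, "code": code_version},
        sort_keys=True,  # key order in the config must not change the ID
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Usage with a throwaway file; "a1b2c3d" stands in for a Git commit hash.
with tempfile.NamedTemporaryFile("wb", suffix=".csv", delete=False) as tmp:
    tmp.write(b"customer_id,churned\nc1,1\nc2,0\n")
try:
    fp_a = run_fingerprint(tmp.name, {"lr": 0.01, "epochs": 5}, "a1b2c3d")
    fp_b = run_fingerprint(tmp.name, {"epochs": 5, "lr": 0.01}, "a1b2c3d")
    fp_c = run_fingerprint(tmp.name, {"lr": 0.02, "epochs": 5}, "a1b2c3d")
finally:
    os.remove(tmp.name)
print(fp_a == fp_b, fp_a == fp_c)  # True False
```

You can tag a model in your registry with a fingerprint like this. If two models share an ID, you know they were trained on identical inputs.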

2. Implement Comprehensive Monitoring and Alerting 🚨

You can’t fix what you don’t see. Proactive monitoring is non-negotiable.

- Track All the Things: Monitor not just model performance (accuracy, precision, recall), but also data quality (drift, anomalies), model health (latency, throughput, resource utilization), and business impact (ROI, user engagement).

- Set Up Smart Alerts: Don’t just get alerted when things break. Set thresholds for performance degradation, data drift, or unusual resource spikes. Use tools like Datadog, Prometheus, or your cloud provider’s monitoring services.

- Visualize Your Data: Use dashboards (Grafana, Power BI, Tableau) to make your KPIs easily digestible for both technical and business stakeholders. A picture is worth a thousand data points!
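Alert thresholds are easiest to audit when they live in code next to the checks that use them. This sketch evaluates three of the signals mentioned above against hypothetical thresholds: p95 latency, accuracy, and a drift score such as PSI. In practice, you would more likely express these as alert rules in Prometheus, Datadog, or your cloud provider's monitoring service, but the logic is the same.

```python
import math

# Hypothetical thresholds; tune them to your own SLOs and KPIs.
THRESHOLDS = {"p95_latency_ms": 300, "min_accuracy": 0.90, "max_psi": 0.25}


def percentile(values, q):
    """Nearest-rank percentile, with q between 0 and 100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q / 100 * len(ordered)))
    return ordered[rank - 1]


def check_alerts(latencies_ms, accuracy, psi_score, thresholds=THRESHOLDS):
    """Return human-readable alert messages; an empty list means healthy."""
    alerts = []
    p95 = percentile(latencies_ms, 95)
    if p95 > thresholds["p95_latency_ms"]:
        alerts.append(f"p95 latency {p95} ms exceeds {thresholds['p95_latency_ms']} ms")
    if accuracy < thresholds["min_accuracy"]:
        alerts.append(f"accuracy {accuracy:.2f} below {thresholds['min_accuracy']:.2f}")
    if psi_score > thresholds["max_psi"]:
        alerts.append(f"PSI {psi_score:.2f} above {thresholds['max_psi']:.2f}")
    return alerts


healthy = list(range(100, 200))  # latencies in ms
print(check_alerts(healthy, accuracy=0.93, psi_score=0.05))  # []
print(check_alerts(healthy + [900] * 10, accuracy=0.85, psi_score=0.30))
```

Alerting on a tail percentile rather than the mean catches slow requests that an average would hide.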

3. Embrace Regular Model Retraining and Validation 🔄

Your model is a living entity; it needs to learn and adapt.

- Scheduled Retraining: Based on your model drift monitoring, establish a schedule for retraining your models with fresh data. This could be daily, weekly, or monthly, depending on the dynamism of your data.

- Triggered Retraining: Set up automated triggers for retraining when significant data drift or performance degradation is detected.

- A/B Testing in Production: Before fully deploying a new model version, run A/B tests to compare its performance against the current production model. This minimizes risk and provides empirical evidence of improvement.

- Champion/Challenger Models: Maintain a “champion” model in production while continuously developing and testing “challenger” models. Promote a challenger to champion status only when it demonstrably outperforms the current one on key KPIs.
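A champion/challenger promotion decision can be written down as an explicit rule, so that "demonstrably outperforms" means the same thing every time. In this sketch, which uses hypothetical metric names and margins, a challenger must beat the champion on a primary KPI by a minimum gain. It must also not regress on any guardrail KPI. The sketch assumes all metrics are higher-is-better and were measured on the same evaluation set or A/B test.

```python
def should_promote(champion, challenger, primary="auc", min_gain=0.01,
                   guardrails=("precision",), max_guardrail_drop=0.0):
    """Promote only if the challenger beats the champion on the primary KPI
    by at least `min_gain` and loses no more than `max_guardrail_drop` on
    any guardrail KPI. All metrics are assumed higher-is-better."""
    if challenger[primary] - champion[primary] < min_gain:
        return False
    for kpi in guardrails:
        if champion[kpi] - challenger[kpi] > max_guardrail_drop:
            return False
    return True


champion = {"auc": 0.86, "precision": 0.71}
print(should_promote(champion, {"auc": 0.88, "precision": 0.72}))   # True
print(should_promote(champion, {"auc": 0.90, "precision": 0.65}))   # False: guardrail regressed
print(should_promote(champion, {"auc": 0.865, "precision": 0.75}))  # False: gain too small
```

The minimum-gain margin keeps you from churning production models over differences that are within evaluation noise.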

4. Foster a Culture of Data Quality and Governance 📊

Garbage in, garbage out – it’s an old adage, but it’s never been truer for AI.

- Data Validation Pipelines: Implement automated checks at every stage of your data pipeline to ensure data quality, consistency, and completeness.

- Clear Data Ownership: Assign clear ownership for data sources and ensure accountability for data quality.

- Feedback Loops for Data Issues: Create mechanisms for data scientists and model users to report data anomalies or issues they observe in model predictions, which can then be fed back to data engineering teams.

5. Prioritize Explainability and Bias Detection (Responsible AI) ⚖️

Building trust and ensuring fairness are paramount for long-term AI success.

- Integrate XAI Tools: Use libraries like SHAP or LIME to understand why your models make certain predictions. This helps in debugging, building trust, and meeting regulatory requirements.

- Bias Audits: Regularly audit your models for potential biases against protected groups. Tools like IBM’s AI Fairness 360 can help detect and mitigate bias.

- Ethical Guidelines: Establish clear ethical AI guidelines within your organization and ensure your models adhere to them. This is not just a technical task; it’s a cultural one.
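A basic bias audit starts by comparing how often the model gives the favorable outcome to each group. The sketch below computes two widely used measures: the statistical parity difference and the disparate impact ratio. Toolkits such as AI Fairness 360 provide metrics along these lines out of the box. The group labels are hypothetical. A ratio below 0.8 (the "four-fifths rule" heuristic) is a common, but not universal, red flag.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of favorable (1) predictions per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred)
    return {g: positives[g] / totals[g] for g in totals}


def fairness_report(predictions, groups, privileged):
    """Compare each group's selection rate with the privileged group's.

    Assumes the privileged group has a nonzero selection rate.
    """
    rates = selection_rates(predictions, groups)
    report = {}
    for g, rate in rates.items():
        if g == privileged:
            continue
        report[g] = {
            "parity_difference": rate - rates[privileged],
            "disparate_impact": rate / rates[privileged],
        }
    return report


preds = [1, 1, 1, 0, 1, 0, 0, 0]        # 1 = favorable outcome, e.g. approved
groups = ["A"] * 4 + ["B"] * 4          # hypothetical group labels
print(fairness_report(preds, groups, privileged="A"))
```

In this toy data, group B is approved at one third the rate of group A. Under the four-fifths heuristic, that would warrant a closer look at the features and labels driving the gap.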

6. Close the Loop: Business Feedback and Iteration 🔄➡️📈

AI isn’t just a technical exercise; it’s a business transformation tool.

- Regular Stakeholder Reviews: Periodically review AI performance and business impact with key stakeholders. Discuss KPIs, ROI, and gather feedback on how the AI is affecting business processes.

- User Feedback Mechanisms: For user-facing AI, implement in-app surveys, sentiment analysis, and direct feedback channels to understand user satisfaction and pain points.

- Iterate, Iterate, Iterate: Use all the insights gathered from monitoring, feedback, and business reviews to inform the next iteration of your AI models and strategies. This continuous learning cycle is what truly drives competitive advantage.

Our Personal Story: We once helped a client deploy an AI-powered lead scoring model. Initially, the model was great, but after a few months, sales teams started complaining about “bad leads.” Our monitoring showed no significant model drift, but the business KPI (conversion rate of scored leads) was dropping. Digging deeper, we realized the definition of a “good lead” had subtly shifted for the sales team due to a new product launch. The model was still accurate based on its original definition, but that definition was no longer aligned with business reality. By closing the loop with sales, updating the target variable, and retraining, we quickly brought the model back into alignment, proving that technical KPIs alone are never enough.

By embedding these best practices into your AI lifecycle, you’ll ensure your AI initiatives are not just fleeting successes, but enduring assets that continuously deliver value and adapt to the ever-changing business landscape.

🤝 Partnering with Experts: How Acacia Advisors Helps You Master AI Metrics

You’ve seen the complexity, the challenges, and the sheer volume of work involved in effectively measuring AI performance. It’s a lot, right? For many organizations, especially those just embarking on their AI journey or scaling up their existing initiatives, navigating this landscape can feel overwhelming. This is where specialized partners, like Acacia Advisors, become invaluable.

At ChatBench.org™, we often collaborate with and recommend firms that bring deep expertise to the table, and Acacia Advisors is a prime example of a company that understands the nuances of AI measurement. Their approach aligns perfectly with our philosophy of turning AI insight into competitive edge.

What Acacia Advisors Brings to the Table: A Complementary Perspective

Acacia Advisors focuses on ensuring that your AI measurement frameworks are not just robust, but also adaptable to the unique needs and evolving conditions of your business. This isn’t a one-size-fits-all solution; it’s about tailoring the approach to your specific context.

Here’s how they typically help organizations master AI metrics, echoing many of the best practices we’ve discussed:

- Analytics Support & Custom Metrics: They don’t just hand you a generic list of KPIs. Acacia works with you to identify and define the most relevant, custom metrics that directly align with your specific business objectives and AI project goals. This means moving beyond generic accuracy to metrics that truly reflect your unique value proposition.

- Dashboard Development & Visualization: Raw data is meaningless without proper visualization. Acacia helps design and implement intuitive dashboards that provide real-time visibility into your AI’s performance, making complex data accessible to all stakeholders, from data scientists to C-suite executives.

- Ensuring Data Integrity: As we’ve highlighted, data quality is paramount. Acacia assists in establishing data governance frameworks and processes to ensure the data feeding your AI models (and your KPIs) is accurate, consistent, and reliable. This is about building trust in your numbers.

- Strategic Alignment: They help bridge the gap between technical AI performance and overarching business strategy. This ensures that your AI initiatives are not just technically sound, but also contribute directly to your organizational goals, proving clear ROI.

- Ongoing Guidance & Adaptability: The AI landscape is constantly evolving. Acacia provides continuous support, helping you adapt your measurement frameworks as your AI models mature, your business needs change, or new regulations emerge. This proactive approach is critical for long-term success.

A Quote from Acacia Advisors: “Our approach ensures that AI measurement frameworks are not only robust but also adaptable to the unique needs and evolving conditions of your business.” This statement perfectly encapsulates the dynamic and tailored nature required for effective AI KPI management.

Why Partnering Makes Sense

- Specialized Expertise: AI measurement is a niche skill. Experts bring years of experience, best practices, and knowledge of the latest tools and techniques.

- Objectivity: External partners can provide an unbiased assessment of your AI performance and identify blind spots that internal teams might miss.

- Accelerated Time to Value: Instead of spending months building out a measurement framework from scratch, partners can help you implement robust solutions much faster.

- Focus on Core Business: By offloading the complexities of AI measurement, your internal teams can focus on their core competencies – developing innovative AI solutions and driving business growth.

While ChatBench.org™ provides the insights and strategic guidance to help you understand the “what” and “why” of AI KPIs, partners like Acacia Advisors can provide the hands-on implementation and ongoing support to help you master the “how.” It’s about building a strong ecosystem of expertise around your AI initiatives to ensure their sustained success.

🌟 Kickstart Your AI Transformation Journey Today

Feeling inspired? A little overwhelmed? That’s perfectly normal! The world of AI is vast and exciting, but the path to truly impactful AI, the kind that delivers measurable business value and competitive edge, requires a strategic approach. You’ve seen the power of well-defined KPIs, the pitfalls to avoid, and the tools at your disposal. Now, it’s time to translate that knowledge into action.

Your AI transformation isn’t just about adopting new technology; it’s about fundamentally changing how your organization operates, makes decisions, and measures success. It’s about building a data-driven culture where every AI initiative is tied to clear objectives and rigorously evaluated.

Where Do You Begin? 🤔

- Define Your “Why”: Before anything else, clearly articulate the business problem you’re trying to solve with AI. What specific outcomes are you aiming for?

- Identify Your Core KPIs: Based on your “why,” select a handful of critical KPIs (technical, operational, and business) that will truly reflect the success of your AI. Don’t try to measure everything at once!

- Assess Your Data Foundation: Is your data clean, consistent, and accessible? This is often the biggest bottleneck.

- Pilot and Iterate: Start small, learn fast. Deploy a pilot AI project, measure its performance against your KPIs, gather feedback, and iterate.

- Build Your Team & Toolset: Invest in the right talent and the appropriate MLOps tools to support continuous monitoring and improvement.

- Seek Expert Guidance: Don’t hesitate to leverage the expertise of firms like ChatBench.org™ or our recommended partners like Acacia Advisors. We’ve walked this path countless times and can help you avoid common pitfalls and accelerate your journey.

The future of business is intertwined with AI. Those who master the art of measuring and optimizing their AI initiatives will be the ones who lead their industries. Are you ready to take the leap?

🔗 Quick Links to Essential AI KPI Resources

We know you’re eager to dive deeper! Here’s a curated list of essential resources to help you continue your journey in mastering AI KPIs and performance measurement.

- ChatBench.org™ AI Business Applications: https://www.chatbench.org/category/ai-business-applications/

- ChatBench.org™ AI News: https://www.chatbench.org/category/ai-news/

- ChatBench.org™ AI Infrastructure: https://www.chatbench.org/category/ai-infrastructure/

- What are the key benchmarks for evaluating AI model performance?: https://www.chatbench.org/what-are-the-key-benchmarks-for-evaluating-ai-model-performance/

- Acacia Advisors – Measuring Success: Key Metrics and KPIs for AI Initiatives: https://www.chooseacacia.com/measuring-success-key-metrics-and-kpis-for-ai-initiatives/

- MIT Sloan – Build Better KPIs with Artificial Intelligence: https://mitsloan.mit.edu/ideas-made-to-matter/build-better-kpis-artificial-intelligence

- Sloan Review – Improve Key Performance Indicators with AI: https://sloanreview.mit.edu/article/improve-key-performance-indicators-with-ai/

- IBM’s Explainable AI (XAI) Overview: https://www.ibm.com/watson/explainable-ai

- European Union’s AI Act: https://digital-strategy.ec.europa.eu/en/policies/artificial-intelligence-act

- Towards Data Science – Understanding Precision, Recall, and F1 Score: https://towardsdatascience.com/understanding-precision-recall-and-f1-score-in-machine-learning-b03a45c091b

🛠️ AI Solutions Tailored for KPI Optimization

Looking for the right tools to put these KPI strategies into action? Here are some leading platforms and services that can help you build, deploy, and monitor your AI models with KPI optimization in mind.

- Cloud AI Platforms:

- AWS SageMaker: Amazon.com AWS SageMaker

- Google Cloud AI Platform / Vertex AI: Google Cloud AI Platform

- Microsoft Azure Machine Learning: Microsoft Azure Machine Learning

- MLOps & Monitoring Tools:

- Datadog: Datadog Official Website

- Weights & Biases: Weights & Biases Official Website

- Arize AI: Arize AI Official Website

- Fiddler AI: Fiddler AI Official Website

- Prometheus: Prometheus Official Website

- Grafana: Grafana Official Website

- MLflow: MLflow Official Website

- Deepchecks: Deepchecks Official Website

- Evidently AI: Evidently AI Official Website

- Cloud Computing Resources (for deployment & training):

- DigitalOcean: DigitalOcean Official Website

- Paperspace: Paperspace Official Website

- RunPod: RunPod Official Website

👥 About ChatBench.org™: Your AI Performance Gurus

We are ChatBench.org™ – a passionate team of AI researchers and machine-learning engineers dedicated to Turning AI Insight into Competitive Edge. Our mission is to demystify the complex world of Artificial Intelligence, providing you with engaging, entertaining, and deeply informative content based on our hands-on experience and cutting-edge research.

We believe that AI isn’t just about algorithms; it’s about measurable impact, strategic advantage, and real-world solutions. From optimizing model performance to quantifying ROI and navigating ethical considerations, we’re here to guide you every step of the way. We’re not just talking about AI; we’re building it, measuring it, and helping businesses like yours succeed with it. Join our community and let’s unlock the full potential of AI together!

📞 Contact Us: Let’s Solve Your AI KPI Challenges Together

Got a burning question about AI KPIs? Struggling to define the right metrics for your next big AI project? Or perhaps you’re facing challenges in measuring the true ROI of your existing AI solutions?

Don’t go it alone! Our team of experts at ChatBench.org™ is always ready to lend an ear, share insights, and help you navigate the complexities of AI performance measurement. We thrive on solving real-world problems and turning those challenges into opportunities for growth.

Reach out to us today! Let’s discuss your specific needs and explore how we can help you transform your AI initiatives from promising experiments into undeniable business successes.

Contact ChatBench.org™: https://www.chatbench.org/

We look forward to hearing from you and helping you master your AI journey!

🏁 Conclusion: Mastering AI KPIs for Unstoppable Success

Phew! We’ve journeyed through the intricate landscape of Key Performance Indicators for Artificial Intelligence, uncovering what truly matters when it comes to measuring AI success. From the foundational metrics like accuracy and recall to the strategic KPIs that tie AI performance directly to business outcomes, it’s clear that effective AI measurement is both an art and a science.

Remember the unresolved question we posed early on: How do you prove your AI investment is paying off? Now you know — it’s about selecting the right KPIs, aligning them with your business goals, continuously monitoring performance, and quantifying ROI with rigor and clarity. AI isn’t a magic wand; it’s a powerful tool that demands thoughtful measurement and management to unlock its full potential.

We also explored the challenges — from data quality nightmares to model drift and the elusive nature of intangible benefits — and shared best practices and tools that can help you navigate these hurdles confidently. Whether you’re a startup launching your first AI pilot or an enterprise scaling complex AI systems, mastering KPIs is your ticket to sustained success.

Finally, partnering with experts like Acacia Advisors or leveraging the insights and frameworks from ChatBench.org™ can accelerate your journey, ensuring your AI initiatives deliver measurable, meaningful value.

Our confident recommendation: Don’t just build AI — measure it, manage it, and evolve it relentlessly. Your KPIs are your compass in the AI wilderness. Use them wisely, and you’ll turn AI insight into a formidable competitive edge.

🔍 Recommended Links for Deep Dives on AI KPIs

Looking to equip yourself with the best tools and knowledge? Here are some top picks to get you started:

- Cloud AI Platforms:
  - AWS SageMaker
  - Google Cloud AI Platform
  - Microsoft Azure Machine Learning

- MLOps & Monitoring Tools:
  - Datadog
  - Weights & Biases
  - Arize AI
  - Fiddler AI

- Cloud Computing Resources:
  - DigitalOcean
  - Paperspace
  - RunPod

- Books on AI Measurement and Strategy:
  - “Measure What Matters: How Google, Bono, and the Gates Foundation Rock the World with OKRs” by John Doerr
  - “Artificial Intelligence: A Guide for Thinking Humans” by Melanie Mitchell
  - “Prediction Machines: The Simple Economics of Artificial Intelligence” by Ajay Agrawal, Joshua Gans, and Avi Goldfarb

❓ Frequently Asked Questions About AI Performance Indicators

How can companies use Key Performance Indicators to compare the performance of different AI models and algorithms, and make data-driven decisions about their AI investments?

Companies leverage KPIs such as accuracy, precision, recall, latency, and business impact metrics to create a standardized evaluation framework. By applying these KPIs consistently across models, they can objectively compare performance. For example, a model with higher precision but slower response time might be preferred for fraud detection, whereas a faster model with slightly lower accuracy might suit real-time recommendations. Incorporating business KPIs like ROI and user engagement ensures decisions are aligned with strategic goals, not just technical specs. This data-driven approach enables informed investment choices, prioritizing models that deliver the best balance of performance, cost, and impact.
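To make the fraud-detection versus recommendations trade-off concrete, here is a minimal Python sketch, assuming you already have offline evaluation results for each candidate model. The `ModelReport` fields, the `select_model` helper, the weights, and every number are hypothetical illustrations, not output from any real system. The sketch applies a hard latency budget first and then ranks the surviving models by a business-weighted score.

```python
from dataclasses import dataclass


@dataclass
class ModelReport:
    name: str
    precision: float       # share of flagged cases that were truly positive
    recall: float          # share of true positives that were flagged
    p95_latency_ms: float  # 95th-percentile response time in milliseconds
    monthly_cost: float    # serving cost per month (any currency)


def select_model(reports, latency_budget_ms, weights):
    """Drop models that miss the latency budget, then rank the rest by a weighted score.

    `weights` holds business-chosen importances for "precision", "recall" and "cost".
    Cost is normalized to [0, 1] against the most expensive eligible model and subtracted.
    Returns None when no model meets the latency budget.
    """
    eligible = [r for r in reports if r.p95_latency_ms <= latency_budget_ms]
    if not eligible:
        return None
    max_cost = max(r.monthly_cost for r in eligible)

    def score(r):
        cost_penalty = r.monthly_cost / max_cost if max_cost > 0 else 0.0
        return (weights["precision"] * r.precision
                + weights["recall"] * r.recall
                - weights["cost"] * cost_penalty)

    return max(eligible, key=score)


# Hypothetical evaluation results for two candidate models.
candidates = [
    ModelReport("gradient_boosting", precision=0.95, recall=0.82, p95_latency_ms=180, monthly_cost=900),
    ModelReport("logistic_regression", precision=0.86, recall=0.78, p95_latency_ms=15, monthly_cost=600),
]

# Fraud detection: generous latency budget, precision weighted most heavily.
fraud_pick = select_model(candidates, 500, {"precision": 0.6, "recall": 0.3, "cost": 0.1})
# Real-time recommendations: a 50 ms budget rules out the slower model.
recs_pick = select_model(candidates, 50, {"precision": 0.3, "recall": 0.3, "cost": 0.4})
print(fraud_pick.name)  # gradient_boosting
print(recs_pick.name)   # logistic_regression
```

Treating latency as a hard constraint rather than one more weighted term is a deliberate design choice here: it stops a model that is too slow for the use case from winning on accuracy alone. The weights themselves should come from the business owners of each use case.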

What role do Key Performance Indicators play in ensuring AI solutions are aligned with overall business objectives and strategic goals?

KPIs act as the bridge between AI technology and business strategy. They translate abstract business goals into measurable targets that AI initiatives can aim for. For instance, if a business goal is to improve customer satisfaction, KPIs might include chatbot resolution rate and customer satisfaction scores. This alignment ensures AI projects focus on delivering tangible value rather than just technical achievements. Regular KPI reviews also enable course correction, keeping AI efforts tightly coupled with evolving business priorities.
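As a small illustration of turning a goal like "improve customer satisfaction" into numbers, the sketch below computes a chatbot resolution rate and a CSAT score. It assumes a hypothetical log schema in which each conversation records whether it was escalated to a human, and it uses one common CSAT convention (the share of 1 to 5 ratings that are 4 or 5); your survey tool may define CSAT differently.

```python
def resolution_rate(conversations):
    """Share of chatbot conversations closed without escalation to a human agent.

    Assumes each conversation is a dict with a boolean "escalated" field.
    """
    if not conversations:
        return 0.0
    resolved = sum(1 for c in conversations if not c["escalated"])
    return resolved / len(conversations)


def csat_percent(ratings, satisfied_threshold=4):
    """CSAT as the percentage of 1-5 survey ratings at or above the threshold."""
    if not ratings:
        return 0.0
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100.0 * satisfied / len(ratings)


# Hypothetical data for one reporting period.
conversations = [{"escalated": False}, {"escalated": False}, {"escalated": True}, {"escalated": False}]
ratings = [5, 4, 3, 5, 2]
print(resolution_rate(conversations))  # 0.75
print(csat_percent(ratings))           # 60.0
```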

How can organizations effectively track and evaluate the performance of their AI systems to drive continuous improvement?

Effective tracking requires a comprehensive monitoring framework that covers technical, operational, and business KPIs. Organizations should implement automated monitoring tools (e.g., Datadog, Arize AI) to detect model drift, latency spikes, or data quality issues in real time. Coupling this with periodic business impact assessments (e.g., ROI analysis, user feedback) creates a feedback loop for continuous improvement. Incorporating MLOps best practices such as automated retraining, A/B testing, and version control ensures models evolve with changing data and business needs.
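Tools like Datadog and Arize AI provide drift detection out of the box; the sketch below does not use their APIs. It is a library-free illustration of one common drift metric, the Population Stability Index (PSI), which compares how a feature's values are distributed in training data versus production. The equal-width binning, the 0.25 alert threshold (a widely quoted rule of thumb), and the sample data are all assumptions you would tune for your own features.

```python
import math


def population_stability_index(reference, live, bins=10):
    """Population Stability Index (PSI) between a reference sample and a live sample.

    Bin edges are equal-width over the reference range; live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at a tiny proportion so the log term stays finite.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((live_share - ref_share) * math.log(live_share / ref_share)
               for ref_share, live_share in zip(ref_p, live_p))


PSI_ALERT = 0.25  # common rule of thumb; tune per feature and use case

reference = [i / 100 for i in range(100)]           # hypothetical training-time feature values
live_shifted = [0.5 + i / 200 for i in range(100)]  # hypothetical production values, shifted upward

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, live_shifted)
if psi_shifted > PSI_ALERT:
    print(f"Drift alert: PSI={psi_shifted:.2f}")
```

In a monitoring job you would compute PSI per feature on a schedule and route anything above the threshold to your alerting or retraining pipeline.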

What are the most important Key Performance Indicators for measuring the success of AI initiatives in a business setting?

While KPIs vary by use case, the most critical ones typically include:

- Technical KPIs: Accuracy, precision, recall, F1 score, latency, throughput.

- Operational KPIs: Model stability, data quality, resource utilization, uptime.

- Business KPIs: ROI, cost savings, revenue growth, customer satisfaction, user adoption rates.

Together, these KPIs provide a holistic view of AI success, balancing technical excellence with real-world impact.
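For the technical KPIs in that list, here is a minimal Python sketch, assuming binary labels (1 for the positive class) and a list of per-request latencies collected over a fixed window. The function names and sample values are hypothetical. In practice you would likely use a library such as scikit-learn for the classification metrics and compute latency percentiles over far more requests than this toy example.

```python
import statistics


def classification_kpis(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision, "recall": recall, "f1": f1}


def serving_kpis(latencies_ms, window_seconds):
    """p95 latency and throughput (requests per second) for one observation window."""
    # "inclusive" interpolation keeps the percentile within the observed range.
    p95 = statistics.quantiles(latencies_ms, n=100, method="inclusive")[94]
    return {"p95_latency_ms": p95, "throughput_rps": len(latencies_ms) / window_seconds}


y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
print(classification_kpis(y_true, y_pred))
# {'accuracy': 0.75, 'precision': 0.75, 'recall': 0.75, 'f1': 0.75}
print(serving_kpis([120, 95, 110, 300, 105, 98, 130, 101], window_seconds=4))
# {'p95_latency_ms': 240.5, 'throughput_rps': 2.0}
```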

How can AI performance indicators drive competitive advantage?

AI KPIs enable organizations to measure, optimize, and scale AI initiatives effectively, ensuring resources are focused on high-impact projects. By continuously monitoring KPIs, companies can rapidly identify underperforming models, adapt to market changes, and innovate faster than competitors. Moreover, KPIs related to ethical AI and compliance build trust with customers and regulators, further strengthening competitive positioning.

Which metrics best evaluate the impact of AI on operational efficiency?

Metrics such as latency, throughput, automation rate, error reduction, and resource utilization directly reflect operational efficiency. For example, reduced average handle time in a customer service chatbot or decreased unplanned downtime in predictive maintenance systems indicate improved efficiency. Tracking these KPIs helps quantify how AI streamlines processes and reduces costs.
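The arithmetic behind these efficiency KPIs is simple but easy to compute inconsistently across teams, so it can help to pin it down in code. This sketch compares a hypothetical pre-AI baseline month with a post-AI month for a support desk. The field names, the per-minute agent cost, and the AI running cost are all assumptions, and the ROI shown is the simple form (savings minus cost, divided by cost), which ignores one-off build costs.

```python
def efficiency_kpis(baseline, current, cost_per_agent_minute, ai_monthly_cost):
    """Compare a pre-AI baseline month with a post-AI month of support tickets.

    Both periods are dicts with hypothetical keys: "tickets", "automated"
    (tickets closed without a human), "agent_minutes" (total human handle time)
    and "errors".
    """
    base_aht = baseline["agent_minutes"] / baseline["tickets"]  # average handle time
    cur_aht = current["agent_minutes"] / current["tickets"]
    base_err = baseline["errors"] / baseline["tickets"]
    cur_err = current["errors"] / current["tickets"]
    minutes_saved = (base_aht - cur_aht) * current["tickets"]
    savings = minutes_saved * cost_per_agent_minute
    return {
        "automation_rate": current["automated"] / current["tickets"],
        "aht_reduction_pct": 100 * (base_aht - cur_aht) / base_aht,
        "error_reduction_pct": 100 * (base_err - cur_err) / base_err,
        "monthly_savings": savings,
        # Simple ROI: net benefit divided by what the AI system costs to run.
        "roi": (savings - ai_monthly_cost) / ai_monthly_cost,
    }


# Hypothetical figures for one month before and one month after deployment.
baseline = {"tickets": 1000, "automated": 0, "agent_minutes": 8000, "errors": 50}
current = {"tickets": 1000, "automated": 300, "agent_minutes": 5600, "errors": 30}
kpis = efficiency_kpis(baseline, current, cost_per_agent_minute=0.75, ai_monthly_cost=1200)
for name, value in kpis.items():
    print(f"{name}: {value:.2f}")
```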

How do you align AI KPIs with overall business goals?

Alignment starts with clear communication between business leaders and AI teams to define strategic objectives. KPIs should be selected based on their direct relevance to these objectives, ensuring every metric measured has a purpose. Using frameworks like SMART goals and involving stakeholders in KPI definition fosters alignment. Regular KPI reviews and adjustments keep AI initiatives responsive to changing business landscapes.
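One lightweight way to keep "every metric has a purpose" honest is to record each KPI next to the business objective it serves, its target, and which direction counts as better, then check observed values against those targets at each review. The `KpiTarget` structure, the `review` helper, the targets, and the observed numbers below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KpiTarget:
    name: str
    objective: str                # business objective this KPI serves
    target: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def review(kpis, observed):
    """Return the names of KPIs that missed their target or have no measurement."""
    misses = []
    for kpi in kpis:
        value = observed.get(kpi.name)
        if value is None or not kpi.is_met(value):
            misses.append(kpi.name)
    return misses


kpis = [
    KpiTarget("chatbot_resolution_rate", "Improve customer satisfaction", 0.70),
    KpiTarget("csat_percent", "Improve customer satisfaction", 80.0),
    KpiTarget("p95_latency_ms", "Keep support responsive", 300.0, higher_is_better=False),
]
observed = {"chatbot_resolution_rate": 0.75, "csat_percent": 60.0, "p95_latency_ms": 240.5}
print(review(kpis, observed))  # ['csat_percent']
```

KPIs with no recorded measurement are reported as misses on purpose: a metric nobody is collecting cannot be reviewed.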

📚 Reference Links and Further Reading

- Acacia Advisors: Measuring Success – Key Metrics and KPIs for AI Initiatives
  https://www.chooseacacia.com/measuring-success-key-metrics-and-kpis-for-ai-initiatives/

- MIT Sloan: Build Better KPIs with Artificial Intelligence
  https://mitsloan.mit.edu/ideas-made-to-matter/build-better-kpis-artificial-intelligence

- MIT Sloan Review: Improve Key Performance Indicators with AI
  https://sloanreview.mit.edu/article/improve-key-performance-indicators-with-ai/

- IBM Explainable AI (XAI)
  https://www.ibm.com/watson/explainable-ai

- European Union AI Act
  https://digital-strategy.ec.europa.eu/en/policies/artificial-intelligence-act

- Towards Data Science: Understanding Precision, Recall, and F1 Score in Machine Learning
  https://towardsdatascience.com/understanding-precision-recall-and-f1-score-in-machine-learning-b03a45c091b

- Amazon AWS SageMaker
  https://aws.amazon.com/sagemaker/?tag=bestbrands0a9-20

- Google Cloud AI Platform
  https://cloud.google.com/ai-platform

- Microsoft Azure Machine Learning
  https://azure.microsoft.com/en-us/services/machine-learning/

- Datadog
  https://www.datadoghq.com/

- Weights & Biases
  https://wandb.ai/

- Arize AI
  https://www.arize.com/

- Fiddler AI
  https://www.fiddler.ai/

- DigitalOcean
  https://www.digitalocean.com/

- Paperspace
  https://www.paperspace.com/

- RunPod
  https://www.runpod.io/

We hope this comprehensive guide empowers you to master AI KPIs and unlock the full potential of your AI initiatives. Ready to take the next step? Dive into the resources above or reach out to us at ChatBench.org™ for expert guidance tailored to your unique AI journey!