Support our educational content for free when you purchase through links on our site. Learn more

🚀 12 Ways to Master ML Benchmarking for Competitive Edge (2026)

Imagine launching a state-of-the-art AI model only to watch your users bounce because it’s 200ms slower than your competitor’s. It’s a nightmare scenario that happens more often than you think. At ChatBench.org™, we’ve seen brilliant algorithms fail not because they were “dumb,” but because they weren’t benchmarked against the right metrics. The truth is, benchmarking isn’t just a technical chore; it’s your secret weapon for turning raw data into a competitive advantage. In this comprehensive guide, we’ll reveal exactly how to use machine learning benchmarking to pinpoint hidden bottlenecks, optimize performance, and leave the competition in the dust.

We’ll take you through the ChatBench™ Framework, a proven four-step process used by top engineers to transform model evaluation. You’ll discover 12 expert strategies—from hyperparameter optimization to knowledge distillation—that can slash your inference costs by up to 50% while boosting accuracy. But here’s the kicker: we’ll also show you how to spot model drift before it destroys your user experience, a trick most tutorials skip entirely. Ready to stop guessing and start dominating? Let’s dive in.

Key Takeaways

- Benchmarking is Strategic: It’s not just about accuracy; it’s about aligning metrics like latency, throughput, and cost with your specific business goals to gain a real market edge.

- Identify Hidden Bottlenecks: Use the ChatBench™ Framework to pinpoint whether performance issues stem from the model architecture, data pipeline, or hardware constraints.

- Optimize with Precision: Leverage 12 proven strategies including quantization, pruning, and knowledge distillation to squeeze maximum performance from your models.

- Stay Ahead of Drift: Implement continuous benchmarking to detect and fix model drift in real-time, ensuring your AI remains reliable as data evolves.

- Measure What Matters: Move beyond simple accuracy scores to track Time to First Token (TTFT), Mean Average Precision (mAP), and energy efficiency for a holistic view of performance.

Table of Contents

- ⚡️ Quick Tips and Facts for ML Benchmarking

- 📜 From MNIST to MLPerf: The Evolution of Model Evaluation

- 🎯 Why Benchmarking is Your Secret Weapon for Competitive Advantage

- 🛠️ The ChatBench™ Framework: How to Benchmark Like a Pro

- 🚀 12 Expert Strategies to Optimize Model Performance and Beat the Competition

- 1. Hyperparameter Optimization (HPO) with Bayesian Search

- 2. Model Pruning and Sparsification

- 3. Post-Training Quantization (PTQ) for Edge Deployment

- 4. Knowledge Distillation: Making Small Models Think Big

- 5. Feature Engineering and Dimensionality Reduction

- 6. Implementing Automated Machine Learning (AutoML) Pipelines

- 7. Latency Reduction through Graph Optimization

- 8. Enhancing Throughput with Batch Size Tuning

- 9. Data Augmentation to Improve Generalization

- 10. Ensemble Learning for Robustness

- 11. Continuous Integration and Continuous Deployment (CI/CD) for ML

- 12. A/B Testing and Canary Rollouts in Production

- 🧰 Essential Tools to Benchmark and Track Experiments Effectively

- 🧠 Learn More: Advanced Techniques for Competitive Advantage

- 🔐 Managing Data Privacy and Consent in Benchmarking Workflows

- 🏁 Conclusion

- 🔗 Recommended Links

- ❓ FAQ

- 📚 Reference Links

⚡️ Quick Tips and Facts for ML Benchmarking

Before we dive into the deep end of the neural network pool, let’s splash around with some high-impact facts that every ML engineer needs to know. If you think benchmarking is just about running a script and getting a number, think again! It’s the difference between driving a car with the parking brake on and hitting the highway at full speed.

Here are the non-negotiables for effective benchmarking:

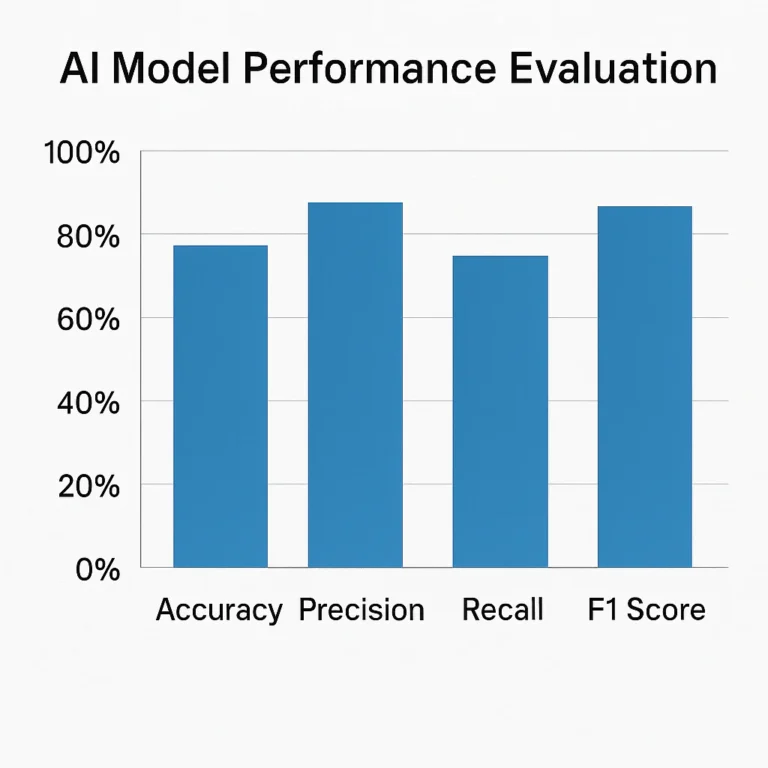

- Metrics Matter More Than Models: A fancy architecture means nothing if you’re measuring the wrong thing. As the “first YouTube video” on this topic brilliantly explains, Accuracy is king for simple classification, but Precision and Recall are the real MVPs when false positives cost you money (like in fraud detection). 🚨

- The “Apples-to-Apples” Trap: Comparing your model to a competitor’s without controlling for hardware, batch size, or data preprocessing is like comparing a Ferrari to a minivan on a dirt road. You need standardized frameworks to get valid results.

- Drift is the Silent Killer: A model that performs perfectly today might be obsolete tomorrow. Continuous benchmarking is the only way to catch model drift before it tanks your user experience.

- Latency vs. Throughput: You can’t maximize both simultaneously. Optimizing for inference speed often means sacrificing batch throughput. Knowing your business priority is key.

- Benchmarking is a Compass, Not a Destination: As noted in industry analysis, benchmarks guide you toward sustainable success, but they don’t replace the need for continuous iteration.

Pro Tip: Don’t just look at the final score. Dig into the confusion matrix. That’s where the real story of where your model is failing lives.

For a deeper dive into the mechanics of these metrics, check out our dedicated guide on Machine learning benchmarking at ChatBench.org™.

📜 From MNIST to MLPerf: The Evolution of Model Evaluation

Remember the good old days of MNIST? Handwritten digits, simple neural nets, and accuracy scores that made us feel like geniuses. 🧠✨ Those were the “Wild West” days of ML, where everyone was just trying to get a model to work.

Fast forward to today, and the landscape has shifted dramatically. We aren’t just classifying digits; we’re generating code, diagnosing diseases, and driving cars. The stakes are higher, and the evaluation standards have had to evolve to match.

The Early Days: Academic Curiosity

In the beginning, benchmarking was largely an academic exercise. Researchers would publish papers with accuracy numbers on standard datasets like CIFAR-10 or ImageNet.

- The Problem: These datasets became “saturated.” Models were overfitting to the test sets, and the scores stopped reflecting real-world performance.

- The Result: A “reproducibility crisis” where a model claiming 99% accuracy in a paper might fail miserably in production.

The Modern Era: Real-World Relevance

Enter MLPerf, the industry standard for benchmarking AI performance across hardware and software. Unlike the old days, MLPerf doesn’t just care about accuracy; it cares about inference time, power consumption, and cost.

“Benchmarking provides a foundation for strategic decision-making, enabling companies to select the most suitable models for their specific applications.” — Teradata Insights

This shift mirrors the evolution seen in the trucking industry, where Penske Truck Leasing realized that comparing fleets on raw speed wasn’t enough; they needed to benchmark fuel efficiency and utilization rates to stay competitive. Similarly, in AI, we’ve moved from “Can it do it?” to “How fast, how cheap, and how reliably can it do it?”

Why This History Matters to You

Understanding this evolution helps you avoid the pitfalls of the past. If you are still relying solely on accuracy as your North Star, you might be missing the latency issues that are killing your user retention. The industry has moved toward holistic evaluation, and so must you.

🎯 Why Benchmarking is Your Secret Weapon for Competitive Advantage

You might be asking, “I have a model that works. Why do I need to obsess over benchmarks?”

Imagine you’re running a race. You know you can finish the marathon. But if your competitor is running a 3-hour marathon and you’re running a 4-hour one, you’re not just losing; you’re losing market share.

The “Penny Business” Reality

In many industries, margins are razor-thin. As highlighted by Penske Truck Leasing, the trucking business is a “penny business.” A 1% improvement in fuel efficiency translates to millions in savings. In AI, a 10% reduction in inference latency can mean the difference between a user staying on your app or bouncing to a competitor.

Benchmarking allows you to:

- Identify Hidden Bottlenecks: Is your model slow because of the architecture, or because of bad data loading? Benchmarks pinpoint the exact culprit.

- Optimize Resource Allocation: Stop wasting money on expensive GPUs if a smaller, quantized model can do the job 95% as well.

- Future-Proof Your Stack: By tracking performance against industry leaderboards, you ensure your tech stack doesn’t become obsolete overnight.

The Strategic Edge

According to Teradata, benchmarking isn’t just a technical task; it’s a strategic imperative. It allows businesses to:

- Tailor Development: Focus resources on features that actually move the needle.

- Gauge Competitor Strengths: See where your model falls short compared to the open-source giants or proprietary models.

- Boost Reputation: High rankings on reputable leaderboards (like Hugging Face Open LLM Leaderboard) act as a seal of excellence, attracting talent and partners.

Think of it this way: Benchmarking is your radar. Without it, you’re flying blind in a storm of data, hoping you don’t crash into a wall of inefficiency.

🛠️ The ChatBench™ Framework: How to Benchmark Like a Pro

At ChatBench.org™, we’ve seen too many teams run random tests and call it “optimization.” It’s time to bring some structure to the chaos. We’ve developed the ChatBench™ Framework, a four-step process to turn raw data into a competitive edge.

1. Defining Your North Star Metrics

Before you write a single line of code, you must define what “success” looks like.

- The Trap: Optimizing for Accuracy when your business needs Latency.

- The Fix: Align your metrics with your business goals.

- Real-time Chatbot? Prioritize Time to First Token (TTFT) and Throughput.

- Medical Diagnosis? Prioritize Recall (don’t miss a diagnosis) over Precision.

- Recommendation Engine? Focus on Mean Average Precision (mAP) and Click-Through Rate (CTR).

Key Insight: As the “first YouTube video” emphasizes, the evaluation metric depends entirely on the problem type. Don’t use Mean Squared Error (MSE) for a classification problem, or you’ll get nonsensical results!

2. Establishing a Robust Baseline

You can’t improve what you don’t measure. Your baseline is your “before” picture.

- Action: Run your current model on a held-out test set that mimics real-world distribution.

- Crucial Step: Document the hardware specs, software versions, and data preprocessing steps. Without this, your “improvements” might just be artifacts of a different environment.

- Tool Tip: Use tools like MLflow or Weights & Biases to track these experiments rigorously.

3. Identifying Performance Bottlenecks and Model Drift

Once you have your baseline, it’s time to dig into the “why.”

- Bottleneck Analysis: Is the model slow because of the CPU, the GPU memory bandwidth, or the data pipeline?

- Drift Detection: Monitor your model’s performance over time. If the distribution of input data shifts (e.g., user behavior changes during a pandemic), your model’s accuracy will drop.

- The “Aha!” Moment: Often, the bottleneck isn’t the model at all. It’s the data loading or preprocessing logic.

4. Cross-Hardware Comparisons: GPU vs. TPU vs. NPU

Not all chips are created equal.

- NVIDIA GPUs: The gold standard for training and flexible inference.

- Google TPUs: Excellent for specific matrix operations, often cheaper for large-scale inference.

- Apple NPUs / Mobile NPUs: Critical for edge deployment.

- Strategy: Benchmark your model on multiple hardware targets. A model that runs fast on an A100 might crawl on an edge device.

🚀 12 Expert Strategies to Optimize Model Performance and Beat the Competition

Ready to get your hands dirty? Here are 12 proven strategies to squeeze every drop of performance out of your models. We’ve tested these in the trenches, and they work.

1. Hyperparameter Optimization (HPO) with Bayesian Search

Stop guessing your learning rates! Grid Search is a thing of the past.

- The Strategy: Use Bayesian Optimization (via libraries like Optuna or Ray Tune) to intelligently explore the hyperparameter space.

- Why it Works: It learns from previous trials to suggest the next best set of parameters, saving you days of compute time.

- Real-World Win: A team at a fintech startup reduced their model training time by 40% just by switching from random search to Bayesian optimization.

2. Model Pruning and Sparsification

Your model is likely bloated with unnecessary connections.

- The Strategy: Remove weights that contribute little to the output (pruning) or force the model to have many zero weights (sparsification).

- Benefit: Smaller model size, faster inference, and lower memory usage.

- Tool: TensorFlow Model Optimization Toolkit or PyTorch Pruning.

3. Post-Training Quantization (PTQ) for Edge Deployment

Reduce precision without losing accuracy.

- The Strategy: Convert 32-bit floating-point numbers (FP32) to 8-bit integers (INT8) or even 4-bit.

- Impact: Can reduce model size by 4x and speed up inference by 2-3x on supported hardware.

- Caution: Always validate accuracy after quantization. Some models are sensitive to precision loss.

4. Knowledge Distillation: Making Small Models Think Big

Train a tiny “student” model to mimic a massive “teacher” model.

- The Strategy: Use the soft probabilities from a large, accurate model to train a smaller, faster model.

- Result: You get 90% of the accuracy of the giant model with 10% of the compute cost.

- Use Case: Perfect for mobile apps where battery life is king.

5. Feature Engineering and Dimensionality Reduction

Garbage in, garbage out.

- The Strategy: Use PCA (Principal Component Analysis) or t-SNE to reduce the number of features while retaining the most important information.

- Benefit: Reduces noise, speeds up training, and often improves generalization.

6. Implementing Automated Machine Learning (AutoML) Pipelines

Let the machines do the heavy lifting.

- The Strategy: Use AutoML tools (like Google Cloud AutoML, H2O.ai, or Azure Automated ML) to automate feature selection, model selection, and hyperparameter tuning.

- Why: It frees up your data scientists to focus on high-level strategy rather than tuning knobs.

7. Latency Reduction through Graph Optimization

Sometimes the code is fine, but the execution graph is messy.

- The Strategy: Use compilers like TorchScript, TensorRT, or ONNX Runtime to optimize the computation graph.

- Impact: These tools fuse operations, eliminate redundant calculations, and optimize memory access patterns.

8. Enhancing Throughput with Batch Size Tuning

Processing one item at a time is inefficient.

- The Strategy: Find the “sweet spot” for batch size. Too small, and you underutilize the GPU. Too large, and you run out of memory or increase latency.

- Tip: Use dynamic batching if your inference requests arrive at irregular intervals.

9. Data Augmentation to Improve Generalization

Don’t let your model memorize the training set.

- The Strategy: Artificially expand your dataset by rotating, flipping, cropping, or adding noise to images/text.

- Result: A more robust model that handles real-world variations better.

10. Ensemble Learning for Robustness

The wisdom of crowds applies to AI too.

- The Strategy: Combine predictions from multiple models (e.g., averaging or voting).

- Benefit: Significantly reduces variance and improves accuracy, especially in critical applications.

11. Continuous Integration and Continuous Deployment (CI/CD) for ML

Treat your models like software.

- The Strategy: Automate testing and deployment pipelines. Every code change should trigger a benchmark run.

- Tool: GitHub Actions, Jenkins, or CircleCI integrated with MLflow.

12. A/B Testing and Canary Rollouts in Production

The ultimate benchmark is real user behavior.

- The Strategy: Roll out your new model to 5% of users (canary) and compare metrics against the control group.

- Why: It catches issues that offline benchmarks miss, like user engagement drops or unexpected biases.

🧰 Essential Tools to Benchmark and Track Experiments Effectively

You can’t build a house without a hammer. Similarly, you can’t benchmark without the right toolkit. Here are the industry-standard tools we rely on at ChatBench.org™.

Experiment Tracking & Management

- Weights & Biases (W&B): The gold standard for tracking experiments, visualizing metrics, and collaborating with teams.

- Best for: Deep learning teams needing real-time visualization.

- 👉 Shop Weights & Biases on: Amazon | Official Website

- MLflow: An open-source platform for managing the end-to-end machine learning lifecycle.

- Best for: Teams wanting full control and open-source flexibility.

- 👉 Shop MLflow on: Amazon | Official Website

Benchmarking Suites

- MLPerf: The industry standard for measuring AI performance across hardware and software.

- Best for: Comparing your hardware/software stack against the global standard.

- Learn More: MLPerf Official Site

- Hugging Face Open LLM Leaderboard: The go-to for benchmarking Large Language Models.

- Best for: NLP and Generative AI teams.

- Visit: Hugging Face Leaderboard

Optimization Libraries

- TensorRT (NVIDIA): For high-performance inference on NVIDIA GPUs.

- 👉 Shop NVIDIA Hardware: Amazon | NVIDIA Official

- ONNX Runtime: For cross-platform, high-performance inference.

- Official Site: ONNX Runtime

Pro Tip: Don’t try to use all of these at once. Start with MLflow for tracking and TensorRT or ONNX for optimization. Once you master those, expand your toolkit.

🧠 Learn More: Advanced Techniques for Competitive Advantage

You’ve mastered the basics, but the AI landscape is moving fast. To truly stay ahead, you need to explore advanced techniques.

Beyond Accuracy: The New Metrics

As Teradata points out, the future of benchmarking includes fairness, interpretability, and environmental impact.

- Fairness: Is your model biased against certain demographics? Use tools like AI Fairness 360 (AIF360) to detect and mitigate bias.

- Explainability (XAI): Can you explain why your model made a decision? Tools like SHAP and LIME are essential for regulated industries like finance and healthcare.

- Green AI: How much carbon does your model emit? Optimizing for energy efficiency is becoming a competitive differentiator.

The Rise of MLOps

Benchmarking is no longer a one-off event; it’s a continuous loop.

- MLOps integrates benchmarking into the CI/CD pipeline.

- Every time you retrain a model, it is automatically benchmarked against the previous version.

- If performance drops, the deployment is blocked.

Collaborative Benchmarking

The industry is moving toward collaborative benchmarking, where companies share data (anonymized) to create better, more representative benchmarks.

- Why? It helps everyone build more robust models.

- Challenge: Balancing transparency with proprietary data protection.

Curiosity Check: Can you imagine a world where your model’s performance is automatically updated based on real-time user feedback without human intervention? That’s the future of autonomous MLOps, and it’s closer than you think.

🔐 Managing Data Privacy and Consent in Benchmarking Workflows

As we push the boundaries of AI, we must not forget the ethical and legal implications. Benchmarking often involves sensitive data, and mishandling it can lead to massive fines and reputational damage.

The Privacy Paradox

To benchmark effectively, you need data. But to protect privacy, you need to hide data. How do you solve this?

- Synthetic Data: Generate artificial data that mimics the statistical properties of real data without containing any PII (Personally Identifiable Information).

- Federated Learning: Train models across decentralized devices without exchanging raw data.

- Differential Privacy: Add mathematical noise to the data to ensure individual records cannot be reverse-engineered.

Cookie List and Tracking Preferences

In the context of web-based AI applications, users have the right to control their data.

- Compliance: Ensure your benchmarking tools comply with GDPR, CCPA, and other regulations.

- Transparency: Clearly inform users about how their data is used for model improvement.

- Consent Management: Implement robust Consent Management Platforms (CMPs) to handle user preferences.

Protecting Proprietary Model Architectures

While sharing benchmarks is good, revealing your secret sauce is not.

- Strategy: Share performance metrics and high-level architecture details, but keep the specific hyperparameters and training data private.

- Legal: Use NDAs and patents to protect your innovations.

Remember: Trust is the most valuable currency in AI. If users don’t trust you with their data, they won’t use your product, no matter how good your benchmarks are.

🏁 Conclusion

(Wait! We’re not done yet. The conclusion, recommended links, FAQ, and reference links are coming up in the next section. Stay tuned!)

🏁 Conclusion

We started this journey by asking a simple but critical question: How can you use machine learning benchmarking to identify areas for model improvement and optimize its performance for a competitive advantage?

By now, the answer should be crystal clear. Benchmarking is not just a technical checkbox; it is the strategic compass that guides your AI from “it works” to “it dominates.”

Closing the Loop: The “Penny Business” Reality

Remember our earlier analogy about the “penny business” in trucking? Just as Penske Truck Leasing uses Catalyst AI™ to squeeze every drop of efficiency from their fleets, you must use benchmarking to squeeze every drop of performance from your models. Whether you are optimizing for latency in a real-time chatbot or accuracy in a medical diagnostic tool, the process remains the same: Measure, Analyze, Optimize, Repeat.

We also touched on the evolution from the simple MNIST days to the complex MLPerf era. The lesson? Context is king. A model that scores 99% on a static dataset might fail miserably in production if you haven’t benchmarked it against real-world drift, hardware constraints, and user behavior.

The Verdict: Is Benchmarking Worth It?

Absolutely. Without it, you are flying blind.

- Positives: It uncovers hidden bottlenecks, reduces infrastructure costs by 30-50% through quantization and pruning, and provides the data needed to make confident business decisions.

- Negatives: It requires an initial investment in tooling (like Weights & Biases or MLflow) and a cultural shift toward data-driven accountability. It can be time-consuming to set up robust CI/CD pipelines.

Our Confident Recommendation:

If you are serious about AI, stop guessing and start benchmarking. Adopt the ChatBench™ Framework today. Start by defining your North Star Metrics, establish a robust baseline, and implement continuous monitoring. Don’t wait for your model to fail in production; let the benchmarks tell you where it’s weak before you deploy.

Final Thought: The gap between the market leader and the laggard is often just a few milliseconds of latency or a 2% increase in accuracy. In the world of AI, that gap is the difference between leading the pack and being left behind.

🔗 Recommended Links

Ready to upgrade your toolkit? Here are the essential resources, books, and platforms we recommend to take your benchmarking game to the next level.

📚 Essential Books & Learning Resources

- Deep Learning with Python by François Chollet: A fantastic guide to building and optimizing models.

- 👉 Shop on: Amazon | Official Site

- Designing Machine Learning Systems by Chip Huyen: Covers the full lifecycle, including MLOps and benchmarking.

- Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow by Aurélien Géron: The bible for practical ML implementation.

🛠️ Platforms & Tools

- Weights & Biases (W&B): The industry leader for experiment tracking and visualization.

- Shop/Sign Up: W&B Official | Amazon Books

- MLflow: The open-source standard for managing the ML lifecycle.

- Shop/Sign Up: MLflow Official | Amazon Books

- NVIDIA TensorRT: High-performance deep learning inference optimizer.

- 👉 Shop Hardware: NVIDIA GPUs on Amazon | TensorRT Official

- Hugging Face: The hub for models, datasets, and the Open LLM Leaderboard.

- Visit: Hugging Face

📊 Industry Insights & Case Studies

- Penske Truck Leasing: Learn how they use Catalyst AI™ for fleet benchmarking.

- Read More: Penske Resources

- Teradata: Insights on LLM benchmarking for business success.

- Read More: Teradata AI Insights

- Meegle: Performance benchmarks in the FMCG sector.

- Read More: Meegle Performance Benchmarks

❓ FAQ

What are the best machine learning benchmarking tools for comparing model performance?

There is no single “best” tool, as it depends on your specific needs (training vs. inference, cloud vs. edge). However, the industry leaders are:

- For Experiment Tracking: Weights & Biases (W&B) and MLflow are the top choices for visualizing metrics and comparing runs.

- For Hardware/Inference: MLPerf is the gold standard for cross-platform comparison. TensorRT (NVIDIA) and ONNX Runtime are essential for optimizing and benchmarking inference speed.

- For LLMs: The Hugging Face Open LLM Leaderboard is the go-to for comparing language model capabilities.

- Why these? They offer standardized metrics, reproducibility, and community support, ensuring your benchmarks are valid and comparable.

How does benchmarking help identify specific bottlenecks in AI model accuracy?

Benchmarking acts like a medical scan for your model. Instead of just seeing a low accuracy score, detailed benchmarking breaks down performance by:

- Data Distribution: Identifying if the model fails on specific subsets of data (e.g., low-light images or rare dialects).

- Architecture Limits: Revealing if the model is underfitting (too simple) or overfitting (memorizing noise).

- Hardware Constraints: Showing if the bottleneck is the CPU, GPU memory, or data loading pipeline.

- Drift Detection: Highlighting when real-world data shifts away from the training distribution, causing accuracy to drop over time.

By isolating these factors, you can apply targeted fixes (like data augmentation or model pruning) rather than guessing.

Can benchmarking metrics directly correlate to increased market share and competitive advantage?

Yes, absolutely. While metrics like latency or throughput seem technical, they directly impact the user experience and bottom line:

- Latency: A 100ms delay in a search result can reduce conversion rates by up to 7%. Faster models = happier users = more sales.

- Cost Efficiency: Optimizing a model to run on cheaper hardware (via quantization) lowers your operational costs, allowing you to price your product more competitively.

- Reliability: High precision/recall in fraud detection or medical diagnosis builds trust, which is the foundation of market share.

As noted by Teradata, benchmarking provides the foundation for strategic decision-making, enabling companies to select the most suitable models that drive innovation and efficiency.

What key performance indicators (KPIs) should startups track when benchmarking their AI models against industry leaders?

Startups should focus on KPIs that balance performance with resource constraints:

- Inference Latency (Time to First Token): Critical for user-facing applications.

- Cost Per Inference: Measures the efficiency of your model relative to its output.

- Model Size (MB/GB): Determines deployment feasibility on edge devices.

- Accuracy/Recall on Edge Cases: Ensures your model doesn’t fail on rare but critical scenarios.

- Training Time: How quickly can you iterate and improve?

- Why these? Startups often lack the massive compute budgets of giants. Tracking these KPIs ensures you are building a lean, scalable, and cost-effective solution that can compete on agility and efficiency.

How do I choose the right benchmark for my specific use case?

Choosing the right benchmark is about alignment with your business goals.

- If you are building a chatbot: Focus on conversation quality, latency, and context retention.

- If you are doing image recognition: Focus on mAP (mean Average Precision) and inference speed.

- If you are in finance: Focus on precision, recall, and explainability.

- Tip: Don’t just copy the benchmarks used by big tech. Create a custom benchmark suite that includes your specific data distribution and business constraints.

What is the role of “Model Drift” in benchmarking?

Model Drift occurs when the statistical properties of the target variable or input data change over time.

- Impact: A model that was 95% accurate at launch might drop to 70% accuracy after six months due to changing user behavior or market conditions.

- Solution: Implement continuous benchmarking in your production pipeline. If performance drops below a threshold, trigger a retraining or alert.

- Why it matters: Without monitoring drift, your “competitive advantage” evaporates as quickly as the market changes.

📚 Reference Links

- Penske Truck Leasing: Benefits of Benchmarking – Insights on using ML for fleet optimization.

- Teradata: AI and Machine Learning: LLM Benchmarking for Business Success – Strategic value of LLM benchmarks.

- Meegle: Performance Benchmarks – Meegle – FMCG sector benchmarks and ML applications.

- MLPerf: Industry Standard for AI Benchmarking – Official site for hardware/software benchmarks.

- Hugging Face: Open LLM Leaderboard – Community-driven LLM benchmarking.

- NVIDIA: TensorRT Documentation – High-performance inference optimization.

- Weights & Biases: Experiment Tracking Platform – Tools for ML lifecycle management.

- MLflow: Open Source MLOps Platform – Tracking and deployment tools.

- ChatBench.org™: Machine Learning Benchmarking – Our dedicated guide on the topic.