
🧪 Evaluating AI Model Performance: The 12-Step Guide to Truth (2026)

We’ve all been there: you deploy a model that scores a perfect 99% on the test set, only to watch it crumble in production like a wet paper bag. Why? Because accuracy is a liar. In this comprehensive deep dive, we expose the hidden flaws in traditional benchmarks and reveal the 12 pro-level strategies used by top engineers to predict and explain model behavior before it costs you a dime. From the “stale” metrics of the past to the revolutionary ADeLe framework and GDPval benchmarks that measure real-world economic value, we’ll show you how to stop guessing and start knowing. By the end, you’ll understand exactly why your AI might be a “stochastic parrot” and how to build an evaluation pipeline that actually works.

Key Takeaways

- Accuracy is Deceptive: Relying solely on accuracy metrics can hide critical failures, especially in imbalanced datasets where False Negatives or False Positives carry high costs.

- Context is King: Modern evaluation must move beyond static tests to scaffolded reasoning and real-world task simulation, as highlighted by the GDPval study.

- The ADeLe Advantage: Adopt the 18-scale ability profile to predict model failure with 88% accuracy, moving from “what” the model does to “why” it does it.

- Continuous Monitoring: Data drift is inevitable; implement real-time monitoring tools like Fiddler AI or Weights & Biases to catch performance degradation the moment it happens.

- Human-in-the-Loop: The most reliable evaluation combines automated benchmarks with human expert comparison to validate economic value and safety.

Table of Contents

- ⚡️ Quick Tips and Facts

- 📜 The Evolution of Benchmarking: From Turing to Transformers

- 🎯 Why Accuracy Is a Liar: The Multi-Dimensional Nature of Performance

- 🛠️ The Essential Toolkit: Core Metrics Every Engineer Needs

- 🤖 Evaluating Large Language Models (LLMs) in the Real World

- 🥊 The Battle of the Titans: How GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro Stack Up

- 🕵️‍♂️ The Hallucination Hunt: Reliability and Safety Testing

- ⚡ Speed vs. Brains: Measuring Latency, Throughput, and Token Efficiency

- 🧪 12 Pro-Level Strategies for Predicting and Explaining Model Behavior

- 🏗️ Building Your Own Evaluation Pipeline: Top Tools and Frameworks

- 🔮 The Future of Evaluation: When AI Becomes the Judge

- 📝 Accessing the Research: Submission History and BibTeX Citations

- Conclusion

- Recommended Links

- FAQ

- Reference Links

⚡️ Quick Tips and Facts

Before we dive into the deep end of the neural network pool, let’s get our feet wet with some rapid-fire insights from the machine learning benchmarking front lines:

- Accuracy is deceptive: A model can be 99.9% accurate and still be useless if it fails to catch the 0.1% of cases that actually matter (like a rare disease or credit card fraud).

- The “Vibe Check” is dead: While manual testing is fun, professional teams use frameworks like ADeLe or GDPval to quantify performance.

- Data Drift is the silent killer: Models degrade over time as real-world data changes. Continuous model monitoring is non-negotiable.

- Context is King: According to recent research on GDPval, increasing task context and reasoning effort (scaffolding) are the primary drivers of model success in professional tasks.

- The 80/20 Rule: You’ll likely spend 20% of your time building the model and 80% of your time trying to figure out why it’s behaving like a caffeinated toddler.

📜 The Evolution of Benchmarking: From Turing to Transformers

Evaluating AI model performance isn’t just about numbers; it’s a saga of human ingenuity trying to measure “thought.” In the early days, we had the Turing Test, a purely conversational benchmark that asked: “Can this machine fool a human?” Fast forward to the era of AI Infrastructure, and we’ve moved from simple logic puzzles to massive datasets like ImageNet and GLUE.

Today, we aren’t just checking if a model can identify a cat in a hat. We are measuring its ability to perform 44 distinct occupations across the top 9 sectors of the U.S. economy. As noted in the GDPval benchmark study, we are now evaluating AI on “economically valuable tasks” derived from experts with an average of 14 years of experience. We’ve gone from “Can it talk?” to “Can it take my job?” (Don’t worry, we’ll address that existential dread later! 😅)

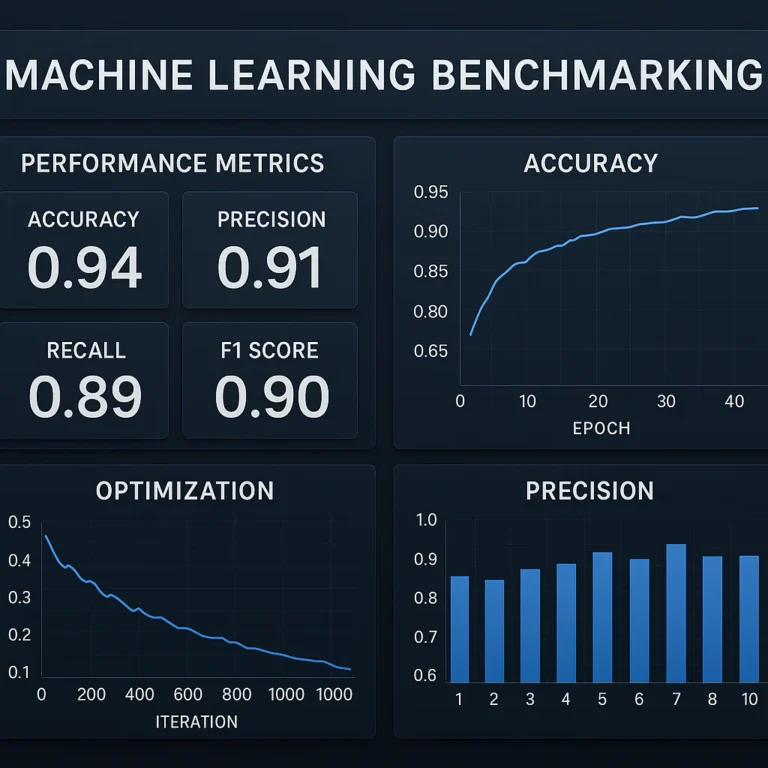

🎯 Why Accuracy Is a Liar: The Multi-Dimensional Nature of Performance

We’ve all been there: your model hits 98% accuracy during training, you pop the champagne, and then… it fails miserably in production. Why? Because accuracy is a one-dimensional metric in a multi-dimensional world.

As highlighted in our featured video, accuracy is particularly dangerous for imbalanced datasets. Imagine a model designed to detect a rare flu that only 1 in 1,000 people have. If the model simply predicts “No Flu” for everyone, it is 99.9% accurate but completely useless.

To truly understand performance, we must look at the Confusion Matrix, which breaks down:

- True Positives (TP): Correctly identified hits.

- True Negatives (TN): Negative cases correctly left unflagged (correct rejections).

- False Positives (FP): The “False Alarm” (Type I Error).

- False Negatives (FN): The “Missed Danger” (Type II Error).

Expert Tip: If you are building AI Agents for medical diagnosis, you prioritize Recall (minimizing False Negatives). If you are building a spam filter, you prioritize Precision (minimizing False Positives).
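To make the rare-flu example concrete, here is a minimal sketch that counts the four confusion-matrix cells for a "model" that always predicts "No Flu". The population of 1,000 people with a single positive case is made-up illustrative data, and `confusion_counts` is a hypothetical helper name, not part of any library.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels where 1 means positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn

# Illustrative population: 1 flu case among 1,000 people.
y_true = [1] + [0] * 999
# A "model" that predicts "No Flu" for everyone.
y_pred = [0] * 1000

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
recall = tp / (tp + fn) if (tp + fn) else 0.0

print(f"TP={tp} TN={tn} FP={fp} FN={fn}")
print(f"accuracy={accuracy:.3f} recall={recall:.3f}")
```

Accuracy comes out at 0.999 while recall is 0.0: the single case that matters is missed, which is exactly the failure accuracy alone hides.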

🛠️ The Essential Toolkit: Core Metrics Every Engineer Needs

When you’re deep in the trenches of AI News and development, you need a standardized toolkit. Here is how we break down the metrics at ChatBench.org™.

Classification Metrics: Precision, Recall, and the F1-Score

| Metric | Formula | Best Used For… |
|---|---|---|
| Precision | TP / (TP + FP) | When the cost of a False Positive is high (e.g., Spam filters). |
| Recall | TP / (TP + FN) | When the cost of a False Negative is high (e.g., Cancer detection). |
| F1-Score | 2 * (Prec * Rec) / (Prec + Rec) | Balancing both metrics on imbalanced datasets. |
| Log Loss | -Σ y log(p) | Measuring the “certainty” of a model’s predictions. |
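The formulas in the table translate almost directly into code. The sketch below is one way to compute them with the standard library only; the function names and the example counts are illustrative assumptions. For log loss it uses the binary form, which expands `-Σ y log(p)` over the two classes and averages over samples.

```python
import math

def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

def binary_log_loss(y_true, p_pred, eps=1e-15):
    """Average negative log-likelihood for binary labels (1 = positive)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(y_true)

# Illustrative counts: 8 true positives, 2 false alarms, 8 missed positives.
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=8)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.3f}")

# A confident wrong prediction costs far more than an unsure one.
unsure = binary_log_loss([1], [0.5])
confident_wrong = binary_log_loss([1], [0.01])
print(f"unsure={unsure:.3f} confident_wrong={confident_wrong:.3f}")
```

The second print shows why log loss measures certainty: a 1% probability on a true positive is penalized several times harder than a 50% hedge.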

Regression Metrics: Understanding MSE and MAE

If your model is predicting continuous values (like stock prices or the temperature of your server room), classification metrics won’t help. You need regression metrics:

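- Mean Squared Error (MSE): Squares each error, so large misses are punished heavily. Use it when big errors are disproportionately costly, but note that it is sensitive to outliers.

- Mean Absolute Error (MAE): Weights every error in proportion to its size, which makes it more “forgiving” of outliers and easy to read in the target’s own units.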

- R-Squared: Tells you how much of the variance in the data your model actually explains.
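A small sketch shows how differently these three metrics react to one bad prediction. The temperature-like numbers below are invented for illustration, and the helper names are assumptions rather than any library's API.

```python
def mse(y_true, y_pred):
    """Mean of squared errors."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def mae(y_true, y_pred):
    """Mean of absolute errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r_squared(y_true, y_pred):
    """1 - (residual sum of squares / total sum of squares)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

y_true = [3.0, 5.0, 7.0, 9.0]
small_errors = [2.0, 5.0, 8.0, 9.0]   # two misses of size 1
one_outlier = [3.0, 5.0, 7.0, 13.0]   # one miss of size 4

for name, y_pred in [("small_errors", small_errors), ("one_outlier", one_outlier)]:
    print(name, "MSE:", mse(y_true, y_pred), "MAE:", mae(y_true, y_pred),
          "R2:", round(r_squared(y_true, y_pred), 3))
```

Going from the first set of predictions to the second, MAE doubles (0.5 to 1.0) while MSE grows eightfold (0.5 to 4.0), which is the "punishes large errors" behavior in practice.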

NLP Specifics: Perplexity, BLEU, and ROUGE

For those working with Large Language Models (LLMs), the metrics get weirder.

- Perplexity: Measures how “surprised” a model is by new data. Lower is better.

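- BLEU Score: Used for translation; it scores the n-gram overlap between machine output and human reference translations, with an emphasis on precision.

- ROUGE: Used for summarization; it measures overlap with reference summaries and focuses on recall.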
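As a rough illustration of two of these metrics, the sketch below computes perplexity from per-token log-probabilities and a simplified unigram (ROUGE-1 style) recall. Both functions are hypothetical helpers written for this example; real BLEU and ROUGE implementations add tokenization rules, higher-order n-grams, and other details that are left out here.

```python
import math
from collections import Counter

def perplexity(token_log_probs):
    """exp of the average negative log-probability (natural log) per token."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))

def unigram_recall(reference, candidate):
    """Share of reference words that also appear in the candidate (clipped counts)."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    overlap = sum(min(count, cand_counts[word]) for word, count in ref_counts.items())
    return overlap / sum(ref_counts.values())

# A model that assigns probability 0.25 to every token is as "surprised"
# as if it were choosing uniformly among 4 options.
ppl = perplexity([math.log(0.25)] * 10)
print(f"perplexity={ppl:.2f}")

recall = unigram_recall("the cat sat on the mat", "the cat lay on the mat")
print(f"unigram recall={recall:.3f}")
```

Five of the six reference words are recovered by the candidate, so the recall is 5/6.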

🤖 Evaluating Large Language Models (LLMs) in the Real World

LLMs like GPT-4o and Claude 3.5 have changed the game. Traditional metrics can’t capture their “reasoning” or “creativity.” This is where specialized benchmarks come in.

MMLU: The Massive Multitask Language Understanding Standard

This is the “SATs for AI.” It covers 57 subjects across STEM, the humanities, and more. If a model scores high here, it has a broad “world knowledge.”

HumanEval: Can Your AI Actually Code?

Released by OpenAI, this benchmark tests a model’s ability to solve coding problems. It’s the gold standard for developers using AI Business Applications to automate their workflow.
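HumanEval results are usually reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. A minimal sketch of the unbiased estimator described in the paper that introduced HumanEval is shown below; the sample counts in the usage lines are made up for illustration.

```python
from math import comb

def pass_at_k(n, c, k):
    """Estimate pass@k given n generated samples, of which c passed the tests.

    Computes 1 - C(n - c, k) / C(n, k): one minus the chance that a random
    subset of k samples contains no passing solution.
    """
    if k > n:
        raise ValueError("k cannot exceed the number of samples n")
    if n - c < k:
        return 1.0  # every subset of size k must contain a passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)

# Illustrative numbers: 10 samples for one problem, 3 of them pass.
p1 = pass_at_k(n=10, c=3, k=1)
p5 = pass_at_k(n=10, c=3, k=5)
print(f"pass@1={p1:.3f} pass@5={p5:.3f}")
```

A benchmark score is then the average of this value over all problems.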

GSM8K: Testing the Limits of Mathematical Reasoning

Math is the ultimate test for LLMs because it requires multi-step logic. A model can’t just “guess” the next word; it has to follow a chain of thought.

🥊 The Battle of the Titans: How GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro Stack Up

We’ve put the top frontier models through the wringer. Here is our expert rating based on current performance benchmarks:

| Aspect | GPT-4o (OpenAI) | Claude 3.5 Sonnet (Anthropic) | Gemini 1.5 Pro (Google) |
|---|---|---|---|
| Reasoning | 9.5/10 | 9.7/10 | 9.0/10 |
| Coding | 9.2/10 | 9.8/10 | 8.8/10 |
| Context Window (tokens) | 128k | 200k | 2M+ |
| Creative Writing | 8.5/10 | 9.5/10 | 8.0/10 |
| Speed/Latency | 9.8/10 | 9.0/10 | 8.5/10 |

Our Recommendation: Use Claude 3.5 Sonnet for nuanced writing and complex coding. Use GPT-4o for high-speed, multi-modal applications. Use Gemini 1.5 Pro if you need to process an entire library of documents at once.


🕵️‍♂️ The Hallucination Hunt: Reliability and Safety Testing

A model that is 90% accurate but 10% “confident liar” is a liability. This is the core conflict in AI evaluation today. Microsoft’s new ADeLe framework (Annotated-Demand-Levels) attempts to solve this by predicting why models fail.

According to Microsoft Research, many current benchmarks are flawed because they measure unintended abilities. For example, the Civil Service Examination benchmark claims to test logic but actually relies heavily on specialized knowledge and metacognition.

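The ADeLe battery rates each task’s demands on 18 general scales, each running from 0 to 5. From these ratings, researchers can identify the demand level at which a model has a 50% chance of success, which serves as its ability score on that scale. According to Microsoft Research, this lets them predict whether a model will succeed or fail on a new task with 88% accuracy, before the model is even deployed! 🛡️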
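The "50% chance of success" idea can be sketched in a few lines. The example below is not the ADeLe implementation: it simply takes observed success rates per demand level (invented numbers) and linearly interpolates the level where success drops to 50%. The function name `ability_at_half` and the data are assumptions for illustration only.

```python
def ability_at_half(success_by_level):
    """Return the interpolated demand level where the success rate falls to 0.5.

    success_by_level maps a demand level (e.g. 0..5) to an observed success
    rate in [0, 1]. Returns None if the rates never cross 0.5.
    """
    levels = sorted(success_by_level)
    for lo, hi in zip(levels, levels[1:]):
        p_lo, p_hi = success_by_level[lo], success_by_level[hi]
        if p_lo >= 0.5 >= p_hi:
            if p_lo == p_hi:
                return float(lo)
            # Linear interpolation between the two neighbouring levels.
            return lo + (p_lo - 0.5) / (p_lo - p_hi) * (hi - lo)
    return None

# Hypothetical success rates for one model on one scale.
observed = {0: 0.98, 1: 0.95, 2: 0.85, 3: 0.60, 4: 0.30, 5: 0.10}
ability = ability_at_half(observed)
print(f"estimated ability level: {ability:.2f}")
```

With these numbers the crossing falls between levels 3 and 4, so tasks rated above roughly 3.3 on this scale would be flagged as likely failures. A fitted curve would be less sensitive to noise than straight-line interpolation.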

⚡ Speed vs. Brains: Measuring Latency, Throughput, and Token Efficiency

In the world of AI Infrastructure, performance isn’t just about being right; it’s about being right fast.

- Time to First Token (TTFT): How long does it take for the AI to start answering? Crucial for user experience.

- Tokens Per Second (TPS): The “speedometer” of the LLM.

- Throughput: How many requests can your server handle simultaneously?
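The first two metrics fall out of simple timestamps. The sketch below assumes you can record when the request was sent and when each token arrived; `latency_metrics` and `measure_stream` are hypothetical helpers, and TPS is computed here over the generation window after the first token (some teams divide by total time instead).

```python
import time

def latency_metrics(request_start, token_times):
    """Compute TTFT and tokens/second from a start time and token arrival times."""
    if not token_times:
        raise ValueError("no tokens were received")
    ttft = token_times[0] - request_start
    decode_time = token_times[-1] - token_times[0]
    # Tokens generated after the first one, divided by the time they took.
    tps = (len(token_times) - 1) / decode_time if decode_time > 0 else None
    return {"ttft": ttft, "tokens_per_second": tps,
            "total_time": token_times[-1] - request_start}

def measure_stream(token_iter):
    """Time any iterable that yields tokens as they are produced."""
    start = time.perf_counter()
    arrivals = [time.perf_counter() for _ in token_iter]
    return latency_metrics(start, arrivals)

# Illustrative timestamps (seconds): first token at 0.4 s, then one every 50 ms.
metrics = latency_metrics(0.0, [0.40, 0.45, 0.50, 0.55, 0.60])
print(metrics)
```

In a real test, `measure_stream` would wrap whatever streaming iterator your client library returns; that integration is left out because it depends on the provider.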

If you’re running a customer service bot, a high-performing model that takes 30 seconds to reply is a failure. You might need to sacrifice some “intelligence” (using a smaller model like Llama 3.2 3B) to gain the necessary speed.

🧪 12 Pro-Level Strategies for Predicting and Explaining Model Behavior

Based on the ADeLe approach and our own internal testing at ChatBench.org™, here are 12 strategies to master model evaluation:

- Deconstruct Tasks into Abilities: Don’t just test “Math.” Break it down into formal knowledge, logical reasoning, and abstraction.

- Use AI as a Judge: Use a stronger model (like GPT-4o) to grade the outputs of a smaller model.

- Implement Scaffolding: Test how the model performs when given a step-by-step framework vs. a “zero-shot” prompt.

- Monitor Data Drift: Use tools like Fiddler AI to catch when real-world data begins to diverge from your training set.

- Test for Metacognition: Ask the model to explain its reasoning. If the reasoning is flawed but the answer is right, you have a “stochastic parrot” problem.

- Analyze Task Prevalence: Check if the task is common on the internet. Models often “cheat” by memorizing training data rather than reasoning.

- Evaluate Social Intelligence: Measure the model’s ability to infer user mental states or emotional context.

- Use Cross-Validation: Never trust a single test run. Use K-fold cross-validation to ensure stability.

- Stress Test with Edge Cases: Feed the model “adversarial” inputs designed to make it fail.

- Quantify Reasoning Effort: Measure how much “thinking time” (or tokens) a model uses for complex vs. simple tasks.

- Compare Against Human Experts: Use the GDPval method—compare AI output to professionals with 10+ years of experience.

- Build an Ability Profile: Create a radar chart of your model’s strengths across the 18 ADeLe scales to visualize its “personality.”
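Of the strategies above, K-fold cross-validation is the easiest to show in code. The following is a minimal standard-library sketch: `k_fold_indices` is a hypothetical helper that produces contiguous folds, and the "model" is a toy majority-class predictor on made-up labels. In practice you would shuffle (or stratify) the data first and plug in your real training and scoring functions.

```python
import statistics

def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size

# Toy labels; the "model" just predicts the majority class of its training fold.
labels = [0, 0, 1, 0, 0, 0, 1, 0, 0, 0]

scores = []
for train_idx, test_idx in k_fold_indices(len(labels), k=5):
    train_labels = [labels[i] for i in train_idx]
    majority = max(set(train_labels), key=train_labels.count)
    correct = sum(1 for i in test_idx if labels[i] == majority)
    scores.append(correct / len(test_idx))

print("per-fold accuracy:", scores)
print("mean:", statistics.mean(scores), "stdev:", round(statistics.stdev(scores), 3))
```

The spread across folds is the useful part: a single run could have reported 1.0 or 0.5 depending on which slice happened to be the test set.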

🏗️ Building Your Own Evaluation Pipeline: Top Tools and Frameworks

You don’t have to do this manually. The MLOps ecosystem is booming with tools designed to make evaluation a breeze.

Weights & Biases (W&B): The Gold Standard for Experiment Tracking

W&B allows you to visualize your training runs, compare hyperparameters, and see exactly where your model’s performance starts to plateau.


MLflow: Managing the Machine Learning Lifecycle

An open-source platform by Databricks that helps you track experiments, package code into reproducible runs, and share models.

LMSYS Chatbot Arena: The Power of Crowdsourced Elo Ratings

Sometimes, the best judge is a human. The LMSYS Arena uses a blind “battle” system where users vote on which AI response is better, creating a dynamic Elo rating system that often tracks real user preference more closely than static benchmarks.
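To show how pairwise votes turn into a ranking, here is a minimal sketch of the classic Elo update. It uses the textbook formula with an assumed K-factor of 32 and made-up starting ratings; the Arena's own rating methodology may differ in its details.

```python
def expected_score(rating_a, rating_b):
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))

def update_elo(rating_a, rating_b, score_a, k=32):
    """Return new (rating_a, rating_b) after one battle.

    score_a is 1.0 if A won the vote, 0.0 if B won, 0.5 for a tie.
    """
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b

# Two hypothetical models start level; users prefer model A in one battle.
model_a, model_b = update_elo(1000.0, 1000.0, score_a=1.0)
print(model_a, model_b)

# An upset (lower-rated model wins) moves ratings more than an expected win.
upset_winner, upset_loser = update_elo(900.0, 1100.0, score_a=1.0)
print(round(upset_winner, 1), round(upset_loser, 1))
```

Because the update depends on how surprising the result was, ratings stabilize as votes accumulate, and a new model can be slotted in without re-running every past comparison.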

🔮 The Future of Evaluation: When AI Becomes the Judge

We are entering a strange loop. We are now using AI to build benchmarks, AI to run the tests, and AI to evaluate the results. Is this a recipe for disaster or the only way to keep up with the blistering pace of AI News?

The conflict between the GDPval findings (which suggest AI is nearly ready for professional work) and the ADeLe findings (which suggest AI benchmarks are often shallow and misleading) highlights a critical gap. We are getting better at making AI look smart, but we are still figuring out how to make it actually smart.

How do we ensure that the “judge” isn’t just as biased as the “student”? We’ll explore the resolution to this “Inception-style” evaluation crisis in our final thoughts.

📝 Accessing the Research: Submission History and BibTeX Citations

For the academics and deep-divers in the room, here is how you can access the foundational papers mentioned in this guide:

GDPval: Evaluating AI Model Performance on Real-World Economically Valuable Tasks

- Access Paper: arXiv:2510.04374

- Tools: Public automated grading service at evals.openai.com.

ADeLe: An Ability-Based Approach to AI Model Evaluation

- Access Paper: Microsoft Research Blog

- Dataset: Analysis of 16,000 examples across 63 tasks.

BibTeX Citation for GDPval:

@misc{gdpval2025,
  title={GDPval: Evaluating AI Model Performance on Real-World Economically Valuable Tasks},
  author={Patwardhan, Tejal and others},
  year={2025},
  eprint={2510.04374},
  archivePrefix={arXiv},
  primaryClass={cs.AI}
}

Conclusion

We started this journey with a simple, nagging question: Can we trust the numbers? After diving deep into the chaotic, fascinating world of AI evaluation, the answer is a resounding “Yes, but…”

The narrative we left hanging earlier—the conflict between the GDPval findings (AI is nearly ready to replace experts) and the ADeLe insights (current benchmarks are often shallow)—is resolved by understanding context. The models aren’t lying; they are just being measured with the wrong rulers. When we switch from simple accuracy metrics to ability-based profiling and real-world economic tasks, the picture changes. Frontier models like GPT-4o and Claude 3.5 Sonnet are indeed approaching human expert levels in specific, scaffolded tasks. However, without the right evaluation framework (like ADeLe’s 18 scales), we risk deploying models that are brilliant at memorizing trivia but terrible at genuine reasoning.

The Verdict:

If you are an enterprise leader or a machine learning engineer, stop relying on a single “Accuracy” score. It is a trap.

- ✅ The Good: We now have tools like LMSYS Arena and GDPval that measure real-world utility, not just textbook answers. We have frameworks like ADeLe that explain why a model fails, turning evaluation from a black box into a science.

- ❌ The Bad: Many public benchmarks are still “stale,” measuring skills the model has already memorized rather than its ability to reason. Data drift remains a silent killer that requires constant vigilance.

Our Confident Recommendation:

Adopt a hybrid evaluation strategy. Use static benchmarks (like MMLU or HumanEval) for initial model selection, but immediately follow up with dynamic, task-specific evaluations that mimic your actual business environment. Implement continuous monitoring with tools like Fiddler AI or Weights & Biases to catch drift the moment it happens. And most importantly, treat AI as a collaborator, not a replacement. As the GDPval study suggests, the future belongs to “human-in-the-loop” systems where AI handles the heavy lifting and humans provide the oversight.

The era of “set it and forget it” AI is over. The era of intelligent, continuous evaluation has begun. Welcome to the future of AI. 🚀

Recommended Links

Ready to take your AI evaluation to the next level? Here are the essential tools, books, and platforms our team at ChatBench.org™ recommends for building robust, reliable AI systems.

🛠️ Essential MLOps & Evaluation Platforms

- Weights & Biases (W&B): The industry standard for experiment tracking and model visualization.

- 👉 Shop W&B on: Weights & Biases Official Site | W&B on GitHub

- MLflow: Open-source platform for the full machine learning lifecycle.

- 👉 Shop MLflow on: Databricks MLflow | MLflow on GitHub

- Fiddler AI: Advanced model monitoring and explainability for enterprise.

- 👉 Shop Fiddler on: Fiddler AI Official Site

- LMSYS Chatbot Arena: Test models against each other in real-time.

- Try LMSYS on: LMSYS Chatbot Arena

📚 Must-Read Books on AI & Evaluation

- “Designing Machine Learning Systems” by Chip Huyen: A comprehensive guide to the entire ML lifecycle, including evaluation and deployment.

- Check Price on: Amazon | Official Publisher

- “Hands-On Large Language Models” by Jay Alammar and Maarten Grootendorst: Deep dive into LLMs, their architecture, and how to evaluate them.

- “Building Machine Learning Powered Applications” by Emmanuel Ameisen: Focuses on the practical side of building and evaluating real-world ML products.

🚀 Cloud Compute for Heavy Lifting

- RunPod: Affordable GPU rental for training and inference.

- 👉 Shop RunPod on: RunPod Official Site | RunPod on DigitalOcean Marketplace

- Paperspace: Scalable GPU instances for deep learning.

- 👉 Shop Paperspace on: Paperspace Official Site | Paperspace on AWS

FAQ

How do you measure AI model accuracy in real-world scenarios?

Measuring accuracy in the real world requires moving beyond simple “correct/incorrect” labels. In dynamic environments, we use continuous evaluation pipelines. This involves:

- Shadow Mode Testing: Running the new model alongside the old one in production without affecting users to compare outputs.

- Human-in-the-Loop Feedback: Collecting real user feedback (thumbs up/down, corrections) to create a “gold standard” dataset for re-evaluation.

- Task-Specific Metrics: Instead of global accuracy, we measure performance on specific business outcomes (e.g., “Did the AI reduce support ticket resolution time?”).

As noted in the GDPval research, the most accurate measure of real-world performance is how well the model performs tasks that contribute to economic value, often benchmarked against human experts with years of experience.

What are the best metrics for evaluating AI model performance in business?

The “best” metric depends entirely on your business goal. There is no one-size-fits-all answer:

- For Fraud Detection: Prioritize Recall (catching all fraud) even if it means more false alarms.

- For Spam Filtering: Prioritize Precision (ensuring legitimate emails aren’t marked as spam).

- For Customer Service Bots: Prioritize Customer Satisfaction (CSAT) scores and First Contact Resolution (FCR) rates.

- For Content Generation: Use ROUGE or BLEU scores for quality, but always supplement with human evaluation for tone and brand alignment.

The ADeLe framework suggests that instead of a single metric, businesses should build an ability profile that maps model performance across 18 different cognitive and knowledge dimensions.

How often should AI models be re-evaluated for performance drift?

AI models are not “set and forget” assets; they are living systems that degrade over time.

- High-Velocity Data (e.g., Finance, News): Re-evaluate daily or weekly. Data drift can happen in hours.

- Stable Data (e.g., Medical Imaging, Manufacturing): Re-evaluate monthly or quarterly.

- Trigger-Based Re-evaluation: Always re-evaluate immediately after:

- A significant change in the underlying data distribution.

- A major update to the model architecture.

- A spike in user complaints or error rates.

Tools like Fiddler AI and Weights & Biases automate this by setting alerts when performance metrics drop below a certain threshold.
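For a sense of what a drift check computes, the sketch below implements the Population Stability Index (PSI), a common way to compare a feature's binned distribution in production against the training baseline. This is not the API of Fiddler AI or Weights & Biases; the function name, the bin counts, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions for illustration.

```python
import math

def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI between two histograms that share the same bins."""
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_frac = max(e / e_total, eps)  # floor avoids log(0) on empty bins
        a_frac = max(a / a_total, eps)
        score += (a_frac - e_frac) * math.log(a_frac / e_frac)
    return score

# Hypothetical bin counts for one feature.
baseline = [250, 250, 250, 250]    # training data
this_week = [100, 200, 300, 400]   # production data

psi = population_stability_index(baseline, this_week)
ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, tune for your data
print(f"PSI={psi:.3f}", "-> re-evaluate model" if psi > ALERT_THRESHOLD else "-> OK")
```

Running a check like this on a schedule, per feature and on the model's output scores, is one way to turn the trigger-based list above into an automated alert.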

What is the difference between precision and recall when evaluating AI models?

These two metrics often pull in opposite directions, and understanding the trade-off is crucial:

- Precision answers: “Of all the times the model said ‘Yes’, how many were actually ‘Yes’?” It focuses on minimizing False Positives.

- Example: In a spam filter, high precision means that almost everything sent to the spam folder really is spam, so legitimate emails are rarely flagged (low false positives).

- Recall answers: “Of all the actual ‘Yes’ cases, how many did the model find?” It focuses on minimizing False Negatives.

- Example: In cancer screening, high recall means you catch almost every case of cancer, even if it means some healthy people get false alarms.

The Trade-off: You can usually increase one at the expense of the other. The F1-Score is the harmonic mean of both, providing a single number to balance them when you need a compromise.


How do I choose the right threshold for my model?

Choosing the threshold (the point where the model decides “Yes” or “No”) is a business decision, not just a technical one.

- Low Threshold: Increases Recall but lowers Precision (catches more, but with more errors).

- High Threshold: Increases Precision but lowers Recall (fewer errors, but misses more).

Use a Precision-Recall Curve to visualize this trade-off and select the threshold that aligns with your cost of errors. If a False Negative costs $10,000 and a False Positive costs $10, aim for a threshold that minimizes the total expected cost.
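The cost-based rule can be sketched as a simple search over candidate thresholds. The labels and scores below are invented, the helper names are hypothetical, and the costs are the $10,000 and $10 figures from the example above.

```python
def total_cost(y_true, scores, threshold, cost_fn=10_000, cost_fp=10):
    """Total cost of errors when predicting 'Yes' for scores >= threshold."""
    fn = sum(1 for y, s in zip(y_true, scores) if y == 1 and s < threshold)
    fp = sum(1 for y, s in zip(y_true, scores) if y == 0 and s >= threshold)
    return fn * cost_fn + fp * cost_fp

def best_threshold(y_true, scores, candidates, cost_fn=10_000, cost_fp=10):
    """Pick the candidate threshold with the lowest total error cost."""
    return min(candidates, key=lambda t: total_cost(y_true, scores, t, cost_fn, cost_fp))

# Illustrative validation set: true labels and model scores.
y_true = [0, 0, 0, 0, 1, 0, 1, 1]
scores = [0.05, 0.10, 0.20, 0.35, 0.40, 0.55, 0.70, 0.90]
candidates = [i / 10 for i in range(1, 10)]  # 0.1, 0.2, ..., 0.9

chosen = best_threshold(y_true, scores, candidates)
print("threshold:", chosen, "cost:", total_cost(y_true, scores, chosen))
```

Because a missed positive is 1,000 times more expensive than a false alarm, the search settles on the highest threshold that still catches every positive and tolerates the remaining false positive.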

Can AI models evaluate themselves?

Yes, to an extent. This is known as LLM-as-a-Judge. Stronger models (like GPT-4o) can be used to grade the outputs of weaker models. However, this introduces a new risk: bias. The “judge” model may have its own blind spots or preferences. The ADeLe framework addresses this by using a structured, ability-based rubric rather than a simple “good/bad” judgment, making self-evaluation more reliable.

Reference Links

- GDPval: Evaluating AI Model Performance on Real-World Economically Valuable Tasks

- ADeLe: An Ability-Based Approach to AI Model Evaluation

- Model Evaluation & Monitoring

- Benchmarks & Datasets

- Industry Standards